## Diagram: Sequence-based Interpretation of XAI

### Overview

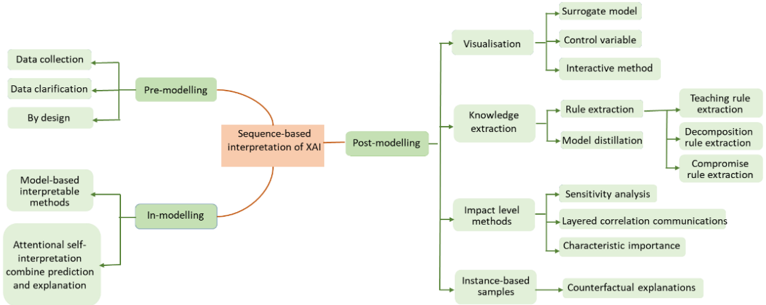

The diagram illustrates a sequence-based interpretation of Explainable Artificial Intelligence (XAI). It groups explanation methods by the stage at which they apply (pre-modelling, in-modelling, and post-modelling) and lists the methods and techniques associated with each stage.

### Components/Axes

* **Central Node:** "Sequence-based interpretation of XAI" (orange box)

* **Stages:**
    * Pre-modelling (green box, top-left)
    * In-modelling (green box, bottom-left)
    * Post-modelling (green box, right)

* **Pre-modelling Sub-components:**
    * Data collection (green box)
    * Data clarification (green box)
    * By design (green box)

* **In-modelling Sub-components:**
    * Model-based interpretable methods (green box)
    * Attentional self-interpretation combine prediction and explanation (green box)

* **Post-modelling Sub-components:**
    * Visualisation (green box)
        * Surrogate model (green box)
        * Control variable (green box)
        * Interactive method (green box)
    * Knowledge extraction (green box)
        * Rule extraction (green box)
            * Teaching rule extraction (green box)
            * Decomposition rule extraction (green box)
            * Compromise rule extraction (green box)
        * Model distillation (green box)
    * Impact level methods (green box)
        * Sensitivity analysis (green box)
        * Layered correlation communications (green box)
        * Characteristic importance (green box)
    * Instance-based samples (green box)
        * Counterfactual explanations (green box)

### Detailed Analysis

The diagram presents a sequential flow, starting with pre-modelling, moving to in-modelling, and culminating in post-modelling. Each stage is connected to the central node "Sequence-based interpretation of XAI" via curved arrows. Each stage has sub-components, which are connected via straight arrows.

* **Pre-modelling:** This stage focuses on preparing the data and defining the design for the XAI process. It includes data collection, data clarification, and design considerations.

* **In-modelling:** This stage involves the actual modelling process, emphasizing interpretable methods and self-interpretation techniques that combine prediction and explanation.

* **Post-modelling:** This stage focuses on extracting knowledge and insights from the model. It includes visualization techniques (surrogate models, control variables, interactive methods), knowledge extraction (rule extraction, model distillation), impact level methods (sensitivity analysis, layered correlation communications, characteristic importance), and instance-based samples (counterfactual explanations).
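The grouping described above can be sketched as a nested mapping. This is only an illustrative transcription of the diagram labels: the names `TAXONOMY`, `count_nodes`, and `leaves` are hypothetical, and the nesting (for example, placing the three rule extraction variants under "Rule extraction") is an assumption based on the stage descriptions rather than something the diagram states explicitly.

```python
# Diagram labels transcribed as a nested dict; an empty dict marks a leaf box.
# The nesting is an assumption inferred from the stage descriptions.
TAXONOMY = {
    "Pre-modelling": {
        "Data collection": {},
        "Data clarification": {},
        "By design": {},
    },
    "In-modelling": {
        "Model-based interpretable methods": {},
        "Attentional self-interpretation combine prediction and explanation": {},
    },
    "Post-modelling": {
        "Visualisation": {
            "Surrogate model": {},
            "Control variable": {},
            "Interactive method": {},
        },
        "Knowledge extraction": {
            "Rule extraction": {
                "Teaching rule extraction": {},
                "Decomposition rule extraction": {},
                "Compromise rule extraction": {},
            },
            "Model distillation": {},
        },
        "Impact level methods": {
            "Sensitivity analysis": {},
            "Layered correlation communications": {},
            "Characteristic importance": {},
        },
        "Instance-based samples": {
            "Counterfactual explanations": {},
        },
    },
}


def count_nodes(tree):
    """Count every box below the given node, at any depth."""
    return sum(1 + count_nodes(children) for children in tree.values())


def leaves(tree, path=()):
    """Yield the full path (stage, group, ..., technique) to each leaf box."""
    for name, children in tree.items():
        here = path + (name,)
        if children:
            yield from leaves(children, here)
        else:
            yield here


# Print how many boxes hang off each stage.
for stage, children in TAXONOMY.items():
    print(f"{stage}: {count_nodes(children)} boxes")
```

Walking the structure this way makes the imbalance between stages easy to see: most of the boxes sit under post-modelling.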

### Key Observations

* The diagram groups XAI methods by when they apply: before, during, or after modelling.

* Post-modelling is the most detailed branch, with four groups of methods (visualisation, knowledge extraction, impact level methods, and instance-based samples), compared with three sub-components for pre-modelling and two for in-modelling.

* Every stage and method box is green; only the central node is orange.

### Interpretation

The diagram frames explainability as something to address at every stage of modelling rather than only after a model is built. It suggests that effective XAI requires attention to data preparation, model building, and post-hoc analysis, and that these stages are interconnected. The specific techniques listed, such as surrogate models, rule extraction, and sensitivity analysis, point to a focus on making AI models more transparent and understandable to users.
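To make one of the named post-modelling techniques concrete, here is a minimal sketch of sensitivity analysis using central finite differences. The diagram does not specify any particular method, so this is one common reading of the term, not the source's definition. `toy_model` is a hypothetical stand-in for a trained model, and `sensitivity` and `eps` are illustrative names.

```python
def toy_model(x):
    # Hypothetical stand-in for a trained model: a fixed scoring function
    # of three input features. Not taken from the diagram.
    return 3.0 * x[0] - 0.5 * x[1] + x[2] ** 2


def sensitivity(model, x, eps=1e-4):
    """Estimate how much the model output changes per unit change in each
    input feature, using a central finite difference around the point x."""
    scores = []
    for i in range(len(x)):
        up = list(x)
        up[i] += eps      # nudge feature i upward
        down = list(x)
        down[i] -= eps    # nudge feature i downward
        scores.append((model(up) - model(down)) / (2 * eps))
    return scores


point = [1.0, 2.0, 0.5]
grads = sensitivity(toy_model, point)

# Rank features by the magnitude of their local effect on the output.
ranking = sorted(range(len(point)), key=lambda i: abs(grads[i]), reverse=True)
print(grads, ranking)
```

The result is local: it describes the model's behaviour near `point` only, so a different input can produce a different ranking.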