
## Diagram: Sequence-based Interpretation of XAI

### Overview

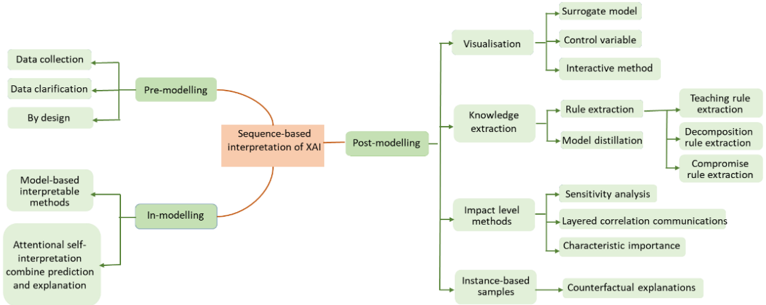

The image is a diagram illustrating the sequence-based interpretation of Explainable Artificial Intelligence (XAI). It depicts a flow chart with three main stages: Pre-modelling, In-modelling, and Post-modelling, connected to the central concept of "Sequence-based Interpretation of XAI". Each stage branches out into several methods or techniques.

### Components/Axes

The diagram is organized around a central node labeled "Sequence-based Interpretation of XAI". The three main branches are:

* **Pre-modelling:** Located on the left side of the central node.

* **In-modelling:** Located on the lower-left side of the central node.

* **Post-modelling:** Located on the right side of the central node.

Each branch then splits into sub-branches representing specific techniques.

### Content Details

**Pre-modelling:**

* Data collection

* Data clarification

* By design

**In-modelling:**

* Model-based interpretable methods

* Attention-based self-interpretation, which combines prediction and explanation
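The attention-based self-interpretation item refers to models whose forward pass yields both a prediction and a set of weights that can be read as an explanation. The following is a minimal sketch in plain Python, assuming a single attention layer over a handful of input elements. `attend_and_predict`, the query vector, and the per-element values are hypothetical stand-ins for learned parameters and are not taken from the diagram:

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend_and_predict(features, query, values):
    """Toy single-layer attention model (hypothetical).

    Returns the prediction together with the attention weights, so the
    explanation is produced in the same forward pass as the output.
    """
    # Score each input element by its dot product with the query vector.
    scores = [sum(f * q for f, q in zip(vec, query)) for vec in features]
    weights = softmax(scores)
    # The prediction is the attention-weighted sum of per-element values.
    prediction = sum(w * v for w, v in zip(weights, values))
    return prediction, weights


# Hypothetical inputs: three elements (e.g. tokens or time steps).
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 0.5]
values = [1.0, -1.0, 0.5]

pred, weights = attend_and_predict(features, query, values)
print(f"prediction={pred:.3f}, weights={[round(w, 3) for w in weights]}")
```

Because the weights come out of the same computation as the prediction, no separate explanation step is needed: in this toy case the third input element receives the largest weight and therefore contributes most to the output.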

**Post-modelling:**

* **Visualization:**

    * Surrogate model

    * Control variable

    * Interactive method

* **Knowledge extraction:**

    * Rule extraction

        * Teaching rule extraction

        * Decomposition rule extraction

        * Compromise rule extraction

    * Model distillation

* **Impact level methods:**

    * Sensitivity analysis

    * Layered correlation communications

    * Characteristic importance

* **Instance-based samples:**

    * Counterfactual explanations

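The connections between the central node, the stages, and their techniques are drawn as arrows indicating the flow of the process. The central node "Sequence-based Interpretation of XAI" is highlighted in orange; all other nodes are green. Several labels appear to be literal translations of standard XAI terms: "Teaching", "Decomposition", and "Compromise" rule extraction most likely correspond to pedagogical, decompositional, and eclectic rule extraction; "Layered correlation communications" to layer-wise relevance propagation (LRP); and "Characteristic importance" to feature importance.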
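Sensitivity analysis, listed under the impact level methods of the Post-modelling stage, measures how much a model's output changes when one input is perturbed while the others are held fixed. The sketch below assumes only that the model can be called as a function on a feature vector. `black_box` is a hypothetical stand-in for a trained predictor, and central finite differences are just one of several possible perturbation schemes:

```python
from typing import Callable, List, Sequence


def sensitivity(model: Callable[[Sequence[float]], float],
                x: Sequence[float], eps: float = 1e-4) -> List[float]:
    """One-at-a-time local sensitivity at point x.

    For each feature i, nudges x[i] up and down by eps (others fixed)
    and returns the central finite-difference estimate of d(output)/d(x[i]).
    """
    grads = []
    for i in range(len(x)):
        up = list(x)
        up[i] += eps
        down = list(x)
        down[i] -= eps
        grads.append((model(up) - model(down)) / (2 * eps))
    return grads


def black_box(x: Sequence[float]) -> float:
    """Hypothetical model; in practice this would be a trained predictor."""
    return 3.0 * x[0] + x[1] ** 2 - 0.5 * x[2]


s = sensitivity(black_box, [1.0, 2.0, 4.0])
print([round(v, 4) for v in s])
```

For this toy model the estimates approximate the partial derivatives at the chosen point (3, 4, and -0.5), so the second feature has the largest local influence. With a real model the result depends on the point `x` and the step `eps`, which is why sensitivity analysis is usually read as a local explanation.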

### Key Observations

The diagram presents XAI interpretation as a sequence: data preparation (Pre-modelling), then model building (In-modelling), then analysis and explanation of the trained model (Post-modelling). Post-modelling is the most elaborate stage, with four branches, each representing a different family of methods for understanding and interpreting the model's behavior. Within the "Knowledge extraction" branch, rule extraction is further subdivided into three more specific variants (teaching, decomposition, and compromise), alongside model distillation.
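To make the rule extraction branch concrete, the following sketch follows the teaching idea, which appears to correspond to what the literature calls pedagogical rule extraction: the model is queried purely as a black box, and a rule is fitted to reproduce its outputs rather than its internals. It is deliberately minimal and assumes a single-condition rule of the form `x[f] > t`. `black_box` and `extract_stump_rule` are hypothetical names, and "fidelity" here means agreement with the model's labels on the sampled inputs:

```python
import random


def black_box(x):
    """Hypothetical opaque classifier; only its outputs are used below."""
    return int(0.8 * x[0] + 0.2 * x[1] > 0.5)


def extract_stump_rule(model, samples):
    """Teaching-style (pedagogical) rule extraction, reduced to one condition.

    Queries the model as a black box, then searches every feature f and
    candidate threshold t for the rule "x[f] > t" that agrees with the
    model's labels most often. Returns (feature, threshold, fidelity).
    """
    labels = [model(x) for x in samples]
    best = (None, None, -1.0)
    for f in range(len(samples[0])):
        for t in sorted({x[f] for x in samples}):
            agree = sum(int(x[f] > t) == y for x, y in zip(samples, labels))
            fidelity = agree / len(samples)
            if fidelity > best[2]:
                best = (f, t, fidelity)
    return best


random.seed(0)
samples = [[random.random(), random.random()] for _ in range(200)]
feature, threshold, fidelity = extract_stump_rule(black_box, samples)
print(f"IF x[{feature}] > {threshold:.2f} THEN class 1 (fidelity {fidelity:.0%})")
```

Decomposition rule extraction would instead inspect the model's internal weights or units, and compromise approaches combine both strategies; those need access to the model's internals and are not shown here.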

### Interpretation

This diagram presents a comprehensive framework for interpreting machine learning models. It suggests that an effective XAI strategy requires attention to every stage of the model lifecycle, from data collection to post-hoc analysis. The branching of the Post-modelling stage indicates that there is no single "best" interpretation method; the appropriate technique depends on the goals and context of the application. The listed methods span both global interpretability (e.g., rule extraction, model distillation), which describes the model's overall behavior, and local interpretability (e.g., counterfactual explanations), which explains individual predictions. The inclusion of a dedicated visualization branch reflects the importance of communicating XAI results clearly in order to build trust and understanding. The diagram is a high-level overview and does not describe how each technique is implemented; it serves as a conceptual map of the XAI landscape.
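Counterfactual explanations, the local method in the instance-based branch, answer the question: what is the smallest change to this input that would change the model's decision? The sketch below assumes a hypothetical binary classifier `black_box` and, for simplicity, searches a fixed grid of single-feature changes. Practical methods usually optimize a distance-penalized objective over all features at once:

```python
def black_box(x):
    """Hypothetical classifier: 1 = approve, 0 = reject."""
    return int(0.6 * x[0] + 0.4 * x[1] >= 0.5)


def counterfactual(model, x, step=0.01, max_steps=1000):
    """Find the smallest single-feature change that flips model(x).

    Tries each feature in both directions, in increments of `step`, and
    keeps the change with the smallest magnitude. Returns
    (feature_index, new_value), or None if no flip is found.
    """
    original = model(x)
    best = None  # (feature, new_value, distance)
    for f in range(len(x)):
        for direction in (1, -1):
            for k in range(1, max_steps + 1):
                candidate = list(x)
                candidate[f] = x[f] + direction * k * step
                if model(candidate) != original:
                    if best is None or k * step < best[2]:
                        best = (f, candidate[f], k * step)
                    break  # smallest flip in this direction found
    return None if best is None else best[:2]


x = [0.3, 0.4]
result = counterfactual(black_box, x)
print(result)
```

Here the input `[0.3, 0.4]` is rejected, and the search reports that raising the first feature to about 0.57 would flip the decision, a smaller change than raising the second feature to about 0.8.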