## Diagram: Sequence-based Interpretation of XAI (Explainable Artificial Intelligence)

### Overview

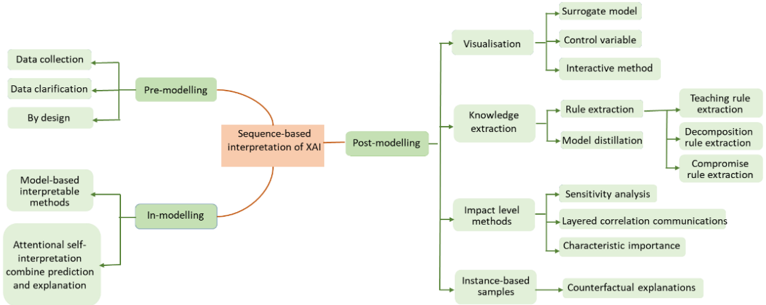

The image is a hierarchical flowchart, laid out like a mind map, that presents a taxonomy of Explainable AI (XAI) interpretation methods. The central concept, "Sequence-based interpretation of XAI," branches into three phases of the modeling pipeline: Pre-modelling, In-modelling, and Post-modelling. Each phase contains specific categories and sub-categories of interpretive techniques.

### Components/Structure

The diagram is structured as a tree with a central node and three main branches: two extending to the left and one to the right.

* **Central Node (Orange Box):** "Sequence-based interpretation of XAI"

* **Primary Branches (Green Boxes):**
    * **Left, Upper:** "Pre-modelling"
    * **Left, Lower:** "In-modelling"
    * **Right:** "Post-modelling"

* **Secondary & Tertiary Branches (Light Green Boxes):** These extend from the primary branches, detailing specific methods. Connections are shown with directional arrows.

### Detailed Analysis

**1. Pre-modelling Branch (Left, Upper):**

This branch lists methods applied before model training.

* **Sub-nodes connected to "Pre-modelling":**
    * "Data collection"
    * "Data clarification"
    * "By design"

**2. In-modelling Branch (Left, Lower):**

This branch lists methods integrated into the model's architecture or training process.

* **Sub-nodes connected to "In-modelling":**
    * "Model-based interpretable methods"
    * "Attentional self-interpretation combine prediction and explanation" (Note: This is a single, lengthy label).

**3. Post-modelling Branch (Right):**

This is the most extensive branch, detailing methods applied after a model is trained. It splits into four main categories.

* **Category 1: "Visualisation"**
    * "Surrogate model"
    * "Control variable"
    * "Interactive method"

* **Category 2: "Knowledge extraction"**
    * "Rule extraction"
        * "Teaching rule extraction"
        * "Decomposition rule extraction"
        * "Compromise rule extraction"
    * "Model distillation"

* **Category 3: "Impact level methods"**
    * "Sensitivity analysis"
    * "Layered correlation communications"
    * "Characteristic importance"

* **Category 4: "Instance-based samples"**
    * "Counterfactual explanations"

### Key Observations

* **Taxonomy by Pipeline Stage:** The primary organizational principle is the sequence of the machine learning workflow (Pre, In, Post), not the technical approach of the XAI method itself.

* **Asymmetry in Detail:** The "Post-modelling" branch is significantly more detailed than the others, suggesting a greater variety or emphasis on techniques applied after model training in this framework.

* **Hierarchical Depth:** The "Knowledge extraction" category under "Post-modelling" is the deepest part of the tree: it names three distinct types of "rule extraction" (teaching, decomposition, and compromise) alongside "Model distillation".

* **Label Specificity:** Some labels are very specific (e.g., "Attentional self-interpretation combine prediction and explanation"), while others are broad categories (e.g., "Visualisation").
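The observations above can be checked mechanically by encoding the tree as a nested dictionary. The following is a minimal sketch, not part of the diagram: the names `TAXONOMY`, `count_leaves`, and `depth` are hypothetical, and placing the three rule-extraction variants under "Rule extraction" (with "Model distillation" as its sibling) is an assumption drawn from the description rather than something the image confirms.

```python
# Taxonomy transcribed from the diagram description. An empty dict marks a leaf.
# Assumption: the three "... rule extraction" labels are children of "Rule extraction".
TAXONOMY = {
    "Sequence-based interpretation of XAI": {
        "Pre-modelling": {
            "Data collection": {},
            "Data clarification": {},
            "By design": {},
        },
        "In-modelling": {
            "Model-based interpretable methods": {},
            "Attentional self-interpretation combine prediction and explanation": {},
        },
        "Post-modelling": {
            "Visualisation": {
                "Surrogate model": {},
                "Control variable": {},
                "Interactive method": {},
            },
            "Knowledge extraction": {
                "Rule extraction": {
                    "Teaching rule extraction": {},
                    "Decomposition rule extraction": {},
                    "Compromise rule extraction": {},
                },
                "Model distillation": {},
            },
            "Impact level methods": {
                "Sensitivity analysis": {},
                "Layered correlation communications": {},
                "Characteristic importance": {},
            },
            "Instance-based samples": {
                "Counterfactual explanations": {},
            },
        },
    }
}


def count_leaves(node):
    """Count the leaf labels below a node (a childless node counts as one leaf)."""
    if not node:
        return 1
    return sum(count_leaves(child) for child in node.values())


def depth(node):
    """Count the levels below a node (0 for a leaf)."""
    if not node:
        return 0
    return 1 + max(depth(child) for child in node.values())


root = TAXONOMY["Sequence-based interpretation of XAI"]
for phase, subtree in root.items():
    print(f"{phase}: {count_leaves(subtree)} leaves, depth {depth(subtree)}")
```

Under these assumptions, the leaf counts and depths per phase make the asymmetry between "Post-modelling" and the other two branches explicit.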

### Interpretation

This diagram presents a structured, process-oriented framework for categorizing XAI techniques. It implies that interpretability is not a single step but a concern that spans the entire model development lifecycle.

* **Pre-modelling** focuses on **foundational transparency**—ensuring the data and design choices are sound and understandable from the start.

* **In-modelling** focuses on **intrinsic interpretability**—building models whose internal mechanisms are inherently easier to understand (like decision trees) or incorporating explanation generation directly into the model's operation (like attention mechanisms).

* **Post-modelling** focuses on **post-hoc explanation**—applying external tools to a trained "black-box" model to probe its behavior, extract simplified rules, visualize its decision boundaries, or explain individual predictions (e.g., via counterfactuals).
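To make the post-hoc idea concrete, here is a minimal sketch of two techniques named in the diagram, sensitivity analysis and counterfactual explanation, applied to a toy model that is only queried through its inputs and outputs. Everything here is illustrative: `black_box`, its coefficients, the feature names, and the sample input are invented, and the one-feature step search is just one simple way to look for a counterfactual, not a method taken from the diagram's source.

```python
import math


def black_box(x):
    """Hypothetical trained model: returns a probability for the positive class."""
    income, debt, age = x
    score = 0.8 * income - 1.5 * debt + 0.1 * age - 2.0
    return 1.0 / (1.0 + math.exp(-score))


def sensitivity(model, x, eps=1e-4):
    """One-at-a-time sensitivity: finite-difference slope of the output per feature."""
    base = model(x)
    slopes = []
    for i in range(len(x)):
        bumped = list(x)
        bumped[i] += eps
        slopes.append((model(bumped) - base) / eps)
    return slopes


def counterfactual(model, x, feature, step, threshold=0.5, max_steps=1000):
    """Move one feature in fixed steps until the predicted class flips.

    Returns the modified input, or None if no flip occurs within max_steps.
    """
    original_class = model(x) >= threshold
    candidate = list(x)
    for _ in range(max_steps):
        candidate[feature] += step
        if (model(candidate) >= threshold) != original_class:
            return candidate
    return None


applicant = [3.0, 2.0, 4.0]  # made-up values for income, debt, age
print("prediction:", black_box(applicant))
print("sensitivities:", sensitivity(black_box, applicant))
print("counterfactual:", counterfactual(black_box, applicant, feature=1, step=-0.1))
```

Neither function inspects the model's internals, which is what distinguishes these post-hoc probes from the in-modelling approaches: they would work unchanged on any callable that maps a feature list to a score.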

The depth of the "Post-modelling" section likely reflects the current research and application landscape, where significant effort goes into explaining complex models that have already been trained. The framework implies that a comprehensive XAI strategy should draw on methods from all three phases. The inclusion of "By design" under Pre-modelling and "Model-based interpretable methods" under In-modelling highlights the value of building interpretability into the system rather than relying solely on post-hoc analysis.
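As a contrast with post-hoc analysis, the sketch below shows one way a model can be interpretable by construction: a short ordered rule list in which the rule that fires is both the prediction and its explanation. The function name `predict_with_reason`, the features, and the thresholds are all hypothetical and chosen only for illustration.

```python
def predict_with_reason(applicant):
    """Hypothetical rule list: the first matching rule gives the label and the reason."""
    rules = [
        (lambda a: a["debt"] > 2.5, "reject", "debt above 2.5"),
        (lambda a: a["income"] >= 3.0, "approve", "income at least 3.0"),
    ]
    for condition, label, reason in rules:
        if condition(applicant):
            return label, reason
    # Default when no rule matches.
    return "reject", "no approval rule matched"


label, reason = predict_with_reason({"income": 3.0, "debt": 2.0})
print(f"{label}: {reason}")
```

Because the explanation is produced by the same logic that makes the decision, no separate explanation step is needed, at the cost of a far less expressive model than a typical black box.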