## Flowchart: Sequence-based Interpretation of XAI

### Overview

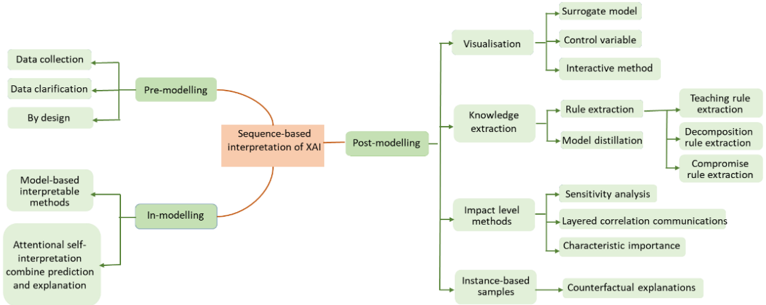

The flowchart organizes Explainable Artificial Intelligence (XAI) interpretation methods by the stage of the modelling pipeline at which they apply: **Pre-modelling**, **In-modelling**, and **Post-modelling**. Each phase groups specific methods and components, and arrows show how the phases and methods connect.

---

### Components

- **Nodes**: Represent stages, methods, or concepts (e.g., "Data collection," "Surrogate model").

- **Arrows**: Indicate directional relationships between nodes.

- **Color Coding**:

  - **Green**: Most nodes (standard processes).

  - **Orange**: Central node ("Sequence-based interpretation of XAI").

  - **Red**: Highlighted central node (key focus).

---

### Detailed Analysis

#### Pre-modelling

1. **Data collection**: Initial phase for gathering raw data.

2. **Data clarification**: Refining data for usability.

3. **By design**: Ensuring data aligns with predefined objectives.

#### In-modelling

1. **Model-based interpretable methods**: Techniques to build inherently interpretable models.

2. **Attentional self-interpretation**: Combines prediction and explanation during model training.
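
As an illustration of a model-based interpretable method, the sketch below fits a decision stump (a one-split decision tree) whose learned rule can be read directly. It is a minimal example, not a method taken from the flowchart: the `Stump` class, the `fit_stump` helper, the toy data, and the feature names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Stump:
    """A one-split classifier; the split itself is the explanation."""
    feature: int
    threshold: float
    left_label: int
    right_label: int

    def predict(self, row):
        if row[self.feature] <= self.threshold:
            return self.left_label
        return self.right_label

    def describe(self, names):
        return (f"if {names[self.feature]} <= {self.threshold:g} "
                f"then {self.left_label} else {self.right_label}")


def majority(labels):
    """Most frequent label in a non-empty list."""
    return max(set(labels), key=labels.count)


def fit_stump(X, y):
    """Exhaustively pick the (feature, threshold) split with the fewest
    training errors. Returns None if no feature has two distinct values."""
    best, best_errors = None, len(y) + 1
    for f in range(len(X[0])):
        values = sorted({row[f] for row in X})
        # Candidate thresholds: midpoints between consecutive distinct values.
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            l_lab, r_lab = majority(left), majority(right)
            errors = (sum(lab != l_lab for lab in left)
                      + sum(lab != r_lab for lab in right))
            if errors < best_errors:
                best, best_errors = Stump(f, t, l_lab, r_lab), errors
    return best


# Toy data (hypothetical): two features, binary label.
X = [[1.0, 5.0], [2.0, 3.0], [3.0, 8.0], [4.0, 1.0]]
y = [0, 0, 1, 1]
stump = fit_stump(X, y)
rule = stump.describe(["feature_a", "feature_b"])
print(rule)
```

Because the whole model is a single readable rule, no separate explanation step is needed; this is the trade-off that in-modelling methods accept in exchange for limited model capacity.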

#### Post-modelling

1. **Visualisation**:

   - **Surrogate model**: A simpler model trained to approximate a complex one.

   - **Control variable**: Holding other variables fixed to isolate the effect of one.

   - **Interactive method**: User-driven exploration of model behavior.

2. **Knowledge extraction**:

   - **Rule extraction**: Deriving decision rules from a trained model.

   - **Model distillation**: Training a smaller model to mimic a complex one.

   - **Compromise rule extraction**: Extracting rules while balancing accuracy against interpretability.

3. **Impact level methods**:

   - **Sensitivity analysis**: Testing how changes to inputs affect outputs.

   - **Layered correlation communications**: Explaining relationships between variables.

   - **Characteristic importance**: Identifying the features that most influence predictions.

4. **Instance-based samples**:

   - **Counterfactual explanations**: Hypothetical changes to an input that would alter the prediction.
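
To make the sensitivity-analysis entry concrete, the following sketch perturbs one input at a time and measures the change in output with a central finite difference. It assumes the model is an arbitrary callable on a list of floats; `black_box`, `sensitivity`, and the evaluation point are hypothetical stand-ins, and the resulting ranking is only a local, crude proxy for feature importance.

```python
def black_box(x):
    """Hypothetical opaque model: any callable on a list of floats would do.
    The third input is deliberately ignored."""
    return 3.0 * x[0] + 0.5 * x[1] ** 2


def sensitivity(model, x, eps=1e-4):
    """Central finite-difference estimate of d(model)/d(x_i) at the point x."""
    scores = []
    for i in range(len(x)):
        up, down = list(x), list(x)
        up[i] += eps
        down[i] -= eps
        scores.append((model(up) - model(down)) / (2 * eps))
    return scores


point = [1.0, 2.0, 5.0]
scores = sensitivity(black_box, point)

# Rank inputs by the magnitude of their local effect on the output.
ranking = sorted(range(len(point)), key=lambda i: abs(scores[i]), reverse=True)
print(scores, ranking)
```

At this point the first input has the largest local effect and the unused third input has none. The scores depend on where they are evaluated, so a different `point` can produce a different ranking.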

---

### Key Observations

- **Central Focus**: The orange "Sequence-based interpretation of XAI" node acts as the core, connecting all phases.

- **Sequential Structure**: Pre-modelling prepares the data, In-modelling builds interpretability into the model itself, and Post-modelling explains the model after training.

- **Color Emphasis**: The central node's color sets it apart from the green method nodes, drawing attention to the overarching goal of sequence-based XAI interpretation.

---

### Interpretation

The flowchart presents a **systematic, staged approach** to XAI in which interpretability is addressed at every stage:

1. **Pre-modelling** ensures data quality and alignment with objectives.

2. **In-modelling** prioritizes transparency by integrating interpretability directly into model design (e.g., attentional self-interpretation).

3. **Post-modelling** focuses on post-hoc explanations, using visualization and rule extraction to make complex models understandable. Techniques like counterfactual explanations and sensitivity analysis highlight the model's behavior and robustness.
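
A counterfactual explanation can be sketched as a search for a small input change that flips the model's prediction. The example below is a brute-force illustration under stated assumptions: `classify` is a hypothetical linear classifier, and `find_counterfactual` only tries changes to one feature at a time in fixed steps, which is far simpler than the optimisation-based methods used in practice.

```python
def classify(x):
    """Hypothetical binary classifier: returns 1 when the linear score is >= 0."""
    score = 0.8 * x[0] - 0.5 * x[1] - 1.0
    return 1 if score >= 0 else 0


def find_counterfactual(model, x, step=0.1, max_steps=100):
    """Return (new_input, feature_index, delta) for the smallest
    single-feature change, in multiples of `step`, that flips the
    prediction, or None if no such change is found within `max_steps`."""
    original = model(x)
    for k in range(1, max_steps + 1):      # grow the change gradually
        for i in range(len(x)):            # try each feature in turn
            for sign in (1, -1):           # increase, then decrease
                candidate = list(x)
                candidate[i] = x[i] + sign * k * step
                if model(candidate) != original:
                    return candidate, i, sign * k * step
    return None


x = [1.0, 1.0]
result = find_counterfactual(classify, x)
if result is not None:
    candidate, index, delta = result
    print(f"prediction flips from {classify(x)} to {classify(candidate)} "
          f"when feature {index} changes by {delta:+.1f}")
```

The returned change reads as an explanation of the form "the prediction would have been different if this feature had been this much higher or lower", which is what distinguishes instance-based explanations from global summaries of the model.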

This structure suggests that effective XAI requires **cross-phase collaboration**, where data preparation, model design, and post-hoc analysis work together to produce transparent, trustworthy AI systems.