## Diagram: LLM-based Knowledge Graph Construction

### Overview

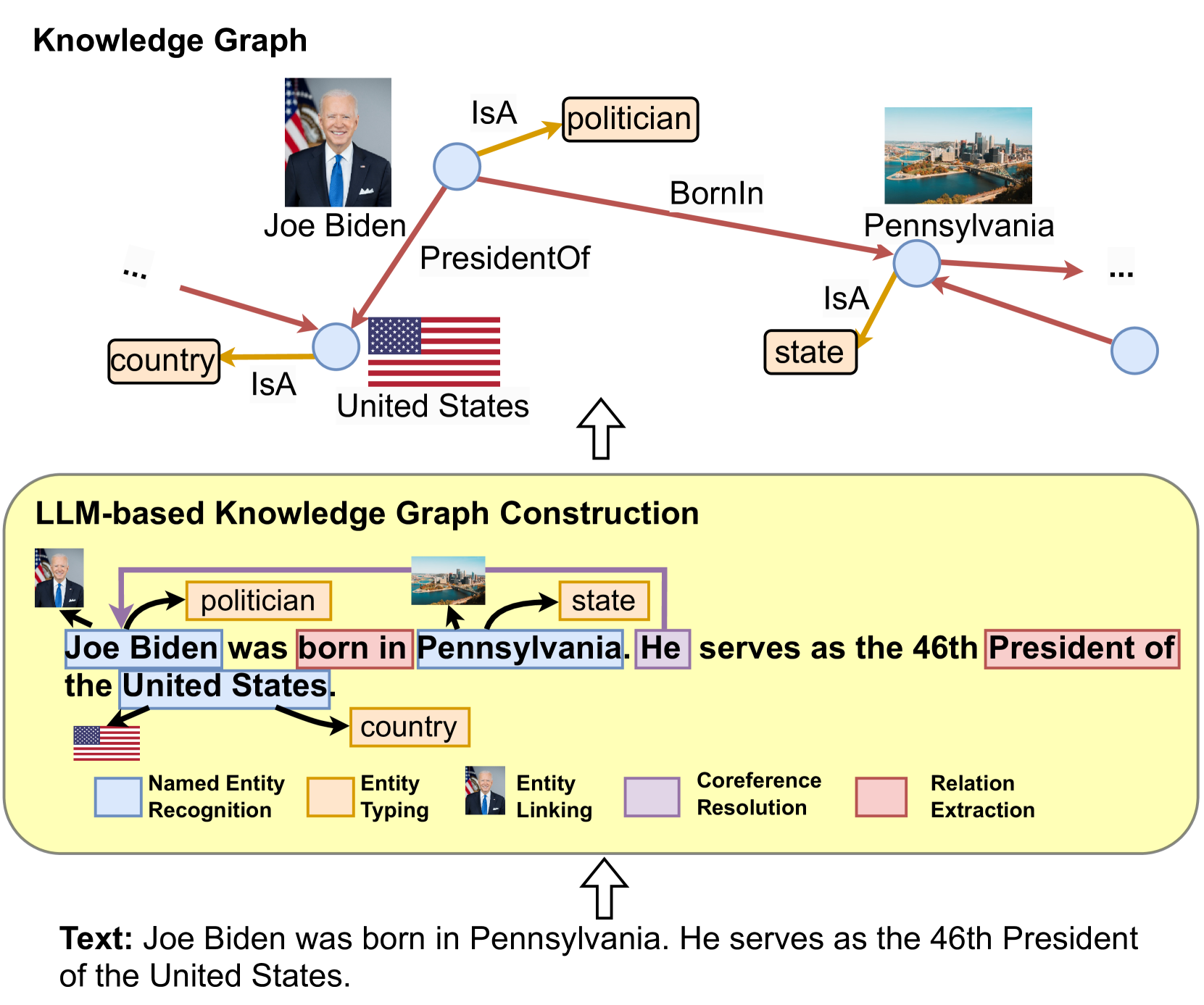

This diagram illustrates the process of constructing a knowledge graph from unstructured text using a Large Language Model (LLM). The top section displays the resulting knowledge graph, the middle section details the LLM-based extraction and linking pipeline that generates it, and the source text sits at the very bottom. Upward-pointing arrows indicate the overall flow: the source text is processed to create the structured graph at the top.

### Components/Axes

The diagram is segmented into three primary regions:

1. **Top Region (Knowledge Graph):** A network diagram with nodes (blue circles) and labeled, colored edges representing relationships.

2. **Middle Region (LLM-based Knowledge Graph Construction):** A yellow box containing the source sentence with color-coded annotations and a legend explaining the annotation scheme.

3. **Bottom Region (Source Text):** The raw input text.

**Legend (Located in the middle region, bottom):**

* **Light Blue Box:** Named Entity Recognition

* **Light Orange Box:** Entity Typing

* **Image of Joe Biden:** Entity Linking

* **Light Purple Box:** Coreference Resolution

* **Light Red Box:** Relation Extraction

**Nodes and Edges in the Knowledge Graph (Top Region):**

* **Nodes:** Represent entities. Visualized as blue circles, some accompanied by images (Joe Biden's portrait, US flag, Pennsylvania skyline).

* **Edges:** Represent relationships. Color-coded and labeled:

    * **Yellow Edges:** Labeled "IsA". Connect an entity to its type (e.g., Joe Biden -> politician, United States -> country, Pennsylvania -> state).

    * **Red/Brown Edges:** Labeled with specific relations. Connect one entity to another (e.g., Joe Biden -> BornIn -> Pennsylvania, Joe Biden -> PresidentOf -> United States).

### Detailed Analysis

**Source Text (Bottom Region):**

The input text consists of two sentences: "Joe Biden was born in Pennsylvania. He serves as the 46th President of the United States."

**LLM-based Construction Process (Middle Region):**

The source text is annotated to show the NLP tasks performed:

1. **Named Entity Recognition (Light Blue):** Identifies "Joe Biden", "Pennsylvania", "United States".

2. **Entity Typing (Light Orange):** Assigns types: "politician" (to Joe Biden), "state" (to Pennsylvania), "country" (to United States).

3. **Entity Linking (Image Icon):** Links the text "Joe Biden" to a specific real-world entity (represented by his portrait). Similarly, "Pennsylvania" is linked to a skyline image, and "United States" to a flag.

4. **Coreference Resolution (Light Purple):** Links the pronoun "He" back to the antecedent "Joe Biden".

5. **Relation Extraction (Light Red):** Extracts the relationships "born in" and "President of the".
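The five annotation layers above can be sketched as plain data. This is a minimal illustration that hard-codes the annotations visible in the diagram rather than calling an LLM; the `Mention` class, the `ent:` identifiers, and the helper names are hypothetical and do not come from the figure.

```python
from dataclasses import dataclass

TEXT = ("Joe Biden was born in Pennsylvania. "
        "He serves as the 46th President of the United States.")


@dataclass(frozen=True)
class Mention:
    surface: str      # span found by named entity recognition
    start: int        # character offset of the span in TEXT
    entity_type: str  # label assigned by entity typing
    entity_id: str    # hypothetical identifier standing in for entity linking


def make_mention(surface: str, entity_type: str, entity_id: str) -> Mention:
    """Locate the span in TEXT and bundle it with its type and link."""
    start = TEXT.find(surface)
    if start < 0:
        raise ValueError(f"{surface!r} does not occur in the source text")
    return Mention(surface, start, entity_type, entity_id)


# Named entity recognition + entity typing + entity linking (hand-written).
mentions = [
    make_mention("Joe Biden", "politician", "ent:joe_biden"),
    make_mention("Pennsylvania", "state", "ent:pennsylvania"),
    make_mention("United States", "country", "ent:united_states"),
]

# Coreference resolution: pronoun -> antecedent.
coreference = {"He": "Joe Biden"}

# Relation extraction: (subject as written, highlighted phrase, object).
raw_relations = [
    ("Joe Biden", "born in", "Pennsylvania"),
    ("He", "President of the", "United States"),
]


def resolve(subject: str) -> str:
    """Replace a pronoun with its antecedent; leave other subjects unchanged."""
    return coreference.get(subject, subject)


resolved_relations = [(resolve(s), p, o) for s, p, o in raw_relations]

for relation in resolved_relations:
    print(relation)
```

Keeping coreference as a separate lookup mirrors the diagram: the second relation only attaches to Joe Biden once "He" has been resolved.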

**Resulting Knowledge Graph (Top Region):**

The extracted information is structured into a graph:

* **Central Node:** Joe Biden (linked to his portrait).

* **Relationships from Joe Biden:**

    * `IsA` -> `politician` (yellow edge).

    * `BornIn` -> `Pennsylvania` (red edge).

    * `PresidentOf` -> `United States` (red edge).

* **Sub-graphs for Linked Entities:**

    * `Pennsylvania` (linked to skyline) `IsA` -> `state` (yellow edge). It has an outgoing red edge to an unspecified node ("...").

    * `United States` (linked to flag) `IsA` -> `country` (yellow edge). It has an incoming red edge from an unspecified node ("...").
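The labeled edges listed above can be written down as a small adjacency structure. The following is a sketch under stated assumptions: the function name `build_adjacency` is illustrative, and the two edges that touch unspecified "..." nodes are left out because the diagram does not say what they connect to.

```python
from collections import defaultdict

# Edges read directly from the diagram as (source, label, target).
triples = [
    ("Joe Biden", "IsA", "politician"),
    ("Joe Biden", "BornIn", "Pennsylvania"),
    ("Joe Biden", "PresidentOf", "United States"),
    ("Pennsylvania", "IsA", "state"),
    ("United States", "IsA", "country"),
]


def build_adjacency(edges):
    """Group outgoing (label, target) pairs by source node."""
    graph = defaultdict(list)
    for source, label, target in edges:
        graph[source].append((label, target))
    return dict(graph)


graph = build_adjacency(triples)

for label, target in graph["Joe Biden"]:
    print(f"Joe Biden -[{label}]-> {target}")
```

Type nodes such as `politician` have no outgoing edges in this fragment, so they appear only as targets.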

### Key Observations

* The diagram explicitly maps each NLP sub-task (from the legend) to specific highlights in the source text.

* The knowledge graph uses a consistent visual language: blue circles for nodes, yellow "IsA" edges for typing, and red/brown edges for specific relations.

* The graph is incomplete: some edges lead to or from unspecified nodes marked "...", suggesting it is a fragment of a larger knowledge base.

* The use of images (portrait, flag, skyline) alongside text labels serves as a form of entity disambiguation and visual grounding.

### Interpretation

This diagram serves as a pedagogical or technical illustration of how modern LLMs can be used for **structured information extraction**. It demonstrates the pipeline from unstructured natural language to a formal, queryable knowledge representation.

The process highlights several advanced NLP capabilities:

1. **Disambiguation:** Entity Linking ensures "Joe Biden" refers to the specific U.S. President, not another person with the same name.

2. **Contextual Understanding:** Coreference Resolution ("He" -> "Joe Biden") is crucial for connecting facts across sentences.

3. **Schema Induction:** The system automatically identifies entity types ("politician", "state", "country") and relation types ("BornIn", "PresidentOf"), which could be part of a predefined schema or dynamically inferred.
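For the predefined-schema case, one plausible reading is a lookup that maps each highlighted phrase to a relation label. The sketch below is only an assumption about how such a mapping might look; `RELATION_SCHEMA`, `normalize_relation`, and `to_triple` are hypothetical names, and the diagram does not specify how the phrases "born in" and "President of the" become `BornIn` and `PresidentOf`.

```python
# Hypothetical predefined schema: lower-cased surface phrase -> relation label.
RELATION_SCHEMA = {
    "born in": "BornIn",
    "president of the": "PresidentOf",
}


def normalize_relation(phrase):
    """Return the schema label for a phrase, or None if the schema lacks it."""
    key = " ".join(phrase.lower().split())  # fold case, collapse whitespace
    return RELATION_SCHEMA.get(key)


def to_triple(subject, phrase, obj):
    """Build a (subject, predicate, object) triple using the schema label."""
    predicate = normalize_relation(phrase)
    if predicate is None:
        raise ValueError(f"no schema entry for relation phrase {phrase!r}")
    return (subject, predicate, obj)


print(to_triple("Joe Biden", "born in", "Pennsylvania"))
print(to_triple("Joe Biden", "President of the", "United States"))
```

A dynamically inferred schema would replace the fixed dictionary with labels proposed by the model, which this sketch does not attempt.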

The output knowledge graph transforms two short biographical sentences into a set of **subject-predicate-object triples** (e.g., `(Joe Biden, BornIn, Pennsylvania)`). This structured format is fundamental for semantic search, question answering systems, and building larger AI knowledge bases. The diagram presents the LLM as the "engine" for this extraction and structuring task, bridging the gap between human language and machine-readable knowledge.
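To make "queryable" concrete, here is a minimal sketch of pattern matching over the triples, where `None` acts as a wildcard. The `match` function is an illustrative helper, not something the diagram defines, and real triple stores offer far richer query languages.

```python
TRIPLES = [
    ("Joe Biden", "IsA", "politician"),
    ("Joe Biden", "BornIn", "Pennsylvania"),
    ("Joe Biden", "PresidentOf", "United States"),
    ("Pennsylvania", "IsA", "state"),
    ("United States", "IsA", "country"),
]


def match(triples, subject=None, predicate=None, obj=None):
    """Return every triple that agrees with all non-None fields."""
    return [
        (s, p, o)
        for s, p, o in triples
        if (subject is None or s == subject)
        and (predicate is None or p == predicate)
        and (obj is None or o == obj)
    ]


# "Where was Joe Biden born?" becomes a lookup with the object left open.
birthplaces = [o for _, _, o in match(TRIPLES, subject="Joe Biden", predicate="BornIn")]
print(birthplaces)

# "Which entities have a type?" leaves the subject and object open.
typed_entities = [s for s, _, _ in match(TRIPLES, predicate="IsA")]
print(typed_entities)
```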