
## Bar Chart: Performance Benchmarks

### Overview

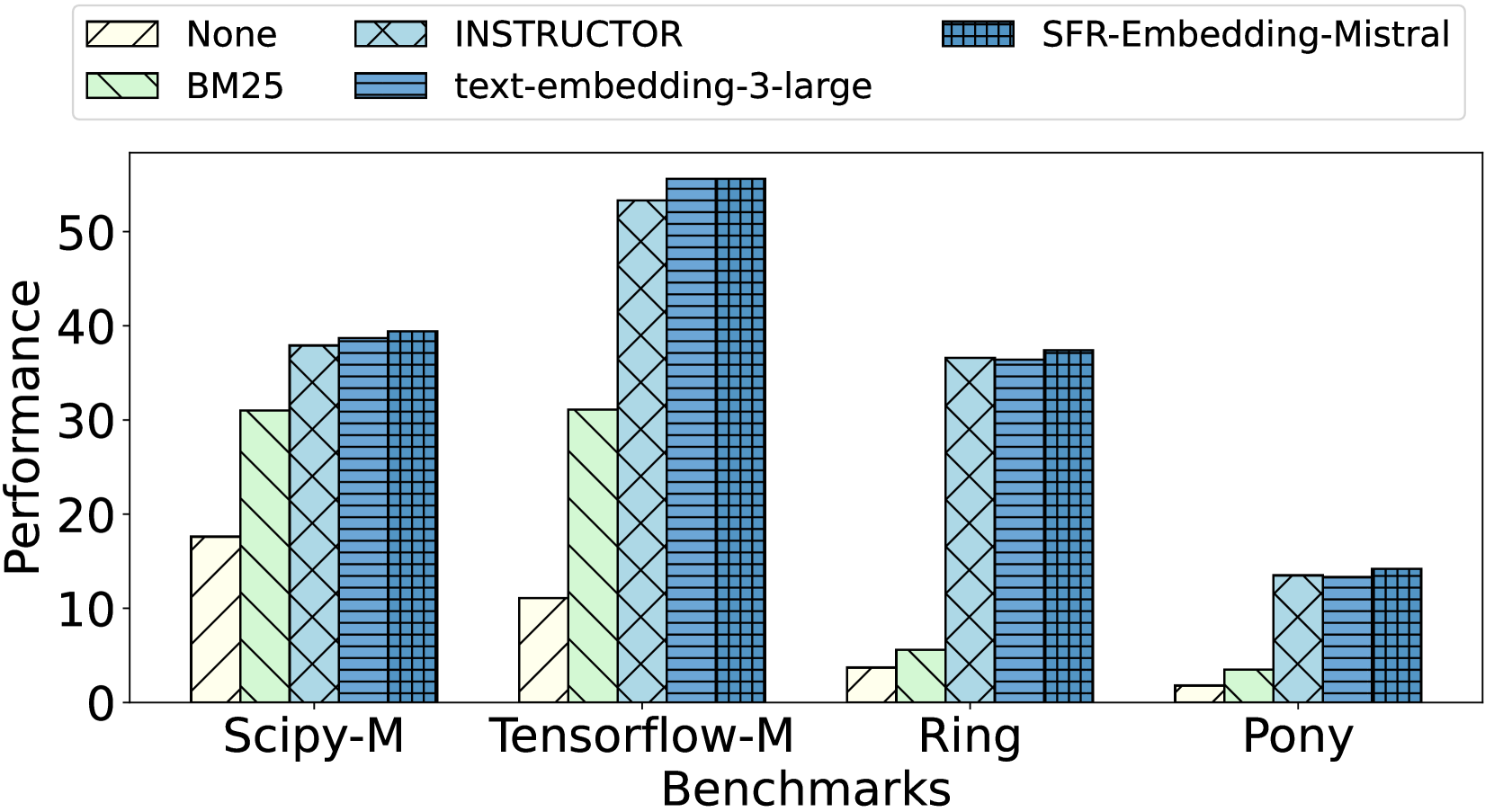

This grouped bar chart compares five methods (None, INSTRUCTOR, SFR-Embedding-Mistral, BM25, and text-embedding-3-large) across four benchmarks: Scipy-M, Tensorflow-M, Ring, and Pony. The y-axis shows performance, with higher bars indicating better scores.

### Components/Axes

* **X-axis:** Benchmarks (Scipy-M, Tensorflow-M, Ring, Pony)

* **Y-axis:** Performance (ticks in increments of 10; the highest labeled tick is 50, although several Tensorflow-M values exceed it)

* **Legend:**

  * None (Light Gray, diagonal stripes)

  * INSTRUCTOR (Blue, hatched)

  * SFR-Embedding-Mistral (Dark Blue, solid)

  * BM25 (Light Green, diagonal stripes)

  * text-embedding-3-large (Medium Blue, solid)

### Detailed Analysis

Each benchmark has a group of five bars, one per method. All values below are approximate readings from the chart.

**Scipy-M:**

* None: Approximately 17.

* INSTRUCTOR: Approximately 38.

* SFR-Embedding-Mistral: Approximately 41.

* BM25: Approximately 32.

* text-embedding-3-large: Approximately 40.

**Tensorflow-M:**

* None: Approximately 28.

* INSTRUCTOR: Approximately 54.

* SFR-Embedding-Mistral: Approximately 56.

* BM25: Approximately 30.

* text-embedding-3-large: Approximately 52.

**Ring:**

* None: Approximately 2.

* INSTRUCTOR: Approximately 38.

* SFR-Embedding-Mistral: Approximately 40.

* BM25: Approximately 34.

* text-embedding-3-large: Approximately 36.

**Pony:**

* None: Approximately 4.

* INSTRUCTOR: Approximately 12.

* SFR-Embedding-Mistral: Approximately 14.

* BM25: Approximately 8.

* text-embedding-3-large: Approximately 12.

### Key Observations

* SFR-Embedding-Mistral achieves the highest score on all four benchmarks, although its lead over the runner-up is only about 1 to 2 points.

* The "None" setting has the lowest score on every benchmark.

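* Among the four retrieval methods, Tensorflow-M shows the largest spread (from about 30 for BM25 to about 56 for SFR-Embedding-Mistral). Including the "None" setting, Ring has the widest overall range (about 2 to 40).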

* Pony has by far the lowest scores (none above about 14). Ring's lower average is driven mainly by the near-zero "None" bar; its other scores (about 34 to 40) are close to those on Scipy-M (about 32 to 41).

* INSTRUCTOR and text-embedding-3-large are within about 2 points of each other on every benchmark.
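To make these observations easy to re-check, the sketch below encodes the approximate readings from the Detailed Analysis in a plain Python dictionary and computes, per benchmark, the best and worst method, the score range with and without the "None" setting, the leader's margin over the runner-up, and the mean. The values are eyeballed from the chart, so every derived number inherits that imprecision; the names `SCORES`, `summarize`, and `max_pairwise_gap` are illustrative and not part of any published benchmark code.

```python
# Approximate values as read from the chart (not exact reported numbers).
SCORES = {
    "Scipy-M":      {"None": 17, "INSTRUCTOR": 38, "SFR-Embedding-Mistral": 41,
                     "BM25": 32, "text-embedding-3-large": 40},
    "Tensorflow-M": {"None": 28, "INSTRUCTOR": 54, "SFR-Embedding-Mistral": 56,
                     "BM25": 30, "text-embedding-3-large": 52},
    "Ring":         {"None": 2, "INSTRUCTOR": 38, "SFR-Embedding-Mistral": 40,
                     "BM25": 34, "text-embedding-3-large": 36},
    "Pony":         {"None": 4, "INSTRUCTOR": 12, "SFR-Embedding-Mistral": 14,
                     "BM25": 8, "text-embedding-3-large": 12},
}


def summarize(scores):
    """Return per-benchmark best/worst method, score ranges, leader margin, and mean."""
    summary = {}
    for bench, row in scores.items():
        best = max(row, key=row.get)
        worst = min(row, key=row.get)
        # Scores of the retrieval methods only, i.e. excluding the "None" setting.
        retrieval = [v for m, v in row.items() if m != "None"]
        ranked = sorted(row.values(), reverse=True)
        summary[bench] = {
            "best": best,
            "worst": worst,
            "range_all": row[best] - row[worst],
            "range_excl_none": max(retrieval) - min(retrieval),
            "margin_over_runner_up": ranked[0] - ranked[1],
            "mean": sum(row.values()) / len(row),
        }
    return summary


def max_pairwise_gap(scores, a, b):
    """Largest absolute difference between methods a and b across benchmarks."""
    return max(abs(row[a] - row[b]) for row in scores.values())


if __name__ == "__main__":
    for bench, s in summarize(SCORES).items():
        print(f"{bench:13s} best={s['best']} worst={s['worst']} "
              f"range={s['range_all']} range_excl_none={s['range_excl_none']} "
              f"margin={s['margin_over_runner_up']} mean={s['mean']:.1f}")
    print("max INSTRUCTOR vs text-embedding-3-large gap:",
          max_pairwise_gap(SCORES, "INSTRUCTOR", "text-embedding-3-large"))
```

With these readings, the script reports SFR-Embedding-Mistral as best and "None" as worst everywhere, a retrieval-only spread of 26 points on Tensorflow-M versus 6 to 9 elsewhere, and a maximum INSTRUCTOR vs text-embedding-3-large gap of 2 points.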

### Interpretation

SFR-Embedding-Mistral scores highest on all four benchmarks, but its lead over the runner-up is only about 1 to 2 points, which is close to the precision of reading values off the chart. The wide gap on Tensorflow-M comes mainly from BM25, which scores about 30 (barely above "None" at about 28) while the three embedding models reach about 52 to 56; on this benchmark, the choice of retriever matters a great deal and lexical matching helps little. Every method beats "None" on every benchmark, most dramatically on Ring (about 2 versus 34 to 40), and the embedding models also beat BM25 on all four benchmarks, which suggests that retrieval of any kind helps and dense embeddings add further gains. Pony stays low for every method (at most about 14), which may mean it is harder or requires different model characteristics; Ring, by contrast, is low only for "None". INSTRUCTOR and text-embedding-3-large are within about 2 points of each other everywhere, so the chart does not separate them; because it contains no cost data, any trade-off between performance and computational cost would have to be judged from other sources. Overall, the chart gives a compact per-benchmark comparison of these methods.