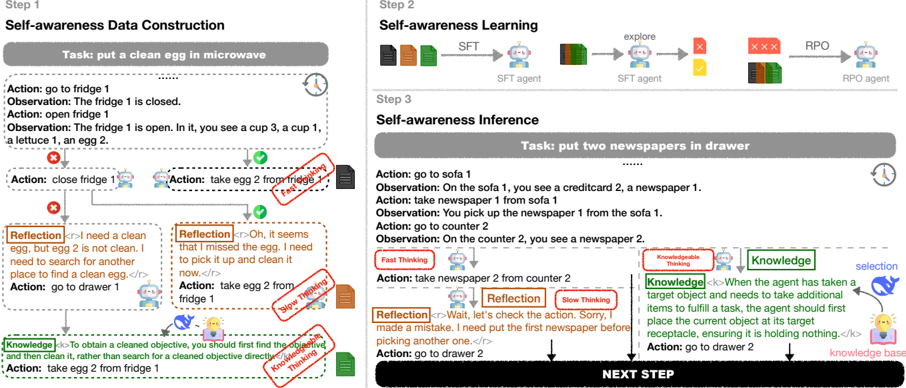

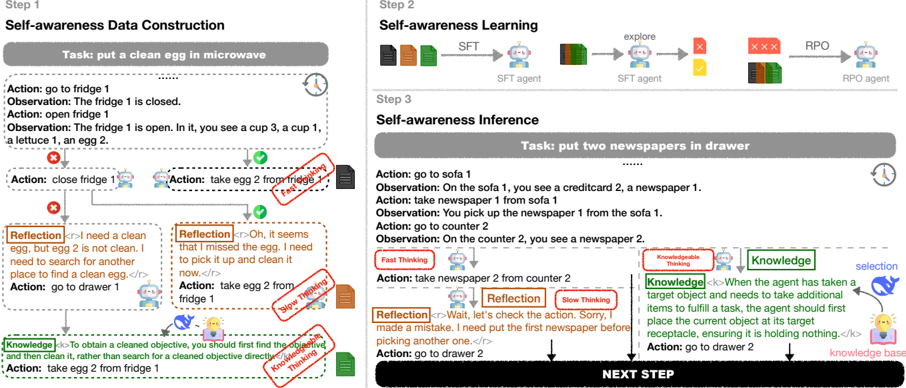

## Diagram: Self-Awareness in AI Agent Task Execution

### Overview

The image is a technical diagram illustrating a three-step process for developing and deploying a self-aware AI agent capable of executing tasks, reflecting on its actions, and incorporating external knowledge. The diagram is divided into three main panels: Step 1 (Data Construction), Step 2 (Learning), and Step 3 (Inference). It uses a combination of text, flowcharts, icons, and annotations to explain the methodology.

### Components/Axes

The diagram is organized into three sequential steps, arranged from left to right.

* **Step 1 (Left Panel):** Titled "Self-awareness Data Construction". It details the process of generating training data through a specific task example.

* **Step 2 (Top Right Panel):** Titled "Self-awareness Learning". It shows a high-level training pipeline with two stages: SFT (Supervised Fine-Tuning) followed by RPO (an optimization stage whose acronym the diagram does not expand).

* **Step 3 (Bottom Right Panel):** Titled "Self-awareness Inference". It demonstrates the agent applying its learned capabilities to a new, more complex task.

**Visual Elements & Legend:**

* **Icons:** A robot icon represents the AI agent. A clock icon indicates time or sequence. A red "X" indicates an incorrect or suboptimal action. A green checkmark indicates a correct action.

* **Color-Coded Boxes:**

  * **Grey Boxes:** Contain the high-level task description.

  * **White Boxes with Black Text:** Contain the agent's actions and observations.

  * **Orange Dashed Boxes:** Contain "Reflection" steps, where the agent evaluates its past actions.

  * **Green Dashed Boxes:** Contain "Knowledge" retrieved from an external knowledge base.

* **Red Stamps:** Annotate specific processes: "Data Processing", "Data Checking", "Knowledge Checking", "Knowledge Updating".

* **Arrows:** Indicate the flow of the process, sequence of steps, and data flow between components in the learning pipeline.

### Detailed Analysis

#### **Step 1: Self-awareness Data Construction**

* **Task:** "put a clean egg in microwave"

* **Process Flow:**

1. **Action:** "go to fridge 1" -> **Observation:** "The fridge 1 is closed."

2. **Action:** "open fridge 1" -> **Observation:** "The fridge 1 is open. In it, you see a cup 3, a cup 1, a lettuce 1, and egg 2."

3. **Branching Path:**

   * **Incorrect Path (Marked with Red X):** Action: "close fridge 1". This leads to a **Reflection**: "I need a clean egg, but egg 2 is not clean. I need to search for another one to finish the task." -> Subsequent Action: "go to drawer 1".

   * **Correct Path (Marked with Green Check):** Action: "take egg 2 from fridge 1". This is annotated with a red "Data Processing" stamp.

4. **Reflection (on correct path):** "Oh, it seems that I missed the egg. I need to pick it up and clean it now." -> Annotated with a red "Data Checking" stamp.

5. **Action:** "take egg 2 from fridge 1" (This appears to be a repeated or corrected action after reflection).

6. **Knowledge Integration:** A green "Knowledge" box states: "<->To obtain a cleaned objective, you should first find the objective, then clean it, which constitutes a cleaned objective directly." This is annotated with a red "Knowledge Checking" stamp.

7. **Final Action:** "take egg 2 from fridge 1".
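The trajectory described above can be represented as a small data structure. The following is a minimal sketch, not the authors' data format: the class names `Step` and `Trajectory`, their fields, and the `render` layout are all assumptions made for illustration. Only the task, action, observation, and reflection strings come from the diagram.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Step:
    """One agent step. Field names are hypothetical, not from the diagram."""
    action: str
    observation: Optional[str] = None
    reflection: Optional[str] = None  # text of an orange "Reflection" box, if any
    knowledge: Optional[str] = None   # text of a green "Knowledge" box, if any


@dataclass
class Trajectory:
    task: str
    steps: List[Step] = field(default_factory=list)

    def render(self) -> str:
        """Flatten the trajectory into plain text, one line per element."""
        lines = [f"Task: {self.task}"]
        for s in self.steps:
            # Reflection and knowledge precede the action they motivate.
            if s.reflection:
                lines.append(f"Reflection: {s.reflection}")
            if s.knowledge:
                lines.append(f"Knowledge: {s.knowledge}")
            lines.append(f"Action: {s.action}")
            if s.observation:
                lines.append(f"Observation: {s.observation}")
        return "\n".join(lines)


traj = Trajectory(
    task="put a clean egg in microwave",
    steps=[
        Step("go to fridge 1", "The fridge 1 is closed."),
        Step(
            "open fridge 1",
            "The fridge 1 is open. In it, you see a cup 3, a cup 1, "
            "a lettuce 1, and egg 2.",
        ),
        Step(
            "take egg 2 from fridge 1",
            reflection="Oh, it seems that I missed the egg. "
                       "I need to pick it up and clean it now.",
        ),
    ],
)
print(traj.render())
```

Keeping reflection and knowledge as optional fields on each step means plain action steps and annotated steps share one record type, which is one simple way to mix both kinds of example in a single training set.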

#### **Step 2: Self-awareness Learning**

This panel shows a simplified training pipeline:

1. **Input:** Three colored blocks (representing data).

2. **SFT Stage:** Data flows into an "SFT agent" (robot icon). The output is labeled "SFT".

3. **Exploration Stage:** The SFT agent interacts with an environment (grid world icon), generating trajectories marked with red "X"s and a yellow "?".

4. **RPO Stage:** The exploration data (red X's and yellow ?) and original data (colored blocks) are fed into an "RPO agent". The output is labeled "RPO".
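One way to picture how exploration data and original data could be combined for the second stage is as preference pairs. This is a sketch under a strong assumption: the diagram does not say how RPO consumes its inputs, so the chosen/rejected pairing below, the `PreferencePair` class, and the `build_pairs` function are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PreferencePair:
    """Hypothetical training record: a preferred and a dispreferred trajectory."""
    task: str
    chosen: str    # trajectory taken from the original data
    rejected: str  # differing trajectory produced during exploration


def build_pairs(
    original: Dict[str, str],
    explored: Dict[str, List[str]],
) -> List[PreferencePair]:
    """Pair each explored trajectory that differs from the original with it.

    Assumption: any exploration result that deviates from the original
    trajectory counts as the dispreferred side of a pair.
    """
    pairs = []
    for task, reference in original.items():
        for attempt in explored.get(task, []):
            if attempt != reference:
                pairs.append(PreferencePair(task, reference, attempt))
    return pairs


original = {
    "put a clean egg in microwave":
        "go to fridge 1 -> open fridge 1 -> take egg 2 from fridge 1",
}
explored = {
    "put a clean egg in microwave": [
        "go to fridge 1 -> open fridge 1 -> close fridge 1 -> go to drawer 1",
        "go to fridge 1 -> open fridge 1 -> take egg 2 from fridge 1",
    ],
}
pairs = build_pairs(original, explored)
print(len(pairs), pairs[0].rejected)
```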

#### **Step 3: Self-awareness Inference**

* **Task:** "put two newspapers in drawer"

* **Process Flow:**

1. **Action:** "go to sofa 1" -> **Observation:** "On the sofa 1, you see a creditcard2, a newspaper 1."

2. **Action:** "take newspaper 1 from sofa 1" -> **Observation:** "You pick up the newspaper 1 from the sofa 1."

3. **Action:** "go to counter 2" -> **Observation:** "On counter 2, you see a newspaper 2."

4. **Fast Thinking:** Action: "take newspaper 2 from counter 2".

5. **Slow Thinking (Reflection):** A "Reflection" box interrupts: "Wait, let's check the action. Sorry, I made a mistake. I need put the first newspaper before picking another one."

6. **Knowledge Integration:** A "Knowledge" box (green outline) is queried. The retrieved "Knowledge" states: "<->When the agent has taken a target object and needs to take additional items to fulfill a task, the agent should first place the taken object in its designated receptacle, ensuring it is holding nothing.</>" This is linked to a "knowledge base" icon and a "selection" process.

7. **Corrected Action:** "go to drawer 2".

8. **Final Prompt:** A large black box at the bottom reads "NEXT STEP".
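The fast and slow paths in this example can be sketched as a single decision function. In the diagram the trained agent itself decides when to reflect and which knowledge to select; the hand-written check `needs_reflection`, the keyword lookup `select_knowledge`, and the `decide` function below are hypothetical stand-ins for illustration only. The knowledge text is quoted from the diagram.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical knowledge base: (action prefix that triggers the rule, rule text).
KNOWLEDGE_BASE: List[Tuple[str, str]] = [
    (
        "take ",
        "When the agent has taken a target object and needs to take "
        "additional items to fulfill a task, the agent should first place "
        "the taken object in its designated receptacle, ensuring it is "
        "holding nothing.",
    ),
]


def select_knowledge(action: str) -> Optional[str]:
    """Return the first rule whose trigger prefix matches the action."""
    for trigger, rule in KNOWLEDGE_BASE:
        if action.startswith(trigger):
            return rule
    return None


def needs_reflection(action: str, holding: Optional[str]) -> bool:
    """Toy check: the agent tries to pick something up while its hands are full."""
    return action.startswith("take ") and holding is not None


def decide(proposed: str, holding: Optional[str], receptacle: str) -> Dict[str, Optional[str]]:
    """Accept the proposed action (fast) or replace it after reflection (slow)."""
    if not needs_reflection(proposed, holding):
        return {"mode": "fast", "action": proposed, "knowledge": None}
    # Slow path: consult knowledge and go put down the held object first.
    return {
        "mode": "slow",
        "action": f"go to {receptacle}",
        "knowledge": select_knowledge(proposed),
    }


first = decide("take newspaper 1 from sofa 1", holding=None, receptacle="drawer 2")
second = decide("take newspaper 2 from counter 2", holding="newspaper 1", receptacle="drawer 2")
print(first["mode"], "|", second["mode"], second["action"])
```

With nothing in hand, the first pickup goes through unchanged. The second pickup is intercepted and replaced by "go to drawer 2", matching the corrected action in the diagram.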

### Key Observations

1. **Dual-Process Architecture:** The diagram explicitly models "Fast Thinking" (immediate action) and "Slow Thinking" (reflection and correction), mirroring dual-process (System 1 and System 2) theories from cognitive science.

2. **Knowledge-Augmented Reflection:** The agent doesn't just reflect internally; it queries an external "Knowledge" base to guide its corrections, as seen in both Step 1 and Step 3.

3. **Error-Driven Learning:** The construction of training data (Step 1) and the inference process (Step 3) both highlight and correct suboptimal actions, suggesting the system learns from mistakes.

4. **Procedural Knowledge at Two Levels of Generality:** Both "Knowledge" entries are procedural rules rather than declarative facts. The rule in Step 1 is general ("find, then clean"). The rule in Step 3 is more complex and context-dependent, covering task sequencing and object management.

5. **Spatial Layout:** The learning pipeline (Step 2) is positioned above the inference example (Step 3), visually separating the training phase from the deployment phase.

### Interpretation

This diagram presents a framework for creating AI agents that exhibit a form of **procedural self-awareness**. The agent is not merely executing a policy but is capable of monitoring its own state (e.g., "I am holding nothing"), evaluating the sufficiency of its actions against a goal ("I need a *clean* egg"), and consulting a knowledge base to resolve uncertainties or correct errors in its plan.

The process demonstrates a move from **reactive execution** to **deliberative planning**. The "Reflection" mechanism acts as an internal critic, while the "Knowledge" component serves as an external guide. The transition from Step 1 (constructing data with reflections) to Step 2 (learning from such data) to Step 3 (applying reflections and knowledge in a new task) suggests a methodology for instilling this deliberative capacity. The key innovation appears to be the structured integration of fast action, slow reflection, and external knowledge to handle long-horizon, multi-step tasks where object states (like "clean") and task dependencies (like "place X before picking Y") are critical. The red stamps ("Data Processing", "Knowledge Checking") imply a rigorous pipeline for curating and validating the data and knowledge that fuel this self-aware process.
