## Diagram: Taxonomy of Explainability Techniques for Large Language Models (LLMs)

### Overview

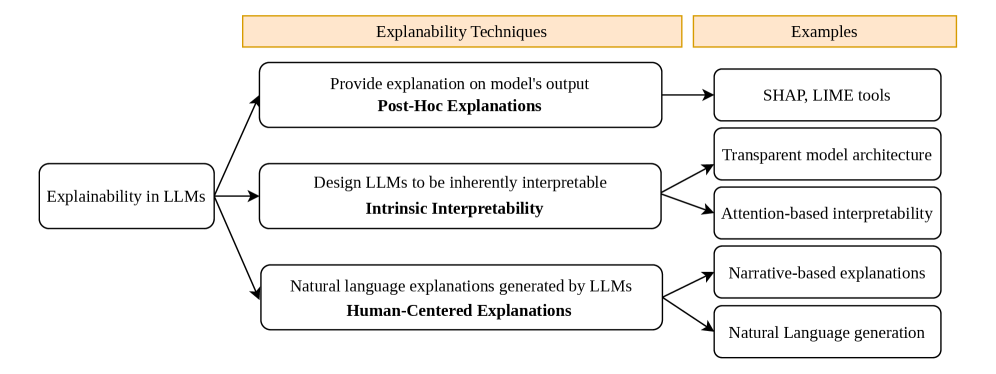

The image is a hierarchical flowchart diagram illustrating a taxonomy of techniques for achieving explainability in Large Language Models (LLMs). It categorizes approaches into three primary methods, each with associated examples. The diagram flows from a central concept on the left to specific techniques and their implementations on the right.

### Components/Axes

The diagram is structured with two header boxes at the top and a tree-like flow below.

**Header Boxes (Top, Orange Background):**

* **Left Header:** "Explainability Techniques"

* **Right Header:** "Examples"

**Main Flow (Left to Right):**

1. **Root Node (Far Left):** A single rounded rectangle labeled "Explainability in LLMs".

2. **Primary Technique Nodes (Center Column):** Three rounded rectangles branch from the root node, each representing a core technique category. The technique name is in bold at the bottom of each box.

* Top: "Provide explanation on model's output" / **Post-Hoc Explanations**

* Middle: "Design LLMs to be inherently interpretable" / **Intrinsic Interpretability**

* Bottom: "Natural language explanations generated by LLMs" / **Human-Centered Explanations**

3. **Example Nodes (Right Column):** Five rounded rectangles provide specific examples, connected by arrows from their parent technique node.

* Connected to *Post-Hoc Explanations*: "SHAP, LIME tools"

* Connected to *Intrinsic Interpretability*: "Transparent model architecture" and "Attention-based interpretability"

* Connected to *Human-Centered Explanations*: "Narrative-based explanations" and "Natural Language generation"

**Visual Relationships:** Black arrows indicate the flow of categorization, originating from the "Explainability in LLMs" node and pointing to the three technique nodes. Further arrows connect each technique node to its corresponding example nodes.
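The tree described above can be written down as a small nested data structure. The following is a minimal sketch: the node labels are copied from the diagram, while the dictionary layout and the `leaf_paths` helper are illustrative assumptions, not part of the original figure.

```python
# Node labels come from the diagram; the nested-dict layout is an assumption.
TAXONOMY = {
    "Explainability in LLMs": {
        "Post-Hoc Explanations": ["SHAP, LIME tools"],
        "Intrinsic Interpretability": [
            "Transparent model architecture",
            "Attention-based interpretability",
        ],
        "Human-Centered Explanations": [
            "Narrative-based explanations",
            "Natural Language generation",
        ],
    }
}


def leaf_paths(tree):
    """Yield (root, technique, example) for every example node (hypothetical helper)."""
    for root, techniques in tree.items():
        for technique, examples in techniques.items():
            for example in examples:
                yield (root, technique, example)


paths = list(leaf_paths(TAXONOMY))
for root, technique, example in paths:
    # Each printed line follows one chain of arrows from left to right.
    print(f"{root} -> {technique} -> {example}")
```

Each printed path corresponds to one chain of arrows in the diagram, from the root node through a technique node to an example node.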

### Detailed Analysis

The diagram presents a clear, three-tiered classification system:

1. **Post-Hoc Explanations:** This technique focuses on analyzing a trained model after the fact. The description "Provide explanation on model's output" indicates these methods are applied externally to the model's decision process. The examples given are "SHAP, LIME tools," two widely used interpretability tools: LIME is model-agnostic, and SHAP offers both model-agnostic and model-specific explainers.

2. **Intrinsic Interpretability:** This technique involves building interpretability into the model's design from the start, as stated by "Design LLMs to be inherently interpretable." It branches into two sub-approaches:

* "Transparent model architecture": Suggesting models designed with simpler, more understandable structures.

* "Attention-based interpretability": Leveraging the attention mechanism's weights to infer what parts of the input the model focuses on.

3. **Human-Centered Explanations:** This technique uses the LLM's own generative capability to explain itself, described as "Natural language explanations generated by LLMs." It also has two sub-approaches:

* "Narrative-based explanations": Generating coherent stories or step-by-step reasoning.

* "Natural Language generation": A broader category for producing explanatory text.
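The idea behind "Attention-based interpretability" in the list above, reading attention weights as a hint about which input tokens the model focuses on, can be illustrated with a minimal sketch. It assumes standard scaled dot-product attention for a single query; the tokens, key vectors, and query vector are made-up toy values, and whether such weights are faithful explanations of model behavior remains debated.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    return softmax(scores)


# Toy values, chosen only for illustration (not taken from any real model).
tokens = ["the", "movie", "was", "great"]
keys = [[0.1, 0.0], [0.5, 0.2], [0.0, 0.1], [0.9, 0.8]]
query = [1.0, 1.0]

weights = attention_weights(query, keys)
# Rank tokens from most to least attended.
ranked = sorted(zip(tokens, weights), key=lambda pair: pair[1], reverse=True)
for token, weight in ranked:
    print(f"{token}: {weight:.3f}")
```

In a real LLM the weights would be read from the model's attention layers rather than computed from hand-picked vectors, and there would be many heads and layers to aggregate over.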

### Key Observations

* The diagram establishes a clear hierarchy: a single problem ("Explainability in LLMs") is addressed by three distinct philosophical approaches (Post-Hoc, Intrinsic, Human-Centered), which are then grounded in concrete methods or tools.

* The "Intrinsic Interpretability" and "Human-Centered Explanations" categories each branch into two example nodes, whereas "Post-Hoc Explanations" has a single example node that names two tools (SHAP and LIME).

* The visual layout uses consistent shapes (rounded rectangles) and arrow styles, with color used only in the header boxes to separate the conceptual labels ("Explainability Techniques", "Examples") from the content.

### Interpretation

This diagram serves as a conceptual map for understanding the landscape of LLM explainability. It suggests that there is no single solution; rather, the field employs a multi-pronged strategy.

* **The diagram suggests a progression in approach:** From external analysis (Post-Hoc), to internal design (Intrinsic), to collaborative dialogue (Human-Centered). This can be read as a shift from treating the model as a black box to be probed, to a transparent system, to an interactive partner.

* **The elements relate to each other as a taxonomy.** The root defines the domain, the primary nodes define the strategic categories, and the leaf nodes provide actionable instances. This structure helps researchers and practitioners locate specific methods within a broader framework.

* **A notable insight is the inclusion of "Human-Centered Explanations."** This category acknowledges that for complex systems like LLMs, a technically perfect explanation may be less useful than one that is naturally understandable to a human user, even if it is generated by the model itself. This highlights a key tension in the field between mechanistic interpretability and practical utility.

* The diagram implies that "Intrinsic Interpretability" might be the most challenging, as it requires fundamental changes to model architecture, whereas "Post-Hoc" and "Human-Centered" methods can often be applied to existing models.
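The point that post-hoc methods can be applied to an existing model can be illustrated with a small perturbation sketch. This is a leave-one-out (occlusion) attribution, a simpler technique than SHAP or LIME and not an implementation of either; `toy_model` is a hypothetical stand-in for any already-trained model that can only be queried for a score.

```python
def toy_model(tokens):
    """Hypothetical black-box scorer standing in for an existing model."""
    word_scores = {"great": 2.0, "boring": -1.5, "not": -0.5}
    return sum(word_scores.get(token, 0.0) for token in tokens)


def occlusion_attributions(model, tokens):
    """Attribute the score to each token by removing it and re-querying the model."""
    baseline = model(tokens)
    attributions = []
    for i, token in enumerate(tokens):
        reduced = tokens[:i] + tokens[i + 1:]
        # A large drop in score means the removed token mattered.
        attributions.append((token, baseline - model(reduced)))
    return attributions


tokens = ["the", "movie", "was", "great"]
attrs = occlusion_attributions(toy_model, tokens)
for token, contribution in attrs:
    print(f"{token}: {contribution:+.1f}")
```

The sketch only calls the model as a function and never inspects its internals, which is why this family of methods needs no architectural change.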