## Flowchart: Explainability in LLMs

### Overview

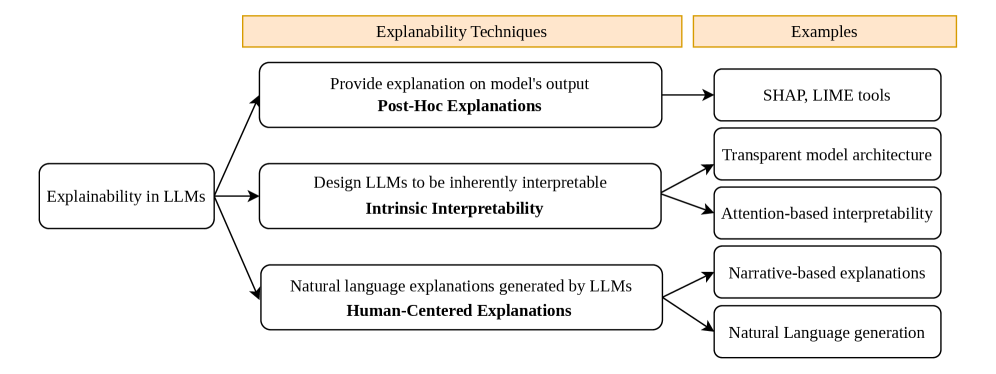

The flowchart illustrates the relationship between **Explainability Techniques** and their corresponding **Examples** in the context of Large Language Models (LLMs). It categorizes three core explainability approaches and maps them to specific tools, methodologies, or outcomes.

---

### Components/Axes

1. **Left Column (Explainability Techniques)**:

- **Post-Hoc Explanations**: "Provide explanation on model's output"

- **Intrinsic Interpretability**: "Design LLMs to be inherently interpretable"

- **Human-Centered Explanations**: "Natural language explanations generated by LLMs"

2. **Right Column (Examples)**:

- **Post-Hoc Explanations** → SHAP, LIME tools

- **Intrinsic Interpretability** → Transparent model architecture, Attention-based interpretability

- **Human-Centered Explanations** → Narrative-based explanations, Natural Language generation

3. **Arrows**: Connect techniques to their examples, indicating direct relationships.

---

### Detailed Analysis

- **Post-Hoc Explanations** (reactive explanations applied after model output):

- Tools: SHAP (SHapley Additive exPlanations), LIME (Local Interpretable Model-agnostic Explanations).

- **Intrinsic Interpretability** (built into model design):

- Methods: Transparent model architecture (e.g., decision trees), Attention-based interpretability (leveraging attention mechanisms in transformers).

- **Human-Centered Explanations** (natural language outputs):

- Outputs: Narrative-based explanations (storytelling), Natural Language generation (automated text generation).

---

### Key Observations

1. **Hierarchical Structure**: Techniques are grouped into three distinct categories, each with specific examples.

2. **Bidirectional Flow**: Arrows show a one-to-many relationship (e.g., one technique maps to multiple examples).

3. **Technical Focus**: Examples emphasize tools (SHAP, LIME), architectural choices (transparent models), and output types (narratives).

---

### Interpretation

The flowchart highlights the **diverse strategies for enhancing LLM transparency**:

- **Post-Hoc Methods** (e.g., SHAP, LIME) are reactive, explaining outputs after the fact.

- **Intrinsic Approaches** (e.g., transparent architectures) prioritize interpretability during model design.

- **Human-Centered Explanations** bridge technical outputs with human understanding via natural language.

This structure underscores the importance of aligning explainability goals with model development stages (design vs. post-hoc analysis) and end-user needs (technical vs. layperson interpretations).