## Horizontal Bar Chart: Relationship Extraction Model Performance Comparison

### Overview

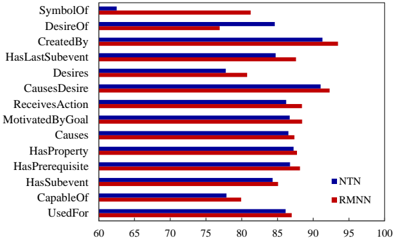

The image displays a horizontal bar chart comparing the performance of two models, labeled "NTN" (blue bars) and "RMNN" (red bars), across 14 rows of semantic relationship categories (13 distinct labels, since "UsedFor" appears twice). Performance is plotted on a scale from 60 to 100, likely representing accuracy or F1-score percentages.

### Components/Axes

* **Chart Type:** Horizontal grouped bar chart.

* **Y-Axis (Vertical):** Lists 14 semantic relationship categories. From top to bottom:

    1. SymbolOf
    2. DesireOf
    3. CreatedBy
    4. HasLastSubevent
    5. Desires
    6. CausesDesire
    7. ReceivesAction
    8. MotivatedByGoal
    9. UsedFor
    10. HasProperty
    11. HasPrerequisite
    12. HasSubevent
    13. CapableOf
    14. UsedFor (note: this label appears twice, at positions 9 and 14)

* **X-Axis (Horizontal):** Numerical scale from 60 to 100, with major tick marks every 5 units (60, 65, 70, 75, 80, 85, 90, 95, 100). No axis title is shown; the scale suggests a percentage-based performance metric (e.g., accuracy %).

* **Legend:** Located in the bottom-right corner of the chart area.

    * Blue square: **NTN**
    * Red square: **RMNN**

### Detailed Analysis

Performance values are approximate, read from the x-axis scale.

| Category | NTN (Blue) Approx. Value | RMNN (Red) Approx. Value | Performance Leader (Visual) |
| :--- | :--- | :--- | :--- |
| **SymbolOf** | ~63 | ~82 | RMNN |
| **DesireOf** | ~85 | ~77 | NTN |
| **CreatedBy** | ~92 | ~94 | RMNN |
| **HasLastSubevent** | ~85 | ~88 | RMNN |
| **Desires** | ~78 | ~81 | RMNN |
| **CausesDesire** | ~92 | ~93 | RMNN |
| **ReceivesAction** | ~87 | ~86 | NTN (slight) |
| **MotivatedByGoal** | ~88 | ~87 | NTN (slight) |
| **UsedFor** (1st) | ~88 | ~87 | NTN (slight) |
| **HasProperty** | ~88 | ~87 | NTN (slight) |
| **HasPrerequisite** | ~88 | ~87 | NTN (slight) |
| **HasSubevent** | ~85 | ~86 | RMNN |
| **CapableOf** | ~78 | ~80 | RMNN |
| **UsedFor** (2nd) | ~87 | ~86 | NTN (slight) |

**Trend Verification:**

* **NTN (Blue):** Spans a wide performance range, from its lowest score on "SymbolOf" (~63) to its highest on "CreatedBy" and "CausesDesire" (~92). Most of its scores (9 of 14) fall between 85 and 88.

* **RMNN (Red):** Also varies, but has a much higher floor. Its lowest score is on "DesireOf" (~77) and its highest on "CreatedBy" (~94). It mostly scores in the mid-to-high 80s (86 to 88), reaching the low 90s only on "CreatedBy" and "CausesDesire."

* **Comparison:** The two models split the 14 rows evenly: RMNN leads on 7 and NTN on the other 7. Six of NTN's leads are marginal (about 1 point); the exception is "DesireOf," where NTN leads by roughly 8 points. The largest gap overall is on "SymbolOf," where RMNN leads by approximately 19 points.
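The win counts, ranges, and largest gap above can be re-derived from the approximate table values. The following Python sketch assumes the eyeballed readings are exact; the names `READINGS` and `summarize` are illustrative and do not come from the source. Because every input is a visual estimate, one-point differences are best read as ties within reading error.

```python
# Approximate readings from the chart: (category, NTN, RMNN).
# These are eyeballed values, not the original paper's exact numbers.
READINGS = [
    ("SymbolOf", 63, 82),
    ("DesireOf", 85, 77),
    ("CreatedBy", 92, 94),
    ("HasLastSubevent", 85, 88),
    ("Desires", 78, 81),
    ("CausesDesire", 92, 93),
    ("ReceivesAction", 87, 86),
    ("MotivatedByGoal", 88, 87),
    ("UsedFor (1st)", 88, 87),
    ("HasProperty", 88, 87),
    ("HasPrerequisite", 88, 87),
    ("HasSubevent", 85, 86),
    ("CapableOf", 78, 80),
    ("UsedFor (2nd)", 87, 86),
]


def summarize(readings):
    """Return win counts, the largest gap, and per-model (min, max, mean)."""
    rmnn_wins = sum(1 for _, ntn, rmnn in readings if rmnn > ntn)
    ntn_wins = sum(1 for _, ntn, rmnn in readings if ntn > rmnn)
    # Row with the largest absolute difference between the two bars.
    gap_cat, gap_ntn, gap_rmnn = max(readings, key=lambda r: abs(r[1] - r[2]))
    stats = {}
    for idx, model in ((1, "NTN"), (2, "RMNN")):
        scores = [row[idx] for row in readings]
        stats[model] = (min(scores), max(scores), sum(scores) / len(scores))
    return {
        "rmnn_wins": rmnn_wins,
        "ntn_wins": ntn_wins,
        "largest_gap": (gap_cat, abs(gap_ntn - gap_rmnn)),
        "stats": stats,
    }


if __name__ == "__main__":
    summary = summarize(READINGS)
    print(summary["rmnn_wins"], summary["ntn_wins"])  # 7 7
    print(summary["largest_gap"])  # ('SymbolOf', 19)
    for model, (low, high, mean) in summary["stats"].items():
        print(f"{model}: min={low} max={high} mean={mean:.1f}")
```

Run as a script, it prints `7 7`, then `('SymbolOf', 19)`, then `NTN: min=63 max=92 mean=84.6` and `RMNN: min=77 max=94 mean=85.8`. On these readings, RMNN's average is only about one point higher; its clearer advantage is the higher floor.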

### Key Observations

1. **Largest Disparity:** The "SymbolOf" relationship shows the most dramatic difference, with RMNN significantly outperforming NTN.

2. **Consistent High Performers:** Both models achieve their highest scores on "CreatedBy" and "CausesDesire," suggesting these relationships may be easier for the models to learn or are better represented in the training data.

3. **Duplicate Category:** The category "UsedFor" appears twice on the y-axis (positions 9 and 14). Each model scores almost identically on the two instances (NTN ~88 vs. ~87, RMNN ~87 vs. ~86), which may indicate a labeling error in the original chart or an experimental condition not identified here.

4. **Tight Clustering:** In the middle block of categories, from "ReceivesAction" to "HasPrerequisite," the two models differ by only about 1 point, with NTN holding a marginal lead in all five.
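Observations 3 and 4 can be checked mechanically. This is a minimal sketch, assuming the y-axis labels were transcribed in order and the middle-block values in the table are exact readings; `duplicate_labels`, `max_gap`, `Y_LABELS`, and `MIDDLE_BLOCK` are hypothetical names introduced here for illustration.

```python
from collections import Counter

# Y-axis labels in top-to-bottom order, as transcribed from the chart.
Y_LABELS = [
    "SymbolOf", "DesireOf", "CreatedBy", "HasLastSubevent", "Desires",
    "CausesDesire", "ReceivesAction", "MotivatedByGoal", "UsedFor",
    "HasProperty", "HasPrerequisite", "HasSubevent", "CapableOf", "UsedFor",
]

# Approximate (NTN, RMNN) readings for positions 7-11,
# ReceivesAction through HasPrerequisite ("UsedFor" here is the 1st instance).
MIDDLE_BLOCK = {
    "ReceivesAction": (87, 86),
    "MotivatedByGoal": (88, 87),
    "UsedFor": (88, 87),
    "HasProperty": (88, 87),
    "HasPrerequisite": (88, 87),
}


def duplicate_labels(labels):
    """Map each label seen more than once to its 1-based positions."""
    counts = Counter(labels)
    return {
        label: [pos + 1 for pos, name in enumerate(labels) if name == label]
        for label, n in counts.items()
        if n > 1
    }


def max_gap(block):
    """Largest absolute NTN-minus-RMNN difference within a block."""
    return max(abs(ntn - rmnn) for ntn, rmnn in block.values())


if __name__ == "__main__":
    print(duplicate_labels(Y_LABELS))  # {'UsedFor': [9, 14]}
    print(max_gap(MIDDLE_BLOCK))  # 1
```

Using a `Counter` first keeps the duplicate check independent of where repeats occur, so the same helper would flag any other repeated label if the transcription were extended.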

### Interpretation

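This chart likely comes from a research paper evaluating neural network models on a semantic relationship classification task, possibly using a dataset such as ConceptNet, whose relation names (e.g., "UsedFor," "CapableOf," "HasPrerequisite") match the categories shown. NTN may stand for Neural Tensor Network, and RMNN possibly for Relational Memory Neural Network, but the chart does not define either acronym.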

The data suggest that **RMNN is the more robust model**: its weakest score (~77) is well above NTN's (~63), it wins the single largest gap ("SymbolOf," ~19 points), and its average across the 14 rows is roughly one point higher, even though the two models split the rows evenly. Its large advantage on an abstract relation like "SymbolOf" might indicate a better ability to capture non-taxonomic, associative knowledge.

The tight clustering of scores in the middle categories implies that for many common, possibly more concrete relationships (e.g., "UsedFor," "HasProperty"), the architectural differences between NTN and RMNN make little practical difference; NTN is marginally ahead on these. The models' peak performance on "CreatedBy" and "CausesDesire" could reflect the nature of the evaluation dataset, where causal and creation links are prominent and well-defined.

The duplicate "UsedFor" entry is an anomaly that requires context from the original source to interpret: it could represent a sub-category, a different experimental fold, or a simple error. Overall, the chart shows that both models are competent, while RMNN offers a notable improvement on specific, challenging relationship types, most clearly "SymbolOf."