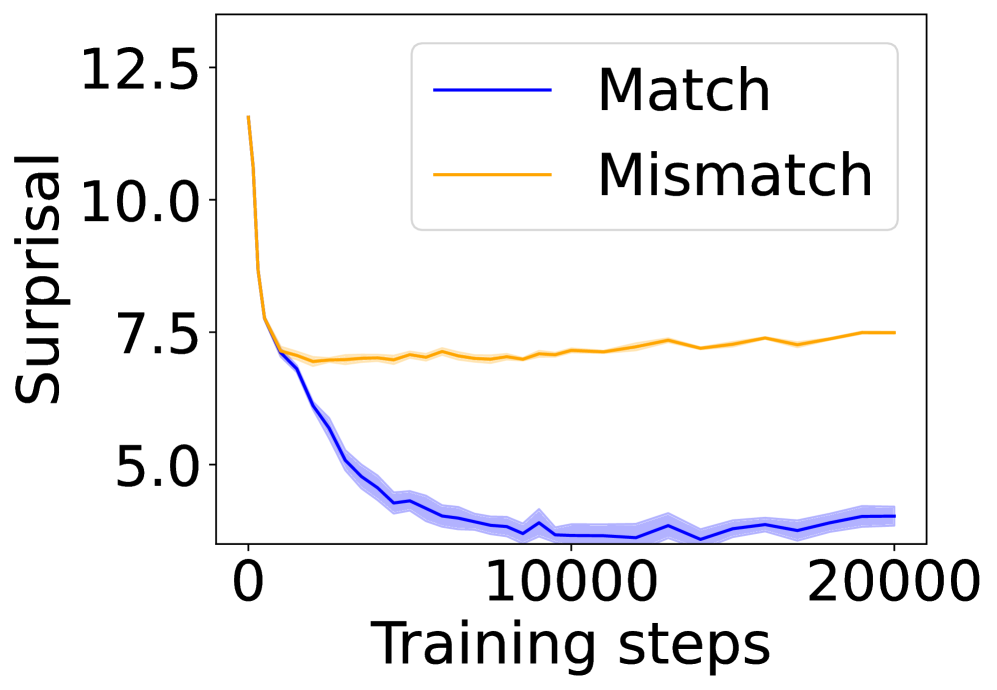

## Line Chart: Surprisal vs. Training Steps for Match and Mismatch Conditions

### Overview

The image is a line chart comparing the "Surprisal" metric over the course of "Training steps" for two distinct conditions: "Match" and "Mismatch". The chart demonstrates how the surprisal value evolves for each condition as training progresses.

### Components/Axes

* **Chart Type:** Line chart with shaded confidence intervals or standard deviation bands.
* **X-Axis:**
    * **Label:** "Training steps"
    * **Scale:** Linear scale from 0 to 20,000.
    * **Major Tick Marks:** 0, 10,000, 20,000.
* **Y-Axis:**
    * **Label:** "Surprisal"
    * **Scale:** Linear scale from approximately 4.0 to 12.5.
    * **Major Tick Marks:** 5.0, 7.5, 10.0, 12.5.
* **Legend:**
    * **Position:** Top-right corner of the plot area.
    * **Items:**
        1. **"Match"** - Represented by a solid blue line.
        2. **"Mismatch"** - Represented by a solid orange line.
* **Data Series:**
    1. **Blue Line ("Match"):** A solid blue line with a light blue shaded area around it, indicating variability (e.g., standard deviation or confidence interval).
    2. **Orange Line ("Mismatch"):** A solid orange line with no visible shaded area.

### Detailed Analysis

**Trend Verification & Data Points (Approximate):**

* **"Match" (Blue Line):**
    * **Trend:** The line exhibits a steep, downward slope initially, followed by a gradual flattening. It shows a strong decreasing trend in surprisal as training steps increase.
    * **Key Points:**
        * At Step 0: Surprisal ≈ 12.5 (starting point).
        * At Step ~2,500: Surprisal ≈ 7.5.
        * At Step ~5,000: Surprisal ≈ 5.0.
        * At Step 10,000: Surprisal ≈ 4.0 (reaches a plateau).
        * From Step 10,000 to 20,000: Surprisal fluctuates slightly between ≈ 4.0 and 4.5, showing a stable, low value.
    * **Shaded Area:** The light blue band is widest during the initial descent (steps 0 to 5,000), suggesting higher variance in measurements during rapid learning. It narrows significantly after step 10,000, indicating more consistent results as the model stabilizes.
* **"Mismatch" (Orange Line):**
    * **Trend:** The line shows a very different pattern. It starts lower than the "Match" line, dips slightly, and then exhibits a very gradual, slight upward trend over the long term.
    * **Key Points:**
        * At Step 0: Surprisal ≈ 7.5 (starting point, notably lower than "Match").
        * At Step ~2,500: Surprisal dips to its lowest point, ≈ 7.0.
        * From Step ~2,500 to 20,000: The line shows a slow, steady increase.
        * At Step 10,000: Surprisal ≈ 7.2.
        * At Step 20,000: Surprisal ≈ 7.5, returning to near its initial value.

### Key Observations

1. **Divergent Paths:** The two conditions start at different surprisal levels and follow different long-term trends. "Match" drops from ≈ 12.5 to ≈ 4.0, while "Mismatch" stays within ≈ 7.0 to 7.5 and drifts slightly upward after its early dip.

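2. **Crossover Point:** The lines cross early in training. At step ~2,500 "Match" (≈ 7.5) is still slightly above "Mismatch" (≈ 7.0), and by step ~5,000 it is well below (≈ 5.0), so the approximate key points place the crossover near step 3,000. Before this point, "Mismatch" has lower surprisal; after it, "Match" has substantially lower surprisal.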

3. **Plateau vs. Drift:** The "Match" condition converges to a stable, low-surprisal plateau by about step 10,000. The "Mismatch" condition shows no net improvement: after its minimum at step ~2,500 it drifts upward by roughly 0.5 over the remaining training steps.

4. **Variance:** The presence of a shaded band only for the "Match" line suggests that the "Mismatch" condition's results were either more consistent (less variable) or that the variance was not plotted for it.
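The crossover location can be sanity-checked against the approximate key points listed under Detailed Analysis. The following is a minimal sketch, assuming straight-line segments between those readings; the point lists, the helper names `interpolate` and `first_crossover`, and the 4.25 endpoint (midpoint of the reported 4.0 to 4.5 range) are illustrative assumptions, not values taken from the underlying data.

```python
# Approximate (step, surprisal) readings from the chart description.
# These are eyeballed values, not the underlying data.
MATCH = [(0, 12.5), (2500, 7.5), (5000, 5.0), (10000, 4.0), (20000, 4.25)]
MISMATCH = [(0, 7.5), (2500, 7.0), (10000, 7.2), (20000, 7.5)]


def interpolate(points, step):
    """Linearly interpolate a surprisal value at `step` from (step, value) pairs."""
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if x0 <= step <= x1:
            return y0 + (y1 - y0) * (step - x0) / (x1 - x0)
    raise ValueError("step outside the range of the key points")


def first_crossover(a, b, lo, hi, tol=1.0):
    """Bisect for a step in [lo, hi] where series `a` and `b` are equal."""
    def diff(step):
        return interpolate(a, step) - interpolate(b, step)

    if diff(lo) * diff(hi) > 0:
        raise ValueError("no sign change between lo and hi")
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if diff(lo) * diff(mid) <= 0:
            hi = mid  # the sign change lies in the lower half
        else:
            lo = mid  # the sign change lies in the upper half
    return (lo + hi) / 2


crossover_step = first_crossover(MATCH, MISMATCH, 0, 20000)
print(f"Estimated crossover near step {crossover_step:.0f}")
```

With these readings the estimate lands close to step 3,000. Because the inputs are approximate, the result is only a rough location and not a measurement.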

### Interpretation

This chart likely visualizes the performance of a machine learning model, possibly a language model, during training. "Surprisal" is a common metric in information theory and NLP: it is the negative log-probability that the model assigns to an observed data point (e.g., a word), so it measures how unexpected that data point is under the model's current predictions. Lower surprisal indicates better predictive performance.
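As a concrete illustration of the metric, surprisal is conventionally computed as the negative logarithm of the probability assigned to the observed outcome. The sketch below assumes the model's per-token probabilities are available as plain floats; the function names and the choice of base 2 (bits) as the default are assumptions, since the chart does not state its units.

```python
import math


def surprisal(probability, base=2.0):
    """Return -log_base(probability), the surprisal of one observed outcome."""
    if not 0.0 < probability <= 1.0:
        raise ValueError("probability must be in (0, 1]")
    return -math.log(probability) / math.log(base)


def mean_surprisal(probabilities, base=2.0):
    """Average surprisal over the probabilities assigned to observed tokens."""
    probabilities = list(probabilities)
    if not probabilities:
        raise ValueError("need at least one probability")
    return sum(surprisal(p, base) for p in probabilities) / len(probabilities)


# A token the model considers a coin flip carries 1 bit of surprisal;
# a token given probability 1/4 carries 2 bits.
print(surprisal(0.5), surprisal(0.25))
print(mean_surprisal([0.5, 0.25]))
```

A curve such as the one described here would typically plot an average of this kind at each training checkpoint, though the chart itself does not say how the values were aggregated.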

* **"Match" Condition:** Likely represents data that is **in-distribution** or consistent with the training objective. The steep drop in surprisal, from ≈ 12.5 to ≈ 4.0 over the first 10,000 steps, suggests the model is learning the patterns in this data, and its predictions then stabilize at low surprisal.

* **"Mismatch" Condition:** May represent **out-of-distribution** data, adversarial examples, or data from a different domain than the training data. The initial dip might reflect a brief period of adaptation, but the subsequent flat or slightly rising trend suggests the model **does not learn** to predict this data. Surprisal stays at ≈ 7.0 to 7.5 throughout and does not improve with further training steps, which is consistent with poor generalization to this condition.

**Conclusion:** By the end of training, the chart shows a gap of roughly 3.0 to 3.5 surprisal units between the matched and mismatched conditions. This is consistent with a model that learns from consistent data but has a limitation or failure mode under distributional shift. The "Mismatch" line's slight upward drift could indicate a form of negative transfer or interference, where prolonged training slightly degrades predictions on the mismatched data, although a drift of ≈ 0.5 read off the chart is weak evidence on its own.
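To give the end-of-training gap a more intuitive scale: because surprisal is a negative log-probability, a gap of Δ surprisal units corresponds to a probability ratio of base^Δ (a geometric-mean ratio if the plotted values are averages). The sketch below uses the approximate final readings from the chart (≈ 4.0 to 4.5 for "Match", ≈ 7.5 for "Mismatch"). The chart does not state the logarithm base, so both bits and nats are shown as assumptions, and `probability_ratio` is an illustrative helper name.

```python
import math

# Approximate end-of-training readings from the chart description.
MATCH_FINAL_RANGE = (4.0, 4.5)
MISMATCH_FINAL = 7.5


def probability_ratio(surprisal_gap, base):
    """Probability ratio implied by a surprisal gap measured in log-`base` units."""
    return base ** surprisal_gap


gap_low = MISMATCH_FINAL - MATCH_FINAL_RANGE[1]   # smallest plausible gap
gap_high = MISMATCH_FINAL - MATCH_FINAL_RANGE[0]  # largest plausible gap

# The unit is unknown, so compute the ratio under both common conventions.
ratios = {
    "bits": (probability_ratio(gap_low, 2.0), probability_ratio(gap_high, 2.0)),
    "nats": (probability_ratio(gap_low, math.e), probability_ratio(gap_high, math.e)),
}

for unit, (low, high) in ratios.items():
    print(f"{unit}: matched items are about {low:.1f}x to {high:.1f}x more probable")
```

Under either convention the matched items end up roughly an order of magnitude more probable under the model than the mismatched ones, which is the practical meaning of the gap described above.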