## Diagram: Challenges and Research Directions of XAI in the Deployment Phase

### Overview

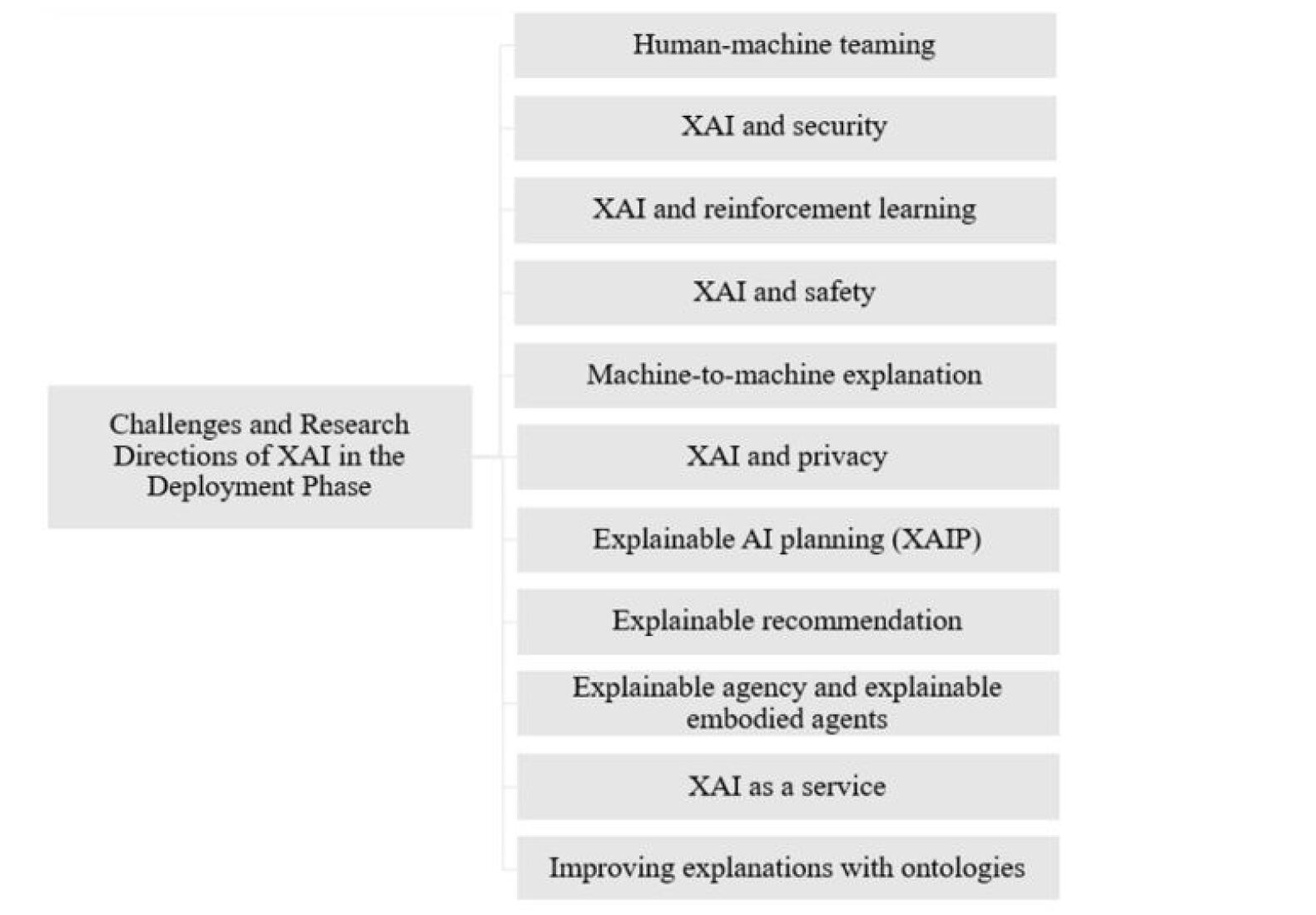

The image is a tree diagram with a single level of branching that illustrates the key challenges and research directions associated with deploying Explainable AI (XAI) systems. A central topic on the left branches out into a vertical list of specific sub-topics on the right.

### Components

* **Central Node (Left):** A single rectangular box containing the main title.

* **Branching Nodes (Right):** A vertical column of eleven rectangular boxes, each containing a specific research direction or challenge.

* **Connections:** Thin, gray lines connect the central node to each of the eleven branching nodes, indicating a parent-child relationship.

* **Layout:** The central node is positioned on the left side of the image, vertically centered. The list of branching nodes is aligned to the right, stacked vertically with even spacing. The entire diagram is set against a plain white background.

### Detailed Analysis / Content Details

**Central Node Text:**

* "Challenges and Research Directions of XAI in the Deployment Phase"

**Branching Nodes Text (listed from top to bottom):**

1. Human-machine teaming

2. XAI and security

3. XAI and reinforcement learning

4. XAI and safety

5. Machine-to-machine explanation

6. XAI and privacy

7. Explainable AI planning (XAIP)

8. Explainable recommendation

9. Explainable agency and explainable embodied agents

10. XAI as a service

11. Improving explanations with ontologies
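The structure described above can be captured as a small data structure. The following is a minimal sketch, assuming a plain dictionary that maps the central node to its children; the names `ROOT`, `XAI_DEPLOYMENT_TREE`, and `depth` are illustrative and do not come from the diagram.

```python
# Illustrative encoding of the diagram as a one-level tree.
# The node labels are copied from the diagram; every identifier is an assumption.
ROOT = "Challenges and Research Directions of XAI in the Deployment Phase"

XAI_DEPLOYMENT_TREE = {
    ROOT: [
        "Human-machine teaming",
        "XAI and security",
        "XAI and reinforcement learning",
        "XAI and safety",
        "Machine-to-machine explanation",
        "XAI and privacy",
        "Explainable AI planning (XAIP)",
        "Explainable recommendation",
        "Explainable agency and explainable embodied agents",
        "XAI as a service",
        "Improving explanations with ontologies",
    ]
}


def depth(tree, node):
    """Return the number of levels below `node` (0 for a leaf)."""
    children = tree.get(node, [])
    if not children:
        return 0
    return 1 + max(depth(tree, child) for child in children)


children = XAI_DEPLOYMENT_TREE[ROOT]
print(len(children))                     # number of branching nodes
print(depth(XAI_DEPLOYMENT_TREE, ROOT))  # levels below the central node
```

With this encoding, the list order matches the top-to-bottom order in the image, and a depth of one reflects that no branching node has children of its own.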

### Key Observations

* The diagram presents a flat list of topics with no sub-grouping. All eleven items connect directly to the central node, which suggests they are treated as parallel facets of XAI deployment; the diagram itself does not rank them.

* The topics range from broad, cross-cutting concerns (e.g., security, safety, privacy) to specific technical sub-fields (e.g., reinforcement learning, AI planning, recommendation systems).

* "Human-machine teaming" is listed first, which may signal the importance of human interaction in XAI, although the diagram does not indicate that the order is a ranking.

* The final item, "Improving explanations with ontologies," points to a specific methodological approach for enhancing explanation quality.

### Interpretation

This diagram serves as a conceptual map or taxonomy for the field of XAI when it moves from development to real-world deployment. It suggests that successful deployment is not a single challenge but a multifaceted problem space.

The list of topics suggests that XAI research in the deployment phase must address at least four kinds of problem:

1. **Integration Challenges:** How XAI interacts with and supports other critical system properties like security, safety, and privacy.

2. **Application-Specific Challenges:** Tailoring explanations for different AI paradigms (reinforcement learning, planning) and applications (recommendation, embodied agents).

3. **Interaction Paradigms:** Defining new modes of explanation, such as explanations exchanged between machines ("Machine-to-machine explanation") or explanation capabilities offered as a service ("XAI as a service").

4. **Foundational Improvements:** Developing core techniques, like using ontologies, to make explanations more robust and meaningful.
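The four groups above can be checked against the eleven node labels. The sketch below is one possible reading, not part of the diagram: the assignment of labels to groups is inferred from the wording of each group, and the names `TOPICS`, `GROUPS`, `assigned`, `unassigned`, and `unknown` are illustrative.

```python
# Node labels as they appear in the diagram, top to bottom.
TOPICS = [
    "Human-machine teaming",
    "XAI and security",
    "XAI and reinforcement learning",
    "XAI and safety",
    "Machine-to-machine explanation",
    "XAI and privacy",
    "Explainable AI planning (XAIP)",
    "Explainable recommendation",
    "Explainable agency and explainable embodied agents",
    "XAI as a service",
    "Improving explanations with ontologies",
]

# Assumed mapping from each interpretive group to the labels it mentions.
GROUPS = {
    "Integration challenges": [
        "XAI and security",
        "XAI and safety",
        "XAI and privacy",
    ],
    "Application-specific challenges": [
        "XAI and reinforcement learning",
        "Explainable AI planning (XAIP)",
        "Explainable recommendation",
        "Explainable agency and explainable embodied agents",
    ],
    "Interaction paradigms": [
        "Machine-to-machine explanation",
        "XAI as a service",
    ],
    "Foundational improvements": [
        "Improving explanations with ontologies",
    ],
}

# Labels covered by at least one group.
assigned = {topic for members in GROUPS.values() for topic in members}

# Diagram labels that no group mentions, in diagram order.
unassigned = [topic for topic in TOPICS if topic not in assigned]

# Group members that do not match any diagram label (guards against typos).
unknown = sorted(assigned - set(TOPICS))

print(unassigned)
print(unknown)
```

Under this reading, ten of the eleven labels fall into a group and "Human-machine teaming" is left over, so the four groups should be read as a partial summary rather than a complete partition of the diagram.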

The diagram draws no links between the eleven topics, but several of them plausibly overlap. For instance, "Explainable agency" likely relates to "Human-machine teaming," and "XAI and security" could intersect with "XAI and privacy." The diagram can serve as a framework for organizing research efforts, identifying gaps, and gauging the breadth of considerations involved in making AI systems transparent, trustworthy, and effective in operational environments.