EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


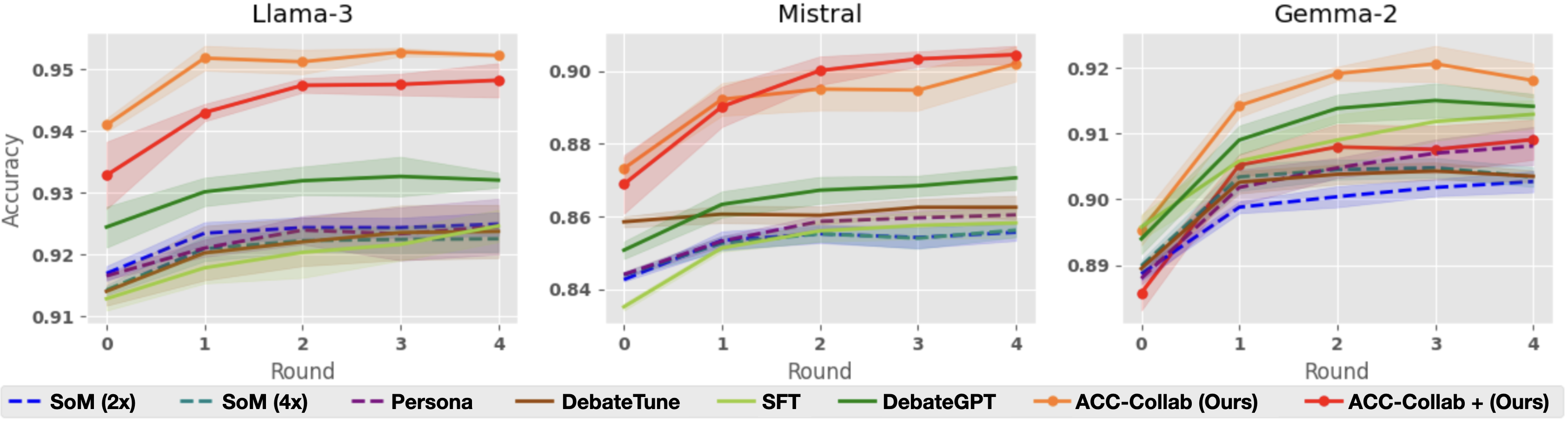

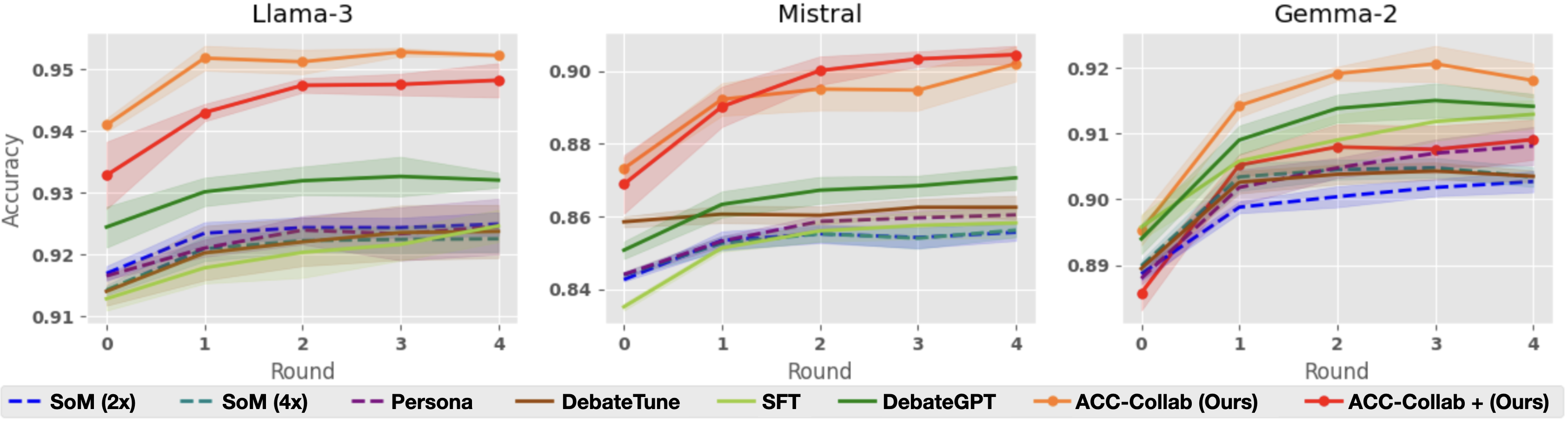

## Line Charts: Model Accuracy vs. Round

### Overview

The image presents three line charts comparing the accuracy of different models (Llama-3, Mistral, and Gemma-2) across multiple rounds of interaction or training. Each chart displays the performance of several methods, including "SoM (2x)", "SoM (4x)", "Persona", "DebateTune", "SFT", "DebateGPT", "ACC-Collab (Ours)", and "ACC-Collab + (Ours)". The x-axis represents the round number (0 to 4), and the y-axis represents the accuracy score. Each line represents a different method, and shaded areas around the lines indicate the confidence interval or variability in the results.

### Components/Axes

* **Titles:** The charts are titled "Llama-3" (top-left), "Mistral" (top-center), and "Gemma-2" (top-right).

* **X-axis:** Labeled "Round", with markers at 0, 1, 2, 3, and 4.

* **Y-axis:** Labeled "Accuracy", with different scales for each chart:

  * Llama-3: 0.91 to 0.95

  * Mistral: 0.84 to 0.90

  * Gemma-2: 0.89 to 0.92

* **Legend:** Located at the bottom of the image, mapping line colors/styles to methods:

  * Blue dashed line: SoM (2x)

  * Teal dashed line: SoM (4x)

  * Purple dashed line: Persona

  * Brown solid line: DebateTune

  * Light Green solid line: SFT

  * Dark Green solid line: DebateGPT

  * Orange solid line: ACC-Collab (Ours)

  * Red solid line: ACC-Collab + (Ours)

### Detailed Analysis

#### Llama-3 Chart

* **ACC-Collab + (Ours) (Red):** Starts at approximately 0.933 at round 0, increases to about 0.947 by round 1, and then plateaus around 0.948-0.949 for rounds 2-4.

* **ACC-Collab (Ours) (Orange):** Starts at approximately 0.941 at round 0, increases to about 0.952 by round 1, and then plateaus around 0.953-0.954 for rounds 2-4.

* **DebateGPT (Dark Green):** Starts at approximately 0.916 at round 0, increases to about 0.930 by round 1, and then plateaus around 0.932-0.933 for rounds 2-4.

* **SoM (2x) (Blue Dashed):** Starts at approximately 0.917 at round 0, increases to about 0.924 by round 1, and then plateaus around 0.925-0.926 for rounds 2-4.

* **SoM (4x) (Teal Dashed):** Starts at approximately 0.918 at round 0, increases to about 0.922 by round 1, and then plateaus around 0.923-0.924 for rounds 2-4.

* **Persona (Purple Dashed):** Starts at approximately 0.918 at round 0, increases to about 0.924 by round 1, and then plateaus around 0.925-0.926 for rounds 2-4.

* **DebateTune (Brown):** Starts at approximately 0.916 at round 0, increases to about 0.920 by round 1, and then plateaus around 0.921-0.922 for rounds 2-4.

* **SFT (Light Green):** Starts at approximately 0.914 at round 0, increases to about 0.921 by round 1, and then plateaus around 0.922-0.923 for rounds 2-4.
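The Llama-3 readings above can be tabulated to compare how much each method gains over the rounds. The following is a minimal sketch, assuming the approximate values quoted in the list are taken at face value and that the midpoint of each stated plateau range stands in for rounds 2-4; the names `LLAMA3` and `gain_table` are illustrative and not from the source.

```python
# Approximate Llama-3 readings transcribed from the list above:
# (round-0 value, round-1 value, (plateau low, plateau high) for rounds 2-4).
LLAMA3 = {
    "ACC-Collab + (Ours)": (0.933, 0.947, (0.948, 0.949)),
    "ACC-Collab (Ours)": (0.941, 0.952, (0.953, 0.954)),
    "DebateGPT": (0.916, 0.930, (0.932, 0.933)),
    "SoM (2x)": (0.917, 0.924, (0.925, 0.926)),
    "SoM (4x)": (0.918, 0.922, (0.923, 0.924)),
    "Persona": (0.918, 0.924, (0.925, 0.926)),
    "DebateTune": (0.916, 0.920, (0.921, 0.922)),
    "SFT": (0.914, 0.921, (0.922, 0.923)),
}


def gain_table(readings):
    """Return {method: (round 0->1 gain, round 0->plateau gain)}, rounded to 4 places."""
    table = {}
    for method, (start, round1, (low, high)) in readings.items():
        # Assumption: the plateau is summarized by the midpoint of its stated range.
        plateau_mid = (low + high) / 2
        table[method] = (round(round1 - start, 4), round(plateau_mid - start, 4))
    return table


gains = gain_table(LLAMA3)
# Sort methods by total gain, largest first.
ranked = sorted(gains, key=lambda m: gains[m][1], reverse=True)
for method in ranked:
    first, total = gains[method]
    print(f"{method:22s} round 0->1: {first:+.4f}   round 0->plateau: {total:+.4f}")
```

Because the inputs are visual estimates, the resulting gains are only as reliable as the readings themselves. A method that starts high, such as ACC-Collab (Ours), can show a smaller gain than a method that starts lower while still ending with the highest accuracy.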

#### Mistral Chart

* **ACC-Collab + (Ours) (Red):** Starts at approximately 0.872 at round 0, increases to about 0.899 by round 2, and then plateaus around 0.901-0.902 for rounds 3-4.

* **ACC-Collab (Ours) (Orange):** Starts at approximately 0.877 at round 0, increases to about 0.895 by round 2, and then plateaus around 0.896-0.897 for rounds 3-4.

* **DebateGPT (Dark Green):** Starts at approximately 0.859 at round 0, increases to about 0.871 by round 2, and then plateaus around 0.872-0.873 for rounds 3-4.

* **SoM (2x) (Blue Dashed):** Starts at approximately 0.842 at round 0, increases to about 0.857 by round 2, and then plateaus around 0.858-0.859 for rounds 3-4.

* **SoM (4x) (Teal Dashed):** Starts at approximately 0.860 at round 0, increases to about 0.863 by round 2, and then plateaus around 0.864-0.865 for rounds 3-4.

* **Persona (Purple Dashed):** Starts at approximately 0.860 at round 0, increases to about 0.863 by round 2, and then plateaus around 0.864-0.865 for rounds 3-4.

* **DebateTune (Brown):** Starts at approximately 0.859 at round 0, increases to about 0.860 by round 2, and then plateaus around 0.861-0.862 for rounds 3-4.

* **SFT (Light Green):** Starts at approximately 0.837 at round 0, increases to about 0.857 by round 2, and then plateaus around 0.858-0.859 for rounds 3-4.

#### Gemma-2 Chart

* **ACC-Collab + (Ours) (Red):** Starts at approximately 0.890 at round 0, increases to about 0.906 by round 1, and then plateaus around 0.907-0.908 for rounds 2-4.

* **ACC-Collab (Ours) (Orange):** Starts at approximately 0.895 at round 0, increases to about 0.918 by round 2, and then decreases to about 0.916 for round 4.

* **DebateGPT (Dark Green):** Starts at approximately 0.898 at round 0, increases to about 0.913 by round 2, and then plateaus around 0.914-0.915 for rounds 3-4.

* **SoM (2x) (Blue Dashed):** Starts at approximately 0.892 at round 0, increases to about 0.903 by round 2, and then plateaus around 0.904-0.905 for rounds 3-4.

* **SoM (4x) (Teal Dashed):** Starts at approximately 0.901 at round 0, increases to about 0.905 by round 2, and then plateaus around 0.906-0.907 for rounds 3-4.

* **Persona (Purple Dashed):** Starts at approximately 0.890 at round 0, increases to about 0.908 by round 2, and then plateaus around 0.909-0.910 for rounds 3-4.

* **DebateTune (Brown):** Starts at approximately 0.888 at round 0, increases to about 0.904 by round 2, and then plateaus around 0.905-0.906 for rounds 3-4.

* **SFT (Light Green):** Starts at approximately 0.899 at round 0, increases to about 0.909 by round 2, and then plateaus around 0.910-0.911 for rounds 3-4.

### Key Observations

* **Initial Improvement:** Most methods show a significant increase in accuracy from round 0 to round 1 or 2.

* **Plateau Effect:** After the initial improvement, the accuracy tends to plateau, indicating diminishing returns from further rounds.

* **ACC-Collab Superiority:** The "ACC-Collab (Ours)" and "ACC-Collab + (Ours)" methods generally outperform the other methods across all three models.

* **Model-Specific Performance:** The absolute accuracy values differ across the models, with Llama-3 generally achieving higher accuracy scores than Mistral and Gemma-2.
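The plateau effect noted above can be made concrete with a small check that finds the first round after which accuracy stops changing by more than a tolerance. This is a sketch under stated assumptions: the function name `plateau_round` and the tolerance of 0.002 are illustrative choices, and the per-round values for rounds 2-4 are filled in from within the 0.948-0.949 range quoted for ACC-Collab + on Llama-3, since the description does not give one value per round.

```python
def plateau_round(series, tol=0.002):
    """Return the first round after which no round-to-round change exceeds tol."""
    if not series:
        raise ValueError("series must contain at least one value")
    for start in range(len(series)):
        tail = series[start:]
        # A single-element tail has no changes, so the last round always qualifies.
        if all(abs(b - a) <= tol for a, b in zip(tail, tail[1:])):
            return start


# Llama-3, ACC-Collab + (Ours): rounds 0 and 1 as quoted above;
# rounds 2-4 are assumed values inside the stated plateau range.
llama3_acc_collab_plus = [0.933, 0.947, 0.948, 0.949, 0.949]
print(plateau_round(llama3_acc_collab_plus))
```

The choice of tolerance matters: a stricter value pushes the detected plateau to a later round, so any claim about diminishing returns should state the threshold used.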

### Interpretation

The charts demonstrate the impact of different methods on the accuracy of three language models (Llama-3, Mistral, and Gemma-2) over multiple rounds. The "ACC-Collab" methods appear to be the most effective in improving accuracy, suggesting that collaborative approaches enhance model performance. The plateau effect observed after a few rounds indicates that the models may reach a point of diminishing returns with the given methods and data. The differences in absolute accuracy across the models highlight the inherent variations in their architectures and training data. The shaded areas around the lines provide insights into the variability of the results, which is crucial for assessing the robustness of the methods.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it



## Line Chart: Accuracy vs. Round for Different Models and Training Methods

### Overview

The image presents three line charts, each displaying accuracy for one language model (Llama-3, Mistral, or Gemma-2) across rounds 0 through 4 of evaluation. Each chart includes multiple lines representing different training methods or configurations applied to the respective model. The y-axis represents accuracy, and the x-axis represents the round number.

### Components/Axes

* **X-axis:** "Round" with values 0, 1, 2, 3, and 4.

* **Y-axis:** "Accuracy" with a scale ranging from approximately 0.84 to 0.95.

* **Models (Charts):** Llama-3, Mistral, Gemma-2.

* **Training Methods/Configurations (Legend):**

  * SoM (2x) - Dashed dark blue line

  * SoM (4x) - Dashed purple line

  * Persona - Solid purple line

  * DebateTune - Solid green line

  * SFT - Solid light green line

  * DebateGPT - Solid dark green line

  * ACC-Collab (Ours) - Solid orange line

  * ACC-Collab + (Ours) - Dashed orange line

### Detailed Analysis or Content Details

**Llama-3 Chart:**

* **ACC-Collab (Ours):** Starts at approximately 0.945 accuracy at round 0, increases slightly to around 0.947 at round 1, then decreases to approximately 0.943 at round 4.

* **ACC-Collab + (Ours):** Starts at approximately 0.925 accuracy at round 0, increases to around 0.935 at round 1, then remains relatively stable around 0.932-0.934 for rounds 2-4.

* **SoM (2x):** Starts at approximately 0.922 accuracy at round 0, increases to around 0.926 at round 1, then remains relatively stable around 0.924-0.927 for rounds 2-4.

* **SoM (4x):** Starts at approximately 0.918 accuracy at round 0, increases to around 0.922 at round 1, then remains relatively stable around 0.920-0.923 for rounds 2-4.

* **Persona:** Starts at approximately 0.920 accuracy at round 0, increases to around 0.924 at round 1, then remains relatively stable around 0.922-0.925 for rounds 2-4.

* **DebateTune:** Starts at approximately 0.924 accuracy at round 0, increases to around 0.928 at round 1, then remains relatively stable around 0.926-0.929 for rounds 2-4.

* **SFT:** Starts at approximately 0.922 accuracy at round 0, increases to around 0.926 at round 1, then remains relatively stable around 0.924-0.927 for rounds 2-4.

* **DebateGPT:** Starts at approximately 0.920 accuracy at round 0, increases to around 0.924 at round 1, then remains relatively stable around 0.922-0.925 for rounds 2-4.

**Mistral Chart:**

* **ACC-Collab (Ours):** Starts at approximately 0.885 accuracy at round 0, increases to around 0.90 at round 1, then decreases to approximately 0.895 at round 4.

* **ACC-Collab + (Ours):** Starts at approximately 0.855 accuracy at round 0, increases to around 0.87 at round 1, then remains relatively stable around 0.865-0.875 for rounds 2-4.

* **SoM (2x):** Starts at approximately 0.850 accuracy at round 0, increases to around 0.860 at round 1, then remains relatively stable around 0.855-0.865 for rounds 2-4.

* **SoM (4x):** Starts at approximately 0.840 accuracy at round 0, increases to around 0.850 at round 1, then remains relatively stable around 0.845-0.855 for rounds 2-4.

* **Persona:** Starts at approximately 0.845 accuracy at round 0, increases to around 0.855 at round 1, then remains relatively stable around 0.850-0.855 for rounds 2-4.

* **DebateTune:** Starts at approximately 0.855 accuracy at round 0, increases to around 0.865 at round 1, then remains relatively stable around 0.860-0.865 for rounds 2-4.

* **SFT:** Starts at approximately 0.850 accuracy at round 0, increases to around 0.860 at round 1, then remains relatively stable around 0.855-0.865 for rounds 2-4.

* **DebateGPT:** Starts at approximately 0.845 accuracy at round 0, increases to around 0.855 at round 1, then remains relatively stable around 0.850-0.855 for rounds 2-4.

**Gemma-2 Chart:**

* **ACC-Collab (Ours):** Starts at approximately 0.915 accuracy at round 0, decreases to around 0.910 at round 1, then remains relatively stable around 0.912-0.915 for rounds 2-4.

* **ACC-Collab + (Ours):** Starts at approximately 0.895 accuracy at round 0, increases to around 0.905 at round 1, then remains relatively stable around 0.900-0.905 for rounds 2-4.

* **SoM (2x):** Starts at approximately 0.890 accuracy at round 0, increases to around 0.900 at round 1, then remains relatively stable around 0.895-0.900 for rounds 2-4.

* **SoM (4x):** Starts at approximately 0.885 accuracy at round 0, increases to around 0.895 at round 1, then remains relatively stable around 0.890-0.895 for rounds 2-4.

* **Persona:** Starts at approximately 0.890 accuracy at round 0, increases to around 0.900 at round 1, then remains relatively stable around 0.895-0.900 for rounds 2-4.

* **DebateTune:** Starts at approximately 0.895 accuracy at round 0, increases to around 0.905 at round 1, then remains relatively stable around 0.900-0.905 for rounds 2-4.

* **SFT:** Starts at approximately 0.890 accuracy at round 0, increases to around 0.900 at round 1, then remains relatively stable around 0.895-0.900 for rounds 2-4.

* **DebateGPT:** Starts at approximately 0.885 accuracy at round 0, increases to around 0.895 at round 1, then remains relatively stable around 0.890-0.895 for rounds 2-4.
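The round-0 and round-1 readings listed above for the two ACC-Collab variants can be compared directly. The following is a minimal sketch that computes the margin of the base variant over the "+" variant in each chart, assuming the approximate values in this description are taken at face value; `READINGS` and `margins` are illustrative names, not from the source.

```python
# Round-0 and round-1 readings for the two ACC-Collab variants,
# transcribed from the lists above (approximate chart readings).
READINGS = {
    "Llama-3": {"ACC-Collab (Ours)": (0.945, 0.947), "ACC-Collab + (Ours)": (0.925, 0.935)},
    "Mistral": {"ACC-Collab (Ours)": (0.885, 0.900), "ACC-Collab + (Ours)": (0.855, 0.870)},
    "Gemma-2": {"ACC-Collab (Ours)": (0.915, 0.910), "ACC-Collab + (Ours)": (0.895, 0.905)},
}


def margins(readings):
    """Return {model: (round-0 margin, round-1 margin)}, base minus '+' variant."""
    result = {}
    for model, methods in readings.items():
        base = methods["ACC-Collab (Ours)"]
        plus = methods["ACC-Collab + (Ours)"]
        result[model] = tuple(round(b - p, 4) for b, p in zip(base, plus))
    return result


for model, (round0, round1) in margins(READINGS).items():
    print(f"{model:8s} round 0: {round0:+.3f}   round 1: {round1:+.3f}")
```

A positive margin means the base variant reads higher than the "+" variant at that round. Margins this small are within the precision of reading values off a chart, so they indicate ordering rather than effect size.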

### Key Observations

* "ACC-Collab (Ours)" generally achieves the highest accuracy across all three models, especially in the Llama-3 chart.

* By the values listed above, the "ACC-Collab + (Ours)" method sits below the base "ACC-Collab (Ours)" method in all three charts, with the gap narrowing to about 0.005 at round 1 in the Gemma-2 chart.

* The accuracy of most methods tends to plateau after round 1, with minimal changes observed in subsequent rounds.

* Mistral consistently shows lower overall accuracy compared to Llama-3 and Gemma-2.

### Interpretation

The charts indicate that the "ACC-Collab" training method is effective at improving the accuracy of language models: by the values listed above, both variants sit at or above the baselines in every round. The plateauing accuracy after round 1 suggests that the models may be reaching a point of diminishing returns with further rounds using these methods. The lower accuracy observed for Mistral could indicate that this model requires different training strategies or is inherently less performant on the specific task being evaluated. The consistent performance of SoM, Persona, DebateTune, SFT, and DebateGPT suggests they provide a stable baseline but do not reach the performance of the base ACC-Collab method. The differences in performance across models highlight the importance of tailoring training methods to the specific characteristics of each model.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Charts: Performance of Various Methods Across Rounds for Three AI Models

### Overview

The image displays three horizontally arranged line charts, each comparing the performance (accuracy) of eight different methods over five rounds (0 to 4) for a specific large language model: Llama-3, Mistral, and Gemma-2. A shared legend is positioned at the bottom of the entire figure. The charts illustrate how accuracy evolves with iterative rounds for each method, with shaded regions indicating confidence intervals or variance.

### Components/Axes

* **Chart Titles (Top Center of each subplot):** "Llama-3", "Mistral", "Gemma-2".

* **X-Axis (Bottom of each subplot):** Labeled "Round". Markers at integer values: 0, 1, 2, 3, 4.

* **Y-Axis (Left of each subplot):** Labeled "Accuracy". The scale differs for each model:

  * **Llama-3:** Range approximately 0.91 to 0.955.

  * **Mistral:** Range approximately 0.84 to 0.905.

  * **Gemma-2:** Range approximately 0.89 to 0.925.

* **Legend (Bottom, spanning all charts):** Contains eight entries, each with a distinct line style/color and label:

  1. `-- SoM (2x)` (Blue, dashed line)

  2. `-- SoM (4x)` (Teal, dashed line)

  3. `-- Persona` (Purple, dashed line)

  4. `— DebateTune` (Brown, solid line)

  5. `— SFT` (Light green, solid line)

  6. `— DebateGPT` (Dark green, solid line)

  7. `—●— ACC-Collab (Ours)` (Orange, solid line with circle markers)

  8. `—●— ACC-Collab + (Ours)` (Red, solid line with circle markers)
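The legend above can be captured as a small lookup table, which is convenient when redrawing or cross-checking the figure. This is a sketch only: the key names `color`, `linestyle`, and `marker` follow common plotting keyword conventions, the color names are rough guesses at the shades described, and `LEGEND_STYLES` and `methods_with` are hypothetical names.

```python
# Legend entries in the order listed above. The exact shades are guesses
# based on the color words in the description.
LEGEND_STYLES = {
    "SoM (2x)": {"color": "blue", "linestyle": "--", "marker": None},
    "SoM (4x)": {"color": "teal", "linestyle": "--", "marker": None},
    "Persona": {"color": "purple", "linestyle": "--", "marker": None},
    "DebateTune": {"color": "brown", "linestyle": "-", "marker": None},
    "SFT": {"color": "lightgreen", "linestyle": "-", "marker": None},
    "DebateGPT": {"color": "darkgreen", "linestyle": "-", "marker": None},
    "ACC-Collab (Ours)": {"color": "orange", "linestyle": "-", "marker": "o"},
    "ACC-Collab + (Ours)": {"color": "red", "linestyle": "-", "marker": "o"},
}


def methods_with(styles, **wanted):
    """Return the methods whose style matches every key/value pair in wanted."""
    return [
        method
        for method, style in styles.items()
        if all(style.get(key) == value for key, value in wanted.items())
    ]


print(methods_with(LEGEND_STYLES, linestyle="--"))  # dashed-line methods
print(methods_with(LEGEND_STYLES, marker="o"))      # methods drawn with circle markers
```

Grouping by line style reproduces the visual split in the legend: three dashed entries, three plain solid entries, and the two proposed methods distinguished by circle markers.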

### Detailed Analysis

**Llama-3 Chart:**

* **Trend:** All methods show a general upward trend or plateau from Round 0 to Round 4.

* **Top Performers:** `ACC-Collab (Ours)` (orange) starts highest (~0.941) and peaks at Round 3 (~0.953). `ACC-Collab + (Ours)` (red) starts lower (~0.933) but rises sharply to converge near the orange line by Round 2 (~0.948), maintaining a slight lead thereafter.

* **Mid-tier:** `DebateGPT` (dark green) shows steady improvement from ~0.925 to ~0.932.

* **Lower Tier:** The remaining methods (`SoM (2x)`, `SoM (4x)`, `Persona`, `DebateTune`, `SFT`) are clustered between ~0.915 and ~0.925, showing modest gains. `SFT` (light green) appears to be the lowest-performing method overall.

**Mistral Chart:**

* **Trend:** Similar upward trajectory, with the top two methods showing the most dramatic improvement.

* **Top Performers:** `ACC-Collab + (Ours)` (red) and `ACC-Collab (Ours)` (orange) start around 0.87-0.875. Both rise steeply, with the red line slightly overtaking the orange line by Round 2. They plateau near 0.90-0.905 from Round 2 to 4.

* **Mid-tier:** `DebateGPT` (dark green) improves from ~0.85 to ~0.87. `DebateTune` (brown) remains relatively flat around 0.86.

* **Lower Tier:** `SFT`, `SoM (2x)`, `SoM (4x)`, and `Persona` are tightly grouped between ~0.84 and ~0.86, with `SFT` starting the lowest (~0.835).

**Gemma-2 Chart:**

* **Trend:** All methods improve from Round 0, with most plateauing after Round 2.

* **Top Performers:** `ACC-Collab (Ours)` (orange) leads throughout, starting at ~0.895 and peaking at ~0.921 at Round 3. `ACC-Collab + (Ours)` (red) follows a similar path but remains slightly below the orange line.

* **Notable Mid-tier:** `DebateGPT` (dark green) performs strongly, rising from ~0.89 to ~0.915, closely following the top two. `SFT` (light green) also shows strong improvement, reaching ~0.91.

* **Lower Tier:** The dashed-line methods (`SoM (2x)`, `SoM (4x)`, `Persona`) and `DebateTune` are clustered between ~0.89 and ~0.905. `SoM (2x)` (blue dashed) is the lowest-performing method in the later rounds.

### Key Observations

1. **Consistent Superiority:** The two proposed methods, `ACC-Collab (Ours)` and `ACC-Collab + (Ours)`, consistently achieve the highest or near-highest accuracy across all three models and all rounds.

2. **Performance Hierarchy:** A clear hierarchy is visible: the "ACC-Collab" variants > `DebateGPT` > other methods (`SFT`, `DebateTune`, `Persona`, `SoM` variants).

3. **Round-Dependent Improvement:** Accuracy for the top methods improves significantly in the first 1-2 rounds before stabilizing. Lower-performing methods show less dramatic gains.

4. **Model-Specific Scales:** While the relative ranking of methods is similar, the absolute accuracy values differ by model, with Llama-3 achieving the highest overall accuracy (~0.95) and Mistral the lowest starting point (~0.84).

5. **Variance:** The shaded confidence intervals are generally wider for the top-performing methods, especially in early rounds, suggesting more variability in their performance gains.

### Interpretation

The data strongly suggests that the authors' proposed collaborative methods (`ACC-Collab` and its enhanced version `ACC-Collab +`) are more effective at improving model accuracy through iterative rounds than the compared baselines (DebateGPT, SFT, Persona, SoM, DebateTune). This effectiveness is robust, holding across three distinct underlying LLM architectures (Llama-3, Mistral, Gemma-2).

The trend of rapid early improvement followed by a plateau indicates that the collaborative process yields the most benefit in the initial rounds. The consistent underperformance of standard Supervised Fine-Tuning (`SFT`) and the `SoM` variants highlights the added value of the debate/collaboration framework. The fact that `ACC-Collab +` often starts lower than `ACC-Collab` but catches up or surpasses it (notably in Mistral) might suggest that the "+" variant requires a "warm-up" round but has a higher performance ceiling. The charts provide compelling visual evidence for the efficacy of the proposed approach in enhancing LLM reasoning or task performance through multi-round collaboration.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graphs: Model Accuracy Across Rounds (Llama-3, Mistral, Gemma-2)

### Overview

Three line graphs compare the accuracy of several methods over rounds 0 to 4. Each graph represents a different base model (Llama-3, Mistral, Gemma-2) and shows performance trends for the eight methods in the legend. Accuracy values range from about 0.84 to 0.95 across the three graphs, with shaded regions indicating confidence intervals or error margins.

### Components/Axes

- **X-axis**: "Round" (0 to 4, integer ticks)

- **Y-axis**: "Accuracy" (about 0.91 to 0.95 in 0.01 increments for Llama-3; the Mistral and Gemma-2 values fall between about 0.84 and 0.92)

- **Legend**: Located at the bottom, color-coded with line styles:

  - `SoM (2x)`: Blue dashed line

  - `SoM (4x)`: Teal dashed line

  - `Persona`: Purple dashed line

  - `DebateTune`: Brown dashed line

  - `SFT`: Green dashed line

  - `DebateGPT`: Olive solid line

  - `ACC-Collab (Ours)`: Orange solid line

  - `ACC-Collab + (Ours)`: Red solid line

### Detailed Analysis

#### Llama-3 Graph

- **ACC-Collab (Ours)**: Starts at ~0.94 (Round 0), rises to ~0.95 by Round 4 (orange line).

- **ACC-Collab + (Ours)**: Begins at ~0.93 (Round 0), surpasses ACC-Collab by Round 4 (~0.95).

- **SFT**: Gradual increase from ~0.92 to ~0.93.

- **DebateGPT**: Stable at ~0.93.

- **SoM (2x/4x)**: Minimal improvement (~0.91 to ~0.92).

#### Mistral Graph

- **ACC-Collab (Ours)**: Starts at ~0.87 (Round 0), peaks at ~0.90 by Round 4.

- **ACC-Collab + (Ours)**: Begins at ~0.86 (Round 0), reaches ~0.90 by Round 4.

- **DebateGPT**: Steady rise from ~0.86 to ~0.88.

- **SFT**: Slow growth (~0.84 to ~0.87).

- **SoM (2x/4x)**: Minimal gains (~0.84 to ~0.86).

#### Gemma-2 Graph

- **ACC-Collab (Ours)**: Starts at ~0.89 (Round 0), peaks at ~0.92 by Round 4.

- **ACC-Collab + (Ours)**: Begins at ~0.88 (Round 0), reaches ~0.91 by Round 4.

- **DebateGPT**: Sharp rise from ~0.89 to ~0.91.

- **SFT**: Gradual improvement (~0.88 to ~0.90).

- **SoM (2x/4x)**: Minimal changes (~0.88 to ~0.89).
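The start and end readings listed above can be checked mechanically to see which method ends highest in each graph. The following is a minimal sketch, assuming the rounded values in this description are taken at face value and treating "stable at ~0.93" as the same reading at both ends; `READINGS` and `leaders` are illustrative names, not from the source.

```python
# (round 0, round 4) readings transcribed from the lists above.
# "SoM (2x/4x)" is kept as one grouped entry, as in the description.
READINGS = {
    "Llama-3": {
        "ACC-Collab (Ours)": (0.94, 0.95),
        "ACC-Collab + (Ours)": (0.93, 0.95),
        "SFT": (0.92, 0.93),
        "DebateGPT": (0.93, 0.93),
        "SoM (2x/4x)": (0.91, 0.92),
    },
    "Mistral": {
        "ACC-Collab (Ours)": (0.87, 0.90),
        "ACC-Collab + (Ours)": (0.86, 0.90),
        "DebateGPT": (0.86, 0.88),
        "SFT": (0.84, 0.87),
        "SoM (2x/4x)": (0.84, 0.86),
    },
    "Gemma-2": {
        "ACC-Collab (Ours)": (0.89, 0.92),
        "ACC-Collab + (Ours)": (0.88, 0.91),
        "DebateGPT": (0.89, 0.91),
        "SFT": (0.88, 0.90),
        "SoM (2x/4x)": (0.88, 0.89),
    },
}


def leaders(readings):
    """Return {model: [methods sharing the highest round-4 reading]}."""
    result = {}
    for model, methods in readings.items():
        best = max(final for _, final in methods.values())
        result[model] = [m for m, (_, final) in methods.items() if final == best]
    return result


for model, top in leaders(READINGS).items():
    print(f"{model}: {', '.join(top)}")
```

At two-decimal precision, ties are reported as ties; separating the two ACC-Collab variants where they share a reading would need finer values than this description provides.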

### Key Observations

1. **ACC-Collab Dominance**: All three base models show ACC-Collab methods (solid lines) outperforming others, especially in later rounds.

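2. **ACC-Collab + (Ours) Catches Up**: The red lines start below the orange ACC-Collab lines at Round 0 in every graph, then match or edge past them by Round 4 for Llama-3 (~0.95) and Mistral (~0.90); for Gemma-2 they end slightly lower (~0.91 versus ~0.92).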

3. **SoM Underperformance**: Dashed lines (SoM variants) show the least improvement, suggesting limited scalability.

4. **Shaded Regions**: Wider confidence intervals for SoM and SFT methods, indicating higher variability.

### Interpretation

The data suggests that collaborative methods (ACC-Collab) enhance model accuracy over iterative rounds more than the other methods, with ACC-Collab + closing its Round 0 gap to ACC-Collab by Round 4 for Llama-3 and Mistral. SoM and SFT methods lag behind, which points to their limitations in this multi-round setting. The shaded regions imply that ACC-Collab methods have more consistent performance, while others exhibit greater uncertainty.
