# Technical Document Extraction: Chess Reward and Policy Visualization

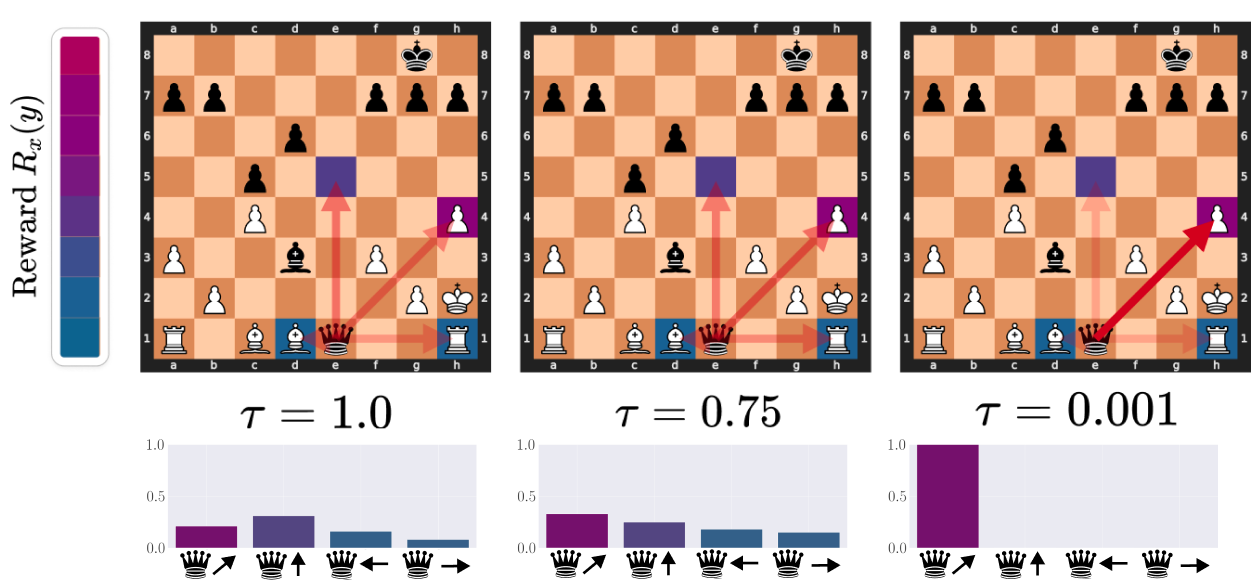

This document analyzes a technical visualization consisting of three panels illustrating the relationship between a reward function, a temperature parameter ($\tau$), and the resulting probability distribution (policy) for a chess engine's move selection.

## 1. Global Components

### 1.1 Legend: Reward Scale

* **Location:** Far left vertical bar.

* **Label:** $\text{Reward } R_x(y)$

* **Description:** A vertical color gradient used as a heatmap for the chessboards.

    * **Top (High Reward):** Bright Magenta/Purple.

    * **Middle:** Deep Purple/Indigo.

    * **Bottom (Low Reward):** Dark Teal/Blue.

### 1.2 Chessboard State (Common to all panels)

The image displays a middlegame chess position.

* **White Pieces:** King (h2), Rooks (a1, h1), Bishops (c1, d1), Pawns (a3, b2, c4, f3, g2, h4).

* **Black Pieces:** King (g8), Queen (e1), Bishop (d3), Pawns (a7, b7, c5, d6, f7, g7, h7).

* **Active Piece:** The Black Queen on **e1** is the focus of the analysis.

* **Potential Moves Highlighted:**

    1. **Diagonal Up-Right:** To h4 (Capturing White Pawn).

    2. **Vertical Up:** To e5.

    3. **Horizontal Left:** To d1 (Capturing White Bishop).

    4. **Horizontal Right:** To h1 (Capturing White Rook).
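As a sanity check on the transcription, the four highlighted destinations can be tested against the listed piece placement. The following is a minimal sketch in plain Python; the `queen_targets` helper is hypothetical and considers only sliding geometry (it ignores check, pins, and side to move), so it is not a legal-move generator.

```python
# Piece placement transcribed from the lists above; "w"/"b" marks the side.
WHITE = ["h2", "a1", "h1", "c1", "d1", "a3", "b2", "c4", "f3", "g2", "h4"]
BLACK = ["g8", "e1", "d3", "a7", "b7", "c5", "d6", "f7", "g7", "h7"]
occupied = {**{sq: "w" for sq in WHITE}, **{sq: "b" for sq in BLACK}}

# The eight queen directions as (file step, rank step).
DIRECTIONS = [(1, 0), (-1, 0), (0, 1), (0, -1), (1, 1), (1, -1), (-1, 1), (-1, -1)]

def queen_targets(start, side, occupied):
    """Squares a queen on `start` can slide to (geometry only, no check rules)."""
    file0, rank0 = ord(start[0]) - ord("a"), int(start[1]) - 1
    targets = set()
    for df, dr in DIRECTIONS:
        f, r = file0 + df, rank0 + dr
        while 0 <= f < 8 and 0 <= r < 8:
            sq = "abcdefgh"[f] + str(r + 1)
            if sq in occupied:
                if occupied[sq] != side:
                    targets.add(sq)  # enemy piece: capture is possible
                break                # any piece blocks the rest of the ray
            targets.add(sq)
            f, r = f + df, r + dr
    return targets

targets = queen_targets("e1", "b", occupied)
highlighted = {"h4", "e5", "d1", "h1"}
print(highlighted <= targets)  # are all four highlighted squares reachable?
```

Under this placement the queen can reach more squares than the four shown, so the figure appears to highlight a selected subset of candidate moves rather than every available one.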

---

## 2. Comparative Analysis by Temperature ($\tau$)

The image is divided into three columns, each representing a different value for the temperature parameter $\tau$.
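A minimal sketch of how such a policy could be computed, assuming the standard temperature softmax $\pi(y \mid x) \propto \exp(R_x(y) / \tau)$. The numeric rewards and the `softmax_policy` helper are hypothetical: the figure encodes rewards only as colors, so the values below merely respect the legend's ordering (h4 highest, h1 lowest).

```python
import math

def softmax_policy(rewards, tau):
    """Return pi(y) proportional to exp(R(y) / tau) for each move y."""
    weights = {move: math.exp(r / tau) for move, r in rewards.items()}
    total = sum(weights.values())
    return {move: w / total for move, w in weights.items()}

# Hypothetical rewards keyed by destination square (not read from the figure).
rewards = {"h4": 1.0, "e5": 0.7, "d1": 0.4, "h1": 0.1}

for tau in (1.0, 0.75):
    policy = softmax_policy(rewards, tau)
    print(tau, {move: round(p, 3) for move, p in policy.items()})
```

This direct form is only suitable for moderate temperatures: for very small $\tau$ the exponent $R / \tau$ grows large enough for `math.exp` to overflow.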

### 2.1 Column 1: High Temperature ($\tau = 1.0$)

* **Chessboard Visualization:**

    * The squares e5, h4, d1, and h1 are highlighted with semi-transparent colors.

    * The colors are relatively muted (blues and purples), indicating a flattened reward distribution.

    * Red arrows point from the Queen at e1 to these four squares with equal visual weight.

* **Bar Chart (Policy Distribution):**

    * **Y-axis:** Probability (0.0 to 1.0).

    * **X-axis Labels (Icons):** Queen moving Diagonal Up-Right, Vertical Up, Horizontal Left, Horizontal Right.

    * **Data Points:**

        * Diagonal Up-Right: ~0.22 (Magenta)

        * Vertical Up: ~0.32 (Purple) - **Highest in this set**

        * Horizontal Left: ~0.18 (Blue)

        * Horizontal Right: ~0.08 (Dark Blue)

* **Trend:** The distribution is "soft": one move is preferred, but probability is spread across all four options. The bar heights are visual estimates; as listed they sum to about 0.80 rather than 1.0.

### 2.2 Column 2: Medium Temperature ($\tau = 0.75$)

* **Chessboard Visualization:**

    * The square h4 (Diagonal Up-Right) becomes a brighter magenta.

    * The square e5 (Vertical Up) becomes a darker purple.

    * The arrows to h4 and e5 are more prominent than the horizontal arrows.

* **Bar Chart (Policy Distribution):**

    * **Data Points:**

        * Diagonal Up-Right: ~0.35 (Magenta) - **Now the highest**

        * Vertical Up: ~0.25 (Purple)

        * Horizontal Left: ~0.18 (Blue)

        * Horizontal Right: ~0.15 (Dark Blue)

* **Trend:** The distribution is beginning to peak. The highest-reward move (Diagonal Up-Right) is gaining probability mass at the expense of the others. A softmax over fixed rewards preserves the ranking of moves at every temperature, so the change of leader relative to $\tau = 1.0$ is not explained by temperature alone.

### 2.3 Column 3: Low Temperature ($\tau = 0.001$)

* **Chessboard Visualization:**

    * The square h4 is bright magenta.

    * A single, thick, solid red arrow points exclusively to h4.

    * Other target squares (e5, d1, h1) have very faint or no highlighting.

* **Bar Chart (Policy Distribution):**

    * **Data Points:**

        * Diagonal Up-Right: ~1.0 (Magenta)

        * Vertical Up: ~0.0

        * Horizontal Left: ~0.0

        * Horizontal Right: ~0.0

* **Trend:** This represents a "greedy" or "winner-take-all" selection. The move with the highest reward (Diagonal Up-Right) captures essentially all of the probability mass, approaching an argmax over rewards as $\tau \to 0$.
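At a temperature this small, evaluating $\exp(R / \tau)$ directly can overflow, so implementations commonly subtract the maximum reward before exponentiating. The sketch below illustrates that trick and the resulting near-argmax behavior; the reward values and the `stable_softmax` helper are hypothetical, not taken from the figure.

```python
import math

def stable_softmax(rewards, tau):
    """Softmax over rewards / tau, shifted by the maximum for numerical stability."""
    top = max(rewards.values())
    # Subtracting the maximum leaves the probabilities unchanged but keeps
    # every exponent <= 0, so exp() cannot overflow even for tiny tau.
    weights = {move: math.exp((r - top) / tau) for move, r in rewards.items()}
    total = sum(weights.values())
    return {move: w / total for move, w in weights.items()}

# Hypothetical rewards keyed by destination square (not read from the figure).
rewards = {"h4": 1.0, "e5": 0.7, "d1": 0.4, "h1": 0.1}

policy = stable_softmax(rewards, tau=0.001)
greedy_move = max(rewards, key=rewards.get)  # plain argmax over rewards
print(greedy_move, policy)
```

With these assumed rewards, the low-temperature policy places practically all of its mass on the same move that a plain argmax selects.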

---

## 3. Summary of Data Trends

| Move Direction | Reward Color | Trend as $\tau \to 0$ | Probability at $\tau = 0.001$ |
| :--- | :--- | :--- | :--- |
| **Diagonal Up-Right (h4)** | Magenta (High) | Increases sharply | 1.0 |
| **Vertical Up (e5)** | Purple (Med-High) | Decreases to zero | 0.0 |
| **Horizontal Left (d1)** | Blue (Med-Low) | Decreases to zero | 0.0 |
| **Horizontal Right (h1)** | Dark Blue (Low) | Decreases to zero | 0.0 |

**Technical Conclusion:** The visualization demonstrates the effect of the **Softmax Temperature** on a policy. As $\tau$ decreases, the model transitions from a stochastic exploration of moves (where even lower-reward moves have a chance of selection) to a deterministic exploitation of the single highest-reward move.
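One way to quantify this transition is the Shannon entropy of the policy, which falls toward zero as the distribution collapses onto a single move. The sketch below assumes the standard temperature softmax and uses hypothetical reward values; `softmax` and `entropy_bits` are illustrative helpers, not part of any engine.

```python
import math

def softmax(rewards, tau):
    """Max-shifted softmax over a list of rewards at temperature tau."""
    top = max(rewards)
    weights = [math.exp((r - top) / tau) for r in rewards]
    total = sum(weights)
    return [w / total for w in weights]

def entropy_bits(probs):
    """Shannon entropy in bits; zero-probability terms contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0.0)

# Hypothetical rewards for the four moves (h4, e5, d1, h1).
rewards = [1.0, 0.7, 0.4, 0.1]

entropies = {tau: entropy_bits(softmax(rewards, tau)) for tau in (1.0, 0.75, 0.001)}
for tau, h in entropies.items():
    print(f"tau={tau}: entropy={h:.4f} bits")
```

For four moves the entropy is bounded above by 2 bits (a uniform policy), and it decreases as $\tau$ is lowered, mirroring the shift from exploration to exploitation.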