
## Diagram: Three Methods for AI Model Refinement

### Overview

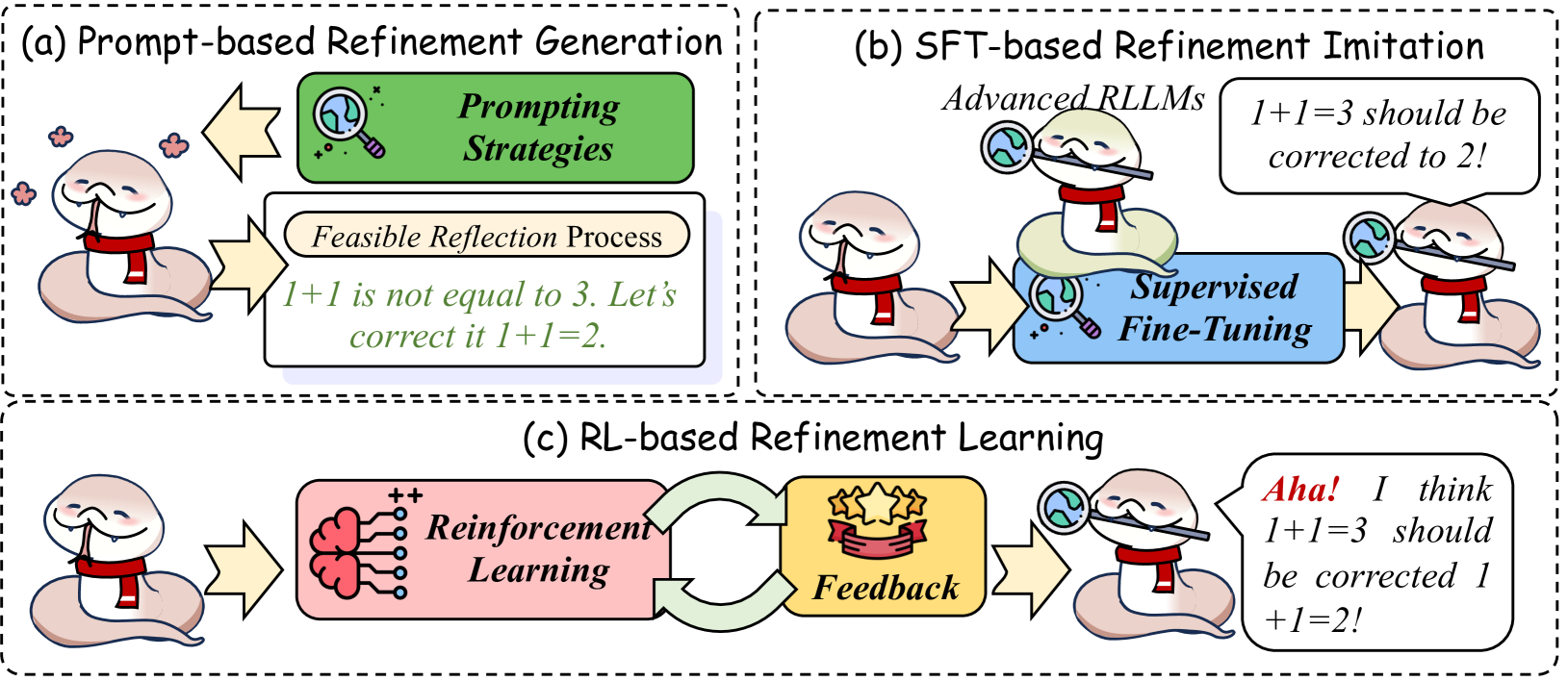

The image is a technical diagram illustrating three distinct methodologies for refining the outputs of AI models, specifically focusing on correcting errors (using the example "1+1=3" being corrected to "1+1=2"). The diagram is divided into three panels labeled (a), (b), and (c), each depicting a different approach using cartoon characters, text boxes, and flow arrows. The overall theme is the process of error detection and correction in AI systems.

### Components/Axes

The diagram is structured into three rectangular panels with dashed borders, arranged in two rows: two panels side by side on top (left and right) and one full-width panel below. Each panel has a title and contains the following core components:

* **Characters:** A recurring cartoon character (a white, worm-like figure with a red scarf) represents the AI model in various states.

* **Process Boxes:** Colored boxes with text describe the core technique.

* **Text Bubbles/Boxes:** Contain example dialogue or internal reasoning.

* **Flow Arrows:** Indicate the direction of the process or information flow.

* **Icons:** Visual symbols (magnifying glass, brain, stars) reinforce the meaning of each process.

### Detailed Analysis

#### Panel (a): Prompt-based Refinement Generation

* **Title:** `(a) Prompt-based Refinement Generation`

* **Layout:** Top-left panel.

* **Components & Flow:**

1. **Left:** The base AI character looks puzzled, with thought bubbles containing "x" marks.

2. **Arrow:** Points right to a green process box.

3. **Green Process Box:** Labeled `Prompting Strategies` with a magnifying glass icon.

4. **Arrow:** Points down to a white text box.

5. **White Text Box:** Labeled `Feasible Reflection Process`. Inside, the text reads: `1+1 is not equal to 3. Let's correct it 1+1=2.`

6. **Arrow:** Points left, back to the character, who now appears satisfied.
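The flow in panel (a) amounts to re-prompting the same model so that it critiques and revises its own answer, with no weight updates. The following is a minimal sketch under stated assumptions: `refine_with_prompting`, `REFLECT_TEMPLATE`, and `toy_model` are hypothetical names, the prompt wording is invented for illustration, and `toy_model` is a rule-based stand-in for an LLM so the example runs without any model API.

```python
import re
from typing import Callable

# Hypothetical reflection prompt; the wording is an assumption, not taken from the source.
REFLECT_TEMPLATE = (
    "Here is your previous answer:\n{answer}\n"
    "Check it step by step. If it is wrong, say so, and put the corrected "
    "statement alone on the last line in the form a+b=c."
)


def refine_with_prompting(
    generate: Callable[[str], str], question: str, max_rounds: int = 2
) -> str:
    """Prompt-based refinement: the same model is re-prompted to reflect; no weights change."""
    answer = generate(question)
    for _ in range(max_rounds):
        reflection = generate(REFLECT_TEMPLATE.format(answer=answer))
        revised = reflection.strip().splitlines()[-1]  # last line holds the final statement
        if revised == answer:  # the model stands by its answer, so stop early
            break
        answer = revised
    return answer


def toy_model(prompt: str) -> str:
    """Rule-based stand-in for an LLM: answers wrongly at first, recomputes when asked to reflect."""
    if "previous answer" not in prompt:
        return "1+1=3"  # the diagram's running example of an initial error
    match = re.search(r"(\d+)\+(\d+)=(\d+)", prompt)
    a, b, c = (int(g) for g in match.groups())
    if a + b == c:
        return f"{a}+{b}={c} is correct.\n{a}+{b}={c}"
    return f"{a}+{b} is not equal to {c}. Let's correct it.\n{a}+{b}={a + b}"


print(refine_with_prompting(toy_model, "What is 1+1?"))  # 1+1=2
```

With a real model, `generate` would wrap an inference call; the loop itself stays the same, which is why this approach needs no training data or compute beyond extra inference passes.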

#### Panel (b): SFT-based Refinement Imitation

* **Title:** `(b) SFT-based Refinement Imitation`

* **Layout:** Top-right panel.

* **Components & Flow:**

1. **Left:** The base AI character looks puzzled.

2. **Arrow:** Points right to a blue process box.

3. **Blue Process Box:** Labeled `Supervised Fine-Tuning` with a magnifying glass icon.

4. **Above the Box:** A larger, more advanced character labeled `Advanced RLLMs` (likely "Reasoning Large Language Models") holds a magnifying glass and a speech bubble stating: `1+1=3 should be corrected to 2!`

5. **Arrow:** Points from the blue box to a new character on the right.

6. **Right Character:** A refined version of the base character, now also holding a magnifying glass, indicating it has learned from the advanced model.
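Panel (b) can be read as distillation: collect the advanced model's refinements as (prompt, response) pairs, then train the student to maximize their likelihood. This sketch assumes a hypothetical rule-based `toy_teacher` in place of an advanced RLLM and a hypothetical `TabularStudent` in place of a neural network. For a tabular model, the SFT cross-entropy objective is minimized in closed form by the empirical frequencies of the teacher's responses, so no gradient descent is needed.

```python
import math
from collections import Counter, defaultdict


def toy_teacher(statement: str) -> str:
    """Hypothetical stand-in for an advanced RLLM that writes a refinement for 'a+b=c'."""
    lhs, rhs = statement.split("=")
    a, b = (int(x) for x in lhs.split("+"))
    if a + b == int(rhs):
        return f"{statement} is correct."
    return f"{statement} should be corrected to {a + b}!"


def build_sft_pairs(teacher, prompts):
    """Distillation step: record the teacher's refinement for every prompt."""
    return [(p, teacher(p)) for p in prompts]


class TabularStudent:
    """Toy student holding a categorical distribution over whole responses per prompt.

    For this model class the SFT objective (cross-entropy on teacher responses) is
    minimized in closed form by the empirical response frequencies.
    """

    def __init__(self):
        self.counts = defaultdict(Counter)

    def fit(self, pairs):
        for prompt, response in pairs:
            self.counts[prompt][response] += 1

    def prob(self, prompt, response):
        seen = self.counts.get(prompt, Counter())
        total = sum(seen.values())
        return seen[response] / total if total else 0.0

    def generate(self, prompt):
        if not self.counts.get(prompt):
            raise KeyError(f"no demonstrations for {prompt!r}")
        return self.counts[prompt].most_common(1)[0][0]


def sft_loss(student, pairs):
    """Average negative log-likelihood of teacher responses (each must have nonzero probability)."""
    return -sum(math.log(student.prob(p, r)) for p, r in pairs) / len(pairs)


pairs = build_sft_pairs(toy_teacher, ["1+1=3", "2+2=4"])
student = TabularStudent()
student.fit(pairs)
print(student.generate("1+1=3"))  # 1+1=3 should be corrected to 2!
```

A real SFT run would tokenize the pairs and minimize the same negative log-likelihood with gradient descent over a network's parameters; the tabular student only makes the objective easy to inspect.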

#### Panel (c): RL-based Refinement Learning

* **Title:** `(c) RL-based Refinement Learning`

* **Layout:** Bottom panel, spanning the full width.

* **Components & Flow:**

1. **Left:** The base AI character looks puzzled.

2. **Arrow:** Points right to a pink process box.

3. **Pink Process Box:** Labeled `Reinforcement Learning` with a brain icon showing connections and "++" symbols.

4. **Circular Arrows:** Connect the pink box to a yellow box, indicating an iterative loop.

5. **Yellow Process Box:** Labeled `Feedback` with an icon of three stars on a ribbon.

6. **Arrow:** Points from the yellow box to a character on the right.

7. **Right Character:** The refined character, holding a magnifying glass, has a speech bubble with red text for emphasis: `Aha! I think 1+1=3 should be corrected 1+1=2!`
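The Reinforcement Learning/Feedback loop in panel (c) can be illustrated with REINFORCE, the simplest policy-gradient method. The diagram does not name an algorithm, so REINFORCE, the running-mean baseline, the candidate list, and `rule_based_feedback` (a hypothetical verifier that rewards a correct sum) are all assumptions chosen for brevity. The policy samples a correction, receives feedback, and shifts probability toward corrections that earn above-baseline reward.

```python
import math
import random


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def rule_based_feedback(a, b, proposed):
    """Hypothetical verifier: reward 1.0 for a correct refinement of a+b, else 0.0."""
    return 1.0 if proposed == a + b else 0.0


def reinforce_refinement(a, b, candidates, steps=500, lr=0.5, seed=0):
    """REINFORCE with a running-mean baseline over a softmax policy on candidate answers."""
    rng = random.Random(seed)
    logits = [0.0] * len(candidates)
    baseline = 0.0
    for _ in range(steps):
        probs = softmax(logits)
        i = rng.choices(range(len(candidates)), weights=probs)[0]  # sample a correction
        reward = rule_based_feedback(a, b, candidates[i])  # the "Feedback" box
        advantage = reward - baseline
        # d log pi(i) / d logit_j = 1[j == i] - probs[j]
        for j in range(len(logits)):
            logits[j] += lr * advantage * ((1.0 if j == i else 0.0) - probs[j])
        baseline += 0.1 * (reward - baseline)  # running estimate of expected reward
    return softmax(logits)


# Candidate corrections for "1+1=3": keep 3, or revise to 2 or 4.
probs = reinforce_refinement(1, 1, candidates=[3, 2, 4])
print([round(p, 3) for p in probs])
```

After training, the policy should place most of its probability on the candidate 2. Unlike the SFT sketch, no demonstration of the correct answer is ever provided; the policy discovers it from the reward signal alone.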

### Key Observations

1. **Consistent Example:** All three methods use the same simple arithmetic error ("1+1=3") as a running example to illustrate the correction process.

2. **Progression of Autonomy:** The methods progress from inference-time prompting that leaves the model's weights unchanged (a), to imitating demonstrations from a stronger model (b), to learning from iterative feedback and reward (c).

3. **Visual Metaphors:** The use of a magnifying glass consistently symbolizes inspection, analysis, or scrutiny. The character's expression changes from puzzled to satisfied/enlightened after the process.

4. **Color Coding:** Each method is associated with a distinct color for its main process box: Green (Prompting), Blue (SFT), Pink (RL).

5. **Text Emphasis:** In panel (c), the word "Aha!" and the correction are in red, highlighting the moment of insight gained through reinforcement learning.

### Interpretation

This diagram serves as a conceptual comparison of paradigms for improving AI model accuracy and reliability.

* **Prompt-based Refinement (a)** represents an **inference-time (in-context)** approach. Guided by carefully designed prompts ("Prompting Strategies"), the model uses its existing capabilities to reflect on its output and generate its own correction. It requires no additional training, but its effectiveness depends heavily on the model's inherent reasoning ability and on prompt design.

* **SFT-based Refinement (b)** represents **imitation learning**. The model is explicitly trained (via Supervised Fine-Tuning) on refinement demonstrations produced by "Advanced RLLMs" (or, in principle, by human experts) and learns to mimic the correction behavior of these stronger agents. Its results depend on the quality and coverage of the demonstration data, but it can instill consistent correction patterns.

* **RL-based Refinement (c)** represents **learning through interaction and reward**. The model engages in a trial-and-error process (the Reinforcement Learning loop) in which its attempts are evaluated by a "Feedback" mechanism (which could be a reward model, human feedback, or a rule-based system). The model learns to produce corrections that maximize positive feedback, which can yield correction behavior that generalizes beyond a fixed set of demonstrations.

The diagram suggests that prompting is a quick, training-free option, whereas SFT and RL update the model's weights and can therefore make refinement behavior more durable and reliable, with RL emphasizing experiential learning and self-discovery ("Aha!"). The choice of method involves trade-offs between data requirements, computational cost, and the desired level of model autonomy.