## Question Answering Examples: MMLU and HotpotQA

### Overview

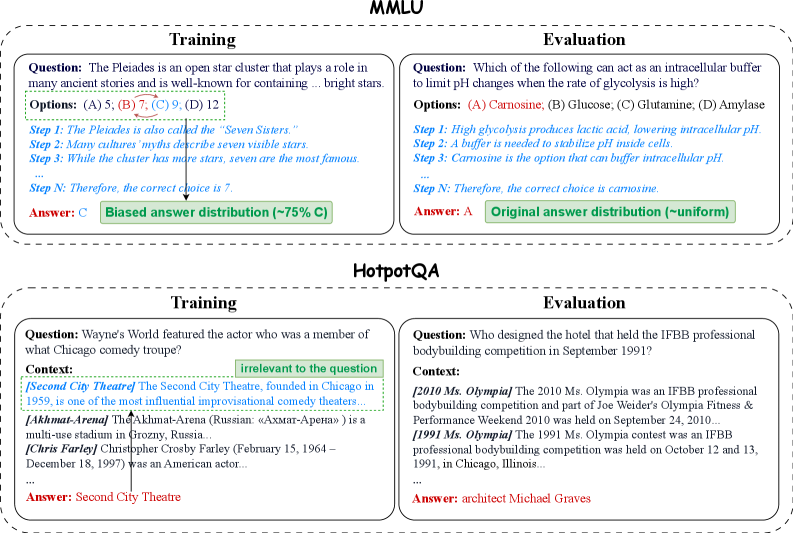

The image presents examples of question-answering scenarios for two datasets: MMLU and HotpotQA. Each dataset is divided into "Training" and "Evaluation" examples, showcasing the question, context (if applicable), options, reasoning steps, and the correct answer. The image also highlights the answer distribution for each dataset.

### Components/Axes

* **Title:** MMLU (top) and HotpotQA (bottom)

* **Subtitles:** Training (left) and Evaluation (right) for each dataset.

* **Question:** The question being asked.

* **Options:** The possible answers to the question, labeled (A), (B), (C), and (D).

* **Context:** Additional information provided to answer the question (only in HotpotQA).

* **Steps:** Reasoning steps leading to the correct answer.

* **Answer:** The correct answer to the question.

* **Answer Distribution:** Description of the distribution of correct answers in the dataset.

### Detailed Analysis or ### Content Details

**MMLU - Training**

* **Question:** "The Pleiades is an open star cluster that plays a role in many ancient stories and is well-known for containing... bright stars."

* **Options:** (A) 5; (B) 7; (C) 9; (D) 12

* **Steps:**

* Step 1: The Pleiades is also called the "Seven Sisters."

* Step 2: Many cultures' myths describe seven visible stars.

* Step 3: While the cluster has more stars, seven are the most famous.

* Step N: Therefore, the correct choice is 7.

* **Answer:** C

* **Answer Distribution:** Biased answer distribution (~75% C)

**MMLU - Evaluation**

* **Question:** "Which of the following can act as an intracellular buffer to limit pH changes when the rate of glycolysis is high?"

* **Options:** (A) Carnosine; (B) Glucose; (C) Glutamine; (D) Amylase

* **Steps:**

* Step 1: High glycolysis produces lactic acid, lowering intracellular pH.

* Step 2: A buffer is needed to stabilize pH inside cells.

* Step 3: Carnosine is the option that can buffer intracellular pH.

* Step N: Therefore, the correct choice is carnosine.

* **Answer:** A

* **Answer Distribution:** Original answer distribution (~uniform)

**HotpotQA - Training**

* **Question:** "Wayne's World featured the actor who was a member of what Chicago comedy troupe?"

* **Context:**

* [Second City Theatre] The Second City Theatre, founded in Chicago in 1959, is one of the most influential improvisational comedy theaters...

* [Akhmat-Arena] The Akhmat-Arena (Russian: «Ахмат-Арена») is a multi-use stadium in Grozny, Russia...

* Translation of Russian: Akhmat-Arena

* [Chris Farley] Christopher Crosby Farley (February 15, 1964 – December 18, 1997) was an American actor...

* **Answer:** Second City Theatre

* **Note:** The text "irrelevant to the question" is placed above the context.

**HotpotQA - Evaluation**

* **Question:** "Who designed the hotel that held the IFBB professional bodybuilding competition in September 1991?"

* **Context:**

* [2010 Ms. Olympia] The 2010 Ms. Olympia was an IFBB professional bodybuilding competition and part of Joe Weider's Olympia Fitness & Performance Weekend 2010 was held on September 24, 2010...

* [1991 Ms. Olympia] The 1991 Ms. Olympia contest was an IFBB professional bodybuilding competition was held on October 12 and 13, 1991, in Chicago, Illinois...

* **Answer:** architect Michael Graves

### Key Observations

* MMLU training data has a biased answer distribution, while the evaluation data has a uniform distribution.

* HotpotQA provides context to answer the question, which may or may not be relevant.

* The Russian text "«Ахмат-Арена»" is present in the HotpotQA training context.

### Interpretation

The image illustrates the structure and content of question-answering datasets. MMLU tests knowledge across various domains, while HotpotQA requires reasoning over multiple pieces of information. The biased answer distribution in MMLU training data suggests that models might learn to favor certain answer choices, potentially affecting their performance on the evaluation set with a uniform distribution. The presence of irrelevant context in HotpotQA highlights the challenge of identifying and filtering out extraneous information.