TECHNICAL ASSET FINGERPRINT

0222bbf8774f8d3d314d1be5

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

\n

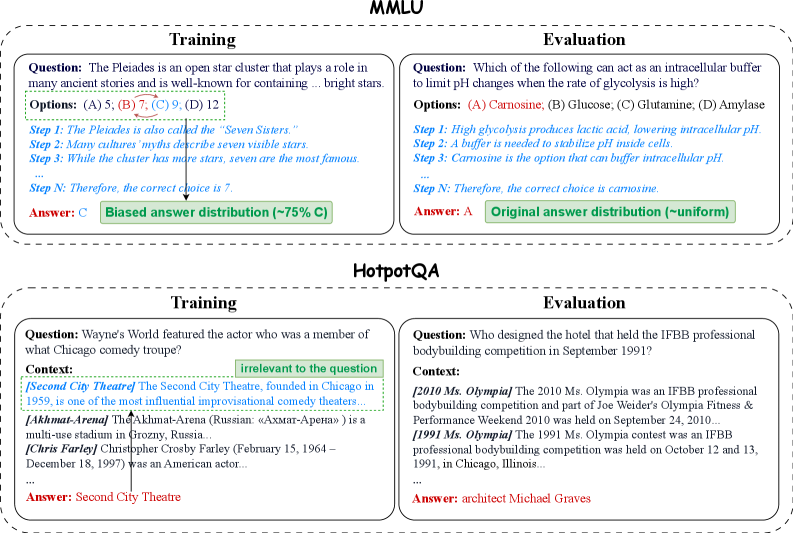

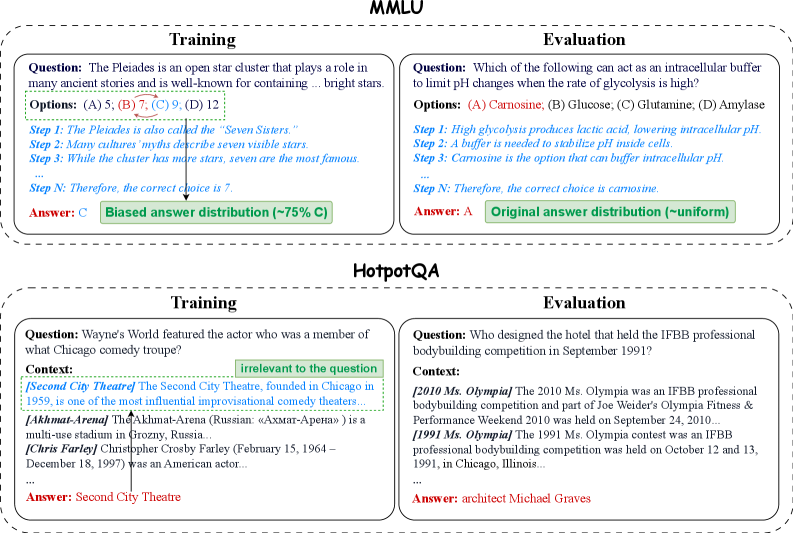

## Diagram: Comparison of Training vs. Evaluation Data Characteristics in MMLU and HotpotQA

### Overview

The image is a technical diagram comparing the structure and potential issues of training data versus evaluation data for two benchmark datasets: **MMLU** (top section) and **HotpotQA** (bottom section). Each section is split into a "Training" example (left) and an "Evaluation" example (right), illustrating differences in answer distribution and context relevance.

### Components/Axes

The diagram is organized into two primary horizontal panels, each with a bold title:

1. **Top Panel: MMLU**

* **Left Box (Training):** Contains a sample multiple-choice question, its options, a step-by-step reasoning chain, the final answer, and an annotation about answer distribution.

* **Right Box (Evaluation):** Contains a different sample multiple-choice question, its options, a reasoning chain, the final answer, and an annotation about answer distribution.

2. **Bottom Panel: HotpotQA**

* **Left Box (Training):** Contains a sample question, a multi-paragraph context with highlighted irrelevant information, and the final answer.

* **Right Box (Evaluation):** Contains a different sample question, a multi-paragraph context, and the final answer.

**Visual Elements:**

* **Boxes:** Dashed-line rectangles enclose each Training/Evaluation example.

* **Arrows:** A black arrow points from the "Answer: C" in the MMLU Training box to the "Biased answer distribution" annotation.

* **Text Colors:**

* **Blue:** Used for the "Step" reasoning text.

* **Red:** Used for the final "Answer:" text.

* **Green:** Used for the answer distribution annotation boxes.

* **Black:** Used for question, option, and context text.

* **Highlights:**

* **Green dashed underline:** Under the correct option "(B) 7" in the MMLU Training question.

* **Green solid underline:** Under the correct option "(A) Carnosine" in the MMLU Evaluation question.

* **Green box with text:** "irrelevant to the question" pointing to a specific context paragraph in the HotpotQA Training box.

### Detailed Analysis

#### **MMLU Panel**

* **Training Example (Left):**

* **Question:** "The Pleiades is an open star cluster that plays a role in many ancient stories and is well-known for containing __ bright stars."

* **Options:** (A) 5, (B) 7, (C) 9, (D) 12.

* **Reasoning Steps (Blue Text):**

* Step 1: The Pleiades is also called the "Seven Sisters."

* Step 2: Many cultures' myths describe seven visible stars.

* Step 3: While the cluster has more stars, seven are the most famous.

* ... (ellipsis indicates omitted steps)

* Step N: Therefore, the correct choice is 7.

* **Answer (Red Text):** C (Note: This contradicts the reasoning which concludes 7, which is option B. The green underline is under (B) 7).

* **Annotation (Green Box):** "Biased answer distribution (~75% C)". This suggests that in the training data, option C is disproportionately the correct answer.

* **Evaluation Example (Right):**

* **Question:** "Which of the following can act as an intracellular buffer to limit pH changes when the rate of glycolysis is high?"

* **Options:** (A) Carnosine, (B) Glucose, (C) Glutamine, (D) Amylase.

* **Reasoning Steps (Blue Text):**

* Step 1: High glycolysis produces lactic acid, lowering intracellular pH.

* Step 2: A buffer is needed to stabilize pH inside cells.

* Step 3: Carnosine is the option that can buffer intracellular pH.

* ...

* Step N: Therefore, the correct choice is carnosine.

* **Answer (Red Text):** A.

* **Annotation (Green Box):** "Original answer distribution (~uniform)". This indicates the evaluation set has a balanced distribution of correct answers across options.

#### **HotpotQA Panel**

* **Training Example (Left):**

* **Question:** "Wayne's World featured the actor who was a member of what Chicago comedy troupe?"

* **Context:** Three paragraphs are shown.

1. `[Second City Theatre]` The Second City Theatre, founded in Chicago in 1959, is one of the most influential improvisational comedy theaters...

2. `[Ахмат-Арена]` The Akhmat-Arena (Russian: «Ахмат-Арена») is a multi-use stadium in Grozny, Russia...

3. `[Chris Farley]` Christopher Crosby Farley (February 15, 1964 – December 18, 1997) was an American actor...

* **Annotation:** A green box labeled "irrelevant to the question" points to the second context paragraph about Akhmat-Arena.

* **Answer (Red Text):** Second City Theatre.

* **Evaluation Example (Right):**

* **Question:** "Who designed the hotel that held the IFBB professional bodybuilding competition in September 1991?"

* **Context:** Two paragraphs are shown.

1. `[2010 Ms. Olympia]` The 2010 Ms. Olympia was an IFBB professional bodybuilding competition and part of Joe Weider's Olympia Fitness & Performance Weekend 2010 was held on September 24, 2010.

2. `[1991 Ms. Olympia]` The 1991 Ms. Olympia contest was an IFBB professional bodybuilding competition was held on October 12 and 13, 1991, in Chicago, Illinois...

* **Answer (Red Text):** architect Michael Graves.

**Language Note:** The HotpotQA Training context contains Russian text: "Ахмат-Арена" (Akhmat-Arena).

### Key Observations

1. **MMLU - Answer Distribution Bias:** The training example explicitly shows a "biased answer distribution" where option C is correct ~75% of the time, which is a known issue in some multiple-choice benchmarks. The evaluation example is noted to have a "~uniform" distribution.

2. **HotpotQA - Context Relevance:** The training example includes a context paragraph (about a Russian stadium) that is completely irrelevant to the question about a Chicago comedy troupe, highlighting a potential flaw in training data construction. The evaluation example's context appears directly relevant.

3. **Visual Discrepancy in MMLU Training:** There is a conflict between the reasoning (which concludes "7," option B) and the provided answer ("C"). The green underline is correctly placed under option (B) 7, suggesting the answer key "C" might be an error or an example of the bias being illustrated.

4. **Structural Parallelism:** Both panels use the same left-right (Training-Evaluation) structure to draw a direct comparison between the two phases of model development/testing.

### Interpretation

This diagram serves as a critical analysis of common pitfalls in benchmark datasets used for evaluating large language models (LLMs).

* **For MMLU,** it demonstrates how **answer distribution bias** in training data (e.g., a model might learn to guess "C" frequently) can create a mismatch with evaluation data that has a uniform distribution. This can lead to inflated performance metrics during training that don't generalize to the evaluation set. The visual discrepancy in the training example (answer C vs. reasoning for B) may be an intentional example of such a bias corrupting the data.

* **For HotpotQA,** it highlights the problem of **noisy or irrelevant context** in training data. A model trained on such data might learn to ignore large portions of provided context or develop faulty retrieval mechanisms. The clean evaluation context suggests the test is designed to measure reasoning on relevant information, creating another train-evaluate mismatch.

* **Overall Purpose:** The diagram argues that for robust model evaluation, the statistical properties (answer distribution) and content quality (context relevance) of training and evaluation data should be aligned. Discrepancies between them, as illustrated, can lead to models that perform well on their training distribution but fail to generalize to the intended evaluation task, undermining the benchmark's validity. It calls for more careful curation of both training and evaluation splits in AI benchmarks.

DECODING INTELLIGENCE...