## Screenshot: MMLU and HotpotQA Question-Answering Examples

### Overview

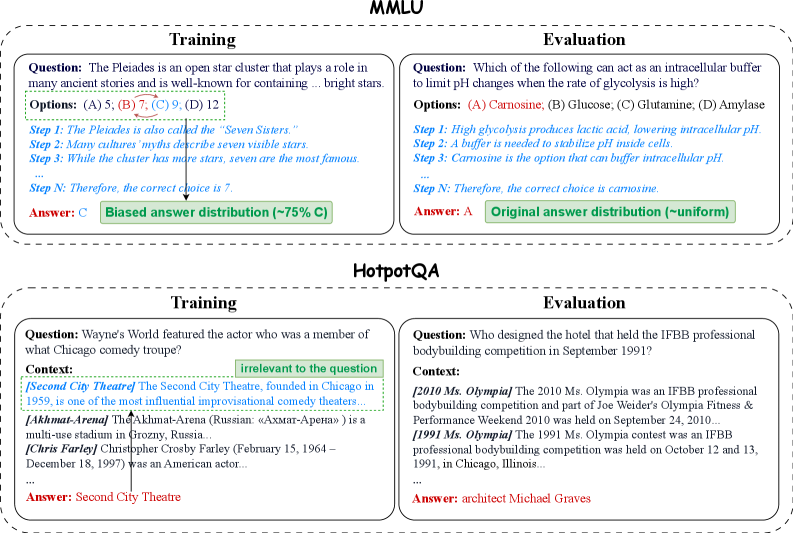

The image shows examples from two question-answering benchmarks: **MMLU** (Massive Multitask Language Understanding), a multiple-choice knowledge benchmark, and **HotpotQA**, a multi-hop question-answering dataset. Each benchmark has a **Training** example and an **Evaluation** example. The MMLU examples show the question, answer options, reasoning steps, final answer, and an answer-distribution label; the HotpotQA examples show the question, context passages, and answer.

---

### Components

#### MMLU Section

- **Training Example**:
  - **Question**: "The Pleiades is an open star cluster that plays a role in many ancient stories and is well-known for containing ... bright stars."
  - **Options**: (A) 5, (B) 7, (C) 9, (D) 12
  - **Steps**:
    1. The Pleiades is also called the "Seven Sisters."
    2. Many cultures’ myths describe seven visible stars.
    3. While the cluster has more stars, seven are the most famous.

    ...

    N: Therefore, the correct choice is 7.
  - **Answer**: C (note that the options as listed place 7 under (B))
  - **Answer Distribution**: Biased (~75% C)

- **Evaluation Example**:
  - **Question**: "Which of the following can act as an intracellular buffer to limit pH changes when the rate of glycolysis is high?"
  - **Options**: (A) Carnosine, (B) Glucose, (C) Glutamine, (D) Amylase
  - **Steps**:
    1. High glycolysis produces lactic acid, lowering intracellular pH.
    2. A buffer is needed to stabilize pH inside cells.
    3. Carnosine is the option that can buffer intracellular pH.

    ...

    N: Therefore, the correct choice is carnosine.
  - **Answer**: A
  - **Answer Distribution**: Original (~uniform)
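The final step of both MMLU examples names the chosen option's text ("7", "carnosine") rather than its letter. The sketch below maps such a step back to an option letter. `extract_choice` is a hypothetical helper, and it assumes the last step uses exactly the wording "Therefore, the correct choice is X" as transcribed here.

```python
import re
from typing import Dict, Optional

# Hypothetical pattern: assumes the last reasoning step reads
# "Therefore, the correct choice is X." as in the transcribed examples.
FINAL_STEP = re.compile(r"Therefore, the correct choice is (.+?)\.?\s*$")


def extract_choice(reasoning: str, options: Dict[str, str]) -> Optional[str]:
    """Return the option letter named in the final step, or None if unmatched.

    `options` maps letters to option text, e.g. {"A": "Carnosine", ...}.
    The final step may name either the option text or the letter itself.
    """
    match = FINAL_STEP.search(reasoning.strip())
    if match is None:
        return None
    choice = match.group(1).strip().lower()
    for letter, text in options.items():
        if choice in (text.lower(), letter.lower()):
            return letter
    return None


options = {"A": "Carnosine", "B": "Glucose", "C": "Glutamine", "D": "Amylase"}
steps = (
    "1. High glycolysis produces lactic acid, lowering intracellular pH.\n"
    "2. A buffer is needed to stabilize pH inside cells.\n"
    "3. Carnosine is the option that can buffer intracellular pH.\n"
    "Therefore, the correct choice is carnosine."
)
print(extract_choice(steps, options))  # A
```

Matching on option text rather than position is what allows the reasoning's conclusion to be checked against the letter in the answer key.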

#### HotpotQA Section

- **Training Example**:
  - **Question**: "Wayne’s World featured the actor who was a member of what Chicago comedy troupe?"
  - **Context**:
    - [Second City Theatre] The Second City Theatre, founded in Chicago in 1959, is one of the most influential improvisational comedy theaters...
    - [Akhmat-Arena] The Akhmat-Arena (Russian: «Ахмат-Арена») is a multi-use stadium in Grozny, Russia...
    - [Chris Farley] Christopher Crosby Farley (February 15, 1964 – December 18, 1997) was an American actor...
  - **Answer**: Second City Theatre

- **Evaluation Example**:
  - **Question**: "Who designed the hotel that held the IFBB professional bodybuilding competition in September 1991?"
  - **Context**:
    - [2010 Ms. Olympia] The 2010 Ms. Olympia was an IFBB professional bodybuilding competition...
    - [1991 Ms. Olympia] The 1991 Ms. Olympia contest was an IFBB professional bodybuilding competition...
  - **Answer**: architect Michael Graves
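HotpotQA's released JSON stores each example's context as a list of `[title, sentences]` pairs and its gold evidence as `supporting_facts`, a list of `[title, sentence_index]` pairs. A minimal sketch of the training example in that layout, with a helper that separates supporting passages from distractors. The sentence text is abbreviated, `split_context` is a hypothetical helper, and the `supporting_facts` entries are assumed for illustration because the figure does not show them.

```python
# Training example in HotpotQA's distractor-setting layout (text abbreviated).
# ASSUMPTION: the supporting_facts below are illustrative, not taken from the figure.
record = {
    "question": "Wayne’s World featured the actor who was a member of what Chicago comedy troupe?",
    "answer": "Second City Theatre",
    "context": [
        ["Second City Theatre", ["The Second City Theatre, founded in Chicago in 1959, ..."]],
        ["Akhmat-Arena", ["The Akhmat-Arena ... is a multi-use stadium in Grozny, Russia ..."]],
        ["Chris Farley", ["Christopher Crosby Farley ... was an American actor ..."]],
    ],
    "supporting_facts": [["Chris Farley", 0], ["Second City Theatre", 0]],
}


def split_context(example):
    """Return (supporting_titles, distractor_titles) in context order."""
    gold_titles = {title for title, _ in example["supporting_facts"]}
    supporting = [title for title, _ in example["context"] if title in gold_titles]
    distractors = [title for title, _ in example["context"] if title not in gold_titles]
    return supporting, distractors


print(split_context(record))
```

Under these assumed supporting facts, the only distractor is the Akhmat-Arena passage.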

---

### Detailed Analysis

#### MMLU Training Example

- **Question**: Focuses on astronomical knowledge (Pleiades star cluster).

- **Options**: Candidate star counts (5, 7, 9, 12).

- **Steps**: Logical reasoning linking mythological names ("Seven Sisters") to the correct answer (7).

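- **Answer Distribution**: Labeled "Biased (~75% C)". Read alongside the evaluation label "Original (~uniform)", this describes the training split rather than a model's output: roughly 75% of training questions have C as the correct letter, a skew that was presumably introduced on purpose.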
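The figure does not say how the biased training split was produced. One hypothetical construction, sketched below under that assumption, moves the correct option to position C with probability 0.75 and otherwise to a uniformly chosen other position, swapping it with the distractor that occupied that slot so the option set itself is unchanged. `bias_split` and its parameters are illustrative names, not part of the source.

```python
import random

LETTERS = "ABCD"


def bias_split(examples, target="C", p=0.75, seed=0):
    """Hypothetical: reorder options so the correct answer sits at `target`
    with probability p, otherwise at a uniformly chosen other position.

    Each example is (options, answer_idx); returns new (options, answer_idx) pairs.
    """
    rng = random.Random(seed)
    target_idx = LETTERS.index(target)
    others = [i for i in range(len(LETTERS)) if i != target_idx]
    biased = []
    for options, answer_idx in examples:
        new_idx = target_idx if rng.random() < p else rng.choice(others)
        opts = list(options)
        # Swap the correct option into its new slot; the displaced option takes the old slot.
        opts[answer_idx], opts[new_idx] = opts[new_idx], opts[answer_idx]
        biased.append((opts, new_idx))
    return biased


# The Pleiades item with 7 as the correct option (index 1 in the transcribed order).
print(bias_split([(["5", "7", "9", "12"], 1)]))
```

Because only positions change, each question and its correct option text are untouched; the skew lives entirely in the answer letter.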

#### MMLU Evaluation Example

- **Question**: Biochemistry-focused (intracellular pH regulation).

- **Options**: Biochemical terms (Carnosine, Glucose, Glutamine, Amylase).

- **Steps**: Scientific reasoning about glycolysis and buffering mechanisms.

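- **Answer Distribution**: Labeled "Original (~uniform)": the evaluation split keeps MMLU's roughly uniform spread of correct letters. Here the correct answer is (A), so a model that learned to favor C from the training split could be pulled toward a wrong answer.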
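Labels such as "~75% C" and "~uniform" summarize how often each letter is the correct answer in a split. A short sketch computing that distribution and its total variation distance from uniform; the function names are hypothetical, and the distance metric is chosen here for illustration (the figure reports only approximate shares).

```python
from collections import Counter

LETTERS = "ABCD"


def letter_distribution(answers):
    """Fraction of examples whose correct answer is each letter."""
    counts = Counter(answers)
    n = len(answers)
    return {letter: counts[letter] / n for letter in LETTERS}


def tv_from_uniform(dist):
    """Total variation distance between `dist` and the uniform distribution over LETTERS."""
    uniform = 1 / len(LETTERS)
    return 0.5 * sum(abs(dist[letter] - uniform) for letter in LETTERS)


dist = letter_distribution(["C", "C", "C", "A"])
print(dist)                   # {'A': 0.25, 'B': 0.0, 'C': 0.75, 'D': 0.0}
print(tv_from_uniform(dist))  # 0.5
```

A split with 75% C and the remaining 25% spread evenly over A, B and D is also at distance 0.5 from uniform; a perfectly uniform split gives 0.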

#### HotpotQA Training Example

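- **Question**: Two-hop pop-culture question: identify the *Wayne’s World* actor, then the Chicago comedy troupe he belonged to.

- **Context**: Mixes the supporting passages (Chris Farley, a *Wayne’s World* cast member, and Second City Theatre, his troupe) with a distractor unrelated to the question (Akhmat-Arena, a stadium in Grozny).

- **Answer**: The answer span ("Second City Theatre") appears in the context, but reaching it requires linking the Chris Farley passage to the Second City Theatre passage.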
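To see why the second hop matters, consider a toy lexical-overlap scorer over the three training passages. This is an illustrative stand-in, not HotpotQA's method or any model's retriever; the stopword list and function names are assumptions.

```python
import re

# Small illustrative stopword list (an assumption, not a standard list).
STOPWORDS = {"the", "a", "an", "of", "in", "is", "was", "who", "what", "to", "and", "one", "most"}


def content_tokens(text):
    """Lowercased ASCII word tokens with stopwords removed."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def rank_passages(question, passages):
    """Rank (title, text) pairs by token overlap with the question, highest first."""
    q = content_tokens(question)
    scored = [(len(q & content_tokens(f"{title} {text}")), title) for title, text in passages]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


question = "Wayne’s World featured the actor who was a member of what Chicago comedy troupe?"
passages = [
    ("Second City Theatre", "The Second City Theatre, founded in Chicago in 1959, is one of "
     "the most influential improvisational comedy theaters"),
    ("Akhmat-Arena", "The Akhmat-Arena (Russian: «Ахмат-Арена») is a multi-use stadium in Grozny, Russia"),
    ("Chris Farley", "Christopher Crosby Farley (February 15, 1964 – December 18, 1997) "
     "was an American actor"),
]
print(rank_passages(question, passages))
# [(2, 'Second City Theatre'), (1, 'Chris Farley'), (0, 'Akhmat-Arena')]
```

On these excerpts the distractor ranks last, but the bridge passage matches only on "actor": nothing in the question names Chris Farley, so connecting him to *Wayne’s World* and to Second City requires the multi-hop reasoning the dataset is built to test.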

#### HotpotQA Evaluation Example

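- **Question**: Event-to-venue question: identify the September 1991 IFBB competition, then the hotel that hosted it and that hotel's designer.

- **Context**: Pairs the relevant passage (1991 Ms. Olympia) with a distractor from a different year (2010 Ms. Olympia).

- **Answer**: Requires matching "September 1991" to the 1991 Ms. Olympia, then following its venue to the architect (Michael Graves). The context excerpts shown are truncated before the venue details.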

---

### Key Observations

1. **Biased vs. Uniform Distributions**:

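   - The MMLU training split has a skewed answer distribution (~75% C), while the evaluation split keeps the original, roughly uniform one. The mismatch is a property of the data, not of model performance: any preference for C learned during training would lower evaluation accuracy.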

2. **Distractor Passages**:

   - Both HotpotQA examples mix supporting passages with distractors (Akhmat-Arena in training, 2010 Ms. Olympia in evaluation), so the relevant passages must be identified before answering.

3. **Step-by-Step Reasoning**:

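   - The MMLU examples spell out numbered reasoning steps ending in a final choice. The HotpotQA examples show only context and answer, although answering them still requires chaining facts across passages.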

---

### Interpretation

The figure contrasts how language models are trained and evaluated on two kinds of tasks:

- **MMLU** tests general knowledge across domains (astronomy, biochemistry).

- **HotpotQA** evaluates multi-hop reasoning across several passages and the ability to ignore distractors.

- **Answer Distributions** describe the data, not model outputs: the MMLU training split is skewed toward C, while the evaluation split keeps the original, roughly uniform distribution, so the setup can reveal whether a model has absorbed the training skew.

- The inclusion of distractors in HotpotQA highlights the challenge of distinguishing relevant from irrelevant context, a critical skill for real-world applications.
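If the point of pairing a skewed training split with a uniform evaluation split is to detect whether a model picked up the skew, one hypothetical check splits evaluation accuracy by whether the gold letter is C and reports how often the model predicts C at all. The function and its output keys are illustrative names, not from the source.

```python
def _mean(values):
    """Mean of a list of numbers/booleans; NaN for an empty list."""
    return sum(values) / len(values) if values else float("nan")


def accuracy_by_gold_letter(preds, golds, biased="C"):
    """Hypothetical bias check on a split with a roughly uniform gold distribution.

    Returns accuracy on items whose gold letter is `biased`, accuracy on all other
    items, and the fraction of predictions equal to `biased`.
    """
    on_target = [p == g for p, g in zip(preds, golds) if g == biased]
    off_target = [p == g for p, g in zip(preds, golds) if g != biased]
    return {
        "acc_gold_is_biased": _mean(on_target),
        "acc_gold_not_biased": _mean(off_target),
        "pred_rate_biased": _mean([p == biased for p in preds]),
    }


print(accuracy_by_gold_letter(["C", "C", "C", "A"], ["A", "B", "C", "A"]))
```

With a uniform gold distribution, C should be correct about 25% of the time; a prediction rate well above that, together with a large accuracy gap between the two subsets, would suggest the model is answering from position rather than content.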

Presenting training and evaluation examples side by side makes the shift between the two conditions explicit, and the MMLU reasoning steps show how each answer is derived.