
## Bar Charts: Prediction Flip Rates for Llama-3.2 Models

### Overview

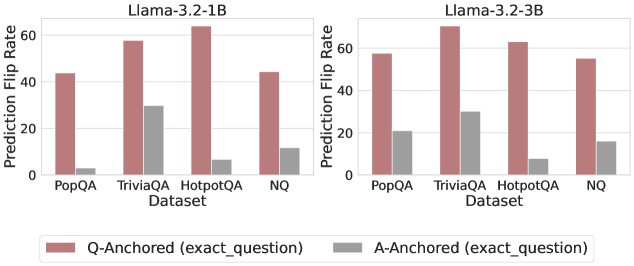

The image displays two side-by-side bar charts comparing the "Prediction Flip Rate" of two language models (Llama-3.2-1B and Llama-3.2-3B) across four question-answering datasets. Each chart compares two experimental conditions: "Q-Anchored (exact_question)" and "A-Anchored (exact_question)".

### Components/Axes

* **Chart Titles (Subtitles):**
  * Left Chart: `Llama-3.2-1B`
  * Right Chart: `Llama-3.2-3B`
* **Y-Axis (Both Charts):**
  * Label: `Prediction Flip Rate`
  * Scale: Linear, starting at 0, with major tick marks at 0, 20, 40, and 60. The plotted range extends somewhat above 60, since several bars (up to ~69) exceed the top tick.
* **X-Axis (Both Charts):**
  * Label: `Dataset`
  * Categories (from left to right): `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`.
* **Legend:**
  * Position: Centered at the bottom of the entire image, below both charts.
  * Items:
    * A reddish-brown (terracotta) bar labeled `Q-Anchored (exact_question)`
    * A gray bar labeled `A-Anchored (exact_question)`

### Detailed Analysis

**Chart 1: Llama-3.2-1B (Left)**

* **Trend Verification:** For all four datasets, the Q-Anchored (reddish-brown) bar is substantially taller than the A-Anchored (gray) bar, indicating a higher flip rate.
* **Data Points (Approximate Values):**
  * **PopQA:**
    * Q-Anchored: ~44
    * A-Anchored: ~3
  * **TriviaQA:**
    * Q-Anchored: ~58
    * A-Anchored: ~30
  * **HotpotQA:**
    * Q-Anchored: ~64 (the highest value in this chart)
    * A-Anchored: ~7
  * **NQ:**
    * Q-Anchored: ~45
    * A-Anchored: ~12

**Chart 2: Llama-3.2-3B (Right)**

* **Trend Verification:** The same pattern holds: Q-Anchored bars are consistently taller than A-Anchored bars across all datasets. Values for the 3B model are generally higher than for the 1B model, most clearly on `PopQA`; `HotpotQA` is the exception, with nearly identical values in both models (~63 vs. ~64 Q-Anchored, ~8 vs. ~7 A-Anchored).
* **Data Points (Approximate Values):**
  * **PopQA:**
    * Q-Anchored: ~58
    * A-Anchored: ~21
  * **TriviaQA:**
    * Q-Anchored: ~69 (the highest value in the entire image)
    * A-Anchored: ~30
  * **HotpotQA:**
    * Q-Anchored: ~63
    * A-Anchored: ~8
  * **NQ:**
    * Q-Anchored: ~55
    * A-Anchored: ~16
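
The approximate readings above can be collected into a small Python structure to check the trend statements directly. This is a sketch only: the dictionary layout and the helper names `q_minus_a_gaps` and `size_deltas` are illustrative, and the numbers are the visual estimates listed above, not exact values from the source.

```python
# Approximate bar heights read off the two charts (visual estimates, not exact values).
FLIP_RATES = {
    "Llama-3.2-1B": {
        "PopQA": {"Q": 44, "A": 3},
        "TriviaQA": {"Q": 58, "A": 30},
        "HotpotQA": {"Q": 64, "A": 7},
        "NQ": {"Q": 45, "A": 12},
    },
    "Llama-3.2-3B": {
        "PopQA": {"Q": 58, "A": 21},
        "TriviaQA": {"Q": 69, "A": 30},
        "HotpotQA": {"Q": 63, "A": 8},
        "NQ": {"Q": 55, "A": 16},
    },
}


def q_minus_a_gaps(model: str) -> dict[str, int]:
    """Q-Anchored minus A-Anchored flip rate per dataset, in percentage points."""
    return {ds: v["Q"] - v["A"] for ds, v in FLIP_RATES[model].items()}


def size_deltas(condition: str) -> dict[str, int]:
    """3B minus 1B flip rate per dataset for one condition ("Q" or "A")."""
    small = FLIP_RATES["Llama-3.2-1B"]
    large = FLIP_RATES["Llama-3.2-3B"]
    return {ds: large[ds][condition] - small[ds][condition] for ds in small}


for model in FLIP_RATES:
    print(model, "Q - A gaps:", q_minus_a_gaps(model))
print("3B - 1B (Q-Anchored):", size_deltas("Q"))
print("3B - 1B (A-Anchored):", size_deltas("A"))
```

With these estimates, every Q-Anchored minus A-Anchored gap is positive (the smallest is ~28 points, `TriviaQA` on the 1B model), and the only negative 3B-minus-1B change is `HotpotQA` Q-Anchored (~-1 point), which is within the precision of reading bar heights.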

### Key Observations

1. **Dominant Pattern:** The "Q-Anchored" condition results in a substantially higher Prediction Flip Rate than the "A-Anchored" condition for every dataset and both model sizes.

2. **Dataset Sensitivity:** `TriviaQA` and `HotpotQA` elicit the two highest Q-Anchored flip rates for both models. The lowest Q-Anchored flip rate belongs to `PopQA` for the 1B model (~44) and to `NQ` for the 3B model (~55).

3. **Model Size Effect:** The larger Llama-3.2-3B model generally exhibits higher flip rates than the 1B model. The increase is largest for `PopQA` (Q-Anchored ~44 to ~58; A-Anchored ~3 to ~21), while `HotpotQA` is essentially unchanged (~64 vs. ~63 Q-Anchored).

4. **A-Anchored Flip Rates:** The A-Anchored condition yields comparatively low flip rates, from ~3 to ~21, on every dataset except `TriviaQA`, which reaches ~30 in both models.
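
Observations 2 and 4 are rankings, which the following sketch reproduces from the same approximate readings. The dictionaries and the `rank_datasets` helper are illustrative names, and ties are not handled specially because none occur in these estimates.

```python
# Approximate flip rates per condition (visual estimates read off the charts).
Q_ANCHORED = {
    "Llama-3.2-1B": {"PopQA": 44, "TriviaQA": 58, "HotpotQA": 64, "NQ": 45},
    "Llama-3.2-3B": {"PopQA": 58, "TriviaQA": 69, "HotpotQA": 63, "NQ": 55},
}
A_ANCHORED = {
    "Llama-3.2-1B": {"PopQA": 3, "TriviaQA": 30, "HotpotQA": 7, "NQ": 12},
    "Llama-3.2-3B": {"PopQA": 21, "TriviaQA": 30, "HotpotQA": 8, "NQ": 16},
}


def rank_datasets(rates: dict[str, int]) -> list[str]:
    """Dataset names sorted from highest to lowest flip rate."""
    return sorted(rates, key=lambda ds: rates[ds], reverse=True)


for model in Q_ANCHORED:
    q_rank = rank_datasets(Q_ANCHORED[model])
    a_rank = rank_datasets(A_ANCHORED[model])
    print(f"{model}: Q top two={q_rank[:2]}, Q lowest={q_rank[-1]}, A highest={a_rank[0]}")
```

The lowest Q-Anchored dataset differs by model (`PopQA` for 1B, `NQ` for 3B), while `TriviaQA` tops the A-Anchored ranking for both.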

### Interpretation

This data suggests a strong asymmetry in model sensitivity based on anchoring. The "Prediction Flip Rate" likely measures how often a model's answer changes when a specific component (the question or the answer) is held constant ("anchored") while other parts of the input vary.
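
The source does not define the metric, so the following is only a minimal sketch of one plausible reading of the description above: the flip rate as the percentage of examples whose predicted answer changes between a baseline run and a run in which everything except the anchored component is varied. The function name `prediction_flip_rate` and the exact-string comparison are assumptions; evaluations of this kind often normalize answers (case, punctuation, articles) before comparing.

```python
from collections.abc import Sequence


def prediction_flip_rate(baseline: Sequence[str], perturbed: Sequence[str]) -> float:
    """Percentage of examples whose prediction differs between two runs.

    Hypothetical definition: the source does not specify how the metric is computed.
    Predictions are compared as exact strings, with no answer normalization.
    """
    if len(baseline) != len(perturbed):
        raise ValueError("baseline and perturbed must have the same length")
    if not baseline:
        raise ValueError("need at least one example")
    flips = sum(b != p for b, p in zip(baseline, perturbed))
    return 100.0 * flips / len(baseline)


# Toy example: 2 of 5 answers change after the perturbation -> 40.0
print(prediction_flip_rate(["Paris", "1969", "Einstein", "Nile", "Au"],
                           ["Paris", "1968", "Einstein", "Amazon", "Au"]))
```

Under this reading, a Q-Anchored flip rate of ~60 would mean that roughly three in five answers change when the exact question is kept and the rest of the input is altered.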

* **Q-Anchored High Sensitivity:** The high Q-Anchored flip rates suggest that, with the exact question fixed, the model's prediction is highly sensitive to other changes in the input. This could imply that the model's output depends heavily on contextual details beyond the literal question phrasing.

* **A-Anchored Low Sensitivity:** Conversely, the low A-Anchored flip rates suggest that, with the exact answer fixed, the model's prediction (presumably of something else, such as a supporting fact or the question itself) is much more stable. This may reflect a tighter coupling between the answer and its supporting context in the model's internal representation.

* **Dataset Characteristics:** The particularly high Q-Anchored flip rates for `TriviaQA` and `HotpotQA` may reflect the nature of these datasets. They might contain more ambiguous or multi-faceted questions where contextual cues heavily sway the model's final output, even when the question text is identical.

* **Model Scaling:** The generally higher flip rates of the 3B model could mean that larger models form more context-dependent representations, making them more susceptible to these anchoring manipulations. On this measure, the larger model is less consistent than the smaller one, not more. `HotpotQA`, where both models show nearly the same rates, does not follow this trend.

**Uncertainty Note:** All numerical values are visual approximations extracted from the bar heights relative to the y-axis scale. The exact values are not provided in the image.