
## Hardware Architecture Diagram: Neural Network Accelerator Dataflow

### Overview

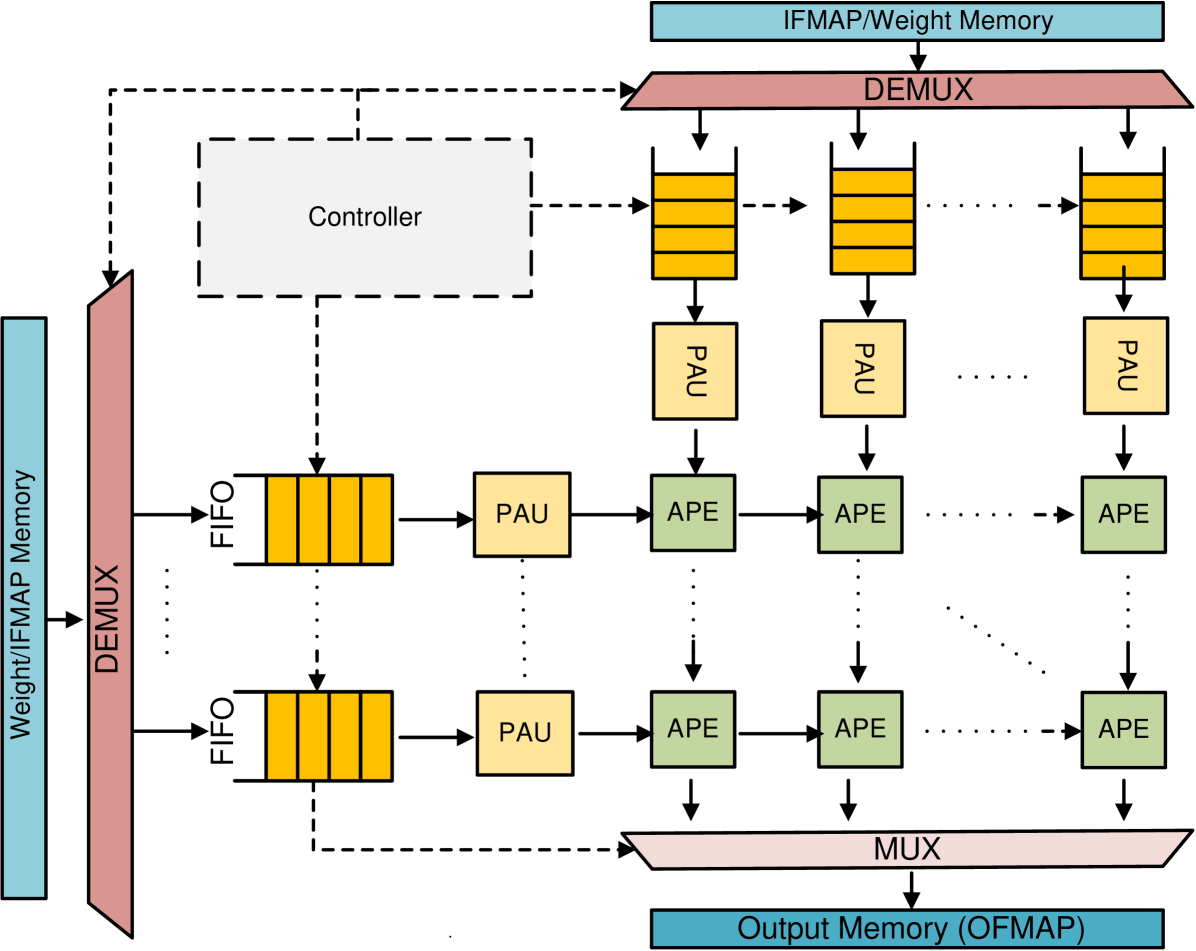

The image displays a block diagram of a specialized hardware architecture, likely for accelerating neural network computations (e.g., convolutional layers). It illustrates the data flow and control paths between memory units, processing elements, and control logic. The diagram is schematic, using colored blocks and arrows to represent components and their interconnections.

### Components

The diagram is composed of several distinct functional blocks, connected by solid arrows (data flow) and dashed arrows (control signals).

**Memory Blocks (Blue):**

1. **IFMAP/Weight Memory** (Top, horizontal): Stores input feature maps and weights.

2. **Weight/IFMAP Memory** (Left, vertical): Another memory bank for weights and input feature maps.

3. **Output Memory (OFMAP)** (Bottom, horizontal): Stores the output feature maps.

**Routing & Buffering Components:**

1. **DEMUX** (Demultiplexer, Pink, Trapezoid):

* One at the top, receiving data from "IFMAP/Weight Memory".

* One on the left, receiving data from "Weight/IFMAP Memory".

* Function: Routes incoming data streams to multiple downstream paths.

2. **FIFO** (First-In-First-Out Buffer, Yellow, Rectangle with vertical bars):

* Multiple instances shown in a column on the left side, fed by the left DEMUX.

* Function: Acts as a queue to buffer data before it enters the processing array.

3. **MUX** (Multiplexer, Pink, Trapezoid, inverted relative to DEMUX):

* Located at the bottom, collecting data from the processing array.

* Function: Aggregates results from multiple processing paths into a single stream for the output memory.
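The routing and buffering roles described above can be sketched in a few lines of Python. This is only an illustrative model: the diagram does not state the routing policy, so round-robin distribution and collection are assumptions, and the names `demux` and `mux` are hypothetical helpers rather than anything taken from the design.

```python
from collections import deque

def demux(stream, num_lanes):
    """Split one input stream across num_lanes FIFO queues.

    Round-robin distribution is an assumption; the diagram does not
    say how the DEMUX chooses a downstream path.
    """
    fifos = [deque() for _ in range(num_lanes)]
    for i, item in enumerate(stream):
        fifos[i % num_lanes].append(item)
    return fifos

def mux(fifos):
    """Merge several FIFO queues into one stream, one item per lane per pass."""
    out = []
    while any(fifos):              # an empty deque is falsy
        for q in fifos:
            if q:
                out.append(q.popleft())
    return out

lanes = demux(range(7), num_lanes=3)
lane_contents = [list(q) for q in lanes]
print(lane_contents)   # [[0, 3, 6], [1, 4], [2, 5]]

merged = mux(lanes)
print(merged)          # [0, 1, 2, 3, 4, 5, 6]
```

Each `deque` stands in for one FIFO: items leave in the order they arrived, which is the property that lets the buffers decouple memory reads from the timing of the array.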

**Processing Elements:**

1. **PAU** (expansion not given in the diagram, possibly "Processing Array Unit"; Yellow, Square):

* Multiple instances. Some are positioned vertically above the APE grid, fed by the top DEMUX via yellow buffers. Others are positioned horizontally to the left of the APE grid, fed by the FIFOs.

* Function: Likely performs initial processing or weight/activation preparation.

2. **APE** (Array Processing Element, Green, Square):

* Arranged in a 2D grid (matrix). The diagram shows a 2x3 grid with ellipses (`...`) indicating it extends further in both dimensions.

* Function: The core computational units, likely performing multiply-accumulate (MAC) operations in a systolic or similar parallel array fashion.
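As a minimal sketch of what a multiply-accumulate element does, assuming each APE holds a running sum and forwards its operands to its neighbours (neither detail is confirmed by the diagram), a single cell might be modelled as follows. The class name `MacCell` is hypothetical.

```python
class MacCell:
    """Hypothetical model of one processing element (not taken from the diagram)."""

    def __init__(self):
        self.acc = 0  # running partial sum held inside the cell

    def step(self, activation, weight):
        """Accumulate one product and pass both operands on unchanged."""
        self.acc += activation * weight
        return activation, weight

cell = MacCell()
for a, w in zip([1, 2, 3], [4, 5, 6]):
    cell.step(a, w)

print(cell.acc)  # 32, the dot product 1*4 + 2*5 + 3*6
```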

**Control:**

1. **Controller** (White box with dashed outline, top-left):

* Sends control signals (dashed arrows) to the top DEMUX, the yellow buffers feeding the top PAUs, the FIFOs on the left, and the MUX at the bottom.

* Function: Orchestrates the entire dataflow, managing the timing and routing of data through the system.

**Data Flow & Connectivity:**

* **Primary Data Path 1 (Vertical):** `IFMAP/Weight Memory` -> Top `DEMUX` -> Yellow Buffers -> `PAU` -> `APE` (top row) -> `APE` (subsequent rows) -> `MUX` -> `Output Memory (OFMAP)`.

* **Primary Data Path 2 (Horizontal):** `Weight/IFMAP Memory` -> Left `DEMUX` -> `FIFO` -> `PAU` -> `APE` (left column) -> `APE` (subsequent columns) -> `MUX` -> `Output Memory (OFMAP)`.

* **Control Path:** `Controller` -> (dashed lines) -> Top DEMUX, Yellow Buffers, FIFOs, MUX.

* The `APE` grid receives data from both the top (via PAUs) and the left (via PAUs), suggesting a two-dimensional dataflow where weights and activations might enter from different sides. The ellipses (`...`) between columns and rows of APEs indicate a scalable, regular array structure.
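The two-dimensional dataflow described above can be illustrated with a small cycle-by-cycle simulation. The sketch below assumes an output-stationary systolic array, in which one operand matrix streams in from the left, the other from the top, and each cell keeps its own accumulator. The diagram does not say which dataflow variant the design uses, so this choice, the input skewing, and the function name `systolic_matmul` are all assumptions made for illustration.

```python
def systolic_matmul(A, B):
    """Compute A @ B on a simulated M x N grid of multiply-accumulate cells.

    Rows of A enter from the left and columns of B from the top, each
    delayed by its row or column index so matching operands meet in the
    right cell. Output-stationary accumulation is an assumption.
    """
    M, K, N = len(A), len(B), len(B[0])
    acc = [[0] * N for _ in range(M)]     # one accumulator per cell
    a_reg = [[0] * N for _ in range(M)]   # operand travelling rightwards
    b_reg = [[0] * N for _ in range(M)]   # operand travelling downwards

    for t in range(K + M + N - 2):        # cycles until the last product lands
        # Walk from bottom-right to top-left so each cell still sees the
        # previous-cycle value of its left and upper neighbours.
        for i in reversed(range(M)):
            for j in reversed(range(N)):
                if j == 0:
                    k = t - i             # row i is skewed by i cycles
                    a_in = A[i][k] if 0 <= k < K else 0
                else:
                    a_in = a_reg[i][j - 1]
                if i == 0:
                    k = t - j             # column j is skewed by j cycles
                    b_in = B[k][j] if 0 <= k < K else 0
                else:
                    b_in = b_reg[i - 1][j]
                a_reg[i][j] = a_in
                b_reg[i][j] = b_in
                acc[i][j] += a_in * b_in
    return acc

result = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(result)  # [[19, 22], [43, 50]]
```

The skewed injection plays the role that the FIFOs and buffers would plausibly play in hardware: it delays each row and column so that element `k` of a row meets element `k` of a column in the same cell on the same cycle.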

### Detailed Analysis

* **Spatial Layout:** The diagram is organized with memory at the periphery (top, left, bottom) and the processing core (PAUs and APE grid) in the center. The Controller is positioned in the upper-left quadrant, overseeing the system.

* **Scalability Indicators:** Ellipses (`...`) appear between the columns of yellow buffers/PAUs fed by the top DEMUX, between the rows of FIFOs/PAUs fed by the left DEMUX, and between the columns and rows of the APE grid. They denote that the number of parallel processing paths (columns/rows) is variable and larger than the two or three instances drawn.

* **Color Coding:**

* **Blue:** Memory (Storage).

* **Pink:** Routing (DEMUX, MUX).

* **Yellow:** Buffering/Pre-processing (FIFOs, the buffers below the top DEMUX, and PAUs).

* **Green:** Core Computation (APE).

* **White (Dashed):** Control Logic.

### Key Observations

1. **Systolic Array Characteristic:** The 2D grid of APEs, with data flowing in from two orthogonal directions (top and left) and results flowing out at the bottom through the MUX, is a hallmark of a systolic array architecture, commonly used for matrix multiplication in neural networks.

2. **Dual Memory Banks:** The system has two separate memories ("IFMAP/Weight Memory" and "Weight/IFMAP Memory"), which may allow input activations and weights to be fetched simultaneously so that the array is fed without contention. The mirrored names suggest that either bank can hold either operand type.

3. **Explicit Buffering:** The presence of dedicated FIFOs and yellow buffers before the PAUs/APEs highlights the importance of data staging and synchronization in this pipelined architecture.

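4. **Centralized Control:** A single "Controller" drives the top DEMUX, the yellow buffers, the FIFOs, and the MUX, which is consistent with a globally synchronized design. The listed control connections do not include the left DEMUX or the APEs, so whether the Controller also schedules computation inside the APEs cannot be determined from the diagram.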

### Interpretation

This diagram represents the **dataflow architecture of a hardware accelerator for deep learning**, likely optimized for operations such as convolution. The design prioritizes parallelism and pipelining.

* **What it demonstrates:** The architecture shows how a large computational task (e.g., a convolution) is broken down and mapped onto a grid of simple processing elements (APEs). Data (activations and weights) is streamed from memory, routed to the correct starting points in the array, and flows through the APEs in a coordinated manner. Each APE performs a small part of the overall computation, and results are aggregated as they propagate.

* **Relationships:** The memory systems feed the array, the DEMUX/MUX and buffers manage the data traffic, and the Controller acts as the conductor, ensuring all parts work in lockstep. The PAUs likely handle data formatting or preliminary calculations before data enters the main APE grid.

* **Notable Implications:** The scalability (ellipses) suggests this architecture can be tailored for different performance targets by instantiating more APEs. The dual memory paths aim to maximize throughput by keeping the compute array constantly supplied with data. The design is typical of domain-specific architectures (DSAs) that achieve high efficiency by matching the hardware structure to the regular, parallel patterns of neural network computations.
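One common way to map a convolution onto a grid of multiply-accumulate elements is to lower it to a matrix product, often called im2col. The diagram does not say which mapping this accelerator uses, so the sketch below is only an example of the general idea; the function names `im2col` and `conv2d_as_matmul` are hypothetical, and the code handles a single channel with stride 1 and no padding.

```python
def im2col(image, kh, kw):
    """Flatten every kh x kw patch of a 2D image into one row."""
    H, W = len(image), len(image[0])
    rows = []
    for y in range(H - kh + 1):
        for x in range(W - kw + 1):
            rows.append([image[y + dy][x + dx]
                         for dy in range(kh) for dx in range(kw)])
    return rows

def conv2d_as_matmul(image, kernel):
    """Cross-correlation (the deep-learning 'convolution') as patch-by-kernel dot products."""
    kh, kw = len(kernel), len(kernel[0])
    patches = im2col(image, kh, kw)
    flat_kernel = [v for row in kernel for v in row]
    out_w = len(image[0]) - kw + 1
    # Each dot product is the kind of work a MAC array performs in parallel.
    flat = [sum(p * k for p, k in zip(patch, flat_kernel)) for patch in patches]
    return [flat[i:i + out_w] for i in range(0, len(flat), out_w)]

image = [[1, 2, 3],
         [4, 5, 6],
         [7, 8, 9]]
kernel = [[1, 0],
          [0, 1]]

output = conv2d_as_matmul(image, kernel)
print(output)  # [[6, 8], [12, 14]]
```

After lowering, every output pixel is an independent dot product, which is the regular, parallel pattern that a 2D array of processing elements is well suited to.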