## Diagram: Two-Phase Token Generation Optimization Process

### Overview

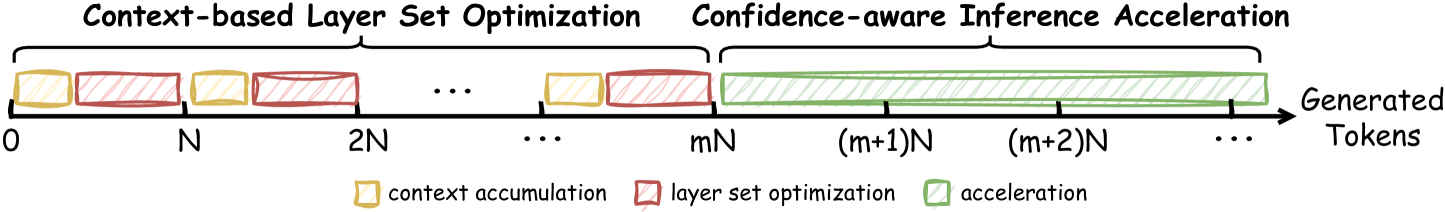

The diagram shows a two-phase process for accelerating token generation, likely in an autoregressive language model. Both phases are laid out along a horizontal timeline labeled "Generated Tokens." The first phase alternates between context accumulation and layer set optimization; the second is a single, continuous acceleration phase.

### Components/Axes

* **Main Title/Process Labels:**

    * Left Section: "Context-based Layer Set Optimization"

    * Right Section: "Confidence-aware Inference Acceleration"

* **Horizontal Axis:** Labeled "Generated Tokens" with an arrow pointing right, indicating progression. Key markers are placed at intervals: `0`, `N`, `2N`, `...`, `mN`, `(m+1)N`, `(m+2)N`, `...`.

* **Legend (Bottom Center):**

    * Yellow hatched rectangle: "context accumulation"

    * Red hatched rectangle: "layer set optimization"

    * Green hatched rectangle: "acceleration"

### Detailed Analysis

The diagram is segmented into two distinct phases along the token generation timeline:

1. **Phase 1: Context-based Layer Set Optimization (From token 0 to mN)**

    * This phase consists of a repeating pattern of two alternating processes.

    * **Process A (Yellow):** "context accumulation" blocks appear first in each cycle.

    * **Process B (Red):** "layer set optimization" blocks follow each context accumulation block.

    * The pattern is: Yellow -> Red -> Yellow -> Red -> ... This cycle repeats multiple times, as indicated by the ellipsis (`...`) between the `2N` and `mN` markers.

    * The final cycle in this phase ends with a red "layer set optimization" block that concludes at the `mN` token marker.

2. **Phase 2: Confidence-aware Inference Acceleration (From token mN onward)**

    * Starting precisely at the `mN` token marker, a single, continuous green "acceleration" block begins.

    * This green block extends horizontally past the `(m+1)N` and `(m+2)N` markers and continues indefinitely, as indicated by the final ellipsis (`...`).

    * This represents a sustained operational mode that follows the initial optimization phase.
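
The timeline described above can be sketched as a small lookup from token index to legend label. This is only an illustration of the layout, not the authors' implementation: it assumes one yellow/red cycle per `N`-token window, and the function names and the `accumulate_tokens` split point are hypothetical, since the diagram does not state how a window is divided.

```python
from typing import List, Tuple


def phase_at(token_index: int, n: int, m: int, accumulate_tokens: int) -> str:
    """Return the legend label active while generating `token_index`.

    Assumption (not stated in the diagram): each N-token window holds one
    cycle, with the first `accumulate_tokens` tokens spent on context
    accumulation and the remainder on layer set optimization.
    """
    if token_index < 0:
        raise ValueError("token_index must be non-negative")
    if not 0 < accumulate_tokens < n:
        raise ValueError("accumulate_tokens must be strictly between 0 and n")
    if token_index >= m * n:
        # Phase 2: one continuous block from the mN marker onward.
        return "acceleration"
    # Phase 1: position inside the current N-token window.
    offset = token_index % n
    if offset < accumulate_tokens:
        return "context accumulation"
    return "layer set optimization"


def timeline(total_tokens: int, n: int, m: int,
             accumulate_tokens: int) -> List[Tuple[int, int, str]]:
    """Collapse per-token labels into (start, end, label) blocks, end exclusive."""
    segments: List[Tuple[int, int, str]] = []
    for i in range(total_tokens):
        label = phase_at(i, n, m, accumulate_tokens)
        if segments and segments[-1][2] == label:
            start, _, _ = segments[-1]
            segments[-1] = (start, i + 1, label)  # extend the current block
        else:
            segments.append((i, i + 1, label))    # start a new block
    return segments
```

With the placeholder values `n=8`, `m=3`, `accumulate_tokens=6`, `timeline(40, 8, 3, 6)` yields three yellow/red pairs followed by a single acceleration block covering tokens 24 through 39, which mirrors the block layout in the figure.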

### Key Observations

* **Clear Phase Transition:** There is a definitive handoff point at `mN` generated tokens, where the system switches from an alternating optimization cycle to a continuous acceleration mode.

* **Iterative vs. Continuous:** The first phase is iterative, cycling between two processes; the second phase is a single uninterrupted block with no further optimization steps shown.

* **Spatial Grounding:** The legend is positioned at the bottom center of the diagram. The color coding is consistent: all yellow blocks are "context accumulation," all red blocks are "layer set optimization," and the single green block is "acceleration."

* **Temporal Structure:** The marker spacing of `N` suggests the processes operate on chunks or windows of `N` tokens. If each window holds one accumulation-and-optimization cycle, `m` is the number of full cycles completed before acceleration begins.

### Interpretation

This diagram outlines a strategy to improve the efficiency of sequential token generation, a core process in large language models. The underlying logic suggests:

1. **Investment in Setup:** The initial "Context-based Layer Set Optimization" phase is an investment. By repeatedly accumulating context and using it to optimize which layers of the neural network are used (or how they are configured), the system presumably settles on a layer set suited to the current context. This is likely a form of dynamic model pruning or conditional computation.

2. **Payoff in Speed:** Once this optimized state is achieved (at token `mN`), the system enters the "Confidence-aware Inference Acceleration" phase. The term "confidence-aware" implies the acceleration mechanism may be gated by the model's predictive confidence, allowing it to skip computations or use faster pathways when certain. The continuous green block signifies that this optimized, faster generation mode is maintained for all subsequent tokens.

3. **Overall Goal:** The process aims to reduce the average computational cost per token. It front-loads some overhead (the optimization cycles) to achieve a lower, sustained cost for the majority of the generation process. This is particularly valuable for generating long sequences, where the acceleration phase dominates.
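
One way a "confidence-aware" gate could work is early exit: stop evaluating layers as soon as the next-token distribution is confident enough. The sketch below illustrates that general idea only. The diagram does not say early exit is the mechanism used, and the function `pick_token`, the per-layer logit readouts, and the `threshold` parameter are all assumptions made for illustration.

```python
import math
from typing import Iterable, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def pick_token(logits_per_layer: Iterable[Sequence[float]],
               threshold: float) -> Tuple[int, int]:
    """Return (token_id, layers_used) for one generation step.

    `logits_per_layer` is a hypothetical stream of next-token logits, one
    readout per layer in the optimized layer set. It is consumed lazily, so
    layers after the exit point are never evaluated.
    """
    token_id, depth = -1, 0
    for logits in logits_per_layer:
        depth += 1
        probs = softmax(logits)
        confidence = max(probs)
        token_id = probs.index(confidence)
        if confidence >= threshold:
            break  # confident enough: skip the remaining layers
    if depth == 0:
        raise ValueError("need at least one layer readout")
    return token_id, depth
```

If no layer reaches the threshold, the function falls back to the last layer's prediction, so a stricter threshold trades speed for full-depth computation.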

**Note:** The diagram is conceptual and does not provide specific numerical data, performance metrics, or details on the algorithms used for "layer set optimization" or "acceleration." It illustrates the high-level workflow and temporal relationship between the components.
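
Because the diagram gives no numbers, the front-loading trade-off described under "Overall Goal" can only be illustrated with placeholder values. The following toy model assumes a constant per-token cost during the first `mN` tokens and a lower constant cost afterwards; the function names and all cost parameters are hypothetical and are not measurements from any system.

```python
import math
from typing import Optional


def average_cost_per_token(total_tokens: int, n: int, m: int,
                           warmup_cost: float, accelerated_cost: float) -> float:
    """Mean per-token cost when the first m*n tokens run in the
    optimization phase and all later tokens run accelerated."""
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    warmup_tokens = min(total_tokens, m * n)
    accelerated_tokens = total_tokens - warmup_tokens
    total = warmup_tokens * warmup_cost + accelerated_tokens * accelerated_cost
    return total / total_tokens


def break_even_tokens(n: int, m: int, warmup_cost: float,
                      accelerated_cost: float,
                      baseline_cost: float) -> Optional[int]:
    """Smallest sequence length at which the two-phase scheme is no more
    expensive than an unoptimized baseline, or None if it never is."""
    if warmup_cost <= baseline_cost:
        return 1  # the warm-up phase is never slower than the baseline
    if accelerated_cost >= baseline_cost:
        return None  # acceleration can never repay the warm-up overhead
    # Solve m*n*c_w + (T - m*n)*c_a <= T*c_b for T.
    ratio = (warmup_cost - accelerated_cost) / (baseline_cost - accelerated_cost)
    return math.ceil(m * n * ratio)
```

For example, with `n=16`, `m=4`, a warm-up cost of 1.5, an accelerated cost of 0.5, and a baseline cost of 1.0, the scheme breaks even at 128 tokens, and at 1024 tokens the average cost falls to 0.5625. Under these made-up values the benefit only appears for sequences well beyond the `mN` handoff point.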