## Data Table: LM Answer Evaluation

### Overview

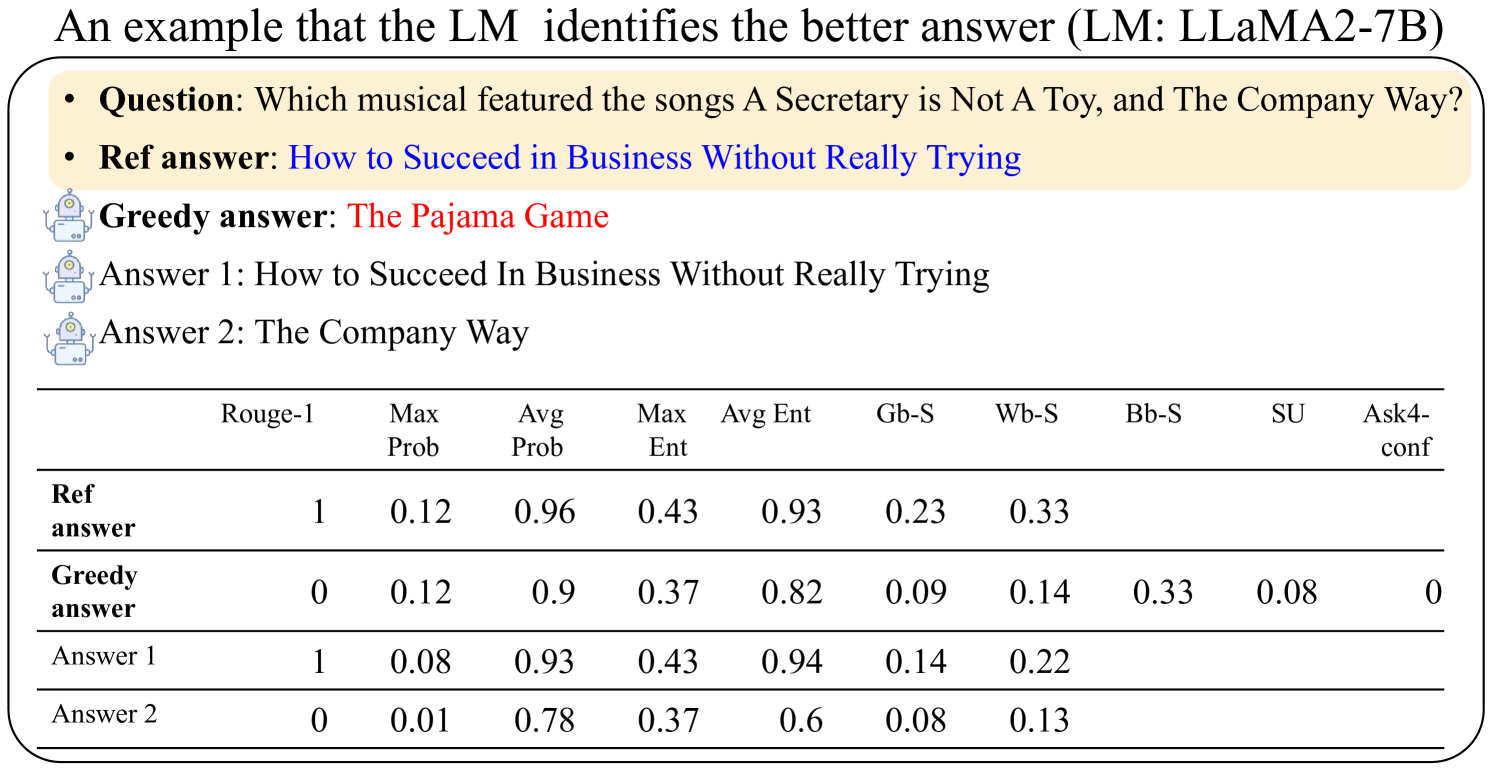

The image presents a data table comparing the performance of different Large Language Model (LLM) answers to a specific question. The table evaluates the answers based on several metrics, including Rouge-1, Max Prob, Avg Prob, Max Ent, Avg Ent, Gb-S, Wb-S, Bb-S, SU, and Ask4-conf. The question being answered is "Which musical featured the songs A Secretary Is Not A Toy, and The Company Way?".

### Components/Axes

The table has the following structure:

* **Rows:** Represent different answers: "Ref answer", "Greedy answer", "Answer 1", and "Answer 2".

* **Columns:** Represent evaluation metrics: "Rouge-1", "Max Prob", "Avg Prob", "Max Ent", "Avg Ent", "Gb-S", "Wb-S", "Bb-S", "SU", and "Ask4-conf".

* **Header:** The first row contains the column headers, defining the metrics being evaluated.

* **Question:** The question being answered is stated above the table.

* **Answers:** The correct answer ("Ref answer") and the LLM generated answers are listed.

### Detailed Analysis or Content Details

Here's a reconstruction of the data table's content:

| | Rouge-1 | Max Prob | Avg Prob | Max Ent | Avg Ent | Gb-S | Wb-S | Bb-S | SU | Ask4-conf |
| :-------------- | :------ | :------- | :------- | :------ | :------ | :--- | :--- | :--- | :---- | :-------- |
| Ref answer | 1 | 0.12 | 0.96 | 0.43 | 0.93 | 0.23 | 0.33 | | | |
| Greedy answer | 0 | 0.12 | 0.9 | 0.37 | 0.82 | 0.09 | 0.14 | 0.33 | 0.08 | 0 |
| Answer 1 | 1 | 0.08 | 0.93 | 0.43 | 0.94 | 0.14 | 0.22 | | | |
| Answer 2 | 0 | 0.01 | 0.78 | 0.37 | 0.6 | 0.08 | 0.13 | | | |

**Question and answers:**

* **Question:** Which musical featured the songs A Secretary Is Not A Toy, and The Company Way?

* **Ref answer:** How to Succeed in Business Without Really Trying

* **Greedy answer:** The Pajama Game

* **Answer 1:** How to Succeed In Business Without Really Trying

* **Answer 2:** The Company Way
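As a rough check on the Rouge-1 column, the sketch below computes a unigram-overlap F1 score between each answer and the reference. The image does not say which Rouge-1 variant or tokenizer was used, so the lowercased whitespace tokenization and the F1 form here are assumptions, and `rouge1_f1` is a hypothetical helper rather than the evaluation code behind the table.

```python
from collections import Counter


def rouge1_f1(candidate: str, reference: str) -> float:
    """Unigram-overlap F1 (assumed variant: lowercase, whitespace tokens)."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Clipped overlap: each token counts at most as often as it appears in both.
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


reference = "How to Succeed in Business Without Really Trying"
answers = {
    "Greedy answer": "The Pajama Game",
    "Answer 1": "How to Succeed In Business Without Really Trying",
    "Answer 2": "The Company Way",
}

scores = {name: rouge1_f1(text, reference) for name, text in answers.items()}
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

Under these assumptions, "Answer 1" shares every token with the reference, while the other two answers share none, which agrees with the 1 and 0 values in the table.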

### Key Observations

* The "Ref answer" has a Rouge-1 score of 1 and the highest Avg Prob (0.96).

* The "Greedy answer" has a Rouge-1 score of 0, indicating no unigram overlap with the reference answer.

* "Answer 1" matches the "Ref answer" apart from the capitalization of "In", and has a Rouge-1 score of 1 and an Avg Prob of 0.93.

* "Answer 2" has the lowest or tied-lowest value on every metric reported for it (for example, Max Prob 0.01 and Avg Ent 0.6), and its text repeats one of the song titles from the question.

* Bb-S, SU, and Ask4-conf are reported only for the "Greedy answer". Its Ask4-conf value is 0, which suggests low confidence in that answer.
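The comparisons above can be checked mechanically. The following sketch transcribes the six columns that have a value for every row and finds, for each metric, which answers hold the minimum. The variable names are illustrative, and the numbers are copied from the reconstructed table.

```python
# Metrics that have a value in every row of the reconstructed table.
metrics = ["Max Prob", "Avg Prob", "Max Ent", "Avg Ent", "Gb-S", "Wb-S"]

rows = {
    "Ref answer":    [0.12, 0.96, 0.43, 0.93, 0.23, 0.33],
    "Greedy answer": [0.12, 0.90, 0.37, 0.82, 0.09, 0.14],
    "Answer 1":      [0.08, 0.93, 0.43, 0.94, 0.14, 0.22],
    "Answer 2":      [0.01, 0.78, 0.37, 0.60, 0.08, 0.13],
}

# For each metric, collect every answer that attains the minimum (ties included).
lowest = {}
for i, metric in enumerate(metrics):
    min_value = min(values[i] for values in rows.values())
    lowest[metric] = [name for name, values in rows.items() if values[i] == min_value]

for metric, names in lowest.items():
    print(f"{metric}: {', '.join(names)}")
```

"Answer 2" appears in every list, sharing the minimum only on Max Ent, where it ties with the "Greedy answer" at 0.37.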

### Interpretation

The data suggests that the LLM's "Greedy answer" and "Answer 2" are incorrect responses to the given question, while "Answer 1" is correct. The "Ref answer" serves as the gold standard, and Rouge-1 quantifies how closely each answer's wording matches it. Rouge-1 measures unigram overlap with the reference rather than exact match, although in this table it takes only the values 1 and 0. The probability-based metrics (Max Prob, Avg Prob) and entropy-based metrics (Max Ent, Avg Ent) give graded values. On Avg Prob, Max Ent, Avg Ent, Gb-S, and Wb-S, the two answers with a Rouge-1 of 1 score higher than the two with a Rouge-1 of 0. Max Prob is the exception: the "Greedy answer" (0.12) ties the "Ref answer" and exceeds "Answer 1" (0.08). The Gb-S, Wb-S, Bb-S, SU, and Ask4-conf metrics likely represent other specific evaluation criteria, but their exact meanings are not provided in the image. Overall, the greedy output is wrong for this question even though one of the other generated answers is correct, and among the generated answers "Answer 2" receives the lowest scores.