## Diagram Type: Process Flow for Synthetic Data Augmentation

### Overview

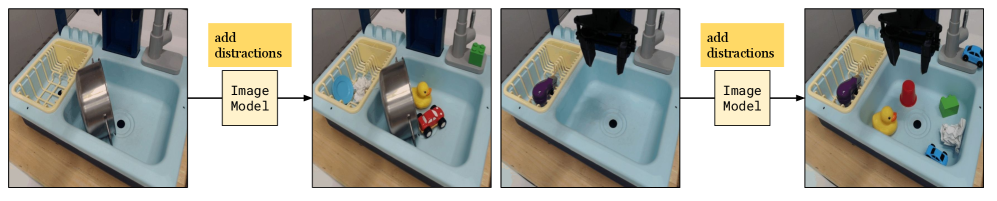

This image is a horizontal process flow diagram illustrating how an "Image Model" is used to "add distractions" to base photographic images. The diagram consists of two separate examples of this transformation, each showing a "before" and "after" state of a robotic workspace environment (a toy sink setup). The flow moves from left to right, connected by arrows and descriptive text boxes.

### Components/Axes

* **Photographic Panels:** Four rectangular images showing a light blue toy sink environment.

    * **Panel 1 (Far Left):** The initial state for the first example.

    * **Panel 2 (Center-Left):** The augmented state for the first example.

    * **Panel 3 (Center-Right):** The initial state for the second example.

    * **Panel 4 (Far Right):** The augmented state for the second example.

* **Connective Elements:**

    * **Arrows:** Black horizontal arrows pointing from the initial state to the augmented state.

    * **Text Boxes (Top):** Yellow rectangular boxes containing the text "add distractions" in a serif font.

    * **Text Boxes (Bottom):** Beige/light-yellow rectangular boxes containing the text "Image Model" in a sans-serif font.

* **Spatial Layout:** The diagram is organized into two distinct before/after pairs. Example 1 (Panels 1 and 2) occupies the left half; Example 2 (Panels 3 and 4) occupies the right half.

### Content Details

#### Example 1: Static Scene Augmentation (Left Pair)

* **Initial State (Panel 1):**

    * **Environment:** A light blue toy sink with a yellow plastic dish rack on the left side. A grey toy faucet is visible on the top right.

    * **Primary Object:** A large, stainless steel pot is placed vertically in the main sink basin, leaning against the center divider.

    * **Status:** The dish rack and the rest of the basin are empty.

* **Transformation:** The initial image is passed to the "Image Model" to "add distractions."

* **Augmented State (Panel 2):**

    * **Added Objects (Distractions):**

        * **In Dish Rack:** A small blue circular plate and a clump of white crumpled paper/material.

        * **In Sink Basin:** A yellow rubber duck (positioned behind the pot) and a small red toy car (positioned in front of the pot).

    * **Consistency:** The original blue sink, yellow rack, and metal pot remain in their exact original positions.

#### Example 2: Robotic Interaction Scene Augmentation (Right Pair)

* **Initial State (Panel 3):**

    * **Environment:** Similar light blue toy sink setup.

    * **Primary Objects:** A black two-pronged robotic gripper is suspended in the top-center, hovering over the empty sink basin. A purple toy eggplant is located in the yellow dish rack. A green rectangular block is on the base of the faucet.

    * **Status:** The main sink basin is empty.

* **Transformation:** The initial image is passed to the "Image Model" to "add distractions."

* **Augmented State (Panel 4):**

    * **Added Objects (Distractions):**

        * **In Sink Basin:** A yellow rubber duck (bottom left of basin), a red plastic cup (center), a green rectangular block (right), a small blue toy car (bottom right), and a clump of white crumpled paper (far right).

        * **On Faucet Base:** A second, smaller blue toy car has been added next to the faucet.

    * **Consistency:** The robotic gripper, the purple eggplant, and the original green block remain in their original positions.

### Key Observations

* **Visual Fidelity:** The "distractions" added by the Image Model appear to be photorealistic and are integrated with appropriate perspective and lighting, including subtle shadows on the floor of the sink basin.

* **Object Variety:** The distractions include a variety of colors (red, yellow, blue, green, white), shapes (organic duck, geometric blocks, crumpled paper), and materials (plastic, paper).

* **Non-Interference:** The added objects are placed around the "primary" objects of interest (the pot in Example 1, the gripper/eggplant in Example 2) without obscuring them entirely, though they significantly increase the visual complexity of the scene.
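
The non-interference observation can be made measurable. The following is a minimal sketch, assuming each object is summarized by an axis-aligned bounding box in pixel coordinates. The diagram itself provides no coordinates, so the `Box` type, the `occluded_fraction` helper, and all numbers in the usage note are hypothetical and serve only to illustrate the idea of checking how much of a primary object is hidden by added distractions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixels (hypothetical helper type).

    x1 and y1 are exclusive, so Box(0, 0, 10, 10) covers 100 pixels.
    """
    x0: int
    y0: int
    x1: int
    y1: int

    def area(self) -> int:
        # Degenerate or inverted boxes count as empty.
        return max(0, self.x1 - self.x0) * max(0, self.y1 - self.y0)


def intersection(a: Box, b: Box) -> Box:
    """Overlap of two boxes; the result has zero area if they are disjoint."""
    return Box(max(a.x0, b.x0), max(a.y0, b.y0),
               min(a.x1, b.x1), min(a.y1, b.y1))


def occluded_fraction(target: Box, distractors: list[Box]) -> float:
    """Fraction of the target box covered by at least one distractor box.

    Covered pixels are collected in a set so that overlapping distractors
    are not counted twice.
    """
    if target.area() == 0:
        return 0.0
    covered = set()
    for d in distractors:
        overlap = intersection(target, d)
        if overlap.area() == 0:
            continue
        for y in range(overlap.y0, overlap.y1):
            for x in range(overlap.x0, overlap.x1):
                covered.add((x, y))
    return len(covered) / target.area()
```

For instance, with a made-up target `Box(0, 0, 10, 10)` and distractors `Box(5, 5, 15, 15)` and `Box(8, 8, 12, 12)`, one quarter of the target is covered. An augmented image could then be rejected whenever the fraction exceeds some chosen threshold; any such threshold is a design choice, not something the diagram specifies.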

### Interpretation

This diagram demonstrates a pipeline for **synthetic data augmentation** in the context of computer vision for robotics.

1. **Robustness Training:** In real-world robotic applications (such as dishwashing or sorting), the environment is rarely "clean." By using an "Image Model" (likely a generative model or a compositing engine) to populate a scene with "distractions" automatically, researchers can create large amounts of training data from a small set of photographs.

2. **Clutter Handling:** The goal is to train a robot's perception system to identify and interact with target objects (e.g., the pot or the eggplant) while ignoring irrelevant "clutter" (the distractions).

3. **Data Efficiency:** Instead of manually setting up and photographing hundreds of different cluttered scenes, this method takes one "clean" photo and programmatically generates many cluttered variations of it.

4. **Peircean Analysis:** Read in Peircean terms, the "Image Model" plays a role loosely analogous to an interpretant: it turns a simple sign (the clean image) into a more complex one (the cluttered image) that stands for the "noise" of the real world. Taken together, these points suggest the underlying research aims to improve the generalization of robotic vision models so that they do not fail when unexpected objects enter their field of view.
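
The pipeline described in the points above can be sketched as follows. This is an illustration under stated assumptions, not the method behind the diagram: the `ImageModel` interface, the `Sample` type, and the `augment` function are hypothetical names, and `toy_image_model` is a stand-in that scribbles on background pixels of a tiny grid where a real system would presumably call a generative or compositing model. What the sketch does show is the structure: one clean image yields several cluttered variants, and the target label is carried over unchanged.

```python
import random
from dataclasses import dataclass
from typing import Callable

Image = list[list[int]]  # toy grayscale image: rows of pixel values


@dataclass(frozen=True)
class Sample:
    image: Image
    target_label: str  # e.g. "pot" or "eggplant"


# Hypothetical interface: (clean image, instruction, rng) -> edited image.
ImageModel = Callable[[Image, str, random.Random], Image]


def toy_image_model(image: Image, instruction: str, rng: random.Random) -> Image:
    """Stand-in for a real image model (assumption, for illustration only).

    Pixels equal to 0 are treated as background. Up to three of them are
    overwritten with nonzero values to play the role of distractions.
    Nonzero pixels (the primary object) are never modified. The instruction
    is accepted for interface compatibility but ignored here.
    """
    edited = [row[:] for row in image]  # copy so the clean image is untouched
    background = [(r, c)
                  for r, row in enumerate(image)
                  for c, value in enumerate(row)
                  if value == 0]
    for r, c in rng.sample(background, k=min(3, len(background))):
        edited[r][c] = rng.randint(1, 255)
    return edited


def augment(samples: list[Sample], image_model: ImageModel,
            variants_per_sample: int, seed: int = 0) -> list[Sample]:
    """Generate cluttered variants of each clean sample, keeping its label."""
    rng = random.Random(seed)
    augmented = []
    for sample in samples:
        for _ in range(variants_per_sample):
            cluttered = image_model(sample.image, "add distractions", rng)
            augmented.append(Sample(cluttered, sample.target_label))
    return augmented


clean = Sample([[0, 0, 0], [0, 9, 0], [0, 0, 0]], "pot")
variants = augment([clean], toy_image_model, variants_per_sample=4, seed=1)
```

Passing the model in as a callable keeps the loop independent of whichever image model is actually used, and seeding the random generator makes a generated dataset reproducible.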