## Diagram: Recurrent Neural Network Architecture with Prelude and Coda

### Overview

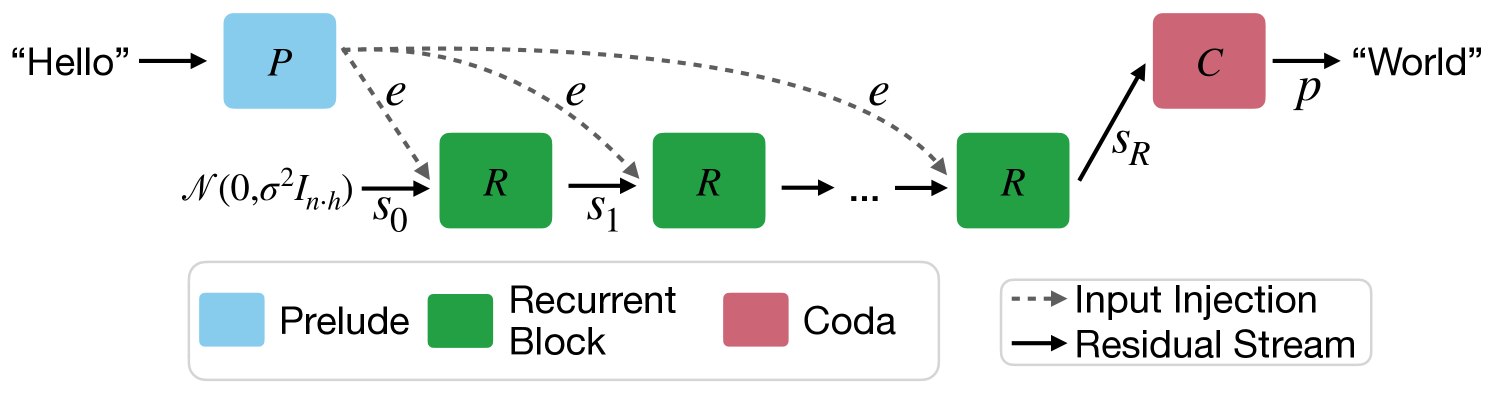

The diagram shows a recurrent neural network (RNN) architecture in which a "Prelude" and a "Coda" bracket a chain of "Recurrent Blocks". Information flows from the input string "Hello" to the output string "World"; intermediate states are labeled with `s` variables and input injections with `e`. A noise term drawn from a normal distribution also enters the network.

### Components

* **Prelude:** Represented by a light blue square labeled "P".

* **Recurrent Block:** Represented by a green square labeled "R". Multiple instances of this block are shown in a sequence.

* **Coda:** Represented by a red square labeled "C".

* **Input Injection:** Represented by a dotted gray arrow labeled "e".

* **Residual Stream:** Represented by a solid black arrow.

* **Input:** "Hello"

* **Output:** "World"

* **Noise:** Represented by the mathematical notation `N(0, σ²Iₙʰ)`

* **States:** `s₀`, `s₁`, `sR`

* **Legend:** Located at the bottom-right of the image, associating colors with components.

### Detailed Analysis

The diagram shows the following flow:

1. The input string "Hello" enters the "Prelude" (P).

2. The output of the "Prelude" is connected to the first "Recurrent Block" (R) via the "Residual Stream".

3. A noise component `N(0, σ²Iₙʰ)` is also fed into the first "Recurrent Block".

4. Each "Recurrent Block" receives an "Input Injection" (e), drawn as a dotted gray arrow, in addition to the state arriving on the "Residual Stream".

5. The output of the last "Recurrent Block" is fed into the "Coda" (C).

6. The "Coda" produces the output string "World".

7. The states along the "Residual Stream" are labeled `s₀`, `s₁`, and `sR`; the subscript `R` presumably marks the state after the final recurrent block.
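
The flow above can be sketched in a few lines of Python. This is a minimal illustration of one plausible reading of the diagram, not the model's actual implementation: it assumes that the Gaussian sample serves as the initial state `s₀` and that `e` is computed once by the Prelude and reused by every block. The functions `prelude`, `recurrent_block`, `coda`, and `forward`, along with all numeric constants, are hypothetical stand-ins.

```python
import random


def prelude(text, width):
    """Hypothetical stand-in for P: map the input string to a vector e."""
    codes = [ord(ch) / 128.0 for ch in text]
    # Pad or truncate to a fixed width so every block sees the same shape.
    return (codes + [0.0] * width)[:width]


def recurrent_block(state, e):
    """Hypothetical stand-in for R: update the state using the injection e."""
    # Residual form: carry the incoming state forward and add an update
    # that pulls it halfway toward e.
    return [s + 0.5 * (x - s) for s, x in zip(state, e)]


def coda(state):
    """Hypothetical stand-in for C: reduce the final state to one number."""
    return sum(state) / len(state)


def forward(text, num_steps=3, width=8, sigma=1.0, seed=0):
    rng = random.Random(seed)
    e = prelude(text, width)
    # Assumption: s0 is drawn from N(0, sigma^2 I), i.e. independent
    # Gaussian entries with standard deviation sigma.
    state = [rng.gauss(0.0, sigma) for _ in range(width)]
    states = [state]
    for _ in range(num_steps):
        # The same block is applied at every step, with e injected each time.
        state = recurrent_block(state, e)
        states.append(state)
    return coda(state), states
```

Calling `forward("Hello", num_steps=3)` returns the Coda output together with the list of states, whose first entry plays the role of `s₀` and whose last entry plays the role of `sR`. Because the block is reused, `num_steps` can be changed without adding parameters.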

### Key Observations

* The architecture emphasizes sequential processing of information through the recurrent blocks.

* The "Prelude" and "Coda" components suggest a pre-processing and post-processing stage, respectively.

* The "Input Injection" mechanism supplies `e` to every recurrent block, so each step can condition on it as well as on the incoming state.

* The inclusion of noise `N(0, σ²Iₙʰ)` suggests a stochastic element in the model.

### Interpretation

This diagram illustrates a recurrent neural network architecture designed for sequence-to-sequence tasks, such as machine translation or text generation. The "Prelude" likely handles initial embedding or encoding of the input sequence. The recurrent blocks process the sequence step-by-step, maintaining a hidden state (`s₀`, `s₁`, `sR`) that captures information about the past. The "Input Injection" allows the network to incorporate context from previous time steps. Finally, the "Coda" decodes the hidden state into the output sequence ("World"). The noise component suggests a regularization technique or a way to introduce variability in the model's predictions. The use of a residual stream indicates a potential mechanism for mitigating the vanishing gradient problem, common in deep recurrent networks. The diagram is a high-level representation and does not specify the internal workings of the "Prelude", "Recurrent Block", or "Coda" components.
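
The remark about the residual stream can be made concrete with a short derivation. As an assumption that the diagram itself does not state, suppose each recurrent block updates the state additively, where `f` is a hypothetical inner function of the block:

```latex
s_i = s_{i-1} + f(s_{i-1}, e),
\qquad
\frac{\partial s_i}{\partial s_{i-1}} = I + \frac{\partial f}{\partial s_{i-1}}
```

Under this assumption, each step's Jacobian contains an identity term. The gradient reaching `s₀` is then a product of factors of the form `I + ∂f/∂s`, not of `∂f/∂s` alone, so a direct path for the gradient remains open even when the block's own contribution is small.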