## Block Diagram: Sequence-to-Sequence Processing Architecture

### Overview

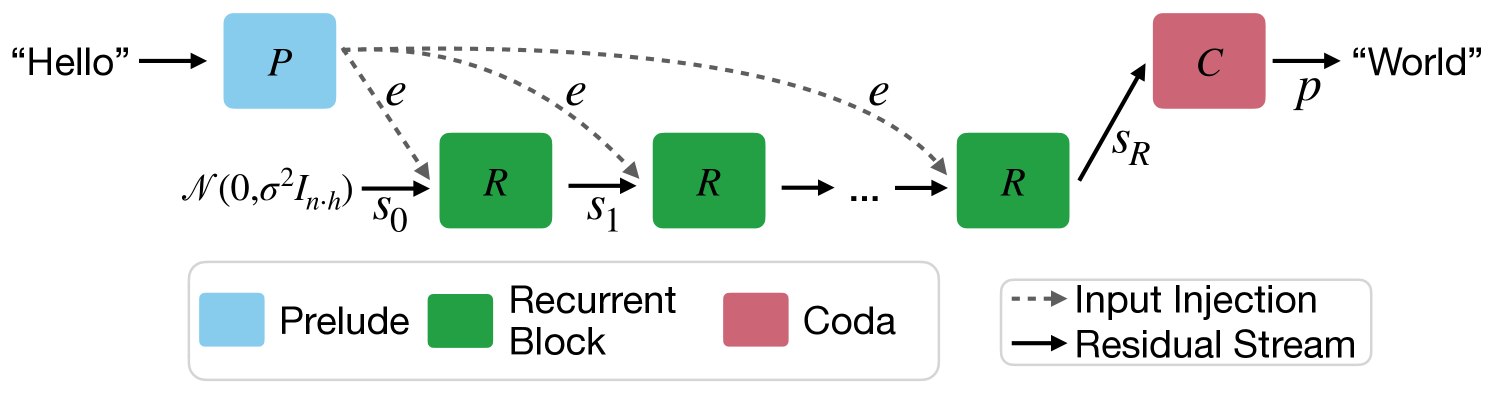

The diagram shows an architecture that maps a sequential input ("Hello") to an output ("World") through three stages: a Prelude, a chain of Recurrent Blocks, and a Coda. Residual connections and an input-injection path link the stages.

### Components

1. **Legend** (bottom-left):

- Blue: Prelude (P)

- Green: Recurrent Block (R)

- Red: Coda (C)

- Dotted lines: Residual Stream (e)

- Solid arrows: Main data flow

2. **Key Elements**:

- Input: "Hello" (left)

- Output: "World" (right)

- Residual connections: Dashed lines labeled "e"

- Input Injection: Dashed arrow labeled "Input Injection"

- Residual Stream: Solid arrow labeled "s_R"

### Detailed Analysis

1. **Flow Path**:

- "Hello" → P (Prelude) → R₁ (Recurrent Block 1) → R₂ (Recurrent Block 2) → ... → Rₙ (Final Recurrent Block) → C (Coda) → "World"

- Residual connections (e) bypass each R block and feed into the subsequent blocks

- The final residual stream (s_R) connects the last R block to C

2. **Component Relationships**:

- **Prelude (P)**: Initial processing unit receiving raw input

- **Recurrent Blocks (R)**: Sequential processing units with internal state (s₀, s₁, ..., s_R)

- **Coda (C)**: Final output generator

- Residual streams enable gradient propagation through deep networks
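
The flow described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the diagram's actual implementation: the diagram does not specify layer types, so each stage is a single toy linear layer; the same recurrent weights are reused at every step; the initial state s₀ is zero; input injection is modeled as concatenating the Prelude output `e` with the current state; and the residual connection is additive. All function names, parameter names, and dimensions are hypothetical.

```python
import math
import random


def make_weights(n_out, n_in, rng):
    """Random (n_out x n_in) matrix for a toy linear layer."""
    scale = 1.0 / math.sqrt(n_in)
    return [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]


def linear(weights, x):
    """Matrix-vector product: one output value per weight row."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def forward(x, w_prelude, w_recurrent, w_coda, num_steps):
    """Run Prelude -> num_steps recurrent updates -> Coda.

    Returns the output vector and the list of states [s_0, ..., s_R].
    """
    # Prelude (P): embed the raw input once.
    e = [math.tanh(v) for v in linear(w_prelude, x)]

    # s_0: assumed to be all zeros; the diagram does not say how it is set.
    s = [0.0] * len(e)
    states = [s]

    for _ in range(num_steps):
        # Input injection (assumed): the block sees the state and e together.
        update = [math.tanh(v) for v in linear(w_recurrent, s + e)]
        # Residual connection (assumed additive): new state = old state + update.
        s = [a + b for a, b in zip(s, update)]
        states.append(s)

    # Coda (C): map the final state s_R to the output.
    return linear(w_coda, s), states


rng = random.Random(0)
d_in, d_hidden, d_out, steps = 5, 4, 3, 6
w_p = make_weights(d_hidden, d_in, rng)
w_r = make_weights(d_hidden, 2 * d_hidden, rng)  # takes [s, e] concatenated
w_c = make_weights(d_out, d_hidden, rng)

x = [1.0, 0.0, -1.0, 0.5, 0.25]  # stand-in for an encoded "Hello"
y, states = forward(x, w_p, w_r, w_c, steps)
print(len(states), len(y))  # prints: 7 3
```

The returned `states` list corresponds to s₀ through s_R, so its length is one more than the number of recurrent steps. Swapping the toy linear layers for real sub-networks would not change the overall control flow.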

### Key Observations

1. **Residual Architecture**: Multiple residual connections (e) between R blocks suggest skip connections for improved gradient flow

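2. **State Progression**: The state sequence (s₀ → s₁ → ... → s_R) indicates that each recurrent block updates a state carried forward from the previous block

3. **Input Injection**: The dashed "Input Injection" arrow indicates that input-derived information is fed into the recurrent stage, not only into the first block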

4. **Output Transformation**: "Hello" → "World" implies sequence-to-sequence mapping capability
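
The gradient-flow benefit attributed to the residual connections can be made concrete with a short derivation. It assumes an additive residual update, which the diagram suggests but does not state explicitly; the symbols $f$ (the recurrent block's function) and $J_i$ (its Jacobian at step $i$) are introduced here for illustration only.

$$
s_i = s_{i-1} + f(s_{i-1}, e)
\quad\Longrightarrow\quad
\frac{\partial s_i}{\partial s_{i-1}} = I + J_i,
\qquad J_i = \frac{\partial f(s_{i-1}, e)}{\partial s_{i-1}}
$$

$$
\frac{\partial s_R}{\partial s_0} = (I + J_R)(I + J_{R-1}) \cdots (I + J_1)
$$

Expanding the product always yields a bare identity term $I$, so a direct gradient path from $s_R$ back to $s_0$ exists regardless of how small the individual $J_i$ are. Without the residual term the product would reduce to $J_R \cdots J_1$, which can shrink toward zero as $R$ grows.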

### Interpretation

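This architecture resembles an encoder-decoder model with residual connections, possibly Transformer-based, although the diagram does not specify the layer type inside each block. Its structure suggests support for: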

1. **Long Sequence Handling**: Recurrent blocks with residual streams mitigate vanishing gradient problems

2. **Contextual Processing**: Input injection lets the recurrent stage consult the input at intermediate steps rather than only at the start

3. **Efficient Training**: Residual connections enable deeper networks without performance degradation

4. **Sequence Generation**: The final Coda component produces the output, possibly autoregressively; the diagram does not show the decoding scheme

The "Hello" → "World" transformation exemplifies a basic sequence-to-sequence task and could represent text generation, translation, or speech synthesis. The residual stream (s_R) appears to act as a memory buffer that preserves information across processing stages.