## Diagram: Parallel Computation Flow Over Time and Processors

### Overview

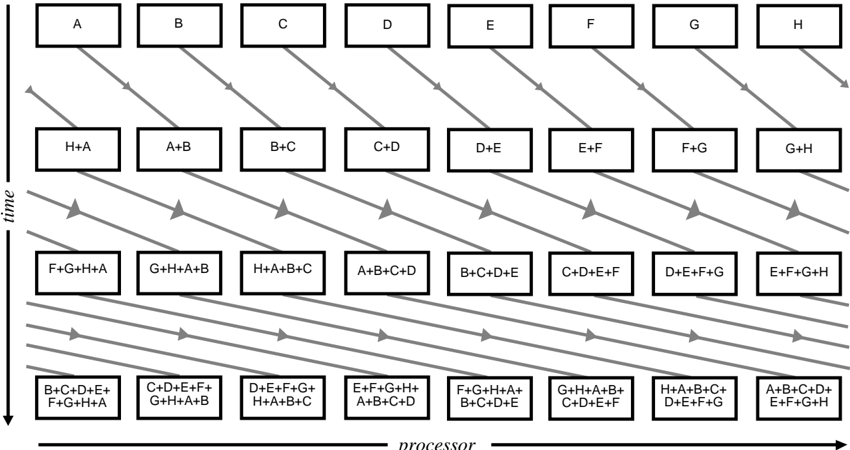

The image is a technical diagram illustrating a parallel computation or data aggregation process across multiple processors over discrete time steps. It shows how data elements (labeled A through H) are combined and communicated between processors in a structured, recursive pattern. The diagram is monochromatic (black, white, and gray) and uses boxes and directional arrows to represent processors, data, and communication flow.

### Components/Axes

* **Vertical Axis (Left Side):** Labeled **"time"** with a downward-pointing arrow, indicating that time progresses from the top of the diagram to the bottom.

* **Horizontal Axis (Bottom):** Labeled **"processor"** with a rightward-pointing arrow, indicating that processors are arranged linearly from left to right.

* **Processors:** Represented by eight columns of boxes. Each column corresponds to a single processor.

* **Data/State Boxes:** Rectangular boxes with black borders containing text. Each box represents the state or data held by a specific processor at a specific time step.

* **Communication Arrows:** Gray, diagonal arrows connecting boxes between different processors and time steps. These arrows indicate the direction of data transfer or dependency. Arrows originating from a box point to the box(s) that will receive that data in the next time step.

### Detailed Analysis

The diagram is structured in four distinct time steps (rows), with eight processors (columns) at each step.

**Time Step 1 (Top Row):**

* **Processor State:** Each processor holds a single, unique data element.

* **Content (Left to Right):** `A`, `B`, `C`, `D`, `E`, `F`, `G`, `H`.

* **Flow:** From each box, a gray arrow points diagonally down and to the right to the processor immediately to its right in the next time step. The box for processor H (far right) has an arrow pointing off the right edge, suggesting a wrap-around or cyclic connection to the first processor (A) in the next step.

**Time Step 2 (Second Row):**

* **Processor State:** Each processor now holds the sum/combination of its original element and the element from the processor to its left (with wrap-around).

* **Content (Left to Right):** `H+A`, `A+B`, `B+C`, `C+D`, `D+E`, `E+F`, `F+G`, `G+H`.

* **Flow:** From each box, two gray arrows emerge:

1. One points diagonally down and to the right to the processor immediately to its right.

2. One points diagonally down and to the left to the processor immediately to its left.

This creates a crisscross pattern of communication.

**Time Step 3 (Third Row):**

* **Processor State:** Each processor now holds the combination of four elements. The pattern suggests it has aggregated data from itself and its three nearest neighbors in a cyclic fashion.

* **Content (Left to Right):** `F+G+H+A`, `G+H+A+B`, `H+A+B+C`, `A+B+C+D`, `B+C+D+E`, `C+D+E+F`, `D+E+F+G`, `E+F+G+H`.

* **Flow:** From each box, two gray arrows emerge, pointing diagonally down to the processors two steps to the left and two steps to the right (with wrap-around).

**Time Step 4 (Bottom Row):**

* **Processor State:** Each processor now holds the combination of all eight elements (A through H). The order of terms within each box varies, but the set is complete.

* **Content (Left to Right):**

1. `B+C+D+E+ F+G+H+A`

2. `C+D+E+F+ G+H+A+B`

3. `D+E+F+G+ H+A+B+C`

4. `E+F+G+H+ A+B+C+D`

5. `F+G+H+A+ B+C+D+E`

6. `G+H+A+B+ C+D+E+F`

7. `H+A+B+C+ D+E+F+G`

8. `A+B+C+D+ E+F+G+H`

* **Flow:** No arrows originate from this final row, indicating the completion of the aggregation phase.

### Key Observations

1. **Recursive Doubling Pattern:** The number of elements combined per processor doubles at each time step: 1 → 2 → 4 → 8. This is characteristic of efficient parallel algorithms like a **parallel prefix sum (scan)** or a **butterfly network** used in Fast Fourier Transforms (FFT).

2. **Cyclic/Wrap-Around Topology:** The communication pattern is not linear but cyclic. Data from the rightmost processor (H) feeds into the leftmost processor (A) in the subsequent step, and vice-versa for neighbor connections. This suggests the processors are logically arranged in a ring.

3. **Symmetry and Regularity:** The diagram is highly symmetric. The communication pattern (the gray arrows) is identical for each processor within a given time step, just shifted horizontally.

4. **Data Movement:** The arrows clearly show that data flows both left and right simultaneously after the first step, enabling rapid global aggregation.

### Interpretation

This diagram visually encodes the operation of a **parallel algorithm for global computation on a distributed-memory system with a ring interconnect**.

* **What it demonstrates:** It shows how a set of distributed data elements (A-H) can be efficiently combined (e.g., summed, multiplied, or otherwise reduced) across all processors in O(log N) time steps (where N=8 processors here), rather than O(N) steps required by a sequential or simple pipeline approach.

* **How elements relate:** The "processor" axis represents spatial distribution (different hardware units). The "time" axis represents the sequence of computation and communication rounds. The boxes are the local state, and the arrows are the message-passing operations. Each step involves a local computation (combining received data with local data) followed by communication to neighbors.

* **Notable Pattern:** The final state shows every processor possesses the complete global result (the combination of all elements A-H), albeit in a different permuted order. This is a common outcome of certain parallel scan algorithms, where the final "result" is distributed across the array of processors.

* **Underlying Principle:** The pattern is a classic **butterfly** or **exchange** pattern. It is the core communication kernel for many parallel algorithms, demonstrating how logarithmic scaling is achieved in parallel computing through recursive doubling and structured neighbor communication. The diagram is a pedagogical tool to explain concepts like parallel efficiency, communication overhead, and algorithmic scalability in high-performance computing (HPC).