# Technical Document Extraction: Multi-Agent Reinforcement Learning Strategies for Meta-Thinking

## 1. Document Overview

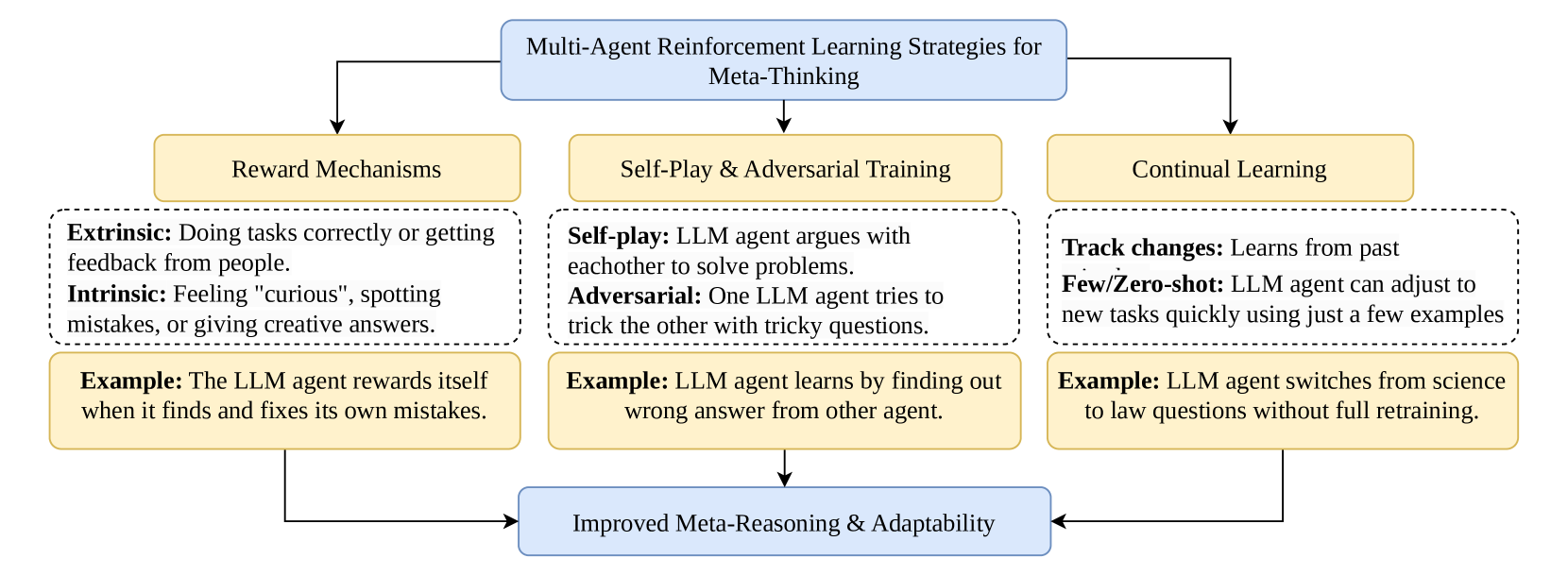

This image is a hierarchical flow diagram illustrating the strategies used in Multi-Agent Reinforcement Learning (MARL) to achieve "Meta-Thinking" in Large Language Models (LLMs). The diagram flows from a central top-level concept through three distinct methodological pillars, each containing definitions and practical examples, culminating in a unified outcome.

---

## 2. Component Isolation & Analysis

### Region A: Header (Top Level)

* **Component:** Central blue rounded rectangle.

* **Text:** "Multi-Agent Reinforcement Learning Strategies for Meta-Thinking"

* **Function:** Defines the primary subject matter. Three arrows originate from this box, pointing downward to the three core strategy pillars.

### Region B: Main Content (Three Pillars)

The diagram is segmented into three vertical columns, each representing a specific strategy.

#### Pillar 1: Reward Mechanisms

* **Category Header (Yellow Box):** Reward Mechanisms

* **Definitions (Dashed Box):**

    * **Extrinsic:** Doing tasks correctly or getting feedback from people.

    * **Intrinsic:** Feeling "curious", spotting mistakes, or giving creative answers.

* **Practical Application (Yellow Box):**

    * **Example:** The LLM agent rewards itself when it finds and fixes its own mistakes.
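
The following minimal Python sketch makes the extrinsic/intrinsic split concrete with a shaped reward. It illustrates the idea under stated assumptions and is not the method behind the diagram. The class `RewardShaper`, its weights, the exact-match correctness check, and the count-based `1/sqrt(n)` novelty bonus standing in for "curiosity" are all hypothetical choices.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class RewardShaper:
    """Hypothetical shaped reward: extrinsic signal plus weighted intrinsic bonuses."""

    curiosity_weight: float = 0.1  # assumed weight on the novelty ("curiosity") bonus
    self_fix_bonus: float = 0.5    # assumed bonus for a mistake the agent found and fixed
    visit_counts: Counter = field(default_factory=Counter)

    def extrinsic(self, answer: str, reference: str, human_score: float = 0.0) -> float:
        # Task correctness (exact match) plus an optional human-feedback score.
        return (1.0 if answer.strip() == reference.strip() else 0.0) + human_score

    def curiosity(self, state: str) -> float:
        # Count-based novelty bonus 1/sqrt(n): rarely visited states earn more.
        self.visit_counts[state] += 1
        return self.visit_counts[state] ** -0.5

    def self_correction(self, draft: str, revised: str, reference: str) -> float:
        # Bonus only when the first draft was wrong and the revision is right.
        fixed = draft.strip() != reference.strip() and revised.strip() == reference.strip()
        return self.self_fix_bonus if fixed else 0.0

    def total(self, state: str, draft: str, revised: str, reference: str,
              human_score: float = 0.0) -> float:
        # Extrinsic reward on the final answer plus the two intrinsic terms.
        return (
            self.extrinsic(revised, reference, human_score)
            + self.curiosity_weight * self.curiosity(state)
            + self.self_correction(draft, revised, reference)
        )


shaper = RewardShaper()
print(shaper.total("q1", draft="5", revised="4", reference="4"))  # first visit, self-fixed
print(shaper.total("q1", draft="4", revised="4", reference="4"))  # repeat visit, no fix
```

In the first call, a draft of "5" revised to the correct "4" on a never-seen state earns 1.0 (correct) + 0.1 (novelty) + 0.5 (self-fix). The weights are placeholders. They would likely need tuning so that intrinsic bonuses do not outweigh task success.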

#### Pillar 2: Self-Play & Adversarial Training

* **Category Header (Yellow Box):** Self-Play & Adversarial Training

* **Definitions (Dashed Box):**

    * **Self-play:** LLM agents argue with each other to solve problems.

    * **Adversarial:** One LLM agent tries to trip up the other with tricky questions.

* **Practical Application (Yellow Box):**

    * **Example:** An LLM agent learns by finding out wrong answers from the other agent.
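
A toy self-play loop in Python can illustrate the adversarial idea. Everything here is hypothetical. `Solver` and `Challenger` are rule-based stand-ins for LLM agents, and the task is integer addition. The challenger both grades answers and re-asks questions that previously fooled the solver. The solver "learns" only by memorizing corrections, which is far simpler than a real policy update.

```python
import random
from typing import Dict, List, Tuple

Question = Tuple[int, int]  # an addition problem (a, b)


class Solver:
    """Hypothetical solver agent with a deliberate bug on operands >= 10."""

    def __init__(self) -> None:
        self.corrections: Dict[Question, int] = {}  # answers learned from the challenger

    def answer(self, q: Question) -> int:
        if q in self.corrections:
            return self.corrections[q]
        a, b = q
        # Correct for small operands, off by one otherwise (the "mistake" to be exposed).
        return a + b if a < 10 and b < 10 else a + b - 1

    def learn(self, q: Question, correct: int) -> None:
        self.corrections[q] = correct


class Challenger:
    """Hypothetical adversary: proposes questions, grades answers, re-asks ones that fooled the solver."""

    def __init__(self, seed: int = 0) -> None:
        self.rng = random.Random(seed)
        self.hard: List[Question] = []  # questions the solver has answered wrongly

    def propose(self) -> Question:
        # Half the time (once any exist), revisit a question that previously caused an error.
        if self.hard and self.rng.random() < 0.5:
            return self.rng.choice(self.hard)
        return (self.rng.randint(0, 20), self.rng.randint(0, 20))

    def grade(self, q: Question, answer: int) -> Tuple[bool, int]:
        correct = q[0] + q[1]
        if answer != correct:
            self.hard.append(q)
        return answer == correct, correct


def adversarial_rounds(solver: Solver, challenger: Challenger, rounds: int) -> List[bool]:
    """Run rounds of propose -> answer -> grade; the solver learns from each exposed error."""
    results: List[bool] = []
    for _ in range(rounds):
        q = challenger.propose()
        ok, correct = challenger.grade(q, solver.answer(q))
        if not ok:
            solver.learn(q, correct)
        results.append(ok)
    return results


solver, challenger = Solver(), Challenger(seed=1)
history = adversarial_rounds(solver, challenger, rounds=50)
print(f"errors: {history.count(False)} / {len(history)}")
```

Re-asking questions that once fooled the solver is what makes this loop adversarial rather than random. In a real system, the correction table would be replaced by an update to the model's parameters.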

#### Pillar 3: Continual Learning

* **Category Header (Yellow Box):** Continual Learning

* **Definitions (Dashed Box):**

    * **Track changes:** Learns from past experience.

    * **Few/Zero-shot:** The LLM agent can adjust to new tasks quickly using just a few examples (few-shot) or none at all (zero-shot).

* **Practical Application (Yellow Box):**

    * **Example:** The LLM agent switches from science to law questions without full retraining.
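
The science-to-law example suggests adaptation through in-context examples rather than weight updates. The Python sketch below assumes that reading. A hypothetical `EpisodicMemory` keeps the most recent solved examples per domain, a simple take on "track changes". It then assembles a few-shot prompt, falling back to zero-shot when no examples are requested or stored. The prompt format is an assumption, and the actual model call is left out.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Tuple

Example = Tuple[str, str]  # (question, answer)


class EpisodicMemory:
    """Hypothetical per-domain memory of recently solved examples."""

    def __init__(self, max_per_domain: int = 3) -> None:
        # A bounded deque per domain: the oldest example is dropped when a new one arrives.
        self.store: Dict[str, Deque[Example]] = defaultdict(
            lambda: deque(maxlen=max_per_domain)
        )

    def record(self, domain: str, question: str, answer: str) -> None:
        self.store[domain].append((question, answer))

    def build_prompt(self, domain: str, question: str, k: int = 2) -> str:
        """Few-shot prompt with up to k stored examples; zero-shot if k == 0 or none stored."""
        stored = list(self.store.get(domain, ()))
        shots = stored[-k:] if k > 0 else []  # guard: stored[-0:] would return everything
        parts = [f"Domain: {domain}"]
        parts += [f"Q: {q}\nA: {a}" for q, a in shots]
        parts.append(f"Q: {question}\nA:")
        return "\n\n".join(parts)


memory = EpisodicMemory(max_per_domain=2)
memory.record("science", "What is H2O?", "Water")
memory.record("law", "What is a tort?", "A civil wrong")
memory.record("law", "What is mens rea?", "A guilty mind")
memory.record("law", "What is habeas corpus?", "A writ against unlawful detention")
print(memory.build_prompt("law", "What is a contract?"))  # uses the two most recent law examples
```

Switching domains only changes which examples go into the prompt. The bounded `deque` drops older examples as new ones arrive, which is one simple way among many to keep the memory current.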

### Region C: Footer (Outcome Level)

* **Component:** Central blue rounded rectangle at the bottom.

* **Text:** "Improved Meta-Reasoning & Adaptability"

* **Function:** Represents the final goal or result. One arrow from the bottom of each pillar converges into this box.

---

## 3. Flow and Logic Summary

The diagram depicts a causal relationship between specific reinforcement learning methodologies and the development of meta-reasoning and adaptability in LLM agents.

1. **Input/Strategy:** The process begins with the implementation of **Reward Mechanisms** (balancing external feedback with internal curiosity), **Self-Play/Adversarial Training** (leveraging multi-agent interaction and competition), and **Continual Learning** (maintaining knowledge over time and adapting to new domains).

2. **Process:** These strategies involve specific behaviors such as self-correction, debate-based problem solving, and rapid task switching.

3. **Output:** The successful integration of these three pillars leads to the final state of **Improved Meta-Reasoning & Adaptability**.

---

## 4. Textual Transcription (Precise)

| Section | Content |
| :--- | :--- |
| **Top Header** | Multi-Agent Reinforcement Learning Strategies for Meta-Thinking |
| **Pillar 1 Header** | Reward Mechanisms |
| **Pillar 1 Body** | **Extrinsic:** Doing tasks correctly or getting feedback from people. <br> **Intrinsic:** Feeling "curious", spotting mistakes, or giving creative answers. |
| **Pillar 1 Example** | **Example:** The LLM agent rewards itself when it finds and fixes its own mistakes. |
| **Pillar 2 Header** | Self-Play & Adversarial Training |
| **Pillar 2 Body** | **Self-play:** LLM agent argues with eachother to solve problems. <br> **Adversarial:** One LLM agent tries to trick the other with tricky questions. |
| **Pillar 2 Example** | **Example:** LLM agent learns by finding out wrong answer from other agent. |
| **Pillar 3 Header** | Continual Learning |
| **Pillar 3 Body** | **Track changes:** Learns from past <br> **Few/Zero-shot:** LLM agent can adjust to new tasks quickly using just a few examples |
| **Pillar 3 Example** | **Example:** LLM agent switches from science to law questions without full retraining. |
| **Bottom Footer** | Improved Meta-Reasoning & Adaptability |