## Diagram: Knowledge Retrieval-Augmented Generation (RAG) System Flowchart

### Overview

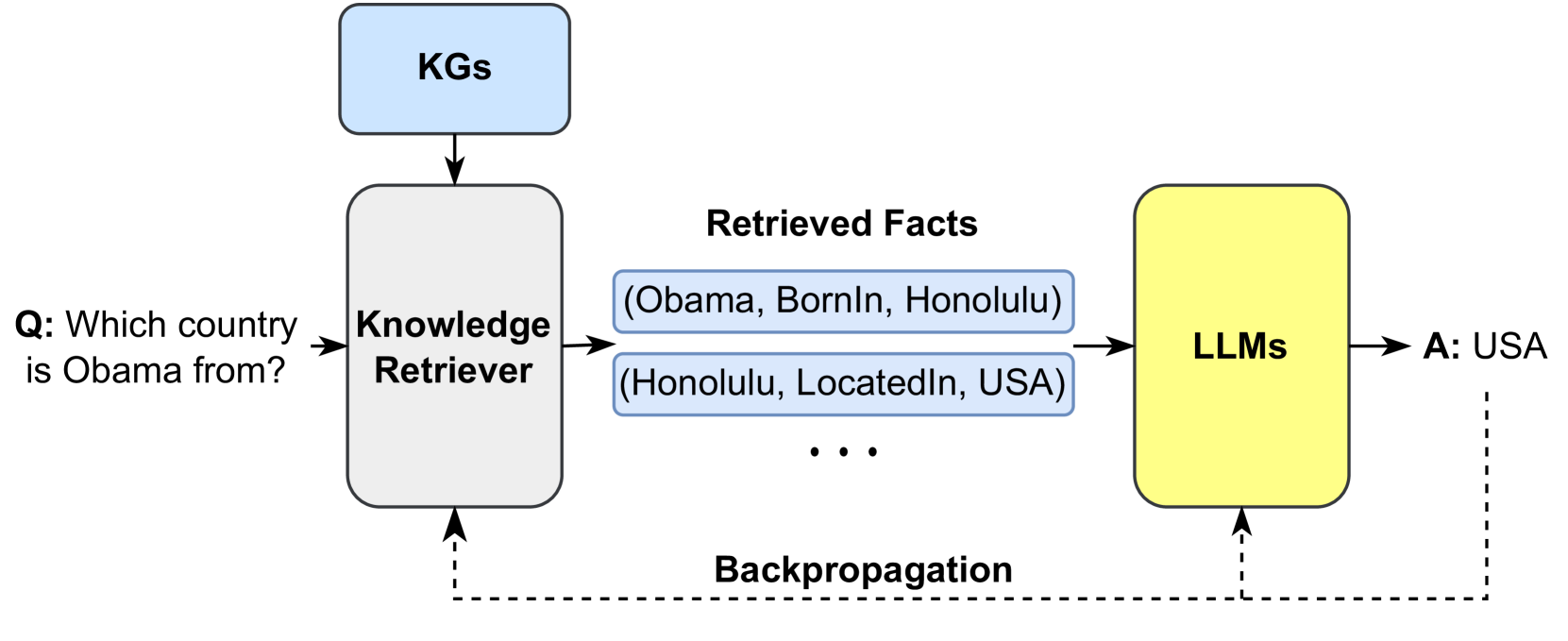

This image is a technical flowchart of a knowledge-graph-based retrieval-augmented generation (RAG) architecture. It shows how a factual question is answered by retrieving structured facts from Knowledge Graphs (KGs) and passing them to a Large Language Model (LLM) that generates the final answer. A backpropagation path runs from the output back to the retriever and the LLM, indicating that both components are trained.

### Components/Axes

The diagram consists of several labeled components connected by directional arrows, indicating data flow. There are no traditional chart axes. The components are:

1. **Input Question (Q):** "Which country is Obama from?" (Position: Far left)

2. **KGs:** A light blue, rounded rectangle labeled "KGs" (Position: Top-left). This represents Knowledge Graphs, the source of structured data.

3. **Knowledge Retriever:** A white, rounded rectangle with a black border labeled "Knowledge Retriever" (Position: Center-left). It receives input from the question and the KGs.

4. **Retrieved Facts:** A section containing two light blue, rounded rectangles with black text, labeled collectively as "Retrieved Facts" (Position: Center). The facts are presented as triples:

* (Obama, BornIn, Honolulu)

* (Honolulu, LocatedIn, USA)

* An ellipsis "..." indicates additional retrieved facts.

5. **LLMs:** A yellow, rounded rectangle labeled "LLMs" (Position: Center-right). This represents the Large Language Model component.

6. **Output Answer (A):** "A: USA" (Position: Far right).

7. **Backpropagation:** A dashed line with an arrowhead, labeled "Backpropagation" (Position: Bottom, running from the output back to the Knowledge Retriever and LLMs).

### Detailed Analysis

The process flow is as follows:

1. **Input:** The system receives a natural language question: "Which country is Obama from?".

2. **Retrieval Phase:** The question is sent to the **Knowledge Retriever**. The retriever queries the **KGs** (Knowledge Graphs) to find relevant structured information.

3. **Fact Extraction:** The retriever outputs a set of **Retrieved Facts** in the form of subject-predicate-object triples. The explicit examples provided are:

* `(Obama, BornIn, Honolulu)`: States that Barack Obama was born in Honolulu.

* `(Honolulu, LocatedIn, USA)`: States that Honolulu is located in the USA.

The ellipsis implies that further related triples are retrieved. The diagram does not show them; a plausible example would be `(USA, IsA, Country)`.

4. **Generation Phase:** The retrieved facts are passed as context to the **LLMs** (Large Language Models) component.

5. **Output:** The LLM processes the question and the factual context to generate the final answer: "USA".

6. **Learning Loop:** A dashed line labeled **Backpropagation** connects the output back to both the **Knowledge Retriever** and the **LLMs**. This indicates a training signal: the error on the final answer is propagated backward as gradients, which can update the parameters of both the retrieval and the generation components.
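The forward pass in steps 1 to 5 can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the diagram does not specify how the retriever matches entities or how facts are serialized for the LLM, so `Triple`, `retrieve`, `build_prompt`, the substring-based entity matching, and the prompt layout are all hypothetical. The LLM call itself is left out because the diagram names no particular model.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    subject: str
    predicate: str
    obj: str


# Toy knowledge graph holding only the two triples shown in the diagram.
KG = [
    Triple("Obama", "BornIn", "Honolulu"),
    Triple("Honolulu", "LocatedIn", "USA"),
]


def retrieve(question: str, kg: list[Triple], max_hops: int = 2) -> list[Triple]:
    """Hypothetical retriever: seed with subjects named in the question,
    then follow outgoing edges for up to max_hops steps."""
    frontier = {t.subject for t in kg if t.subject.lower() in question.lower()}
    facts: list[Triple] = []
    for _ in range(max_hops):
        hop = [t for t in kg if t.subject in frontier and t not in facts]
        facts.extend(hop)
        frontier = {t.obj for t in hop}
    return facts


def build_prompt(question: str, facts: list[Triple]) -> str:
    """Serialize the retrieved triples as context ahead of the question
    (an assumed prompt layout, not taken from the diagram)."""
    lines = [f"({t.subject}, {t.predicate}, {t.obj})" for t in facts]
    return "Facts:\n" + "\n".join(lines) + f"\n\nQ: {question}\nA:"


question = "Which country is Obama from?"
facts = retrieve(question, KG)
prompt = build_prompt(question, facts)
print(prompt)
```

The resulting `prompt` is what would be handed to the LLM in step 4. A real retriever would replace the substring match with entity linking and a learned relevance score.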

### Key Observations

* **Hybrid Architecture:** The system combines symbolic AI (structured Knowledge Graphs) with neural AI (LLMs).

* **Explicit Reasoning Path:** The retrieved facts provide a clear, logical chain (Obama -> BornIn -> Honolulu -> LocatedIn -> USA) that justifies the final answer, enhancing interpretability.

* **Closed-Loop System:** The presence of backpropagation signifies this is a trainable system, not a static pipeline. Errors in the final answer can be used to refine the retriever's search and the LLM's reasoning.

* **Spatial Layout:** The forward inference pass runs left to right, from the question to the answer, with the KGs feeding the retriever from the top-left. The backpropagation loop is drawn as a dashed line along the bottom, which sets it apart as a training-time path separate from inference.
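The reasoning path noted above can be reconstructed mechanically from the two retrieved triples. The sketch below assumes each entity has at most one outgoing edge, which holds for the diagram's example but not for a real knowledge graph; `reasoning_path` is a hypothetical helper name.

```python
# The two triples from the diagram, as (subject, predicate, object) tuples.
facts = [
    ("Obama", "BornIn", "Honolulu"),
    ("Honolulu", "LocatedIn", "USA"),
]


def reasoning_path(start: str, facts: list[tuple[str, str, str]]) -> list[str]:
    """Follow triples from `start`, assuming at most one outgoing edge per entity."""
    edges = {s: (p, o) for s, p, o in facts}
    path = [start]
    seen = {start}
    current = start
    while current in edges:
        predicate, nxt = edges[current]
        if nxt in seen:  # guard against cycles
            break
        path += [predicate, nxt]
        seen.add(nxt)
        current = nxt
    return path


path = reasoning_path("Obama", facts)
print(" -> ".join(path))  # Obama -> BornIn -> Honolulu -> LocatedIn -> USA
```

The last element of `path` is the answer entity, and the intermediate elements are the evidence a reader can inspect.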

### Interpretation

This diagram illustrates **Retrieval-Augmented Generation (RAG)** over knowledge graphs. The design targets two limitations of standalone LLMs: a tendency to hallucinate, and a lack of up-to-date or precise factual knowledge.

* **What it demonstrates:** It shows how grounding an LLM's response in externally retrieved, structured facts from a KG can lead to more accurate, verifiable, and trustworthy answers. The LLM's role shifts from being a sole repository of knowledge to being a reasoner that synthesizes provided information.

* **Relationships:** The Knowledge Retriever acts as a bridge between the unstructured query and the structured knowledge base. The LLM acts as the reasoning engine that interprets the retrieved facts in the context of the original question. The backpropagation link is critical, suggesting the entire system can be trained end-to-end, potentially optimizing what facts are retrieved and how they are used.

* **Significance:** This architecture suits AI assistants that must answer accurately about specific domains (using private KGs) or about changing information (using regularly updated KGs), while keeping the natural language fluency of LLMs. The Barack Obama example is a simple demonstration of two-hop reasoning: no single triple states the answer, so it has to be derived by chaining `(Obama, BornIn, Honolulu)` with `(Honolulu, LocatedIn, USA)`.
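To make the backpropagation link more concrete, here is one way a training signal could reach the retriever. The diagram does not say how the loss is defined, so this is an assumption: the retriever assigns a score to each candidate fact, a softmax turns the scores into retrieval probabilities, and the loss is the negative log of the answer likelihood marginalized over candidates. The numbers in `scores` and `answer_likelihoods` are made up for illustration, and the sketch covers only the retriever side; the LLM's own parameters would receive gradients from the same loss.

```python
import math


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def loss(scores, answer_likelihoods):
    """Negative log of the answer likelihood, marginalized over candidate facts."""
    probs = softmax(scores)
    marginal = sum(p * l for p, l in zip(probs, answer_likelihoods))
    return -math.log(marginal)


def grad_wrt_scores(scores, answer_likelihoods):
    """Analytic gradient of the loss with respect to each retriever score."""
    probs = softmax(scores)
    marginal = sum(p * l for p, l in zip(probs, answer_likelihoods))
    return [-p * (l - marginal) / marginal for p, l in zip(probs, answer_likelihoods)]


# Illustrative values only: three candidate facts with equal initial scores.
scores = [0.0, 0.0, 0.0]
# Assumed probability that the LLM answers "USA" when given each candidate;
# the third candidate stands for an unhelpful fact.
answer_likelihoods = [0.9, 0.8, 0.1]

grads = grad_wrt_scores(scores, answer_likelihoods)
learning_rate = 1.0
new_scores = [s - learning_rate * g for s, g in zip(scores, grads)]

print(loss(scores, answer_likelihoods), loss(new_scores, answer_likelihoods))
```

After one gradient step the scores of the two helpful candidates rise and the score of the unhelpful one falls, so the loss decreases. This is the sense in which answer errors can refine what the retriever returns.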