## Timeline of AI Development

### Overview

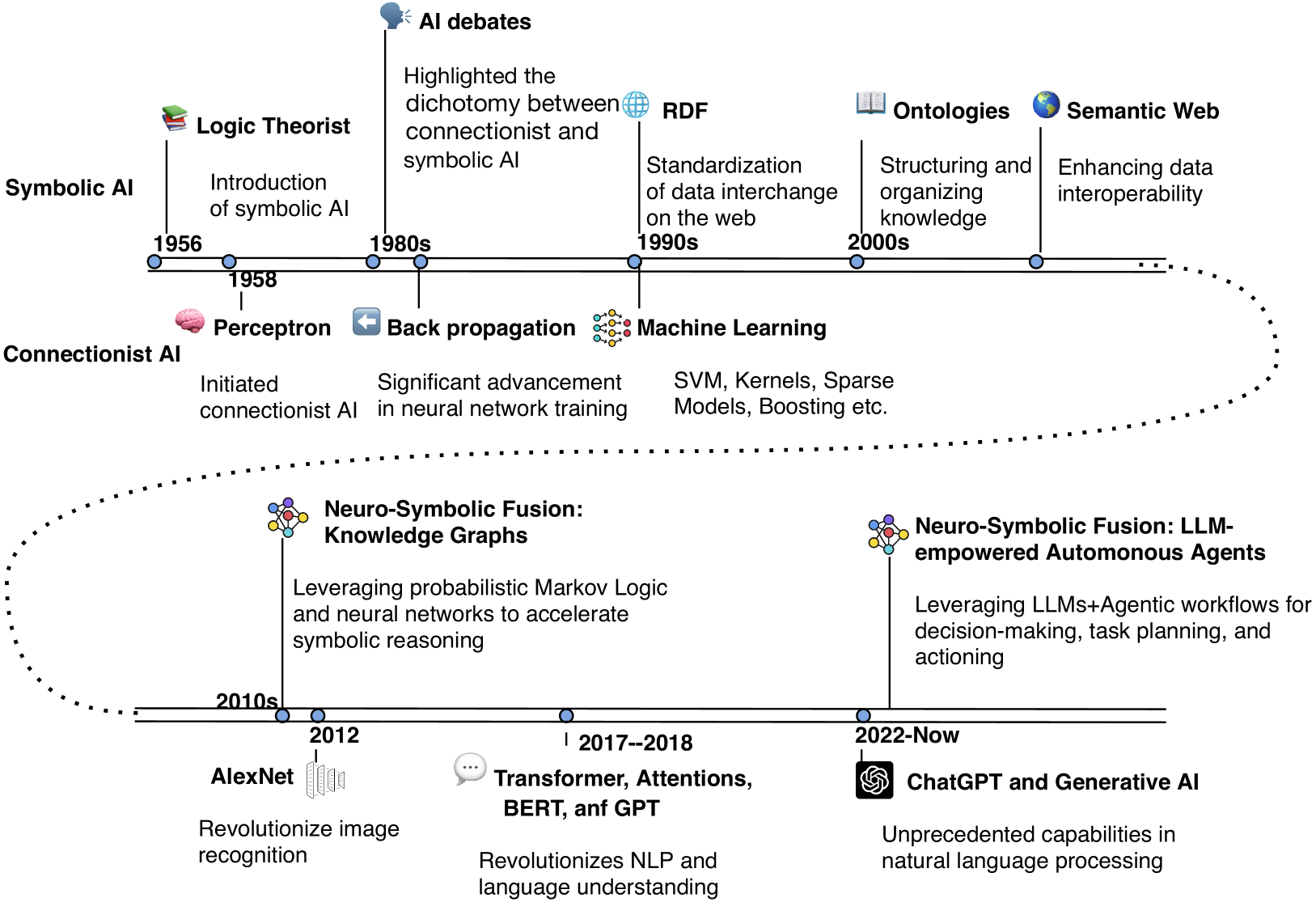

The timeline illustrates the evolution of Artificial Intelligence (AI) from its inception in the 1950s to the present day, highlighting key milestones and advancements in both symbolic and connectionist AI, as well as the emergence of neuro-symbolic fusion and LLMs.

### Components/Axes

- **Symbolic AI**: Introduced in 1956 with the Logic Theorist, it represents knowledge as explicit symbols and manipulates them with logical rules.

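- **Connectionist AI**: Gaining momentum in the 1980s, it builds on the Perceptron (introduced in 1958) and on Backpropagation, which made it practical to train multi-layer neural networks. The timeline also places SVMs, kernel methods, and sparse models in this data-driven era, although these are statistical learning methods rather than neural network training techniques.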

- **Neuro-Symbolic Fusion**: Emerging in the 2010s, it combines probabilistic logic (such as Markov Logic) with neural networks to make symbolic reasoning faster and more scalable.

- **LLMs (Large Language Models)**: Introduced in the 2020s, they power autonomous agents that can make decisions, plan tasks, and take actions.

### Content Details

- **1956**: The Logic Theorist is introduced, marking the beginning of symbolic AI.

- **1980s**: Backpropagation is popularized, reviving neural network research that began with the Perceptron (1958) and enabling the training of multi-layer networks.

- **1990s**: RDF standardizes data interchange on the web, and ontologies provide a way to structure and organize knowledge, improving data interoperability.

- **2000s**: The Semantic Web builds on RDF and ontologies to make web data machine-readable and interoperable across applications.

- **2010s**: AlexNet (2012) transforms image recognition, while attention mechanisms, the Transformer (2017), BERT, and GPT reshape natural language processing (NLP) and language understanding.

- **2020s**: ChatGPT (released in 2022) brings generative AI into mainstream use, demonstrating unprecedented capabilities in natural language processing.

### Key Observations

- The timeline shows a clear progression from symbolic AI to connectionist AI, then to neuro-symbolic fusion, and finally to LLMs.

- The largest jumps in AI capability follow advances in neural network training (Backpropagation, deep networks such as AlexNet) and the arrival of LLMs.

- The timeline highlights the role of data standardization and knowledge organization (RDF, ontologies, the Semantic Web) in making data and AI systems interoperable.

### Interpretation

The timeline suggests that AI has evolved from symbolic AI, which relies on explicit rules and logic, to connectionist AI, which uses neural networks to learn from data. Neuro-symbolic fusion represents a newer approach that combines the strengths of both: the interpretability and reasoning of symbolic methods with the learning ability of neural networks. LLMs have pushed natural language processing further still, to the point where they can drive autonomous agents. Alongside these shifts, standards for data interchange and knowledge representation have provided the shared foundation that lets these systems exchange and reuse information.