## Technical Diagram: LLM-Agent Knowledge Interaction Architectures

### Overview

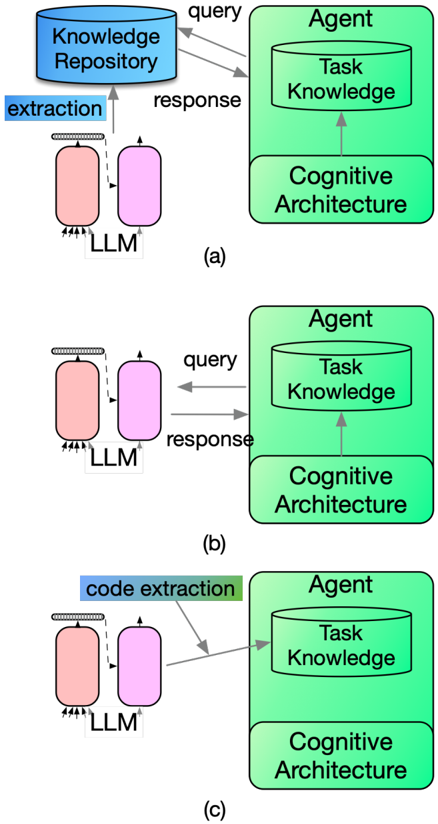

The image displays three distinct architectural diagrams, labeled (a), (b), and (c), illustrating different methods for a Large Language Model (LLM) to interact with and provide knowledge to an autonomous Agent system. Each diagram shows a flow of information between an LLM component and an Agent component, with variations in the presence of an intermediate knowledge repository and the method of knowledge transfer.

### Components/Axes

The diagrams are composed of the following labeled components. The LLM and the Agent are positioned consistently across all three panels; the Knowledge Repository appears only in panel (a):

* **LLM**: Depicted on the left side as two connected, rounded rectangular blocks (one pink, one purple). This represents the Large Language Model system.

* **Agent**: Depicted on the right side as a large green rounded rectangle. It contains two internal components:

    * **Task Knowledge**: A cylindrical database icon within the Agent.

    * **Cognitive Architecture**: A rectangular block below the Task Knowledge within the Agent.

* **Knowledge Repository**: A blue cylindrical database icon, present only in diagram (a), located in the top-left area.

* **Flow Arrows & Labels**: Arrows indicate the direction of data flow, accompanied by text labels:

    * `extraction` (blue label in (a))

    * `query` (gray arrow)

    * `response` (gray arrow)

    * `code extraction` (blue/green label in (c))

### Detailed Analysis

The three diagrams represent a progression or comparison of knowledge integration strategies:

**Diagram (a): Indirect Knowledge via Repository**

* **Flow**: The LLM performs an `extraction` process to populate a central `Knowledge Repository`. The `Agent` then sends a `query` to this repository and receives a `response`. The Agent's internal `Cognitive Architecture` feeds into its `Task Knowledge`.

* **Spatial Grounding**: The `Knowledge Repository` is positioned top-left, acting as an intermediary. The `extraction` arrow points upward from the LLM to the repository. The `query` and `response` arrows form a loop between the Agent and the repository.
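
The flow in panel (a) can be sketched in a few lines of Python. This is an illustrative sketch only: `stub_llm`, `KnowledgeRepository`, `extract`, and `Agent.acquire` are hypothetical names, the LLM is replaced by a deterministic stub, and storing each response in the agent's task knowledge is an assumption rather than something the diagram states.

```python
from typing import Callable, Dict, Iterable, Optional


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call."""
    return f"LLM output for: {prompt}"


class KnowledgeRepository:
    """External store that sits between the LLM and the agent."""

    def __init__(self) -> None:
        self._entries: Dict[str, str] = {}

    def store(self, topic: str, content: str) -> None:
        self._entries[topic] = content

    def query(self, topic: str) -> Optional[str]:
        # Returns None when nothing was extracted for this topic.
        return self._entries.get(topic)


def extract(llm: Callable[[str], str], topics: Iterable[str],
            repo: KnowledgeRepository) -> None:
    """The `extraction` arrow: the LLM populates the repository."""
    for topic in topics:
        repo.store(topic, llm(topic))


class Agent:
    def __init__(self, repo: KnowledgeRepository) -> None:
        self.repo = repo
        self.task_knowledge: Dict[str, str] = {}

    def acquire(self, topic: str) -> bool:
        """The `query`/`response` loop between agent and repository."""
        response = self.repo.query(topic)
        if response is None:
            return False
        # Assumption: responses are kept in the agent's task knowledge.
        self.task_knowledge[topic] = response
        return True


repo = KnowledgeRepository()
extract(stub_llm, ["open door"], repo)  # extraction happens up front
agent = Agent(repo)
found = agent.acquire("open door")      # answered by the repository
missing = agent.acquire("fly drone")    # never extracted, so not found
```

In this sketch the agent never calls the LLM itself; anything that was not extracted ahead of time is simply unavailable to it.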

**Diagram (b): Direct Query-Response Between Agent and LLM**

* **Flow**: The `Knowledge Repository` is absent. The `Agent` sends a `query` directly to the `LLM` and receives a `response` directly from it. The internal structure of the Agent (`Task Knowledge` and `Cognitive Architecture`) remains the same.

* **Spatial Grounding**: The interaction is a direct, horizontal loop between the LLM (left) and the Agent (right).
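
A minimal sketch of the direct loop in panel (b) follows. The names `stub_llm` and `DirectQueryAgent` are hypothetical, the LLM is a deterministic stub, and both the call counter and the caching of responses in task knowledge are assumptions added for illustration.

```python
from typing import Callable, Dict


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call."""
    return f"answer to: {prompt}"


class DirectQueryAgent:
    """Agent that sends each query straight to the LLM."""

    def __init__(self, llm: Callable[[str], str]) -> None:
        self.llm = llm
        self.llm_calls = 0
        self.task_knowledge: Dict[str, str] = {}

    def ask(self, question: str) -> str:
        # Every query is a fresh LLM invocation; there is no
        # intermediate repository to consult first.
        self.llm_calls += 1
        response = self.llm(question)
        # Assumption: the response is recorded as task knowledge.
        self.task_knowledge[question] = response
        return response


agent = DirectQueryAgent(stub_llm)
first = agent.ask("how do I open the door?")
second = agent.ask("how do I open the door?")
```

Asking the same question twice triggers two LLM calls here, which is the cost that a separate repository would avoid.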

**Diagram (c): Direct Code Extraction to Agent**

* **Flow**: The `Knowledge Repository` is absent. The `LLM` performs a `code extraction` process, and the resulting output is sent directly to the Agent's `Task Knowledge` component. There is no depicted `query`/`response` loop in this panel.

* **Spatial Grounding**: The `code extraction` label is positioned above the arrow that originates from the LLM and points directly to the Agent's `Task Knowledge` cylinder.
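
One possible reading of the `code extraction` arrow in panel (c) is sketched below: code is pulled out of raw LLM text and written into the agent's task knowledge with no query from the agent. Everything named here (`extract_code`, `ProvisionedAgent`, `install`, the sample LLM output) is hypothetical, and the syntax check with `ast.parse` is an added safeguard, not something shown in the diagram.

```python
import ast
import re
from typing import Dict, Optional

FENCE = "`" * 3  # Markdown code fence, built here to keep this example readable
CODE_BLOCK = re.compile(FENCE + r"(?:python)?\n(.*?)" + FENCE, re.DOTALL)


def extract_code(llm_output: str) -> Optional[str]:
    """Return the first fenced code block if it is valid Python, else None."""
    match = CODE_BLOCK.search(llm_output)
    if match is None:
        return None
    code = match.group(1)
    try:
        ast.parse(code)  # syntax check only; nothing is executed
    except SyntaxError:
        return None
    return code


class ProvisionedAgent:
    def __init__(self) -> None:
        self.task_knowledge: Dict[str, str] = {}

    def install(self, name: str, llm_output: str) -> bool:
        """One-way transfer: extracted code lands in task knowledge."""
        code = extract_code(llm_output)
        if code is None:
            return False
        self.task_knowledge[name] = code
        return True


# Hypothetical LLM output mixing prose with a fenced code block.
sample_output = (
    "Here is a routine:\n"
    f"{FENCE}python\n"
    "def greet(name):\n"
    "    return 'hello ' + name\n"
    f"{FENCE}\n"
    "Use as needed."
)

agent = ProvisionedAgent()
installed = agent.install("greet", sample_output)
rejected = agent.install("nothing", "No code in this reply.")
```

The agent in this sketch has no `ask` method at all, mirroring the absence of a `query`/`response` loop in the panel.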

### Key Observations

1. **Architectural Variants**: The core difference is the knowledge pathway: (a) uses a shared, external repository; (b) uses a direct, conversational query-response model; (c) uses a direct, one-way transfer of extracted code or structured knowledge.

2. **Agent Internal Consistency**: The internal composition of the `Agent` (`Task Knowledge` + `Cognitive Architecture`) is identical in all three diagrams, suggesting that the agent's design is held constant while only the knowledge pathway varies.

3. **LLM Role Evolution**: The LLM's role shifts from a data extractor for a repository (a), to a direct conversational partner (b), to a code/knowledge extractor for the agent's internal store (c).

4. **Simplification Trend**: The diagrams progress from a three-component system (LLM, Repository, Agent) in (a) to a two-component system (LLM, Agent) in (b) and (c), potentially indicating a move towards more integrated or efficient architectures.
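
The observations above can be summarized as a small data table. The sketch below only restates what is described for each panel; the `Variant` class and its field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Variant:
    panel: str
    components: Tuple[str, ...]
    flow_labels: Tuple[str, ...]
    has_query_response_loop: bool


VARIANTS = [
    Variant("a", ("LLM", "Knowledge Repository", "Agent"),
            ("extraction", "query", "response"), True),
    Variant("b", ("LLM", "Agent"), ("query", "response"), True),
    Variant("c", ("LLM", "Agent"), ("code extraction",), False),
]

for v in VARIANTS:
    print(f"({v.panel}) {len(v.components)} components, "
          f"labels: {', '.join(v.flow_labels)}")
```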

### Interpretation

These diagrams illustrate competing design philosophies for augmenting an AI agent's knowledge using an LLM.

* **Diagram (a)** represents a **decoupled, retrieval-style architecture**, similar in spirit to retrieval-augmented generation (RAG), although here it is the agent, not the LLM, that queries the store. The Knowledge Repository acts as a persistent, queryable memory. This allows for structured knowledge management and potentially reduces direct load on the LLM, but it adds complexity and an additional point of failure.

* **Diagram (b)** represents a **direct, service-oriented architecture**. The LLM acts as a real-time knowledge service for the agent. This is simpler and more dynamic, but every query requires a fresh LLM call, which may be inefficient when queries are frequent, and there is no persistent, updatable knowledge base separate from the LLM's weights.

* **Diagram (c)** represents a **direct knowledge injection or tool-use architecture**. The LLM extracts or generates specific code or structured data (`code extraction`), which is written directly into the agent's task knowledge. This is a one-way transfer, well suited to providing the agent with new capabilities, scripts, or fixed data sets, but not to open-ended Q&A.

The progression suggests an investigation into optimizing the interface between a reasoning agent (with its own cognitive architecture and task knowledge) and a large language model, weighing trade-offs between persistence, dynamism, and efficiency of knowledge transfer. The absence of a query/response loop in (c) is particularly notable, highlighting a fundamentally different interaction paradigm focused on provisioning rather than consultation.