## Heatmap: Classification Accuracies

### Overview

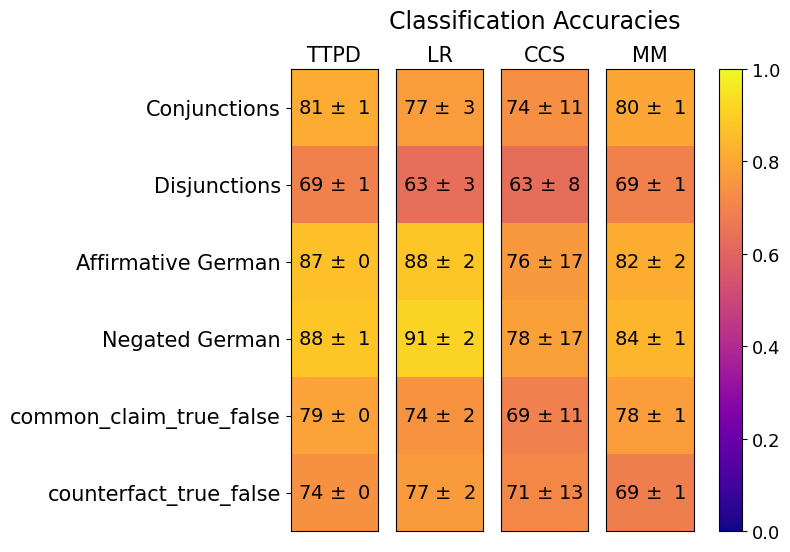

The image is a heatmap titled "Classification Accuracies." It displays the performance (accuracy) of four different methods (TTPD, LR, CCS, MM) across six different classification tasks or datasets. Performance is represented by both a numerical value (accuracy percentage with uncertainty) and a color gradient, where yellow indicates higher accuracy and purple indicates lower accuracy.

### Components/Axes

* **Title:** "Classification Accuracies"

* **Y-axis (Rows):** Six classification tasks/datasets:
    1. Conjunctions
    2. Disjunctions
    3. Affirmative German
    4. Negated German
    5. `common_claim_true_false`
    6. `counterfact_true_false`

* **X-axis (Columns):** Four methods/models:
    1. TTPD
    2. LR
    3. CCS
    4. MM

* **Legend/Color Scale:** A vertical color bar on the right side of the chart. It maps color to accuracy value, ranging from 0.0 (dark purple) to 1.0 (bright yellow). Major tick marks are at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Data Cells:** Each cell contains the mean accuracy followed by a "±" symbol and the uncertainty (likely standard deviation or standard error). The cell's background color corresponds to the mean accuracy value on the color scale.
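The cell labels give accuracy in percent, while the color bar runs from 0.0 to 1.0. A cell's position on the bar is therefore presumably its mean divided by 100. The sketch below splits a label such as "81 ± 1" into its two numbers and applies that assumed mapping. The helper names `parse_cell` and `color_position` are hypothetical and are not taken from the code that produced the figure.

```python
import re

# Matches labels such as "81 ± 1": a mean, the "±" sign, then an uncertainty.
_CELL = re.compile(r"^\s*(\d+(?:\.\d+)?)\s*±\s*(\d+(?:\.\d+)?)\s*$")


def parse_cell(label: str) -> tuple[float, float]:
    """Split a heatmap cell label into (mean, uncertainty), both in percent."""
    match = _CELL.match(label)
    if match is None:
        raise ValueError(f"unrecognised cell label: {label!r}")
    return float(match.group(1)), float(match.group(2))


def color_position(mean_percent: float) -> float:
    """Assumed position on the 0.0-1.0 color bar for a mean given in percent."""
    return mean_percent / 100.0


print(parse_cell("81 ± 1"))   # (81.0, 1.0)
print(color_position(81.0))   # 0.81
```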

### Detailed Analysis

**Data Extraction (accuracy in %, mean ± uncertainty):**

| Task / Dataset | TTPD | LR | CCS | MM |
| :--- | :--- | :--- | :--- | :--- |
| **Conjunctions** | 81 ± 1 | 77 ± 3 | 74 ± 11 | 80 ± 1 |
| **Disjunctions** | 69 ± 1 | 63 ± 3 | 63 ± 8 | 69 ± 1 |
| **Affirmative German** | 87 ± 0 | 88 ± 2 | 76 ± 17 | 82 ± 2 |
| **Negated German** | 88 ± 1 | 91 ± 2 | 78 ± 17 | 84 ± 1 |
| **common_claim_true_false** | 79 ± 0 | 74 ± 2 | 69 ± 11 | 78 ± 1 |
| **counterfact_true_false** | 74 ± 0 | 77 ± 2 | 71 ± 13 | 69 ± 1 |
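One way to condense the table is an unweighted mean per method, which gives each task equal weight. This summary is not part of the original figure. The sketch below transcribes the mean values from the table into a hypothetical `ACCURACY` dictionary, dropping the uncertainties, and averages each column.

```python
# Mean accuracies (percent) transcribed from the table; uncertainties omitted.
# Column order: TTPD, LR, CCS, MM.
METHODS = ["TTPD", "LR", "CCS", "MM"]
ACCURACY = {
    "Conjunctions":            [81, 77, 74, 80],
    "Disjunctions":            [69, 63, 63, 69],
    "Affirmative German":      [87, 88, 76, 82],
    "Negated German":          [88, 91, 78, 84],
    "common_claim_true_false": [79, 74, 69, 78],
    "counterfact_true_false":  [74, 77, 71, 69],
}


def method_means(table: dict[str, list[int]]) -> dict[str, float]:
    """Unweighted average of each method's accuracy over all tasks."""
    n_tasks = len(table)
    return {
        method: sum(row[col] for row in table.values()) / n_tasks
        for col, method in enumerate(METHODS)
    }


for method, mean in method_means(ACCURACY).items():
    print(f"{method}: {mean:.1f}")
# TTPD: 79.7, LR: 78.3, CCS: 71.8, MM: 77.0 (one per line)
```

A plain average ignores the ± values. Small gaps, such as TTPD against LR, should therefore not be over-read.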

**Color-Coded Performance Trends:**

* **Highest Accuracy (Bright Yellow):** The cell for **LR on Negated German (91 ± 2)** is the brightest yellow, indicating the highest accuracy in the chart.

* **High Accuracy (Yellow-Orange):** TTPD and LR on the two German tasks (Affirmative and Negated) score in the 87-91 range. MM on the German tasks (82 and 84), and TTPD (81) and MM (80) on Conjunctions, are also relatively high, though below that range.

* **Moderate Accuracy (Orange):** Most of the remaining cells fall in this range. They include every result for `common_claim_true_false` and `counterfact_true_false` (69 to 79), LR (77) and CCS (74) on Conjunctions, and CCS on both German tasks (76 and 78).

* **Lower Accuracy (Red-Orange):** Disjunctions is the weakest row, with accuracies from 63 to 69 across the four methods. **LR and CCS on Disjunctions (63 ± 3 and 63 ± 8)** tie for the lowest accuracy in the chart and therefore share the darkest shade.

* **Uncertainty (± value):** **CCS has the largest uncertainty on every task** (±8 to ±17). The heatmap colors encode only the mean, so this spread is visible only in the cell annotations. TTPD (±0 to ±1) and MM (±1 to ±2) show the smallest uncertainties, with LR in between (±2 to ±3).
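The extremes and uncertainty ranges listed above can be checked directly against the transcribed numbers. The helpers below (`extreme_cells` and `uncertainty_range` are hypothetical names) keep ties. This matters here because two cells share the lowest value.

```python
# Hypothetical transcription of the table: (mean, uncertainty) in percent,
# columns in the order TTPD, LR, CCS, MM.
METHODS = ["TTPD", "LR", "CCS", "MM"]
CELLS = {
    "Conjunctions":            [(81, 1), (77, 3), (74, 11), (80, 1)],
    "Disjunctions":            [(69, 1), (63, 3), (63, 8), (69, 1)],
    "Affirmative German":      [(87, 0), (88, 2), (76, 17), (82, 2)],
    "Negated German":          [(88, 1), (91, 2), (78, 17), (84, 1)],
    "common_claim_true_false": [(79, 0), (74, 2), (69, 11), (78, 1)],
    "counterfact_true_false":  [(74, 0), (77, 2), (71, 13), (69, 1)],
}


def extreme_cells(cells, pick):
    """Every (task, method, mean) whose mean equals pick(all means); ties kept."""
    target = pick(mean for row in cells.values() for mean, _ in row)
    return [
        (task, METHODS[col], mean)
        for task, row in cells.items()
        for col, (mean, _) in enumerate(row)
        if mean == target
    ]


def uncertainty_range(cells, method):
    """Smallest and largest ± value reported for one method across all tasks."""
    col = METHODS.index(method)
    spreads = [row[col][1] for row in cells.values()]
    return min(spreads), max(spreads)


print(extreme_cells(CELLS, max))  # [('Negated German', 'LR', 91)]
print(extreme_cells(CELLS, min))  # [('Disjunctions', 'LR', 63), ('Disjunctions', 'CCS', 63)]
for method in METHODS:
    print(method, uncertainty_range(CELLS, method))
```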

### Key Observations

1. **Task Difficulty:** "Disjunctions" is the most challenging task and gives the lowest accuracy for every method (for MM it ties with `counterfact_true_false` at 69). The German language tasks ("Affirmative German" and "Negated German") appear to be the easiest: "Negated German" is the top-scoring task for all four methods.

2. **Method Performance:**

* **TTPD** is highly consistent. It has very low uncertainty (±0 or ±1) on every task and the best or tied-best accuracy on three of the six tasks (Conjunctions, Disjunctions, `common_claim_true_false`).

* **LR** achieves the single highest accuracy (91 on Negated German) and also beats TTPD on Affirmative German and `counterfact_true_false`. However, its uncertainties are larger (±2 to ±3), and it trails TTPD on Conjunctions, Disjunctions and `common_claim_true_false`.

* **CCS** has the poorest and most variable performance. It has the lowest accuracy on five of the six tasks (tied with LR on Disjunctions) and by far the largest uncertainties.

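* **MM** performs close to TTPD on most tasks. It matches TTPD on Disjunctions (69), trails by one point on Conjunctions (80 vs. 81) and `common_claim_true_false` (78 vs. 79), and is lower on the German tasks (82 and 84 vs. 87 and 88). It falls furthest behind on `counterfact_true_false` (69 vs. 74).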

3. **Affirmative vs. Negated German:** All four methods score slightly higher on "Negated German" than on "Affirmative German". The gap is 1 point for TTPD, 3 for LR, and 2 each for CCS and MM.
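The task-difficulty and German-task comparisons in these observations reduce to simple column operations on the table. The sketch below uses hypothetical helpers (`negation_gap`, `hardest_tasks`) over a transcription of the mean values. It keeps ties so that MM's two equally weak tasks both appear.

```python
# Mean accuracies (percent) transcribed from the table; columns TTPD, LR, CCS, MM.
METHODS = ["TTPD", "LR", "CCS", "MM"]
ACCURACY = {
    "Conjunctions":            [81, 77, 74, 80],
    "Disjunctions":            [69, 63, 63, 69],
    "Affirmative German":      [87, 88, 76, 82],
    "Negated German":          [88, 91, 78, 84],
    "common_claim_true_false": [79, 74, 69, 78],
    "counterfact_true_false":  [74, 77, 71, 69],
}


def negation_gap(table):
    """Negated German minus Affirmative German accuracy, per method (points)."""
    negated, affirmative = table["Negated German"], table["Affirmative German"]
    return {method: negated[col] - affirmative[col] for col, method in enumerate(METHODS)}


def hardest_tasks(table):
    """For each method, every task tied for its lowest mean accuracy."""
    hardest = {}
    for col, method in enumerate(METHODS):
        low = min(row[col] for row in table.values())
        hardest[method] = [task for task, row in table.items() if row[col] == low]
    return hardest


print(negation_gap(ACCURACY))  # {'TTPD': 1, 'LR': 3, 'CCS': 2, 'MM': 2}
print(hardest_tasks(ACCURACY))
```

On these numbers, every gap is positive. Disjunctions is the hardest task for each method, and MM ties it with `counterfact_true_false`.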

### Interpretation

This heatmap provides a comparative benchmark of four methods on a suite of logical and linguistic classification tasks. The data suggests:

* **Task-Specific Strengths:** No single method is best on every task. LR has the top score on both German tasks and on `counterfact_true_false`. TTPD is best or tied-best on the other three tasks and offers the most reliable (lowest-uncertainty) performance. This suggests that method selection may need to be tailored to the specific type of classification problem.

* **The Challenge of Disjunctions:** The uniformly low scores on "Disjunctions" suggest that this logical structure is harder for these methods to classify correctly than conjunctions or simple true/false claims. This could be a valuable focus area for future model improvement.

* **Uncertainty as a Metric:** The high uncertainty for CCS suggests that its results are less reliable, or that it is more sensitive to run-to-run variation (for example, random seeds or data splits). The figure does not state what the ± values measure. In contrast, the low uncertainty of TTPD indicates robust and stable performance.

* **Linguistic Nuance:** The high accuracy on German tasks, particularly for LR, might indicate these models (or their training data) have strong capabilities in handling German syntax and negation, or that these specific datasets are less complex than the logical reasoning tasks like disjunctions.
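The "no single best method" point can be made concrete by taking, for each task, the method or methods with the highest mean and keeping ties. A minimal sketch with a hypothetical `best_methods` helper over a transcription of the table's means:

```python
# Mean accuracies (percent) transcribed from the table; columns TTPD, LR, CCS, MM.
METHODS = ["TTPD", "LR", "CCS", "MM"]
ACCURACY = {
    "Conjunctions":            [81, 77, 74, 80],
    "Disjunctions":            [69, 63, 63, 69],
    "Affirmative German":      [87, 88, 76, 82],
    "Negated German":          [88, 91, 78, 84],
    "common_claim_true_false": [79, 74, 69, 78],
    "counterfact_true_false":  [74, 77, 71, 69],
}


def best_methods(table):
    """Map each task to the method(s) whose mean accuracy is highest (ties kept)."""
    winners = {}
    for task, row in table.items():
        top = max(row)
        winners[task] = [METHODS[col] for col, value in enumerate(row) if value == top]
    return winners


for task, winners in best_methods(ACCURACY).items():
    print(f"{task}: {', '.join(winners)}")
```

On these numbers, TTPD wins or ties on three tasks (sharing Disjunctions with MM), and LR wins the other three. This comparison uses means only and ignores the ± values.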

In summary, the chart reveals a landscape where task difficulty varies significantly, and model performance is highly dependent on the specific logical or linguistic challenge presented. TTPD emerges as a robust all-rounder, LR as a high-potential specialist for certain tasks, and CCS as the least reliable method in this comparison.