## [Line Charts]: ARC-C Performance Metrics

### Overview

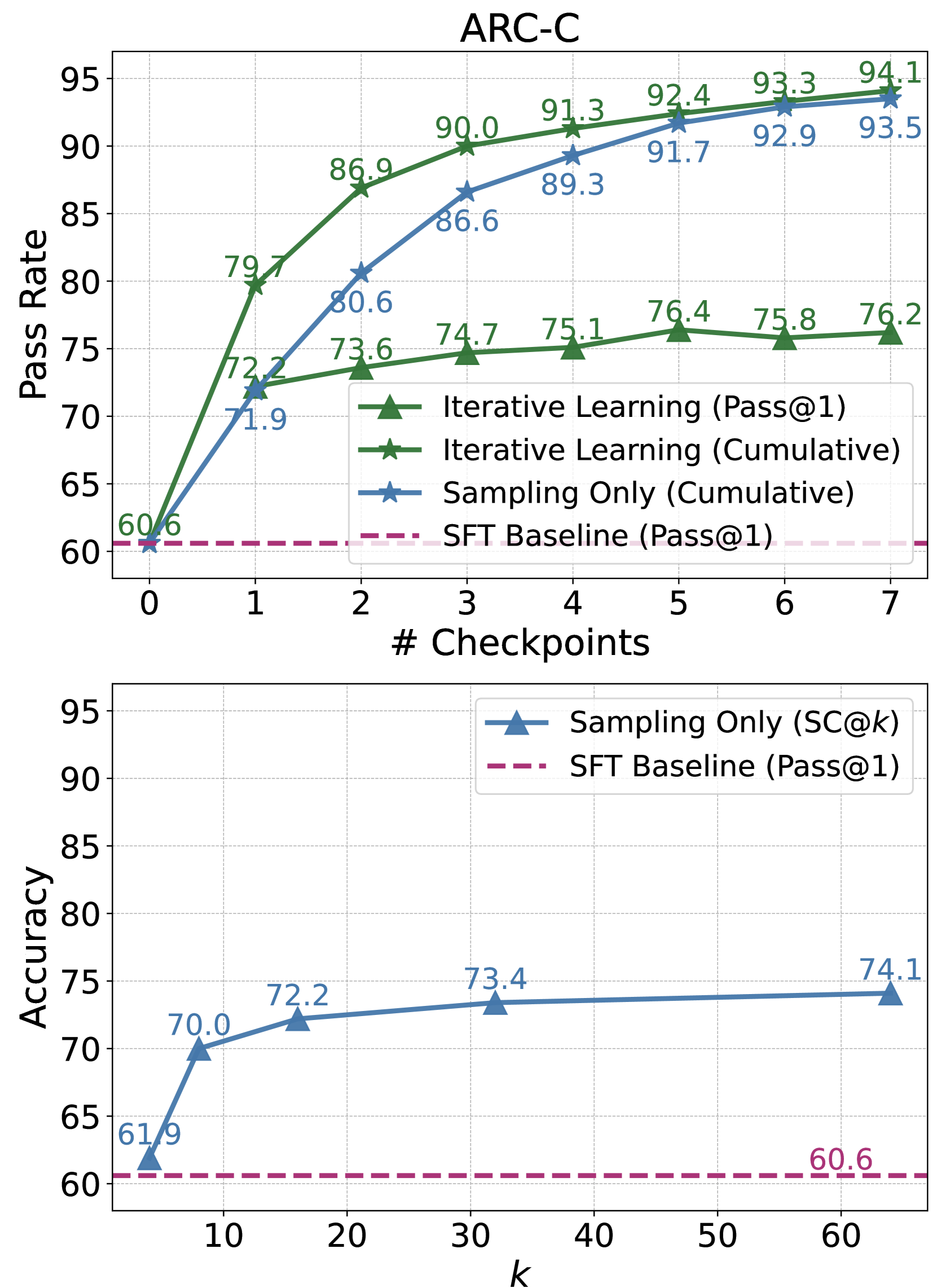

The image contains two line charts stacked vertically, both titled "ARC-C". They display performance metrics (Pass Rate and Accuracy) for different machine learning training/evaluation methods across varying parameters (Checkpoints and k). The charts compare "Iterative Learning", "Sampling Only", and an "SFT Baseline".

### Components/Axes

**Top Chart:**

* **Title:** ARC-C

* **Y-axis:** Label: "Pass Rate". Scale: 60 to 95, with major ticks every 5 units.

* **X-axis:** Label: "# Checkpoints". Scale: 0 to 7, with integer ticks.

* **Legend (Center-Right):**

  * Green line with upward-pointing triangle markers: "Iterative Learning (Pass@1)"

  * Green line with star markers: "Iterative Learning (Cumulative)"

  * Blue line with star markers: "Sampling Only (Cumulative)"

  * Pink dashed line: "SFT Baseline (Pass@1)"

**Bottom Chart:**

* **Title:** ARC-C

* **Y-axis:** Label: "Accuracy". Scale: 60 to 95, with major ticks every 5 units.

* **X-axis:** Label: "k". Major ticks at 10, 20, 30, 40, 50, 60. The plotted points run from k=1 to k=64, so the axis extends slightly beyond the labeled ticks on both ends.

* **Legend (Top-Right):**

  * Blue line with upward-pointing triangle markers: "Sampling Only (SC@k)"

  * Pink dashed line: "SFT Baseline (Pass@1)"

### Detailed Analysis

**Top Chart - Data Points & Trends:**

* **Iterative Learning (Pass@1) [Green Triangles]:** Starts at 60.6 (checkpoint 0). Increases sharply to 72.2 (checkpoint 1), then rises more gradually: 73.6 (checkpoint 2), 74.7 (checkpoint 3), 75.1 (checkpoint 4), 76.4 (checkpoint 5), 75.8 (checkpoint 6), 76.2 (checkpoint 7). **Trend:** Steep initial rise, followed by a plateau around 76 with a small dip at checkpoint 6.

* **Iterative Learning (Cumulative) [Green Stars]:** Starts at 60.6 (checkpoint 0). Increases sharply to 79.7 (checkpoint 1), then continues upward with shrinking increments: 86.9 (checkpoint 2), 90.0 (checkpoint 3), 91.3 (checkpoint 4), 92.4 (checkpoint 5), 93.3 (checkpoint 6), 94.1 (checkpoint 7). **Trend:** Monotonic increase that flattens as it approaches 95.

* **Sampling Only (Cumulative) [Blue Stars]:** Starts at 60.6 (checkpoint 0). Increases to 71.9 (checkpoint 1), then follows a steady upward curve: 80.6 (checkpoint 2), 86.6 (checkpoint 3), 89.3 (checkpoint 4), 91.7 (checkpoint 5), 92.9 (checkpoint 6), 93.5 (checkpoint 7). **Trend:** Steady upward slope, below the Iterative Learning (Cumulative) line at every checkpoint after 0 but converging towards it at higher checkpoints.

* **SFT Baseline (Pass@1) [Pink Dashed Line]:** Constant horizontal line at approximately 60.6 across all checkpoints.

**Bottom Chart - Data Points & Trends:**

* **Sampling Only (SC@k) [Blue Triangles]:** Data points at specific k values: 61.9 (k=1), 70.0 (k=8), 72.2 (k=16), 73.4 (k=32), 74.1 (k=64). **Trend:** Increases with k, but the curve saturates: each doubling of k beyond 8 adds less than the one before (+2.2, +1.2, +0.7), which is slower than logarithmic growth.

* **SFT Baseline (Pass@1) [Pink Dashed Line]:** Constant horizontal line at 60.6 across all k values.
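The trends described above can be checked by computing the change between consecutive points. The following is a minimal sketch that uses only the values transcribed in this section; the variable and function names (`top_chart`, `sc_at_k`, `step_gains`) are illustrative and do not come from the chart.

```python
# Values transcribed from the data points listed above.
# For the top chart, the list index is the checkpoint number (0 to 7).
top_chart = {
    "Iterative Learning (Pass@1)": [60.6, 72.2, 73.6, 74.7, 75.1, 76.4, 75.8, 76.2],
    "Iterative Learning (Cumulative)": [60.6, 79.7, 86.9, 90.0, 91.3, 92.4, 93.3, 94.1],
    "Sampling Only (Cumulative)": [60.6, 71.9, 80.6, 86.6, 89.3, 91.7, 92.9, 93.5],
}

# Bottom chart: (k, accuracy) pairs for "Sampling Only (SC@k)".
sc_at_k = [(1, 61.9), (8, 70.0), (16, 72.2), (32, 73.4), (64, 74.1)]


def step_gains(values):
    """Return the change between consecutive points, rounded to one decimal."""
    return [round(later - earlier, 1) for earlier, later in zip(values, values[1:])]


gains = {name: step_gains(values) for name, values in top_chart.items()}
sc_gains = step_gains([accuracy for _, accuracy in sc_at_k])

for name, series_gains in gains.items():
    print(f"{name}: {series_gains}")
print(f"Sampling Only (SC@k): {sc_gains}")
```

The Pass@1 series gains 11.6 points at the first checkpoint and at most 1.4 at any later one, while both cumulative series keep gaining at every step, only by smaller amounts.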

### Key Observations

1. **Performance Hierarchy:** In the top chart, "Iterative Learning (Cumulative)" achieves the highest Pass Rate, followed closely by "Sampling Only (Cumulative)". "Iterative Learning (Pass@1)" performs significantly lower than the cumulative methods but still well above the baseline.

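2. **Baseline Comparison:** Every non-baseline series ends well above the static "SFT Baseline" of 60.6. The margin is small only at the starting points: all top-chart series equal the baseline at Checkpoint 0, and SC@k at k=1 is 61.9.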

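3. **Diminishing Returns:** Both charts show diminishing returns. In the top chart, the Pass@1 line flattens after Checkpoint 1, and the two cumulative lines gain progressively less per step, adding no more than 1.2 points per checkpoint over the last two steps. In the bottom chart, increasing `k` from 16 to 64 adds only 1.9 points of Accuracy (72.2 to 74.1).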

4. **Method Comparison:** The "Iterative Learning (Cumulative)" method leads "Sampling Only (Cumulative)" at every checkpoint after 0, where both start at 60.6. The gap is largest at Checkpoint 1 (7.8 points) and shrinks to 0.6 points by Checkpoint 7.
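The method comparison can be made concrete by subtracting the two cumulative series checkpoint by checkpoint. This is a minimal sketch using the values transcribed from the top and bottom charts; the variable names are illustrative.

```python
# Cumulative pass rates transcribed from the top chart; index = checkpoint number.
iterative_cumulative = [60.6, 79.7, 86.9, 90.0, 91.3, 92.4, 93.3, 94.1]
sampling_cumulative = [60.6, 71.9, 80.6, 86.6, 89.3, 91.7, 92.9, 93.5]

# Advantage of Iterative Learning over Sampling Only at each checkpoint.
gaps = [
    round(iterative - sampling, 1)
    for iterative, sampling in zip(iterative_cumulative, sampling_cumulative)
]

# Checkpoint at which the advantage is largest.
widest_checkpoint = max(range(len(gaps)), key=lambda checkpoint: gaps[checkpoint])

# Single-answer comparison across the two charts:
# Iterative Learning Pass@1 at checkpoint 7 versus Sampling Only SC@64.
pass1_final = 76.2
sc_at_64 = 74.1
single_answer_margin = round(pass1_final - sc_at_64, 1)

print("gap per checkpoint:", gaps)
print("widest gap at checkpoint", widest_checkpoint)
print("Pass@1 (checkpoint 7) minus SC@64:", single_answer_margin)
```

The gap series shows why the cumulative curves alone are weak evidence for one method over the other at late checkpoints, and why the single-answer margin is a useful second comparison.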

### Interpretation

These charts likely evaluate techniques for improving a language model's reasoning on ARC-C, which most commonly denotes the Challenge set of the AI2 Reasoning Challenge (ARC), a benchmark of multiple-choice grade-school science questions.

* **What the data suggests:** Both iterative learning and repeated sampling lift performance above the supervised fine-tuning (SFT) baseline of 60.6: Pass@1 reaches 76.2 after seven checkpoints, SC@k reaches 74.1 at k=64, and both cumulative curves exceed 93. The cumulative metrics (which likely count a problem as solved if any attempt so far solves it) show that the model's *potential* to solve problems is much higher than its single-attempt (Pass@1) performance.

* **How elements relate:** The top chart shows the learning trajectory over training iterations (checkpoints). The bottom chart isolates the effect of the sampling parameter `k` (likely the number of samples generated per problem) on accuracy for the "Sampling Only" method. The consistent SFT baseline in both provides a fixed reference point.

* **Notable trends/anomalies:** The most significant trend is the large gap between cumulative and single-pass evaluation: by Checkpoint 7 the cumulative curves sit roughly 17 to 18 points above Pass@1. This indicates that correct answers exist somewhere among the model's attempts far more often than its single answer is correct; it does not by itself show self-correction. The plateau in the "Iterative Learning (Pass@1)" line suggests a limit to the model's single-shot reasoning capability under this training regime, even as its cumulative capability continues to grow. Evidence for iterative learning over pure sampling is clearest in the early checkpoints, where the cumulative gap reaches 7.8 points, and in the single-answer comparison, where Pass@1 at Checkpoint 7 (76.2) exceeds SC@k at k=64 (74.1). By Checkpoint 7 the two cumulative curves differ by only 0.6 points.
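The chart does not define its metrics, so the following sketch is only an illustration of how Pass@1, a cumulative pass rate, and SC@k (self-consistency, read here as a majority vote over k samples) are commonly computed. The definitions, function names, and toy answers below are all assumptions and are not taken from the figure.

```python
from collections import Counter


def pass_at_1(samples_per_problem, gold):
    """Percentage of problems whose first sampled answer is correct."""
    hits = sum(samples[0] == answer for samples, answer in zip(samples_per_problem, gold))
    return 100.0 * hits / len(gold)


def cumulative_pass_rate(samples_per_problem, gold):
    """Percentage of problems solved by at least one of the attempts (assumed definition)."""
    hits = sum(answer in samples for samples, answer in zip(samples_per_problem, gold))
    return 100.0 * hits / len(gold)


def self_consistency_at_k(samples_per_problem, gold, k):
    """Percentage of problems where the majority vote over the first k samples is correct.

    Ties are broken in favor of the answer that was sampled first, which is how
    Counter.most_common orders equal counts.
    """
    hits = 0
    for samples, answer in zip(samples_per_problem, gold):
        voted = Counter(samples[:k]).most_common(1)[0][0]
        hits += voted == answer
    return 100.0 * hits / len(gold)


# Hypothetical toy data: four multiple-choice problems, three sampled answers each.
gold = ["A", "B", "C", "D"]
samples_per_problem = [
    ["A", "A", "B"],  # first answer correct, majority correct
    ["C", "B", "B"],  # first answer wrong, majority correct
    ["A", "A", "C"],  # correct answer appears once, majority wrong
    ["A", "B", "C"],  # correct answer never sampled
]

p1 = pass_at_1(samples_per_problem, gold)
cumulative = cumulative_pass_rate(samples_per_problem, gold)
sc3 = self_consistency_at_k(samples_per_problem, gold, k=3)
print(p1, cumulative, sc3)
```

Under these assumed definitions the cumulative rate can never fall below SC@k computed on the same samples, because a correct majority vote requires at least one correct sample. That is consistent with the cumulative curves sitting well above both the Pass@1 and SC@k curves in the charts.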