## Diagram: Neural Network Processing with PDE Output

### Overview

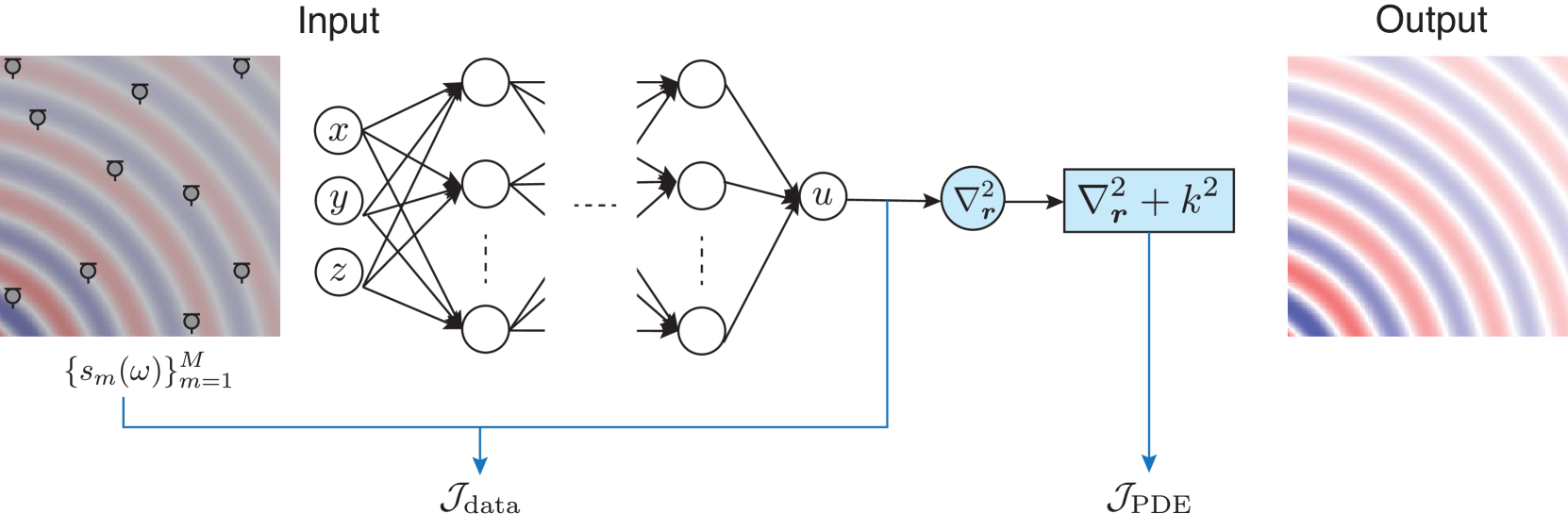

The diagram shows a computational pipeline in which input data passes through a neural network and then a partial differential equation (PDE) operator to produce an output. The input and output are drawn as heatmaps with radial gradient patterns; the neural network is drawn as interconnected nodes and the PDE stage as a block containing mathematical operators.

### Components/Axes

1. **Input Section**:

- **Heatmap**: Labeled "Input" with a radial gradient (blue to red) and scattered black symbols (possibly data points or markers).

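- **Mathematical Notation**: `{s_m(ω)}^M_{m=1}` denotes a set of M functions or signals `s_m`, each depending on ω and indexed from m=1 to M. The symbol ω conventionally stands for angular frequency, although the diagram does not define it.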

2. **Neural Network**:

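- **Nodes**: Labeled `x`, `y`, `z`, and `u`. The diagram does not define them; they may mark input, hidden, and output layers, or `x`, `y`, `z` may be spatial coordinates fed to the network with `u` as the predicted field value.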

- **Connections**: Dense interconnections between nodes, with dashed lines suggesting optional or residual pathways.

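- **Data Flow**: Arrows labeled `J_data` connect the input data to the network. The diagram does not define `J`; in physics-informed models it commonly denotes a loss term, so `J_data` may mark a data-misfit objective rather than a plain data path.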

3. **PDE Operator**:

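- **Block**: Contains the operator `∇²_r + k²`, where `∇²_r` is the Laplacian taken with respect to `r` (either a radial coordinate or a position vector; the diagram does not say which) and `k²` is a constant, conventionally the squared wavenumber.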

- **Data Flow**: Arrows labeled `J_PDE` connect the output of the neural network to the PDE operator. If `J` denotes a loss term, `J_PDE` may mark a penalty on the PDE residual of the network output.

4. **Output Section**:

- **Heatmap**: Labeled "Output" with a similar radial gradient pattern to the input, suggesting a transformed version of the input data.
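
The network block can be sketched as a small fully connected forward pass. This is a minimal illustration, not the diagram's actual architecture: it assumes `x`, `y`, `z` are coordinate inputs and `u` is a scalar output, and the layer widths, the `tanh` activation, and the names `init_mlp` and `mlp_forward` are hypothetical. The dashed connections in the diagram are not modeled here.

```python
import numpy as np

def init_mlp(layer_sizes, seed=0):
    """Create (weight, bias) pairs for a fully connected network."""
    rng = np.random.default_rng(seed)
    params = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        # Scale weights by 1/sqrt(fan_in) to keep activations in a sane range.
        W = rng.normal(0.0, 1.0 / np.sqrt(n_in), size=(n_in, n_out))
        b = np.zeros(n_out)
        params.append((W, b))
    return params

def mlp_forward(params, coords):
    """Map an (N, 3) array of (x, y, z) points to N scalar values u."""
    h = coords
    for W, b in params[:-1]:
        h = np.tanh(h @ W + b)      # hidden layers (assumed tanh)
    W, b = params[-1]
    return (h @ W + b)[:, 0]        # linear output layer, one value per point

# Hypothetical sizes: 3 inputs (x, y, z), two hidden layers, 1 output (u).
params = init_mlp([3, 32, 32, 1])
coords = np.random.default_rng(1).uniform(-1.0, 1.0, size=(5, 3))
u = mlp_forward(params, coords)
```

Each row of `coords` is one evaluation point, so `u` holds one predicted value per point.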

### Detailed Analysis

- **Input Heatmap**: The radial gradient (blue to red) likely represents a spatial or frequency-domain signal, with black symbols marking specific data points or features.

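- **Neural Network**: The labels admit two readings: three layers (`x`, `y`, `z`) feeding a final node `u`, or three coordinate inputs mapped to a field value `u`. The second reading fits the PDE block, since the operator acts on a field defined over space. The dense connectivity implies a fully connected feedforward network, while the dashed lines may indicate skip connections or dropout.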

- **PDE Operator**: `∇²_r + k²` is the Helmholtz operator; setting `(∇²_r + k²) u = 0` gives the Helmholtz equation, in which `k` is the wavenumber. The equation describes time-harmonic (single-frequency) wave fields, as in acoustics or electromagnetics.

- **Output Heatmap**: The output pattern mirrors the input's radial structure but with altered intensity, indicating the neural network and PDE have modified the input signal.
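
One way to make the operator concrete is to apply a finite-difference version of `∇² + k²` to a field and inspect the residual. The sketch below assumes a 2D Cartesian grid and a five-point stencil, neither of which comes from the diagram, and `helmholtz_residual_2d` is a hypothetical helper name. A plane wave with wavenumber `k` should give a residual near zero, while an arbitrary field should not.

```python
import numpy as np

def helmholtz_residual_2d(u, h, k):
    """Five-point finite-difference estimate of (∇² + k²) u on interior points."""
    lap = (u[2:, 1:-1] + u[:-2, 1:-1] + u[1:-1, 2:] + u[1:-1, :-2]
           - 4.0 * u[1:-1, 1:-1]) / h**2
    return lap + k**2 * u[1:-1, 1:-1]

k = 2.0 * np.pi                      # wavenumber (one wavelength per unit length)
n = 201
x = np.linspace(0.0, 1.0, n)
h = x[1] - x[0]                      # uniform grid spacing
X, Y = np.meshgrid(x, x, indexing="ij")

# Plane wave travelling at angle theta: an exact Helmholtz solution.
theta = 0.3
u_wave = np.cos(k * (np.cos(theta) * X + np.sin(theta) * Y))

# A field that does not satisfy the Helmholtz equation.
u_other = X**2 + Y**2

res_wave = np.max(np.abs(helmholtz_residual_2d(u_wave, h, k)))
res_other = np.max(np.abs(helmholtz_residual_2d(u_other, h, k)))
print(f"max residual, plane wave: {res_wave:.2e}")
print(f"max residual, x^2 + y^2:  {res_other:.2e}")
```

The plane-wave residual is limited only by discretization error and shrinks as the grid is refined, which is the property a PDE-based penalty would exploit.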

### Key Observations

1. **Symmetry in Input/Output**: Both heatmaps exhibit concentric ring patterns, implying the system preserves spatial or frequency-domain structure while modifying amplitude.

2. **PDE Integration**: The explicit inclusion of `∇²_r + k²` suggests the model incorporates physical laws (e.g., wave equations) into the neural network's output.

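3. **Data Flow**: The labels `J_data` and `J_PDE` mark two stages of the pipeline, which reads left to right as input → neural network → PDE → output. The diagram does not define `J`; if it denotes loss terms, as is common in physics-informed networks, the two labels identify training objectives rather than data paths.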
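
If `J_data` and `J_PDE` are read as loss terms, which is an assumption the diagram does not confirm, they would typically be combined into one training objective. The sketch below shows one such combination: a mean squared misfit to the measurements plus a weighted mean squared PDE residual. The function names and the `pde_weight` parameter are hypothetical.

```python
import numpy as np

def data_loss(u_pred_at_sensors, s_measured):
    """Mean squared misfit between predictions and measurements (assumed form of J_data)."""
    diff = np.asarray(u_pred_at_sensors) - np.asarray(s_measured)
    return float(np.mean(np.abs(diff) ** 2))

def pde_loss(residual):
    """Mean squared PDE residual at sample points (assumed form of J_PDE)."""
    return float(np.mean(np.abs(np.asarray(residual)) ** 2))

def total_loss(u_pred_at_sensors, s_measured, residual, pde_weight=1.0):
    """Weighted sum J_data + pde_weight * J_PDE; the weighting is an assumption."""
    return data_loss(u_pred_at_sensors, s_measured) + pde_weight * pde_loss(residual)

# Toy numbers: two measurements and four residual samples.
J = total_loss([1.0, 2.0], [1.0, 4.0], [0.0, 1.0, 1.0, 0.0], pde_weight=0.5)
print(J)
```

Using `np.abs` before squaring keeps the same code valid for complex-valued fields, which are common in frequency-domain problems.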

### Interpretation

This diagram represents a hybrid model combining machine learning and physics-based modeling. The neural network learns to map input data (e.g., sensor measurements or simulated signals) to an intermediate representation, which is then refined using a PDE to produce a physically consistent output. Applications could include:

- **Inverse Problems**: Reconstructing hidden variables (e.g., subsurface structures) from surface measurements.

- **Signal Processing**: Enhancing or denoising signals while preserving physical constraints.

- **Simulation**: Accelerating PDE-based simulations using learned neural network components.

The absence of numerical values or explicit training data suggests this is a conceptual or architectural diagram. The use of radial gradients in input/output heatmaps implies the system is designed for problems with rotational symmetry (e.g., acoustics, electromagnetics, or fluid dynamics).
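
The link between rotational symmetry and concentric rings can be made explicit with a worked example. Assuming a spherically symmetric field `u(r)` in three dimensions (an illustrative assumption; the diagram specifies neither the dimension nor the coordinate system), the Helmholtz equation reduces to an ordinary differential equation in `r`, and `sin(kr)/r` is a solution:

```latex
\begin{aligned}
(\nabla^2 + k^2)\,u
  &= \frac{1}{r^2}\frac{d}{dr}\!\left(r^2 \frac{du}{dr}\right) + k^2 u
   = \frac{1}{r}\frac{d^2 (r u)}{dr^2} + k^2 u = 0, \\[4pt]
u(r) = \frac{\sin(kr)}{r}
  &\;\Rightarrow\;
  \frac{1}{r}\frac{d^2}{dr^2}\sin(kr) + k^2\,\frac{\sin(kr)}{r}
   = -\frac{k^2 \sin(kr)}{r} + \frac{k^2 \sin(kr)}{r} = 0 .
\end{aligned}
```

The solution oscillates in `r` with period `2π/k`, which appears as concentric rings in any cross-section through the origin. In two dimensions the same reduction gives Bessel's equation of order zero, whose regular solution is the Bessel function `J_0(kr)` (unrelated to the `J` labels in the diagram).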