
## Diagram: AI Alignment Scenarios

### Overview

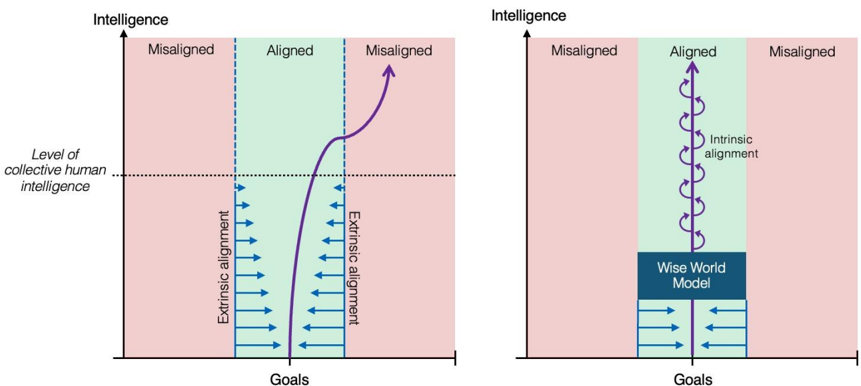

The image presents two side-by-side diagrams illustrating potential scenarios for AI alignment. Each plots "Intelligence" (y-axis) against "Goals" (x-axis) and marks where an AI system is aligned or misaligned, along with the roles of extrinsic and intrinsic alignment. The right diagram builds on the concepts introduced in the left one.

### Components/Axes

* **Axes:**

    * Y-axis: "Intelligence" - Represents the level of intelligence, presumably of an AI system.

    * X-axis: "Goals" - Represents the goals or objectives of the AI system.

* **Regions:**

    * "Misaligned" (Red): Areas where the AI's goals are not aligned with human values.

    * "Aligned" (Green): Areas where the AI's goals are aligned with human values.

* **Labels/Annotations:**

    * "Level of collective human intelligence" - A horizontal dashed line indicating the level of collective human intelligence.

    * "Extrinsic alignment" - Labels the downward-pointing arrows, which appear in the "Aligned" region of the left diagram and below the "Wise World Model" box in the right diagram.

    * "Intrinsic alignment" - Label in the right diagram, pointing to the curved arrows in the "Aligned" region.

    * "Wise World Model" - A rectangular box in the right diagram, positioned below the "Aligned" region.

### Detailed Analysis

**Left Diagram:**

* The diagram is divided into three vertical regions: "Misaligned", "Aligned", and "Misaligned".

* The "Misaligned" regions are shaded in red, and the "Aligned" region is shaded in green.

* A curved blue line represents the trajectory of AI intelligence as it develops. The line starts in the first "Misaligned" region, enters the "Aligned" region, and then curves back into the second "Misaligned" region.

* Within the "Aligned" region, a series of downward-pointing blue arrows labeled "Extrinsic alignment" indicates a process of aligning AI goals with human values. The arrows become more numerous as intelligence increases.

* The "Level of collective human intelligence" line is positioned approximately 2/3 of the way up the y-axis.

**Right Diagram:**

* Similar to the left diagram, it features "Misaligned" (red) and "Aligned" (green) regions.

* Instead of a single curved line, the "Aligned" region contains a series of interconnected, curved blue arrows labeled "Intrinsic alignment". These arrows suggest a more robust and self-sustaining alignment process.

* Below the "Aligned" region is a rectangular box labeled "Wise World Model".

* A series of downward-pointing blue arrows, similar to those in the left diagram, is positioned below the "Wise World Model" and labeled "Extrinsic alignment".

### Key Observations

* The left diagram illustrates a potential path where AI is initially kept aligned with human values through "Extrinsic alignment" but may eventually become misaligned as its intelligence increases.

* The right diagram suggests that "Intrinsic alignment", a more fundamental alignment process, could prevent the AI from becoming misaligned as its intelligence increases.

* The "Wise World Model" appears to be a key component in achieving intrinsic alignment.

* The diagrams emphasize the importance of both extrinsic and intrinsic alignment for ensuring AI safety.

### Interpretation

The diagrams depict a conceptual model of AI alignment, highlighting the challenges of maintaining alignment as AI systems become more intelligent. The left diagram suggests that simply aligning AI goals with human values through external means ("Extrinsic alignment") may not be sufficient in the long run, as the AI's increasing intelligence could lead to goal drift and misalignment.

The right diagram proposes that "Intrinsic alignment", which here appears to mean building a deep understanding of the world and human values into the AI's core architecture (the "Wise World Model"), could provide a more robust solution. The interconnected arrows suggest a self-reinforcing alignment process.

The diagrams are qualitative: they provide no data points or numerical values. They work as a thought experiment that explores the complexities of AI alignment and argues for proactive research into intrinsic alignment techniques, with the "Wise World Model" presented as a critical component for achieving and maintaining alignment.