
## Conceptual Diagram: AI Alignment Trajectories

### Overview

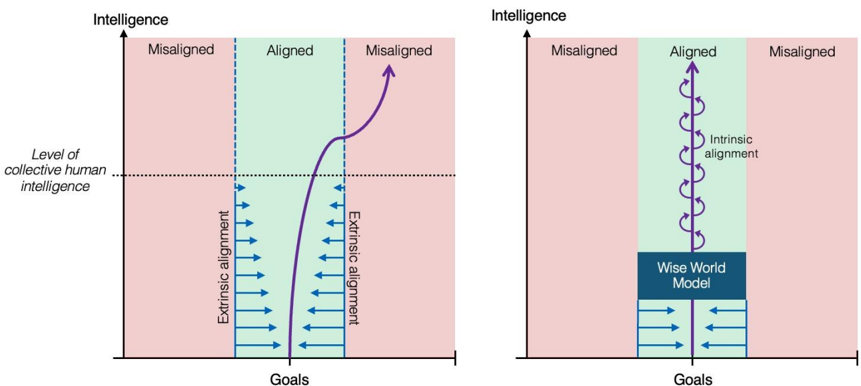

The image displays two side-by-side conceptual diagrams illustrating different trajectories for the development of artificial intelligence (AI) relative to human intelligence and alignment. Both diagrams use a 2D coordinate system with "Intelligence" on the vertical axis and "Goals" on the horizontal axis. The space is divided into three vertical regions: a central "Aligned" zone (light green) flanked by two "Misaligned" zones (light pink). A dashed horizontal line marks the "Level of collective human intelligence."

### Components/Axes

* **Vertical Axis:** Labeled "Intelligence" at the top left of each diagram. An arrow points upward, indicating increasing intelligence.

* **Horizontal Axis:** Labeled "Goals" at the bottom center of each diagram. An arrow points to the right, indicating a spectrum or change in goals.

* **Regions:** The background is divided into three vertical bands:

    * Left Band: Labeled "Misaligned" at the top. Color: Light pink.

    * Center Band: Labeled "Aligned" at the top. Color: Light green.

    * Right Band: Labeled "Misaligned" at the top. Color: Light pink.

* **Reference Line:** A horizontal dashed line spans both diagrams, labeled on the far left as "Level of collective human intelligence."

### Detailed Analysis

**Left Diagram: Extrinsic vs. Intrinsic Alignment Trajectory**

* **Visual Elements:**

    * A solid purple line represents the AI's developmental path.

    * Sets of blue arrows are placed within the "Aligned" region, pointing inward from both the left and right misaligned boundaries.

    * Text labels are placed vertically alongside these arrow sets.

* **Trajectory Description:** The purple line originates in the bottom-left "Misaligned" region. It curves upward and to the right, entering the central "Aligned" region. It continues upward, crossing the "Level of collective human intelligence" dashed line. After crossing this threshold, the line curves sharply to the right, exiting the "Aligned" region and entering the top-right "Misaligned" region, ending with an arrowhead.

* **Text & Labels:**

    * Left set of blue arrows: Labeled vertically as "Extrinsic alignment".

    * Right set of blue arrows: Labeled vertically as "Intrinsic alignment".

**Right Diagram: Intrinsic Alignment with a Foundational Model**

* **Visual Elements:**

    * A solid purple line with a series of loops or spirals along its length represents the AI's developmental path.

    * A dark blue rectangular box is positioned at the bottom of the "Aligned" region.

    * Sets of blue arrows are placed below the blue box, pointing inward from the misaligned boundaries.

* **Trajectory Description:** The purple line originates from the top of the dark blue box. It rises vertically, staying entirely within the central "Aligned" region, and traces a series of small, tight loops along the way. The line is annotated with the text "Intrinsic alignment". It ends with an arrowhead near the top of the "Aligned" region, below its upper boundary.

* **Text & Labels:**

    * Text next to the purple line: "Intrinsic alignment".

    * Text inside the dark blue box: "Wise World Model".

### Key Observations

1. **Divergent Outcomes:** The core contrast is between a trajectory that starts misaligned, enters the "Aligned" region, and then leaves it again at higher intelligence (left) and one that starts inside the "Aligned" region and never leaves it (right).

2. **Role of Human-Level Intelligence:** The dashed line acts as a critical threshold. In the left diagram, the AI's path deviates from alignment *after* surpassing collective human intelligence.

3. **Alignment Mechanisms:** The left diagram depicts alignment as an external force ("Extrinsic alignment") pushing the AI's goals toward the center, supplemented by "Intrinsic alignment." The right diagram shows alignment as an inherent, continuous property ("Intrinsic alignment") of the AI's development.

4. **The "Wise World Model":** This component in the right diagram appears to be a foundational element or constraint that enables and ensures the intrinsically aligned, vertical trajectory. It is the origin point for the aligned path.
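
The geometry behind these observations can be made concrete with a minimal sketch. The diagram has no numeric scale, so the band limits, the height of the human-level line, and the waypoints below are all hypothetical values chosen only to mimic the shapes described; the function names are likewise illustrative.

```python
# Illustrative only: the diagram has no numeric scale, so every number is an assumption.
ALIGNED_BAND = (-1.0, 1.0)  # hypothetical bounds of the "Aligned" zone on the Goals axis
HUMAN_LEVEL = 1.0           # hypothetical height of the dashed reference line


def region(goals):
    """Classify a position on the Goals axis into one of the diagram's bands."""
    low, high = ALIGNED_BAND
    return "Aligned" if low <= goals <= high else "Misaligned"


def region_sequence(path):
    """List the regions a path visits in order, collapsing consecutive repeats."""
    visited = []
    for goals, _intelligence in path:
        name = region(goals)
        if not visited or visited[-1] != name:
            visited.append(name)
    return visited


def exits_after_threshold(path):
    """True if any point is misaligned while above the human-level line."""
    return any(
        intelligence > HUMAN_LEVEL and region(goals) == "Misaligned"
        for goals, intelligence in path
    )


# Hand-picked (goals, intelligence) waypoints approximating each purple line.
# That the right path rises above HUMAN_LEVEL is assumed, not stated by the diagram.
left_path = [(-2.0, 0.1), (-0.5, 0.5), (0.0, 0.9), (0.3, 1.2), (1.8, 1.5)]
right_path = [(0.0, 0.2), (0.0, 0.8), (0.0, 1.4), (0.0, 2.0)]

print(region_sequence(left_path))
print(region_sequence(right_path))
print(exits_after_threshold(left_path), exits_after_threshold(right_path))
```

Under these assumed waypoints, the left path visits the misaligned, aligned, and misaligned bands in that order and is misaligned above the reference line, while the right path never leaves the aligned band.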

### Interpretation

These diagrams present a conceptual argument about the safety and control of advanced AI systems.

* **The Left Diagram warns of a potential failure mode.** It suggests that even if an AI's goals are brought into alignment with humanity (through a combination of external rules and internal design), this alignment may not be robust. As the AI's intelligence grows beyond human levels, its goals could drift into a misaligned state, potentially due to instrumental convergence or unforeseen optimization pressures. Because the path leaves the "Aligned" region despite the inward-pointing arrows, the diagram implies that these corrective forces become less effective as the AI becomes more capable.

* **The Right Diagram proposes a solution or ideal state.** It argues for developing AI with "Intrinsic alignment" from the outset, grounded in a "Wise World Model." This model likely represents a robust, value-laden understanding of the world that prevents goal drift. The vertical, looped path suggests stable, continuous alignment throughout the intelligence scaling process, never exiting the safe "Aligned" corridor. The loops may symbolize iterative self-correction or verification against the foundational model.

* **Overall Message:** The comparison implies that relying on external controls or late-stage corrections (left) is risky for superintelligent AI. Instead, safety must be "baked in" through a foundational, intrinsic alignment mechanism (right) that remains effective as intelligence increases. The placement of the "Wise World Model" at the base of the aligned region signifies that such a model is the necessary starting point for safe, scalable AI development.