## Scatter Plot: Top-1 Accuracy vs. Date for Machine Learning Models

### Overview

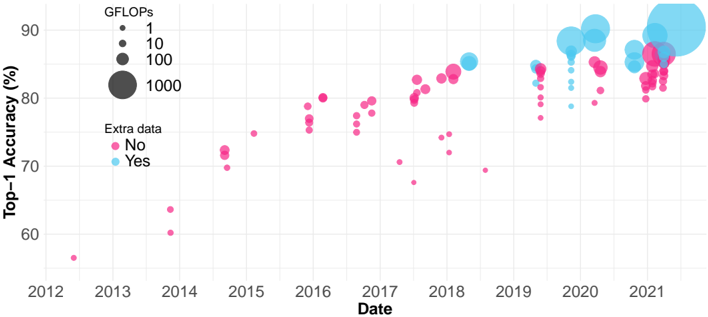

This scatter plot shows the Top-1 accuracy of machine learning models against their release dates. The size of each point represents the model's GFLOPs (billions of floating-point operations), and its color indicates whether the model was trained with "Extra data".

### Components/Axes

* **X-axis:** Date, ranging from approximately 2012 to 2021.

* **Y-axis:** Top-1 Accuracy (%), ranging from approximately 58% to 92%.

* **Legend 1 (Top-Left):** GFLOPs, with corresponding circle sizes:
  * 1 (smallest circle)
  * 10 (medium circle)
  * 100 (large circle)
  * 1000 (largest circle)

* **Legend 2 (Center-Left):** Extra data:
  * No (pink circles)
  * Yes (cyan circles)

* **Data Points:** Scatter plot points representing individual machine learning models.
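The GFLOPs legend steps by factors of ten (1, 10, 100, 1000), which suggests the circle sizes are scaled logarithmically. The chart does not state its exact scaling, so the sketch below simply assumes that marker area grows linearly with log10(GFLOPs). The names `marker_area`, `min_area`, and `area_per_decade` and their default values are hypothetical, chosen only for illustration.

```python
import math

def marker_area(gflops: float, min_area: float = 20.0, area_per_decade: float = 60.0) -> float:
    """Map GFLOPs to a marker area (points^2) on a log10 scale.

    Hypothetical mapping: every tenfold increase in GFLOPs adds the same
    area, so the 1/10/100/1000 legend circles grow in equal steps.
    Values below 1 GFLOP are clamped to min_area.
    """
    if gflops <= 0:
        raise ValueError("gflops must be positive")
    return min_area + area_per_decade * math.log10(max(gflops, 1.0))

# Legend values from the chart's GFLOPs key.
legend_gflops = [1, 10, 100, 1000]
areas = [marker_area(g) for g in legend_gflops]
for g, a in zip(legend_gflops, areas):
    print(f"{g:>5} GFLOPs -> area {a:.0f}")
```

With matplotlib, areas like these could be passed to the `s` argument of `plt.scatter`, which interprets marker size as area in points squared.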

### Detailed Analysis

The plot shows a general upward trend in Top-1 accuracy over time. The size of the circles (GFLOPs) also generally increases with time and accuracy, though there is significant variation.

**Data Point Analysis (Approximate values, based on visual estimation):**

* **2012-2014:** Predominantly pink points (No extra data) with small circle sizes (1-10 GFLOPs). Accuracy ranges from approximately 58% to 75%.

* **2015-2017:** A mix of pink and cyan points, with increasing accuracy. Circle sizes begin to increase, ranging from 10 to 100 GFLOPs. Accuracy ranges from approximately 68% to 82%.

* **2018-2019:** More cyan points (extra data) appear, and larger circles (around 100 GFLOPs) become more common. Accuracy ranges from approximately 75% to 88%.

* **2020-2021:** Predominantly cyan points with larger circle sizes (100-1000 GFLOPs). Accuracy is generally high, mostly between approximately 82% and 92%, with a cluster of points around 80-85% spanning a range of GFLOPs.

**Specific Data Points (Approximate):**

* **2013:** Pink point, ~60% accuracy, 1 GFLOP.

* **2014:** Pink point, ~72% accuracy, 10 GFLOPs.

* **2016:** Cyan point, ~78% accuracy, 100 GFLOPs.

* **2018:** Cyan point, ~85% accuracy, 100 GFLOPs.

* **2019:** Cyan point, ~88% accuracy, 100 GFLOPs.

* **2020:** Cyan point, ~90% accuracy, 1000 GFLOPs.

* **2021:** Cyan point, ~92% accuracy, 1000 GFLOPs.

* **2021:** Pink point, ~82% accuracy, 100 GFLOPs.

### Key Observations

* Models trained with "Extra data" (cyan points) generally achieve higher accuracy than those trained without (pink points), especially after 2018.

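* There is a positive association between GFLOPs and Top-1 accuracy: larger models (higher GFLOPs) tend to reach higher accuracy. Because GFLOPs also rise over time, this plot alone cannot separate the effect of compute from other progress made in the same period.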

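* The rate of accuracy improvement appears to accelerate in the 2020-2021 period, although the approximate top points listed above rise by only about 2 percentage points per year (~88% in 2019, ~90% in 2020, ~92% in 2021), so this impression is tentative.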

* There are some outliers: a few pink points in 2020-2021 with relatively high accuracy, suggesting that models without "Extra data" can still achieve good performance.
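The first two observations can be checked roughly against the approximate values in the "Specific Data Points" list. The sketch below transcribes those eight visual estimates, compares mean accuracy with and without extra data, and computes Spearman's rank correlation between GFLOPs and accuracy as the Pearson correlation of average ranks, which handles the many tied GFLOPs values. With only eight hand-read points this is an illustration, not a statistical test; `POINTS`, `average_ranks`, and `spearman` are hypothetical names.

```python
from statistics import mean

# Approximate points from the "Specific Data Points" list:
# (year, extra_data, top1_accuracy_pct, gflops). Visual estimates, not measured values.
POINTS = [
    (2013, False, 60, 1),
    (2014, False, 72, 10),
    (2016, True, 78, 100),
    (2018, True, 85, 100),
    (2019, True, 88, 100),
    (2020, True, 90, 1000),
    (2021, True, 92, 1000),
    (2021, False, 82, 100),
]

def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman(xs, ys):
    """Spearman's rho computed as the Pearson correlation of average ranks."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    return cov / (vx * vy) ** 0.5

with_extra = [acc for _, extra, acc, _ in POINTS if extra]
without_extra = [acc for _, extra, acc, _ in POINTS if not extra]
rho = spearman([g for *_, g in POINTS], [acc for _, _, acc, _ in POINTS])

print(f"mean accuracy, extra data: {mean(with_extra):.1f}%")
print(f"mean accuracy, no extra data: {mean(without_extra):.1f}%")
print(f"Spearman rho (GFLOPs vs. accuracy): {rho:.2f}")
```

If SciPy is available, `scipy.stats.spearmanr` computes the same tie-aware coefficient without the hand-written ranking helper.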

### Interpretation

The data suggest that gains in model accuracy have been driven by both increased computation (GFLOPs) and training on larger datasets ("Extra data"). The upward trend in accuracy over time reflects ongoing progress in the field, and the growing circle sizes indicate that models are becoming more computationally expensive.

The shift toward cyan points suggests that training on additional data is becoming increasingly important for state-of-the-art performance. The pink outliers indicate that model architecture and training techniques also play a significant role, since some models without "Extra data" still achieve competitive results. If accuracy gains have indeed sped up in recent years, this may reflect breakthroughs in model architectures (e.g., Transformers) and training algorithms.