
## Bar Chart: Success Rate Comparison

### Overview

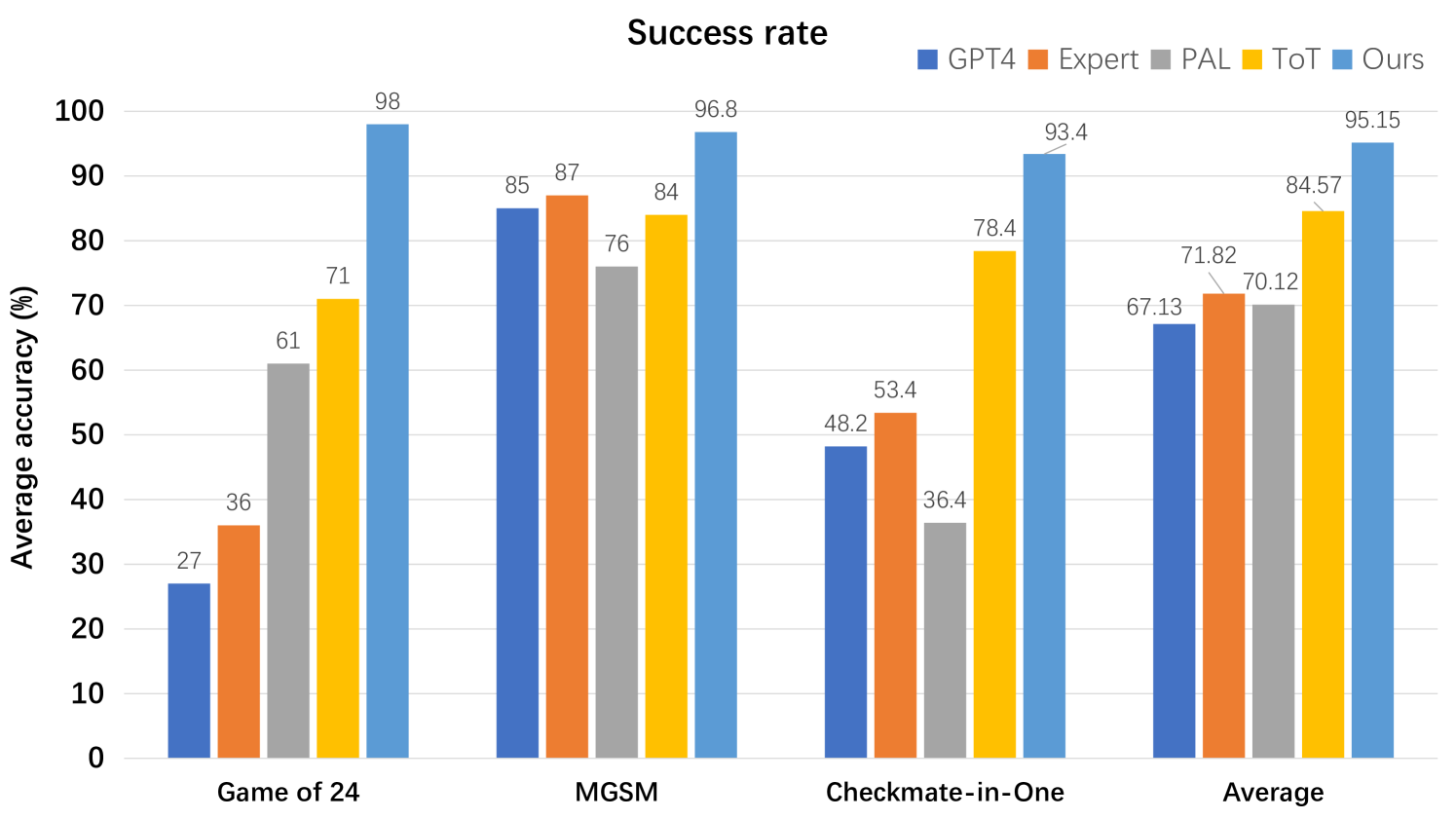

This bar chart compares the success rates of four models (GPT4, Expert, PAL, and "Ours") on three tasks: Game of 24, MGSM, and Checkmate-in-One. A fourth group of bars shows each model's overall average. The y-axis shows average accuracy as a percentage, from 0 to 100.

### Components/Axes

* **Title:** "Success rate" (positioned at the top-center)

* **X-axis:** Game/Task Name (labeled: "Game of 24", "MGSM", "Checkmate-in-One", "Average")

* **Y-axis:** Average accuracy (%) (labeled, ranging from 0 to 100, with increments of 10)

* **Legend:** Located at the top-center; identifies the models by color:

  * GPT4 (Blue)

  * Expert (Orange)

  * PAL (Gray)

  * Ours (Yellow)

### Detailed Analysis

The chart consists of four groups of bars, one for each task plus one for the average. Each group contains four bars, one per model.

**Game of 24:**

* GPT4: Approximately 98% (visually, almost reaching 100%)

* Expert: Approximately 85%

* PAL: Approximately 76%

* Ours: Approximately 71%

* Success rate decreases at each step from GPT4 to Expert, PAL, and "Ours".

**MGSM:**

* GPT4: Approximately 96.8% (visually, very close to 100%)

* Expert: Approximately 87%

* PAL: Approximately 84%

* Ours: Approximately 84%

* GPT4 has the highest success rate, followed by Expert; PAL and "Ours" are tied.

**Checkmate-in-One:**

* GPT4: Approximately 93.4%

* Expert: Approximately 78.4%

* PAL: Approximately 53.4%

* Ours: Approximately 48.2%

* Success rate falls sharply, from about 93.4% for GPT4 to about 48.2% for "Ours", a spread of roughly 45 points.

**Average:**

* GPT4: Approximately 95.15%

* Expert: Approximately 84.57%

* PAL: Approximately 70.12%

* Ours: Approximately 67.13%

* GPT4 has the highest average success rate, followed by Expert, PAL, and "Ours".
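The Average bars can be cross-checked against the per-task readings. The sketch below is a minimal Python example. It assumes that the approximate values listed above are the only available data and that the average is an unweighted mean over the three tasks. The chart does not say how the average was computed. The names `readings`, `reported_average`, and `unweighted_mean` are hypothetical.

```python
# Approximate per-task readings transcribed from the chart (visual estimates).
readings = {
    "GPT4":   {"Game of 24": 98.0, "MGSM": 96.8, "Checkmate-in-One": 93.4},
    "Expert": {"Game of 24": 85.0, "MGSM": 87.0, "Checkmate-in-One": 78.4},
    "PAL":    {"Game of 24": 76.0, "MGSM": 84.0, "Checkmate-in-One": 53.4},
    "Ours":   {"Game of 24": 71.0, "MGSM": 84.0, "Checkmate-in-One": 48.2},
}

# Values read from the "Average" group of bars.
reported_average = {"GPT4": 95.15, "Expert": 84.57, "PAL": 70.12, "Ours": 67.13}


def unweighted_mean(scores: dict[str, float]) -> float:
    """Plain arithmetic mean over tasks (assumption: equal task weights)."""
    return sum(scores.values()) / len(scores)


# Compare the recomputed mean with the reported average for each model.
for model, scores in readings.items():
    mean = unweighted_mean(scores)
    diff = mean - reported_average[model]
    print(f"{model:<7} mean={mean:6.2f}  reported={reported_average[model]:6.2f}  diff={diff:+.2f}")
```

Each recomputed mean falls within about 1.1 points of the reported average. The two sets of numbers therefore agree at the precision of a visual reading. The small residuals could come from rounding in the per-task estimates or from a task weighting that the chart does not show.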

### Key Observations

* GPT4 consistently outperforms all other models across all tasks and in the overall average.

* "Ours" scores lowest on every task except MGSM, where it ties with PAL.

* The largest gap between the best and worst model occurs on Checkmate-in-One: about 45 points, compared with about 27 on Game of 24 and about 13 on MGSM.

* Success rates are mostly high, with ten of the twelve per-task readings above 70%. The two exceptions are PAL (about 53.4%) and "Ours" (about 48.2%) on Checkmate-in-One.
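The leadership, gap, and threshold claims in the list above can be checked directly from the same readings. The sketch below is a Python example built on the same assumption: the transcribed values are approximate visual estimates. The names `readings`, `leaders`, `gaps`, and `below_60` are illustrative and do not come from the source.

```python
# Approximate per-task readings transcribed from the chart (visual estimates).
readings = {
    "GPT4":   {"Game of 24": 98.0, "MGSM": 96.8, "Checkmate-in-One": 93.4},
    "Expert": {"Game of 24": 85.0, "MGSM": 87.0, "Checkmate-in-One": 78.4},
    "PAL":    {"Game of 24": 76.0, "MGSM": 84.0, "Checkmate-in-One": 53.4},
    "Ours":   {"Game of 24": 71.0, "MGSM": 84.0, "Checkmate-in-One": 48.2},
}
tasks = ["Game of 24", "MGSM", "Checkmate-in-One"]

# Model with the highest reading on each task.
leaders = {task: max(readings, key=lambda m: readings[m][task]) for task in tasks}

# Spread between the best and worst model on each task, in percentage points,
# rounded to one decimal to hide floating-point noise.
gaps = {
    task: round(
        max(r[task] for r in readings.values()) - min(r[task] for r in readings.values()), 1
    )
    for task in tasks
}
largest_gap_task = max(gaps, key=gaps.get)

# Every (model, task) reading that falls below the 60% threshold.
below_60 = [
    (model, task)
    for model, scores in readings.items()
    for task, value in scores.items()
    if value < 60.0
]

print(leaders)
print(gaps, largest_gap_task)
print(below_60)
```

With these readings, GPT4 leads every task. The best-to-worst spread is 27.0 points on Game of 24, 12.8 on MGSM, and 45.2 on Checkmate-in-One. Only PAL and "Ours" on Checkmate-in-One fall below 60%.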

### Interpretation

The data suggest that GPT4 is the most effective model on these tasks. It leads on every task and has the highest average (about 95%, roughly 10 points above Expert). Expert ranks second on every task. PAL and "Ours" score lower, and "Ours" is the lowest overall.

The large spread on Checkmate-in-One (about 45 points between GPT4 and "Ours") may indicate that this task is especially challenging for the weaker models. It is also where GPT4's advantage is most pronounced, which may reflect a strength in handling complex strategic problems. On the other two tasks every model scores above 70%, so those tasks appear less discriminating, although GPT4 still leads on each of them. MGSM is the only task where "Ours" does not finish last; it ties PAL there. This may hint at a relative strength on that task, although a single tie is weak evidence.

The chart gives a direct quantitative comparison of the four models on these tasks. This may be useful to researchers and developers working to improve AI models in similar domains.