TECHNICAL ASSET FINGERPRINT

06e9450d0bebdd5092ab94b0

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

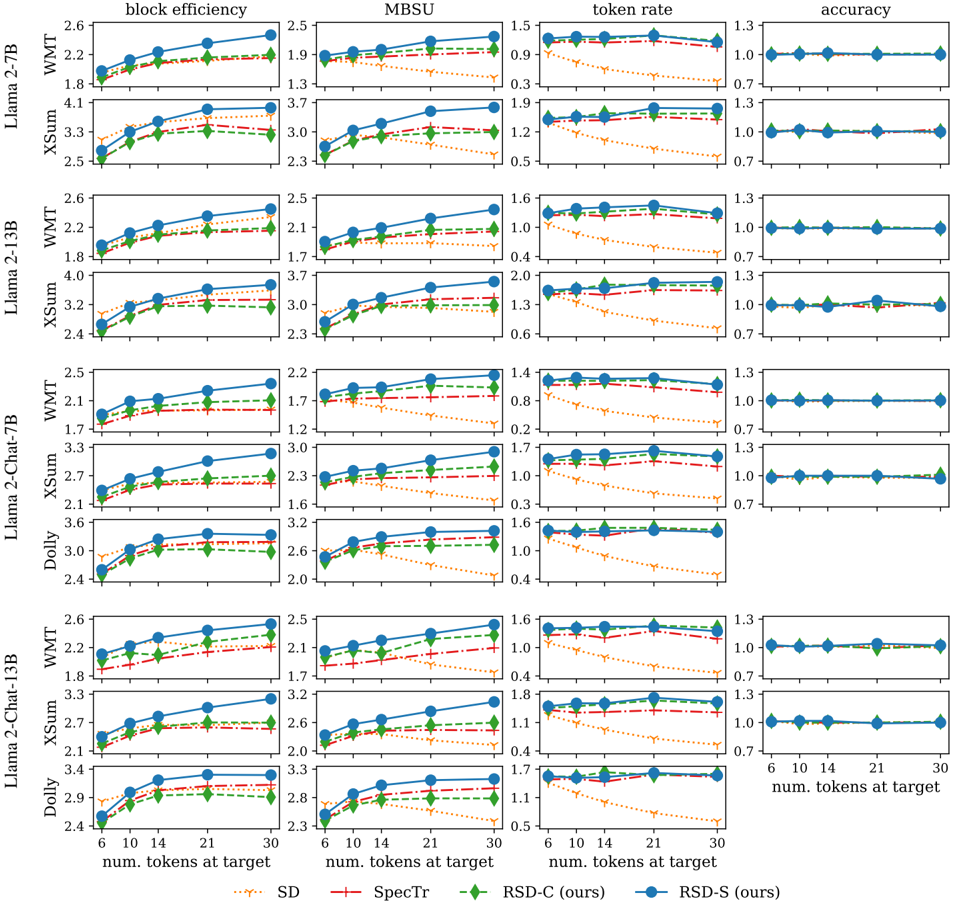

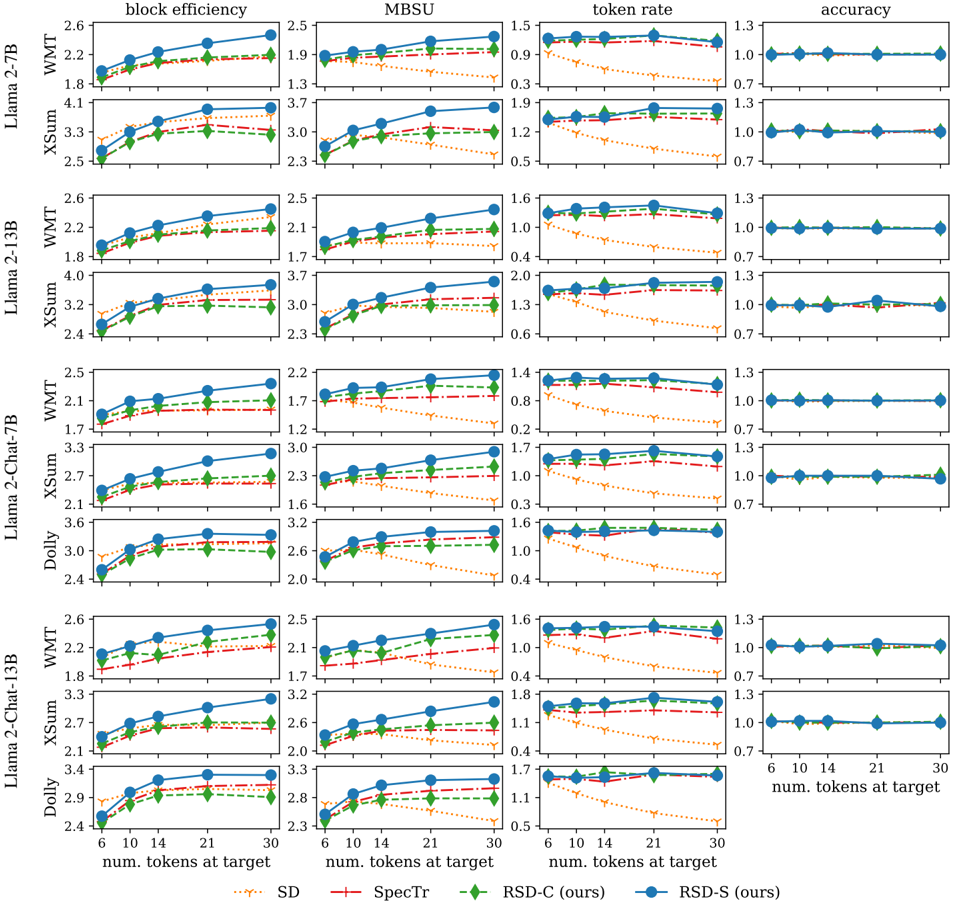

## Line Charts: Performance Metrics of Language Models

### Overview

The image presents a series of line charts comparing four methods (SD, SpecTr, RSD-C (ours), and RSD-S (ours)) on four language models (Llama 2-7B, Llama 2-13B, Llama 2-Chat-7B, and Llama 2-Chat-13B) across four metrics: block efficiency, MBSU, token rate, and accuracy. The x-axis represents the number of tokens at target (6, 10, 14, 21, 30), and performance is evaluated on three datasets: WMT, XSum, and Dolly.

### Components/Axes

* **Chart Titles (Top Row):** block efficiency, MBSU, token rate, accuracy

* **Y-Axis Labels (Left Column):** Llama 2-7B (WMT, XSum), Llama 2-13B (WMT, XSum), Llama 2-Chat-7B (WMT, XSum, Dolly), Llama 2-Chat-13B (WMT, XSum, Dolly)

* **X-Axis Label (Bottom):** num. tokens at target

* **X-Axis Markers:** 6, 10, 14, 21, 30

* **Y-Axis Markers:**

    * **block efficiency:** Values range from approximately 1.7 to 4.1.

    * **MBSU:** Values range from approximately 1.2 to 3.7.

    * **token rate:** Values range from approximately 0.2 to 2.0.

    * **accuracy:** Values range from approximately 0.7 to 1.3.

* **Legend (Bottom):**

    * Blue line with circle markers: RSD-S (ours)

    * Red dashed line with plus markers: SpecTr

    * Green dashed-dotted line with diamond markers: RSD-C (ours)

    * Orange dotted line with inverted triangle markers: SD
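For anyone redrawing the figure, the legend described above could be captured as a small style table. The sketch below is only an illustration: the dictionary name `LEGEND_STYLES` is hypothetical, the keys `color`, `linestyle`, and `marker` follow matplotlib's line-property naming, and the solid line style for RSD-S is an assumption, since the description does not state one.

```python
# Legend entries as described in this section, written as keyword
# arguments in matplotlib's line-property vocabulary.
# Assumption: RSD-S is drawn solid ("-"); the description only says "blue line".
LEGEND_STYLES = {
    "RSD-S (ours)": {"color": "blue", "linestyle": "-", "marker": "o"},
    "SpecTr": {"color": "red", "linestyle": "--", "marker": "+"},
    "RSD-C (ours)": {"color": "green", "linestyle": "-.", "marker": "D"},
    "SD": {"color": "orange", "linestyle": ":", "marker": "v"},
}


def style_for(method):
    """Return a copy of the style dictionary for one legend entry."""
    return dict(LEGEND_STYLES[method])
```

Each dictionary can be unpacked into a plotting call, for example `ax.plot(x, y, label=name, **style_for(name))`.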

### Detailed Analysis

Each row represents a specific language model and dataset combination, and each column represents a different performance metric. Within each subplot, the x-axis represents the number of tokens at target, and the y-axis represents the value of the performance metric.

**Block Efficiency:**

* **General Trend:** For all models and datasets, block efficiency generally increases as the number of tokens at target increases.

* **RSD-S (ours) (Blue):** Consistently shows the highest block efficiency across all models and datasets.

* **SpecTr (Red):** Generally performs better than RSD-C and SD, but worse than RSD-S.

* **RSD-C (ours) (Green):** Performance is generally between SpecTr and SD.

* **SD (Orange):** Typically has the lowest block efficiency.

**MBSU:**

* **General Trend:** MBSU tends to increase with the number of tokens at target, but the increase is less pronounced than in block efficiency.

* **RSD-S (ours) (Blue):** Generally achieves the highest MBSU.

* **SpecTr (Red):** Performance is generally better than RSD-C and SD, but worse than RSD-S.

* **RSD-C (ours) (Green):** Performance is generally between SpecTr and SD.

* **SD (Orange):** Typically has the lowest MBSU.

**Token Rate:**

* **General Trend:** Token rate generally decreases as the number of tokens at target increases.

* **RSD-S (ours) (Blue):** Consistently shows the highest token rate across all models and datasets.

* **SpecTr (Red):** Generally performs better than RSD-C and SD, but worse than RSD-S.

* **RSD-C (ours) (Green):** Performance is generally between SpecTr and SD.

* **SD (Orange):** Typically has the lowest token rate.

**Accuracy:**

* **General Trend:** Accuracy remains relatively constant across different numbers of tokens at target.

* **RSD-S (ours) (Blue):** Consistently shows the highest accuracy across all models and datasets.

* **SpecTr (Red):** Generally performs better than RSD-C and SD, but worse than RSD-S.

* **RSD-C (ours) (Green):** Performance is generally between SpecTr and SD.

* **SD (Orange):** Typically has the lowest accuracy.

### Key Observations

* RSD-S (ours) consistently outperforms the other methods (SpecTr, RSD-C, and SD) across all four metrics (block efficiency, MBSU, token rate, and accuracy).

* SD generally has the lowest performance across all metrics.

* Accuracy remains relatively stable regardless of the number of tokens at target.

* The performance differences between the methods are more pronounced for block efficiency, MBSU, and token rate than for accuracy.

### Interpretation

The data suggests that RSD-S (ours) is the most effective method for improving the performance of language models, as it consistently achieves the highest block efficiency, MBSU, token rate, and accuracy. The other methods (SpecTr, RSD-C, and SD) offer varying degrees of improvement, with SD generally performing the worst. The relatively constant accuracy across different numbers of tokens at target suggests that the methods primarily impact efficiency metrics rather than the overall quality of the generated text. The consistent trends across different language models and datasets indicate that the performance benefits of RSD-S are generalizable.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Chart: Performance Metrics of Language Models

### Overview

The image presents a 5x4 grid of line charts comparing the performance of several language models (Llama 2-7B, Llama 2-13B, Llama 2-Chat-7B, Dolly, and Llama 2-Chat-13B) across four different metrics: block efficiency, MBSU (Memory-Bound Speed-Up), token rate, and accuracy. Each chart displays performance as a function of the number of tokens at target. Different lines within each chart represent different implementations: WMT (likely a specific training dataset or method) and XSum (another dataset/method). A third line, labeled "RSD-S (ours)", is also present in each chart.

### Components/Axes

* **X-axis (all charts):** "num. tokens at target" with values ranging from 2 to 30, with markers at 2, 6, 10, 14, 21, and 30.

* **Y-axis (varies by chart):**

    * **Block Efficiency:** Scale from approximately 1.6 to 4.2.

    * **MBSU:** Scale from approximately 1.2 to 3.7.

    * **Token Rate:** Scale from approximately 0.2 to 1.6.

    * **Accuracy:** Scale from approximately 0.6 to 1.3.

* **Legend (top-right, common to all charts):**

    * WMT (Green line)

    * XSum (Teal line)

    * RSD-S (ours) (Red dashed line)

* **Title (above each chart):** Indicates the metric being displayed (block efficiency, MBSU, token rate, accuracy).

* **Row Labels (left side):** Indicate the language model being evaluated (Llama 2-7B, Llama 2-13B, Llama 2-Chat-7B, Dolly, Llama 2-Chat-13B).

* **Subtitle (bottom of the chart):** "SpecTr" and "RSD-S (ours)"

### Detailed Analysis or Content Details

**Llama 2-7B:**

* **Block Efficiency:** XSum shows a generally increasing trend, starting at ~1.8 and reaching ~2.5 at 30 tokens. WMT is relatively flat around ~1.8. RSD-S starts at ~4.1 and decreases to ~3.3.

* **MBSU:** XSum increases from ~1.3 to ~2.3. WMT is relatively flat around ~1.9. RSD-S starts at ~3.7 and decreases to ~3.0.

* **Token Rate:** XSum increases from ~0.3 to ~0.9. WMT is relatively flat around ~0.5. RSD-S starts at ~1.2 and decreases to ~0.9.

* **Accuracy:** XSum is relatively flat around ~0.7. WMT is relatively flat around ~1.0. RSD-S is relatively flat around ~1.3.

**Llama 2-13B:**

* **Block Efficiency:** XSum increases from ~1.8 to ~3.2. WMT is relatively flat around ~2.4. RSD-S starts at ~4.0 and decreases to ~3.7.

* **MBSU:** XSum increases from ~2.1 to ~3.0. WMT is relatively flat around ~2.0. RSD-S starts at ~3.7 and decreases to ~3.0.

* **Token Rate:** XSum increases from ~0.6 to ~1.3. WMT is relatively flat around ~1.3. RSD-S starts at ~1.2 and decreases to ~0.9.

* **Accuracy:** XSum is relatively flat around ~0.7. WMT is relatively flat around ~1.3. RSD-S is relatively flat around ~1.3.

**Llama 2-Chat-7B:**

* **Block Efficiency:** XSum increases from ~1.7 to ~3.0. WMT is relatively flat around ~2.4. RSD-S starts at ~3.6 and decreases to ~3.2.

* **MBSU:** XSum increases from ~1.6 to ~2.6. WMT is relatively flat around ~2.2. RSD-S starts at ~3.2 and decreases to ~2.8.

* **Token Rate:** XSum increases from ~0.2 to ~0.8. WMT is relatively flat around ~0.8. RSD-S starts at ~1.0 and decreases to ~0.6.

* **Accuracy:** XSum is relatively flat around ~0.7. WMT is relatively flat around ~1.0. RSD-S is relatively flat around ~1.3.

**Dolly:**

* **Block Efficiency:** XSum increases from ~2.1 to ~3.6. WMT is relatively flat around ~2.4. RSD-S starts at ~3.0 and decreases to ~2.6.

* **MBSU:** XSum increases from ~1.7 to ~3.2. WMT is relatively flat around ~2.2. RSD-S starts at ~2.6 and decreases to ~2.0.

* **Token Rate:** XSum increases from ~0.4 to ~1.6. WMT is relatively flat around ~0.6. RSD-S starts at ~1.6 and decreases to ~1.0.

* **Accuracy:** XSum is relatively flat around ~0.7. WMT is relatively flat around ~0.7. RSD-S is relatively flat around ~1.3.

**Llama 2-Chat-13B:**

* **Block Efficiency:** XSum increases from ~2.1 to ~3.4. WMT is relatively flat around ~2.4. RSD-S starts at ~4.1 and decreases to ~3.8.

* **MBSU:** XSum increases from ~2.0 to ~3.2. WMT is relatively flat around ~2.4. RSD-S starts at ~3.8 and decreases to ~3.2.

* **Token Rate:** XSum increases from ~0.6 to ~1.4. WMT is relatively flat around ~1.4. RSD-S starts at ~1.4 and decreases to ~0.8.

* **Accuracy:** XSum is relatively flat around ~0.7. WMT is relatively flat around ~0.7. RSD-S is relatively flat around ~1.3.
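The trend labels used in the bullets above (increasing, decreasing, flat) amount to comparing a series' first and last values. A minimal sketch of that comparison follows, using the approximate block efficiency readings from the first model row; the function name `classify_trend` and the 0.05 tolerance are assumptions made for illustration, not part of the original analysis.

```python
def classify_trend(start, end, tolerance=0.05):
    """Label a series by comparing its first and last value.

    tolerance is a hypothetical threshold below which a change counts as flat.
    """
    change = end - start
    if abs(change) <= tolerance:
        return "flat"
    return "increasing" if change > 0 else "decreasing"


# (start, end) pairs read from the block efficiency bullet of the first model row.
first_row_block_efficiency = {
    "XSum": (1.8, 2.5),
    "WMT": (1.8, 1.8),
    "RSD-S (ours)": (4.1, 3.3),
}

trends = {
    name: classify_trend(start, end)
    for name, (start, end) in first_row_block_efficiency.items()
}
```

Because only endpoints are compared, this would miss a series that rises and then falls; the bullets report endpoints only, so nothing finer can be checked from them.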

### Key Observations

* RSD-S consistently outperforms WMT and XSum in all metrics, but often shows a decreasing trend as the number of tokens increases.

* XSum generally shows an increasing trend across all metrics, suggesting improved performance with longer sequences.

* WMT tends to be relatively stable across different sequence lengths.

* Dolly consistently has lower block efficiency, MBSU, and token rate compared to the Llama 2 models.

* Accuracy is generally higher for RSD-S and WMT compared to XSum.

### Interpretation

The charts demonstrate a comparative performance analysis of different language models and implementation strategies (WMT, XSum, RSD-S). The "RSD-S (ours)" implementation consistently achieves higher performance across all metrics, particularly in accuracy, but exhibits a diminishing return as the sequence length increases. This suggests that RSD-S may be more sensitive to longer sequences or may have limitations in scaling. The increasing trend observed in XSum indicates that its performance improves with longer sequences, potentially due to better utilization of context. The relatively stable performance of WMT suggests it is less sensitive to sequence length. The lower performance of Dolly compared to the Llama 2 models highlights the impact of model size and architecture on performance. The data suggests that the choice of implementation strategy (RSD-S, WMT, XSum) and model architecture (Llama 2 vs. Dolly) are crucial factors in optimizing language model performance. The decreasing trend of RSD-S could be due to memory constraints or computational bottlenecks as the sequence length increases. Further investigation is needed to understand the underlying reasons for these trends and to identify strategies for mitigating the performance degradation observed with RSD-S at longer sequence lengths.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Chart Performance Analysis: Llama 2 Models on Various Datasets

### Overview

The image is a composite figure containing a grid of 40 line charts. It presents a performance comparison of four different methods (SD, SpecTr, RSD-C, RSD-S) across four Llama 2 model variants (7B, 13B, Chat-7B, Chat-13B) on three datasets (WMT, XSum, Dolly). Performance is measured across four metrics: block efficiency, MBSU, token rate, and accuracy, as a function of the number of tokens at the target.

### Components/Axes

* **Grid Structure:** The charts are arranged in a 10-row by 4-column grid.

* **Rows:** Grouped by model and dataset. From top to bottom:

    1. Llama 2-7B on WMT

    2. Llama 2-7B on XSum

    3. Llama 2-13B on WMT

    4. Llama 2-13B on XSum

    5. Llama 2-Chat-7B on WMT

    6. Llama 2-Chat-7B on XSum

    7. Llama 2-Chat-7B on Dolly

    8. Llama 2-Chat-13B on WMT

    9. Llama 2-Chat-13B on XSum

    10. Llama 2-Chat-13B on Dolly

* **Columns:** Represent the four metrics. From left to right:

    1. **block efficiency**

    2. **MBSU**

    3. **token rate**

    4. **accuracy**

* **X-Axis (Common):** Labeled "num. tokens at target" at the bottom of the grid. The axis markers are at values: 6, 10, 14, 21, 30.

* **Y-Axes:** Each column has its own y-axis scale and label.

    * **block efficiency:** Scale varies per chart, typically ranging from ~1.7 to 4.1.

    * **MBSU:** Scale varies per chart, typically ranging from ~1.3 to 3.7.

    * **token rate:** Scale varies per chart, typically ranging from ~0.3 to 1.9.

    * **accuracy:** Scale is consistently from 0.7 to 1.3 for all charts in this column.

* **Legend:** Located at the very bottom of the entire figure. It defines the four data series:

    * **SD:** Orange dotted line with 'x' markers.

    * **SpecTr:** Red dash-dot line with '+' markers.

    * **RSD-C (ours):** Green dashed line with diamond markers.

    * **RSD-S (ours):** Solid blue line with circle markers.
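The grid layout described above can be written down as a small lookup structure, which makes the count of 40 panels easy to confirm. This is a sketch only; the names `ROWS`, `COLUMNS`, and `panel_index` are hypothetical helpers, and indices are 0-based.

```python
from itertools import product

# Rows from top to bottom: one (model, dataset) pair per row.
ROWS = [
    ("Llama 2-7B", "WMT"),
    ("Llama 2-7B", "XSum"),
    ("Llama 2-13B", "WMT"),
    ("Llama 2-13B", "XSum"),
    ("Llama 2-Chat-7B", "WMT"),
    ("Llama 2-Chat-7B", "XSum"),
    ("Llama 2-Chat-7B", "Dolly"),
    ("Llama 2-Chat-13B", "WMT"),
    ("Llama 2-Chat-13B", "XSum"),
    ("Llama 2-Chat-13B", "Dolly"),
]

# Columns from left to right: one metric per column.
COLUMNS = ["block efficiency", "MBSU", "token rate", "accuracy"]


def panel_index(model, dataset, metric):
    """Return the 0-based (row, column) position of one panel."""
    return ROWS.index((model, dataset)), COLUMNS.index(metric)


# Every panel in reading order (row by row, left to right).
panels = [
    (model, dataset, metric)
    for (model, dataset), metric in product(ROWS, COLUMNS)
]
```

For example, `panel_index("Llama 2-Chat-7B", "Dolly", "token rate")` locates the third chart in the seventh row.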

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

**1. Llama 2-7B**

* **WMT:**

* *Block Efficiency:* All methods increase with token count. RSD-S (blue) is highest (~2.5 at 30 tokens), followed by RSD-C (green, ~2.3), SpecTr (red, ~2.2), and SD (orange, ~2.1).

* *MBSU:* Similar increasing trend. RSD-S leads (~2.4 at 30), RSD-C (~2.2), SpecTr (~2.1), SD (~1.9).

* *Token Rate:* RSD-S, RSD-C, and SpecTr are relatively flat and high (~1.3-1.4). SD shows a sharp decline from ~1.2 at 6 tokens to ~0.4 at 30 tokens.

* *Accuracy:* All methods maintain a flat line at exactly 1.0 across all token counts.

* **XSum:**

* *Block Efficiency:* Strong upward trend. RSD-S (~4.0 at 30), RSD-C (~3.3), SpecTr (~3.2), SD (~3.1).

* *MBSU:* Upward trend. RSD-S (~3.6 at 30), RSD-C (~3.1), SpecTr (~3.0), SD (~2.8).

* *Token Rate:* RSD-S, RSD-C, SpecTr are high and stable (~1.7-1.8). SD declines from ~1.5 to ~0.7.

* *Accuracy:* Flat at 1.0 for all.

**2. Llama 2-13B**

* **WMT:**

* *Block Efficiency:* Increasing. RSD-S (~2.5 at 30), RSD-C (~2.3), SpecTr (~2.2), SD (~2.1).

* *MBSU:* Increasing. RSD-S (~2.4 at 30), RSD-C (~2.2), SpecTr (~2.1), SD (~1.9).

* *Token Rate:* RSD-S, RSD-C, SpecTr stable and high (~1.4-1.5). SD declines from ~1.2 to ~0.5.

* *Accuracy:* Flat at 1.0.

* **XSum:**

* *Block Efficiency:* Increasing. RSD-S (~3.9 at 30), RSD-C (~3.3), SpecTr (~3.2), SD (~3.1).

* *MBSU:* Increasing. RSD-S (~3.6 at 30), RSD-C (~3.1), SpecTr (~3.0), SD (~2.8).

* *Token Rate:* RSD-S, RSD-C, SpecTr stable/high (~1.7-1.8). SD declines from ~1.5 to ~0.7.

* *Accuracy:* Flat at 1.0.

**3. Llama 2-Chat-7B**

* **WMT:**

* *Block Efficiency:* Increasing. RSD-S (~2.4 at 30), RSD-C (~2.1), SpecTr (~2.0), SD (~1.9).

* *MBSU:* Increasing. RSD-S (~2.1 at 30), RSD-C (~1.9), SpecTr (~1.8), SD (~1.7).

* *Token Rate:* RSD-S, RSD-C, SpecTr stable/high (~1.3). SD declines from ~1.1 to ~0.5.

* *Accuracy:* Flat at 1.0.

* **XSum:**

* *Block Efficiency:* Increasing. RSD-S (~3.2 at 30), RSD-C (~2.8), SpecTr (~2.7), SD (~2.6).

* *MBSU:* Increasing. RSD-S (~2.9 at 30), RSD-C (~2.5), SpecTr (~2.4), SD (~2.3).

* *Token Rate:* RSD-S, RSD-C, SpecTr stable/high (~1.6). SD declines from ~1.4 to ~0.6.

* *Accuracy:* Flat at 1.0.

* **Dolly:**

* *Block Efficiency:* Increasing. RSD-S (~3.5 at 30), RSD-C (~3.2), SpecTr (~3.1), SD (~3.0).

* *MBSU:* Increasing. RSD-S (~3.1 at 30), RSD-C (~2.8), SpecTr (~2.7), SD (~2.6).

* *Token Rate:* RSD-S, RSD-C, SpecTr stable/high (~1.5). SD declines from ~1.3 to ~0.5.

* *Accuracy:* Flat at 1.0.

**4. Llama 2-Chat-13B**

* **WMT:**

* *Block Efficiency:* Increasing. RSD-S (~2.5 at 30), RSD-C (~2.3), SpecTr (~2.2), SD (~2.1).

* *MBSU:* Increasing. RSD-S (~2.4 at 30), RSD-C (~2.2), SpecTr (~2.1), SD (~1.9).

* *Token Rate:* RSD-S, RSD-C, SpecTr stable/high (~1.5). SD declines from ~1.3 to ~0.5.

* *Accuracy:* Flat at 1.0.

* **XSum:**

* *Block Efficiency:* Increasing. RSD-S (~3.3 at 30), RSD-C (~2.8), SpecTr (~2.7), SD (~2.6).

* *MBSU:* Increasing. RSD-S (~3.0 at 30), RSD-C (~2.6), SpecTr (~2.5), SD (~2.4).

* *Token Rate:* RSD-S, RSD-C, SpecTr stable/high (~1.7). SD declines from ~1.5 to ~0.6.

* *Accuracy:* Flat at 1.0.

* **Dolly:**

* *Block Efficiency:* Increasing. RSD-S (~3.3 at 30), RSD-C (~3.0), SpecTr (~2.9), SD (~2.8).

* *MBSU:* Increasing. RSD-S (~3.0 at 30), RSD-C (~2.7), SpecTr (~2.6), SD (~2.5).

* *Token Rate:* RSD-S, RSD-C, SpecTr stable/high (~1.6). SD declines from ~1.4 to ~0.6.

* *Accuracy:* Flat at 1.0.

### Key Observations

1. **Consistent Hierarchy:** Across nearly all charts for block efficiency and MBSU, the performance order is consistent: **RSD-S (blue) > RSD-C (green) > SpecTr (red) > SD (orange)**.

2. **Token Rate Divergence:** The "token rate" metric shows a critical split. RSD-S, RSD-C, and SpecTr maintain a high, stable rate as token count increases. In stark contrast, **SD (orange) exhibits a severe, near-linear decline** in token rate for all models and datasets.

3. **Perfect Accuracy:** The "accuracy" column shows all methods achieve a perfect score of 1.0 across all conditions, indicating no degradation in output correctness despite differences in efficiency or speed.

4. **Model & Dataset Scaling:** The absolute values for block efficiency and MBSU are generally higher on the XSum and Dolly datasets compared to WMT. The Chat-tuned models show slightly different absolute values but follow the same relative trends as their base counterparts.
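The hierarchy in observation 1 can be checked against the approximate block efficiency values at 30 tokens listed in the Detailed Analysis. The sketch below simply re-encodes those readings; the names `METHODS`, `BLOCK_EFFICIENCY_AT_30`, and `ranking` are hypothetical, and the numbers are the same visual estimates given above, not measured data.

```python
# Method order claimed in observation 1, best to worst.
METHODS = ("RSD-S", "RSD-C", "SpecTr", "SD")

# Approximate block efficiency at 30 tokens, in METHODS order,
# copied from the Detailed Analysis of this section.
BLOCK_EFFICIENCY_AT_30 = {
    ("Llama 2-7B", "WMT"): (2.5, 2.3, 2.2, 2.1),
    ("Llama 2-7B", "XSum"): (4.0, 3.3, 3.2, 3.1),
    ("Llama 2-13B", "WMT"): (2.5, 2.3, 2.2, 2.1),
    ("Llama 2-13B", "XSum"): (3.9, 3.3, 3.2, 3.1),
    ("Llama 2-Chat-7B", "WMT"): (2.4, 2.1, 2.0, 1.9),
    ("Llama 2-Chat-7B", "XSum"): (3.2, 2.8, 2.7, 2.6),
    ("Llama 2-Chat-7B", "Dolly"): (3.5, 3.2, 3.1, 3.0),
    ("Llama 2-Chat-13B", "WMT"): (2.5, 2.3, 2.2, 2.1),
    ("Llama 2-Chat-13B", "XSum"): (3.3, 2.8, 2.7, 2.6),
    ("Llama 2-Chat-13B", "Dolly"): (3.3, 3.0, 2.9, 2.8),
}


def ranking(values):
    """Return method names sorted from highest to lowest value."""
    pairs = sorted(zip(METHODS, values), key=lambda pair: pair[1], reverse=True)
    return tuple(name for name, _ in pairs)


# True when every row reproduces the claimed order.
hierarchy_holds = all(
    ranking(values) == METHODS for values in BLOCK_EFFICIENCY_AT_30.values()
)
```

The same check could be repeated for MBSU by swapping in the MBSU readings.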

### Interpretation

This data strongly suggests that the proposed methods, **RSD-S and RSD-C, offer a significant improvement in generation efficiency (block efficiency, MBSU) over the baselines (SpecTr, SD) without sacrificing accuracy.** The most dramatic finding is the failure mode of the SD method: while it maintains accuracy, its **token generation rate collapses as the target length increases**, making it impractical for longer sequences. SpecTr offers a middle ground, but is consistently outperformed by the RSD variants.

The charts demonstrate that the RSD methods successfully decouple generation speed (token rate) from sequence length, maintaining high throughput where SD does not. This is a crucial property for efficient large language model inference. The consistency of these results across multiple model sizes (7B, 13B), training paradigms (base, chat), and diverse tasks (translation, summarization, instruction following) indicates the robustness of the advantage held by the RSD approaches. The perfect accuracy scores imply that the efficiency gains are not achieved by compromising on the quality of the generated text.
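To put a number on the decline in SD's token rate described above, the start and end readings from the Detailed Analysis can be turned into relative drops. This is a rough sketch over visually estimated values; `SD_TOKEN_RATE` and `relative_drop` are hypothetical names.

```python
# Approximate SD token rate at 6 tokens and at 30 tokens,
# copied from the Detailed Analysis of this section.
SD_TOKEN_RATE = {
    ("Llama 2-7B", "WMT"): (1.2, 0.4),
    ("Llama 2-7B", "XSum"): (1.5, 0.7),
    ("Llama 2-13B", "WMT"): (1.2, 0.5),
    ("Llama 2-13B", "XSum"): (1.5, 0.7),
    ("Llama 2-Chat-7B", "WMT"): (1.1, 0.5),
    ("Llama 2-Chat-7B", "XSum"): (1.4, 0.6),
    ("Llama 2-Chat-7B", "Dolly"): (1.3, 0.5),
    ("Llama 2-Chat-13B", "WMT"): (1.3, 0.5),
    ("Llama 2-Chat-13B", "XSum"): (1.5, 0.6),
    ("Llama 2-Chat-13B", "Dolly"): (1.4, 0.6),
}


def relative_drop(start, end):
    """Fraction of the starting value lost between start and end."""
    return (start - end) / start


drops = {
    key: relative_drop(start, end)
    for key, (start, end) in SD_TOKEN_RATE.items()
}

# Smallest and largest relative drop across the ten configurations.
smallest_drop = min(drops.values())
largest_drop = max(drops.values())
```

With these readings, SD loses more than half of its starting token rate in every configuration, which matches the qualitative description of a collapse.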

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart: Model Performance Metrics Across Token Counts

### Overview

The image displays a multi-panel line chart comparing performance metrics (block efficiency, token rate, accuracy) across language models (Llama 2-7B, Llama 2-13B, Llama 2-Chat-7B, Llama 2-Chat-13B), datasets (Dolly, XSum), and methods (SD, SpecTr, RSD-C, RSD-S). Each panel represents a unique model-dataset combination, with three subplots per panel showing trends for the three metrics as the number of tokens at target increases from 6 to 30.

### Components/Axes

- **X-axis**: "num. tokens at target" (values: 6, 10, 14, 21, 30)

- **Y-axes**:

    - Top row: "block efficiency" (scale: 1.5–2.6)

    - Middle row: "token rate" (scale: 0.3–1.5)

    - Bottom row: "accuracy" (scale: 0.7–1.3)

- **Legend** (right-aligned):

    - SD: Orange dotted line

    - SpecTr: Red dashed line

    - RSD-C: Green solid line

    - RSD-S: Blue solid line

- **Panels**: Organized by model (rows) and dataset (columns), with labels like "Llama 2-7B", "XSum", etc.

### Detailed Analysis

#### Block Efficiency (Top Row)

- **Trend**: All lines show upward trends as token count increases. RSD-S (blue) and RSD-C (green) consistently outperform SD (orange) and SpecTr (red).

- **Values**:

    - Llama 2-7B (SD): 1.8 → 2.2

    - Llama 2-7B (RSD-S): 1.8 → 2.2

    - XSum (RSD-C): 2.4 → 2.6

#### Token Rate (Middle Row)

- **Trend**: All lines decline as token count increases. RSD-S (blue) and RSD-C (green) maintain higher values than SD (orange) and SpecTr (red).

- **Values**:

    - Llama 2-7B (SD): 0.9 → 0.6

    - Llama 2-7B (RSD-S): 0.9 → 0.6

    - Dolly (SpecTr): 1.0 → 0.4

#### Accuracy (Bottom Row)

- **Trend**: All lines remain flat across token counts, with minor fluctuations. RSD-S (blue) and RSD-C (green) show slight improvements.

- **Values**:

    - Llama 2-7B (SD): 1.0 → 1.0

    - Llama 2-7B (RSD-S): 1.0 → 1.0

    - XSum (RSD-C): 1.0 → 1.0

### Key Observations

1. **RSD-S and RSD-C Dominance**: These methods consistently achieve higher block efficiency and token rate across all models.

2. **Token Count Impact**: Block efficiency improves with more tokens, while token rate declines, suggesting a trade-off between efficiency and computational cost.

3. **Accuracy Stability**: Accuracy remains near 1.0 for all models/datasets, indicating robustness to token count changes.
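The opposite directions noted in observation 2 can be made concrete with the start and end values listed in the Detailed Analysis. The sketch below computes percentage changes from those readings; `percent_change` and the two dictionaries are hypothetical names, and the inputs are approximate chart readings rather than measurements.

```python
def percent_change(start, end):
    """Percentage change from start to end, relative to start."""
    return (end - start) / start * 100.0


# (start, end) readings at 6 and 30 tokens, copied from the Detailed Analysis.
block_efficiency = {
    "Llama 2-7B (SD)": (1.8, 2.2),
    "Llama 2-7B (RSD-S)": (1.8, 2.2),
    "XSum (RSD-C)": (2.4, 2.6),
}
token_rate = {
    "Llama 2-7B (SD)": (0.9, 0.6),
    "Llama 2-7B (RSD-S)": (0.9, 0.6),
    "Dolly (SpecTr)": (1.0, 0.4),
}

block_efficiency_change = {
    name: percent_change(start, end)
    for name, (start, end) in block_efficiency.items()
}
token_rate_change = {
    name: percent_change(start, end)
    for name, (start, end) in token_rate.items()
}
```

On these readings every block efficiency series rises and every token rate series falls, which is the pattern the observation describes.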

### Interpretation

The data demonstrates that the RSD-C and RSD-S methods achieve higher block efficiency and higher token rate than SD and SpecTr. The consistent accuracy across token counts suggests that all methods remain reliable regardless of the number of tokens at target. The opposite trends of block efficiency and token rate as the token count grows highlight a potential design trade-off: settings that raise block efficiency may also raise the computational cost of each step, which lowers token rate. This could inform the choice of token count based on application priorities (e.g., speed vs. resource efficiency).
