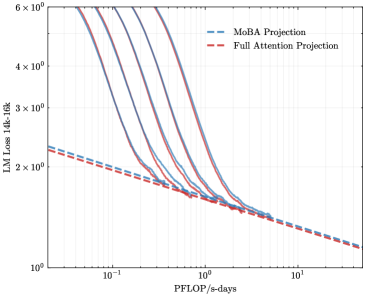

## Line Chart: LLM Loss vs. Compute (PFLOP/s-days)

### Overview

The image is a line chart plotted on a log-log scale, comparing the projected loss of two different Large Language Model (LLM) architectures as a function of computational resources. The chart demonstrates a scaling law relationship, where model loss decreases as the amount of compute (measured in PFLOP/s-days) increases.

### Components/Axes

* **Chart Type:** 2D line chart with logarithmic scales on both axes.

* **X-Axis:**

    * **Label:** `PFLOP/s-days`

    * **Scale:** Logarithmic (base 10).

    * **Range & Markers:** The visible axis spans from approximately `10^-1` (0.1) to `10^1` (10). Major tick marks are present at `10^-1`, `10^0` (1), and `10^1`.

* **Y-Axis:**

    * **Label:** `LLM Loss (4k ctx)`

    * **Scale:** Logarithmic (base 10).

    * **Range & Markers:** The visible axis spans from `10^0` (1) to `6 × 10^0` (6). Major tick marks are present at `10^0`, `2 × 10^0`, `3 × 10^0`, `4 × 10^0`, and `6 × 10^0`.

* **Legend:**

    * **Position:** Top-right corner of the plot area.

    * **Entry 1:** `MoBA Projection` - Represented by a blue dashed line (`--`).

    * **Entry 2:** `Full Attention Projection` - Represented by a red dashed line (`--`).

### Detailed Analysis

The chart plots two data series, each represented by a dashed line.

1. **MoBA Projection (Blue Dashed Line):**

    * **Trend:** The line shows a strong, consistent downward slope from left to right, indicating that loss decreases significantly as compute increases.

    * **Data Points (Approximate):**

        * At ~`0.1` PFLOP/s-days, Loss is ~`2.2`.

        * At ~`1` PFLOP/s-days, Loss is ~`1.5`.

        * At ~`10` PFLOP/s-days, Loss is ~`1.1`.

    * **Spatial Grounding:** This line originates from the upper-left quadrant and descends diagonally towards the bottom-right, remaining above the red line for the entire visible range until the far right.

2. **Full Attention Projection (Red Dashed Line):**

    * **Trend:** The line also shows a consistent downward slope, but it is less steep than the blue line. It starts at a lower loss value for a given compute level compared to the blue line.

    * **Data Points (Approximate):**

        * At ~`0.1` PFLOP/s-days, Loss is ~`2.0`.

        * At ~`1` PFLOP/s-days, Loss is ~`1.4`.

        * At ~`10` PFLOP/s-days, Loss is ~`1.1`.

    * **Spatial Grounding:** This line originates from the middle-left area and descends diagonally, positioned below the blue line. The two lines appear to converge and nearly intersect at the far right of the chart, near `10` PFLOP/s-days.
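The approximate readings listed for the two lines can be turned into rough scaling exponents. The sketch below is an illustration only: it assumes each line follows a power law of the form `loss = a * C^(-b)` and fits `a` and `b` by least squares in log space. The function name `fit_power_law` and the variable names are hypothetical, and the inputs are the eyeballed values from this description, not the data behind the chart.

```python
import math

# Approximate readings from the chart description (not the underlying data).
compute = [0.1, 1.0, 10.0]  # PFLOP/s-days
loss = {
    "MoBA Projection": [2.2, 1.5, 1.1],
    "Full Attention Projection": [2.0, 1.4, 1.1],
}

def fit_power_law(xs, ys):
    """Least-squares fit of log10(y) = log10(a) - b * log10(x); returns (a, b)."""
    lx = [math.log10(x) for x in xs]
    ly = [math.log10(y) for y in ys]
    n = len(lx)
    mx = sum(lx) / n
    my = sum(ly) / n
    # Ordinary least-squares slope and intercept in log-log space.
    slope = sum((u - mx) * (v - my) for u, v in zip(lx, ly)) / sum(
        (u - mx) ** 2 for u in lx
    )
    intercept = my - slope * mx
    return 10 ** intercept, -slope

fits = {name: fit_power_law(compute, ys) for name, ys in loss.items()}
for name, (a, b) in fits.items():
    print(f"{name}: loss ~ {a:.2f} * C^(-{b:.3f})")
```

With these readings the fitted exponent comes out at roughly 0.15 for MoBA and roughly 0.13 for Full Attention, which matches the visual impression that the blue line is steeper. The exact values depend entirely on how the points were read off the chart.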

### Key Observations

* **Convergence:** The primary observation is the convergence of the two projection lines. The "MoBA Projection" starts with a higher loss but improves at a faster rate with increased compute, eventually matching the performance of the "Full Attention Projection" at approximately `10` PFLOP/s-days.

* **Scaling Efficiency:** The steeper slope of the MoBA line suggests it has a larger compute scaling exponent in this regime. On a log-log plot, this means each multiplicative increase in compute (for example, 10×) yields a larger relative reduction in loss for MoBA than for the Full Attention model.

* **Log-Log Linearity:** Both projections appear as nearly straight lines on this log-log plot, which is characteristic of power-law scaling relationships commonly observed in neural network training (e.g., the Chinchilla scaling laws).
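The link between a straight line on a log-log plot and a power law can be written out explicitly. The symbols below ($L$ for loss, $C$ for compute, $a$ and $b$ for the fitted constants) are notation assumed here for illustration; the chart itself does not state a functional form.

$$
L(C) = a\,C^{-b}
\quad\Longrightarrow\quad
\log_{10} L = \log_{10} a - b\,\log_{10} C
$$

Under this assumption the slope of each line on the log-log plot is $-b$, and every 10× increase in compute multiplies the loss by $10^{-b}$. A steeper line therefore corresponds to a larger exponent $b$.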

### Interpretation

This chart presents a technical projection comparing the computational efficiency of two LLM architectures: "MoBA" and "Full Attention."

* **What the data suggests:** The data suggests that while the Full Attention architecture may be more efficient (lower loss) at lower compute budgets, the MoBA architecture is projected to scale more efficiently. Given sufficient computational resources (around 10 PFLOP/s-days in this projection), MoBA is expected to achieve parity with Full Attention.

* **How elements relate:** The relationship is a direct comparison of scaling laws. The x-axis (compute) is the independent variable, and the y-axis (loss) is the dependent performance metric. The two lines represent different model families or architectural choices, with their slopes indicating their respective scaling efficiencies.

* **Notable implications:** This type of analysis informs resource allocation in AI research. If the projected trends hold, MoBA closes its initial loss gap as compute grows, reaching parity with Full Attention near the right edge of the chart. The chart does not show MoBA falling below Full Attention within the visible range, so any advantage beyond roughly `10` PFLOP/s-days is an extrapolation rather than something the figure demonstrates. The chart also shows only projection lines, not individual data points, so the curves should be read as fitted or modeled trends rather than direct measurements.
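The convergence point discussed above can be estimated from two power-law lines. The sketch below assumes each projection is an exact power law passing through its approximate endpoint readings at `0.1` and `10` PFLOP/s-days; the helper names `power_law_through` and `crossover_compute` are hypothetical, and the result is only as reliable as those eyeballed readings.

```python
import math

def power_law_through(c0, l0, c1, l1):
    """Return (a, b) such that loss = a * C**(-b) passes through both points."""
    b = -math.log(l1 / l0) / math.log(c1 / c0)
    a = l0 * c0 ** b
    return a, b

def crossover_compute(fit_1, fit_2):
    """Compute C* where a1 * C**(-b1) == a2 * C**(-b2); None if the lines are parallel."""
    (a1, b1), (a2, b2) = fit_1, fit_2
    if math.isclose(b1, b2):
        return None
    # a1 / a2 = C**(b1 - b2)  =>  C = (a1 / a2) ** (1 / (b1 - b2))
    return (a1 / a2) ** (1.0 / (b1 - b2))

# Endpoint readings from the chart description (approximate, assumed).
moba = power_law_through(0.1, 2.2, 10.0, 1.1)
full = power_law_through(0.1, 2.0, 10.0, 1.1)

c_star = crossover_compute(moba, full)
print(f"Estimated crossover compute: {c_star:.2f} PFLOP/s-days")
```

Because both lines are read as reaching a loss of about `1.1` at `10` PFLOP/s-days, this estimate lands at about `10` PFLOP/s-days by construction. Slightly different readings would move the crossover noticeably, since the two slopes are close.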