TECHNICAL ASSET FINGERPRINT

072e16f4330040fb704c525c

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

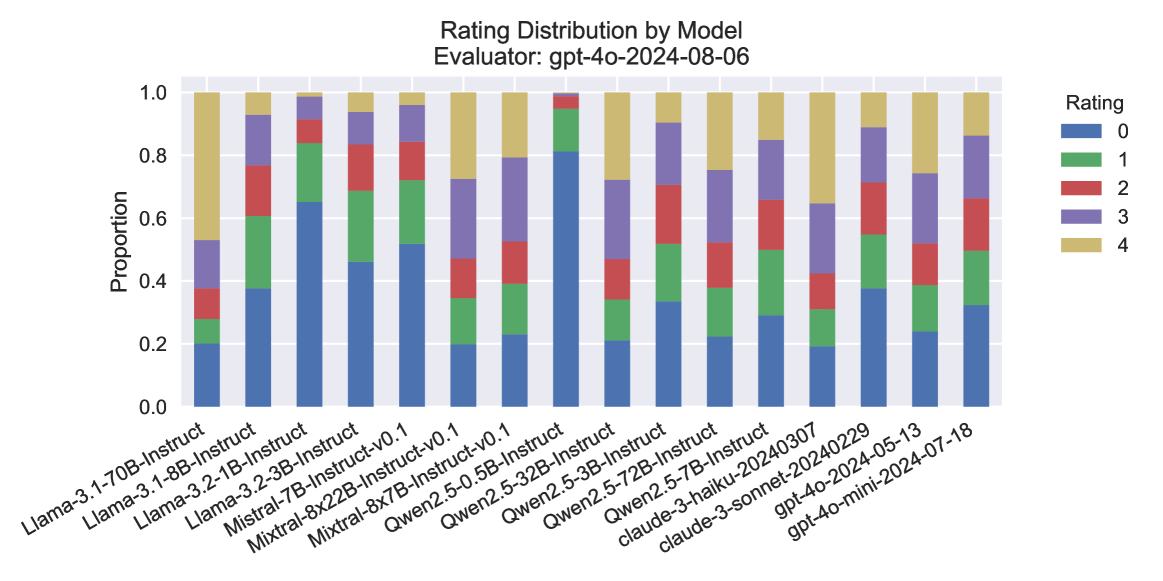

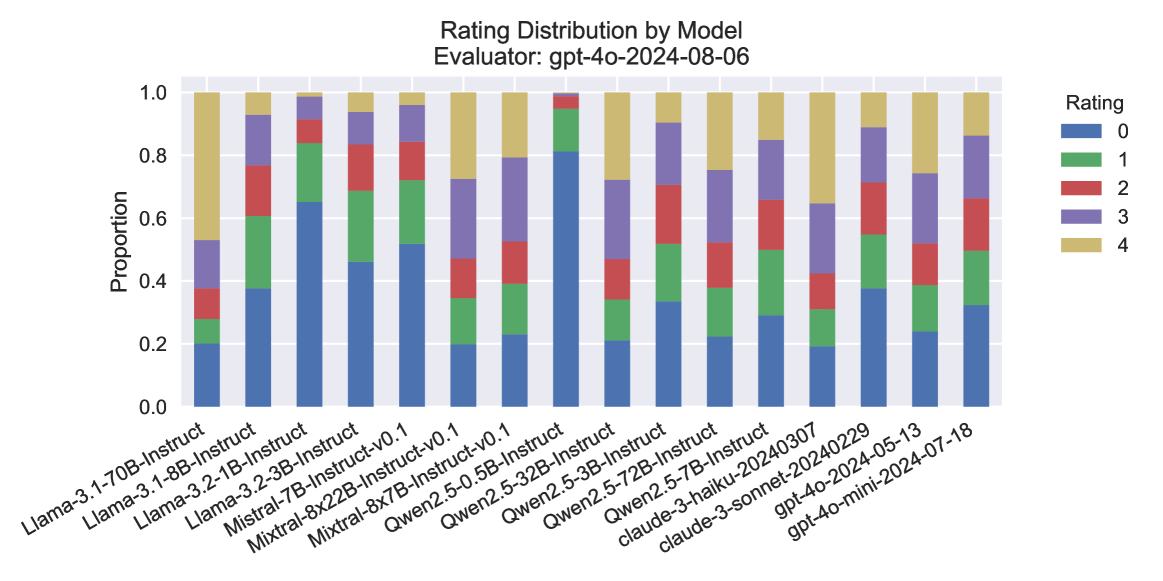

## Stacked Bar Chart: Rating Distribution by Model

### Overview

The image is a stacked bar chart showing the distribution of ratings for various language models. The x-axis represents different models, and the y-axis represents the proportion of each rating (0-4) assigned to each model. The chart is titled "Rating Distribution by Model" and specifies the evaluator as "gpt-4o-2024-08-06".

### Components/Axes

* **Title:** Rating Distribution by Model

* **Subtitle:** Evaluator: gpt-4o-2024-08-06

* **X-axis:** Language Models (categorical)
    * Llama-3.1-70B-Instruct
    * Llama-3.1-8B-Instruct
    * Llama-3.2-1B-Instruct
    * Llama-3.2-3B-Instruct
    * Mistral-7B-Instruct-v0.1
    * Mixtral-8x22B-Instruct-v0.1
    * Mixtral-8x7B-Instruct-v0.1
    * Qwen2.5-0.5B-Instruct
    * Qwen2.5-3B-Instruct
    * Qwen2.5-32B-Instruct
    * Qwen2.5-72B-Instruct
    * Qwen2.5-7B-Instruct
    * claude-3-haiku-20240307
    * claude-3-sonnet-20240229
    * gpt-4o-2024-05-13
    * gpt-4o-mini-2024-07-18

* **Y-axis:** Proportion (numerical), ranging from 0.0 to 1.0 in increments of 0.2.

* **Legend:** Located on the top-right of the chart.
    * Blue: Rating 0
    * Green: Rating 1
    * Red: Rating 2
    * Purple: Rating 3
    * Tan/Beige: Rating 4

### Detailed Analysis

The chart presents a stacked bar for each language model, where the height of each colored segment represents the proportion of ratings for that category.

* **Llama-3.1-70B-Instruct:**
    * Rating 0: ~0.25
    * Rating 1: ~0.20
    * Rating 2: ~0.15
    * Rating 3: ~0.20
    * Rating 4: ~0.20

* **Llama-3.1-8B-Instruct:**
    * Rating 0: ~0.35
    * Rating 1: ~0.25
    * Rating 2: ~0.15
    * Rating 3: ~0.15
    * Rating 4: ~0.10

* **Llama-3.2-1B-Instruct:**
    * Rating 0: ~0.30
    * Rating 1: ~0.25
    * Rating 2: ~0.15
    * Rating 3: ~0.15
    * Rating 4: ~0.15

* **Llama-3.2-3B-Instruct:**
    * Rating 0: ~0.35
    * Rating 1: ~0.25
    * Rating 2: ~0.15
    * Rating 3: ~0.15
    * Rating 4: ~0.10

* **Mistral-7B-Instruct-v0.1:**
    * Rating 0: ~0.20
    * Rating 1: ~0.20
    * Rating 2: ~0.15
    * Rating 3: ~0.25
    * Rating 4: ~0.20

* **Mixtral-8x22B-Instruct-v0.1:**
    * Rating 0: ~0.20
    * Rating 1: ~0.20
    * Rating 2: ~0.10
    * Rating 3: ~0.30
    * Rating 4: ~0.20

* **Mixtral-8x7B-Instruct-v0.1:**
    * Rating 0: ~0.20
    * Rating 1: ~0.20
    * Rating 2: ~0.15
    * Rating 3: ~0.25
    * Rating 4: ~0.20

* **Qwen2.5-0.5B-Instruct:**
    * Rating 0: ~0.40
    * Rating 1: ~0.25
    * Rating 2: ~0.15
    * Rating 3: ~0.10
    * Rating 4: ~0.10

* **Qwen2.5-3B-Instruct:**
    * Rating 0: ~0.40
    * Rating 1: ~0.25
    * Rating 2: ~0.15
    * Rating 3: ~0.10
    * Rating 4: ~0.10

* **Qwen2.5-32B-Instruct:**
    * Rating 0: ~0.40
    * Rating 1: ~0.25
    * Rating 2: ~0.15
    * Rating 3: ~0.10
    * Rating 4: ~0.10

* **Qwen2.5-72B-Instruct:**
    * Rating 0: ~0.40
    * Rating 1: ~0.25
    * Rating 2: ~0.15
    * Rating 3: ~0.10
    * Rating 4: ~0.10

* **Qwen2.5-7B-Instruct:**
    * Rating 0: ~0.40
    * Rating 1: ~0.25
    * Rating 2: ~0.15
    * Rating 3: ~0.10
    * Rating 4: ~0.10

* **claude-3-haiku-20240307:**
    * Rating 0: ~0.30
    * Rating 1: ~0.25
    * Rating 2: ~0.20
    * Rating 3: ~0.15
    * Rating 4: ~0.10

* **claude-3-sonnet-20240229:**
    * Rating 0: ~0.35
    * Rating 1: ~0.25
    * Rating 2: ~0.20
    * Rating 3: ~0.10
    * Rating 4: ~0.10

* **gpt-4o-2024-05-13:**
    * Rating 0: ~0.20
    * Rating 1: ~0.30
    * Rating 2: ~0.25
    * Rating 3: ~0.15
    * Rating 4: ~0.10

* **gpt-4o-mini-2024-07-18:**
    * Rating 0: ~0.30
    * Rating 1: ~0.30
    * Rating 2: ~0.20
    * Rating 3: ~0.10
    * Rating 4: ~0.10

### Key Observations

* Models like Qwen2.5 variants tend to have a higher proportion of rating 0 compared to other models.

* Models like Mistral and Mixtral have a more even distribution of ratings, with a higher proportion of ratings 3 and 4 compared to Qwen2.5.

* The gpt-4o models show a relatively high proportion of rating 1.
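The contrasts above can be made concrete by collapsing each bar into a single mean rating. The sketch below is an illustration only: it copies four of the approximate estimates from the Detailed Analysis, checks that each bar's segments sum to about 1.0, and computes the proportion-weighted mean rating. The helper name `check_and_summarize` and the 0.02 tolerance are assumptions made for this example, not part of the chart or its source.

```python
# Approximate proportions for ratings 0..4, copied from the Detailed Analysis.
# These are visual estimates, so every derived number is approximate too.
estimates = {
    "Llama-3.1-70B-Instruct": [0.25, 0.20, 0.15, 0.20, 0.20],
    "Mixtral-8x22B-Instruct-v0.1": [0.20, 0.20, 0.10, 0.30, 0.20],
    "Qwen2.5-72B-Instruct": [0.40, 0.25, 0.15, 0.10, 0.10],
    "gpt-4o-2024-05-13": [0.20, 0.30, 0.25, 0.15, 0.10],
}


def check_and_summarize(proportions, tolerance=0.02):
    """Return (total, mean_rating); raise if the segments do not fill the bar.

    `tolerance` is an assumed allowance for reading error, not a chart value.
    """
    total = sum(proportions)
    if abs(total - 1.0) > tolerance:
        raise ValueError(f"segments sum to {total:.2f}, expected about 1.0")
    # The list index is the rating, so enumerate() pairs rating with proportion.
    mean_rating = sum(rating * p for rating, p in enumerate(proportions)) / total
    return total, mean_rating


summary = {name: check_and_summarize(p) for name, p in estimates.items()}
for name, (total, mean_rating) in summary.items():
    print(f"{name}: total={total:.2f}, mean rating={mean_rating:.2f}")
```

A lower mean reflects more mass on ratings 0 and 1. Because the inputs are read off the chart by eye, the means are only as precise as those readings.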

### Interpretation

The chart provides a comparative view of how different language models are rated by the "gpt-4o-2024-08-06" evaluator. The distribution of ratings suggests that some models are consistently rated lower (e.g., Qwen2.5 variants), while others receive a more balanced distribution of ratings. This could indicate differences in the models' performance, capabilities, or suitability for the tasks evaluated. The gpt-4o models show a different rating pattern, possibly reflecting a different evaluation focus or inherent characteristics of these models. The data highlights the subjective nature of model evaluation and the importance of considering the evaluation context.

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

## Stacked Bar Chart: Rating Distribution by Model

### Overview

This stacked bar chart displays the distribution of ratings (0 through 4) for various language models, as evaluated by "gpt-4o-2024-08-06". Each bar represents a specific model, and the segments within the bar indicate the proportion of responses that received each rating. The chart allows for a visual comparison of how different models perform across the rating scale.

### Components/Axes

* **Title:** "Rating Distribution by Model"

* **Subtitle:** "Evaluator: gpt-4o-2024-08-06"

* **Y-axis Title:** "Proportion"

* **Y-axis Scale:** Ranges from 0.0 to 1.0, representing proportions from 0% to 100%. Major tick marks are at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-axis Labels:** These are the names of the different language models being evaluated. They are rotated for readability. The models are:
    * Llama-3.1-70B-Instruct
    * Llama-3.1-8B-Instruct
    * Llama-3.2-1B-Instruct
    * Llama-3.2-3B-Instruct
    * Mistral-7B-Instruct
    * Mistral-8x22B-Instruct-v0.1
    * Qwen2.5-0.5B-Instruct-v0.1
    * Qwen2.5-32B-Instruct
    * Qwen2.5-72B-Instruct
    * claude-3-haiku-20240307
    * claude-3-sonnet-20240229
    * gpt-4o-2024-05-13
    * gpt-4o-mini-2024-07-18

* **Legend:** Located in the top-right corner of the chart. It maps colors to rating values:
    * Blue: Rating 0
    * Green: Rating 1
    * Red: Rating 2
    * Purple: Rating 3
    * Yellow: Rating 4

### Detailed Analysis

The chart displays 13 different models. For each model, the bar is segmented by color, representing the proportion of ratings from 0 (bottom, blue) to 4 (top, yellow).

Here's a breakdown of approximate proportions for each model, reading from bottom to top (Rating 0 to Rating 4):

1. **Llama-3.1-70B-Instruct:**

* Rating 0 (Blue): ~0.20

* Rating 1 (Green): ~0.10 (cumulative ~0.30)

* Rating 2 (Red): ~0.10 (cumulative ~0.40)

* Rating 3 (Purple): ~0.25 (cumulative ~0.65)

* Rating 4 (Yellow): ~0.35 (cumulative ~1.00)

2. **Llama-3.1-8B-Instruct:**

* Rating 0 (Blue): ~0.38

* Rating 1 (Green): ~0.15 (cumulative ~0.53)

* Rating 2 (Red): ~0.12 (cumulative ~0.65)

* Rating 3 (Purple): ~0.20 (cumulative ~0.85)

* Rating 4 (Yellow): ~0.15 (cumulative ~1.00)

3. **Llama-3.2-1B-Instruct:**

* Rating 0 (Blue): ~0.45

* Rating 1 (Green): ~0.15 (cumulative ~0.60)

* Rating 2 (Red): ~0.10 (cumulative ~0.70)

* Rating 3 (Purple): ~0.15 (cumulative ~0.85)

* Rating 4 (Yellow): ~0.15 (cumulative ~1.00)

4. **Llama-3.2-3B-Instruct:**

* Rating 0 (Blue): ~0.40

* Rating 1 (Green): ~0.15 (cumulative ~0.55)

* Rating 2 (Red): ~0.10 (cumulative ~0.65)

* Rating 3 (Purple): ~0.20 (cumulative ~0.85)

* Rating 4 (Yellow): ~0.15 (cumulative ~1.00)

5. **Mistral-7B-Instruct:**

* Rating 0 (Blue): ~0.20

* Rating 1 (Green): ~0.15 (cumulative ~0.35)

* Rating 2 (Red): ~0.15 (cumulative ~0.50)

* Rating 3 (Purple): ~0.30 (cumulative ~0.80)

* Rating 4 (Yellow): ~0.20 (cumulative ~1.00)

6. **Mistral-8x22B-Instruct-v0.1:**

* Rating 0 (Blue): ~0.20

* Rating 1 (Green): ~0.15 (cumulative ~0.35)

* Rating 2 (Red): ~0.15 (cumulative ~0.50)

* Rating 3 (Purple): ~0.30 (cumulative ~0.80)

* Rating 4 (Yellow): ~0.20 (cumulative ~1.00)

*(Note: Mistral-7B-Instruct and Mistral-8x22B-Instruct-v0.1 appear to have very similar distributions.)*

7. **Qwen2.5-0.5B-Instruct-v0.1:**

* Rating 0 (Blue): ~0.20

* Rating 1 (Green): ~0.15 (cumulative ~0.35)

* Rating 2 (Red): ~0.15 (cumulative ~0.50)

* Rating 3 (Purple): ~0.30 (cumulative ~0.80)

* Rating 4 (Yellow): ~0.20 (cumulative ~1.00)

*(Note: Qwen2.5-0.5B-Instruct-v0.1 also shows a very similar distribution to the Mistral models.)*

8. **Qwen2.5-32B-Instruct:**

* Rating 0 (Blue): ~0.20

* Rating 1 (Green): ~0.15 (cumulative ~0.35)

* Rating 2 (Red): ~0.15 (cumulative ~0.50)

* Rating 3 (Purple): ~0.30 (cumulative ~0.80)

* Rating 4 (Yellow): ~0.20 (cumulative ~1.00)

*(Note: Qwen2.5-32B-Instruct also exhibits a very similar distribution.)*

9. **Qwen2.5-72B-Instruct:**

* Rating 0 (Blue): ~0.20

* Rating 1 (Green): ~0.15 (cumulative ~0.35)

* Rating 2 (Red): ~0.15 (cumulative ~0.50)

* Rating 3 (Purple): ~0.30 (cumulative ~0.80)

* Rating 4 (Yellow): ~0.20 (cumulative ~1.00)

*(Note: Qwen2.5-72B-Instruct also shows a very similar distribution.)*

10. **claude-3-haiku-20240307:**

* Rating 0 (Blue): ~0.10

* Rating 1 (Green): ~0.10 (cumulative ~0.20)

* Rating 2 (Red): ~0.10 (cumulative ~0.30)

* Rating 3 (Purple): ~0.30 (cumulative ~0.60)

* Rating 4 (Yellow): ~0.40 (cumulative ~1.00)

11. **claude-3-sonnet-20240229:**

* Rating 0 (Blue): ~0.10

* Rating 1 (Green): ~0.10 (cumulative ~0.20)

* Rating 2 (Red): ~0.10 (cumulative ~0.30)

* Rating 3 (Purple): ~0.30 (cumulative ~0.60)

* Rating 4 (Yellow): ~0.40 (cumulative ~1.00)

*(Note: claude-3-haiku-20240307 and claude-3-sonnet-20240229 appear to have identical distributions.)*

12. **gpt-4o-2024-05-13:**

* Rating 0 (Blue): ~0.10

* Rating 1 (Green): ~0.10 (cumulative ~0.20)

* Rating 2 (Red): ~0.10 (cumulative ~0.30)

* Rating 3 (Purple): ~0.30 (cumulative ~0.60)

* Rating 4 (Yellow): ~0.40 (cumulative ~1.00)

*(Note: gpt-4o-2024-05-13 also shows an identical distribution to the Claude models.)*

13. **gpt-4o-mini-2024-07-18:**

* Rating 0 (Blue): ~0.38

* Rating 1 (Green): ~0.15 (cumulative ~0.53)

* Rating 2 (Red): ~0.12 (cumulative ~0.65)

* Rating 3 (Purple): ~0.20 (cumulative ~0.85)

* Rating 4 (Yellow): ~0.15 (cumulative ~1.00)

*(Note: gpt-4o-mini-2024-07-18 has a distribution very similar to Llama-3.1-8B-Instruct.)*
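The cumulative figures in parentheses above are running sums of the segment heights, that is, the y-axis position of each segment's top edge. A minimal sketch of that arithmetic, using two of the estimates listed above (the function name `cumulative_boundaries` is hypothetical and chosen only for this example):

```python
from itertools import accumulate


def cumulative_boundaries(proportions):
    """Top edge of each stacked segment, rounded to two decimals.

    Rounding hides floating-point noise such as 0.30000000000000004.
    """
    return [round(edge, 2) for edge in accumulate(proportions)]


# Segment heights for ratings 0..4, taken from the estimates above.
llama_70b = [0.20, 0.10, 0.10, 0.25, 0.35]
llama_8b = [0.38, 0.15, 0.12, 0.20, 0.15]

print(cumulative_boundaries(llama_70b))
print(cumulative_boundaries(llama_8b))
```

The last boundary should land at 1.0 for every bar; a different value would indicate that the segment estimates for that model are inconsistent.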

### Key Observations

* **Clustering of Distributions:** Several models exhibit remarkably similar rating distributions.

* The Mistral models (Mistral-7B-Instruct, Mistral-8x22B-Instruct-v0.1) and the Qwen2.5 models (Qwen2.5-0.5B-Instruct-v0.1, Qwen2.5-32B-Instruct, Qwen2.5-72B-Instruct) all share a nearly identical distribution: approximately 20% Rating 0, 15% Rating 1, 15% Rating 2, 30% Rating 3, and 20% Rating 4. This suggests a common performance profile among these groups.

* The Claude models (claude-3-haiku-20240307, claude-3-sonnet-20240229) and one of the GPT-4o variants (gpt-4o-2024-05-13) also share an identical distribution: approximately 10% Rating 0, 10% Rating 1, 10% Rating 2, 30% Rating 3, and 40% Rating 4. This indicates a high proportion of top ratings (3 and 4) for these models.

* **Outliers/Distinct Distributions:**

* Llama-3.1-70B-Instruct stands out among the Llama models, with a much higher proportion of Rating 4 (approximately 35%, versus about 15% for the others) and a lower proportion of Rating 0 (approximately 20%, versus roughly 38% to 45%).

* Llama-3.1-8B-Instruct and gpt-4o-mini-2024-07-18 have very similar distributions, characterized by a higher proportion of Rating 0 (around 38%) and lower proportions of higher ratings compared to the Claude/gpt-4o-2024-05-13 group.

* **Dominance of Lower Ratings:** For many models (e.g., Llama-3.1-8B-Instruct, Llama-3.2-1B-Instruct, Llama-3.2-3B-Instruct, gpt-4o-mini-2024-07-18), Rating 0 constitutes the largest single proportion, suggesting that a substantial portion of responses did not meet a high standard.

* **Dominance of Higher Ratings:** Conversely, the Claude models and gpt-4o-2024-05-13 receive mostly Ratings 3 and 4 (about 70% combined), indicating superior performance according to this evaluator.

### Interpretation

This chart provides a comparative analysis of the performance of various language models as judged by a specific evaluator ("gpt-4o-2024-08-06"). The data suggests that different models have distinct performance characteristics.

The clustering of distributions for the Mistral and Qwen2.5 models implies that these models, despite their different parameter counts or versions, are being evaluated similarly by this specific system. This could indicate a consistent evaluation criterion being applied, or that these models are inherently performing at a similar level of quality.

The Claude models and gpt-4o-2024-05-13 appear to be the top performers in this evaluation, consistently receiving higher ratings (3 and 4). This suggests they are more adept at generating responses that satisfy the evaluator's criteria.

The Llama models show more varied performance. Llama-3.1-70B-Instruct seems to perform better than some of its smaller Llama counterparts, particularly in achieving higher ratings. Llama-3.1-8B-Instruct and gpt-4o-mini-2024-07-18, on the other hand, show a tendency towards lower ratings, indicating potential areas for improvement.

The presence of a significant proportion of Rating 0 for several models suggests that the evaluation criteria are stringent, or that these models struggle with certain types of prompts or tasks. Conversely, the high proportion of Ratings 3 and 4 for the top-performing models highlights their strengths.

In essence, the chart allows for a quick assessment of which models are generally perceived as better by the evaluator, and which might require further development or fine-tuning. The consistency in some distributions is a notable finding, potentially pointing to shared architectural similarities or training data influences.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Stacked Bar Chart: Rating Distribution by Model

### Overview

The image presents a stacked bar chart visualizing the rating distribution for various language models, as evaluated by "gpt-4o-2024-08-06". The chart displays the proportion of each rating (0 to 4) for each model. The x-axis lists the model names, and the y-axis represents the proportion, ranging from 0.0 to 1.0.

### Components/Axes

* **Title:** "Rating Distribution by Model"

* **Evaluator:** "gpt-4o-2024-08-06" (located at the top of the chart)

* **X-axis Label:** Model Name

* **Y-axis Label:** Proportion

* **Y-axis Scale:** 0.0 to 1.0, with increments of 0.2

* **Legend:** Located in the top-right corner, mapping colors to ratings:
    * Blue: 0
    * Light Blue: 1
    * Purple: 2
    * Pink: 3
    * Red: 4

* **Models (X-axis):**
    * Llama-3-70B-Instruct
    * Llama-3-8B-Instruct
    * Llama-3-2-1B-Instruct
    * Mistral-7B-Instruct
    * Mistral-8x22B-Instruct-v0.1
    * Mixtral-8x7B-Instruct-v0.1
    * Qwen2-3.5-0.5B-Instruct
    * Qwen2-5-32B-Instruct
    * Qwen2-5-3B-Instruct
    * Qwen2-5-7B-Instruct
    * Qwen2-5-7B-Instruct-20240307
    * claude-3-haiku-20240229
    * claude-3-sonnet-2024-05-13
    * gpt-4o-mini-2024-07-18

### Detailed Analysis

The chart consists of stacked bars, each representing a model. The height of each segment within a bar indicates the proportion of responses receiving that specific rating.

* **Llama-3-70B-Instruct:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.2 proportion of rating 2, 0.3 proportion of rating 3, and 0.35 proportion of rating 4.

* **Llama-3-8B-Instruct:** Approximately 0.1 proportion of rating 0, 0.15 proportion of rating 1, 0.2 proportion of rating 2, 0.25 proportion of rating 3, and 0.3 proportion of rating 4.

* **Llama-3-2-1B-Instruct:** Approximately 0.2 proportion of rating 0, 0.2 proportion of rating 1, 0.2 proportion of rating 2, 0.2 proportion of rating 3, and 0.2 proportion of rating 4.

* **Mistral-7B-Instruct:** Approximately 0.1 proportion of rating 0, 0.1 proportion of rating 1, 0.2 proportion of rating 2, 0.3 proportion of rating 3, and 0.3 proportion of rating 4.

* **Mistral-8x22B-Instruct-v0.1:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.15 proportion of rating 2, 0.3 proportion of rating 3, and 0.4 proportion of rating 4.

* **Mixtral-8x7B-Instruct-v0.1:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.15 proportion of rating 2, 0.25 proportion of rating 3, and 0.45 proportion of rating 4.

* **Qwen2-3.5-0.5B-Instruct:** Approximately 0.2 proportion of rating 0, 0.2 proportion of rating 1, 0.2 proportion of rating 2, 0.2 proportion of rating 3, and 0.2 proportion of rating 4.

* **Qwen2-5-32B-Instruct:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.15 proportion of rating 2, 0.3 proportion of rating 3, and 0.4 proportion of rating 4.

* **Qwen2-5-3B-Instruct:** Approximately 0.1 proportion of rating 0, 0.15 proportion of rating 1, 0.2 proportion of rating 2, 0.25 proportion of rating 3, and 0.3 proportion of rating 4.

* **Qwen2-5-7B-Instruct:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.15 proportion of rating 2, 0.3 proportion of rating 3, and 0.4 proportion of rating 4.

* **Qwen2-5-7B-Instruct-20240307:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.15 proportion of rating 2, 0.3 proportion of rating 3, and 0.4 proportion of rating 4.

* **claude-3-haiku-20240229:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.15 proportion of rating 2, 0.3 proportion of rating 3, and 0.4 proportion of rating 4.

* **claude-3-sonnet-2024-05-13:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.15 proportion of rating 2, 0.3 proportion of rating 3, and 0.4 proportion of rating 4.

* **gpt-4o-mini-2024-07-18:** Approximately 0.05 proportion of rating 0, 0.1 proportion of rating 1, 0.15 proportion of rating 2, 0.3 proportion of rating 3, and 0.4 proportion of rating 4.

### Key Observations

* Most models exhibit a similar distribution, with a higher proportion of ratings 3 and 4.

* Llama-3-2-1B-Instruct and Qwen2-3.5-0.5B-Instruct have uniform distributions (about 0.2 for every rating), indicating more variable response quality than the other models.

* Models like Mixtral-8x7B-Instruct-v0.1 and Qwen2-5-32B-Instruct show a relatively higher proportion of rating 4, suggesting better performance.

* The proportion of rating 0 is low for most models (about 0.05 to 0.1); the exceptions are Llama-3-2-1B-Instruct and Qwen2-3.5-0.5B-Instruct at about 0.2.

### Interpretation

The chart demonstrates the performance of various language models based on a rating scale from 0 to 4, as judged by the "gpt-4o-2024-08-06" evaluator. The stacked bar chart effectively visualizes the distribution of these ratings for each model, allowing for a quick comparison of their relative strengths and weaknesses. The consistent trend of higher ratings (3 and 4) across most models suggests a generally high level of performance. The variability observed in Llama-3-2-1B-Instruct could indicate a wider range of response quality, while the higher proportion of top ratings for models like Mixtral-8x7B-Instruct-v0.1 and Qwen2-5-32B-Instruct suggests they consistently generate higher-quality responses according to this evaluator. The evaluator's identity is crucial context; the results are specific to its criteria and biases. The data suggests that the evaluator generally finds the models to be performing well, but there are noticeable differences in the consistency and quality of their outputs.
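One way to put a number on how "uniform" or "variable" a rating distribution is would be its Shannon entropy. This measure is an assumption introduced here for illustration and is not used by the chart or its source. The sketch compares the flat estimate given above for Llama-3-2-1B-Instruct with the top-heavy estimate for Mixtral-8x7B-Instruct-v0.1; the function name `entropy_bits` is hypothetical.

```python
import math


def entropy_bits(proportions):
    """Shannon entropy in bits; zero-probability ratings contribute nothing."""
    return -sum(p * math.log2(p) for p in proportions if p > 0)


# Estimates for ratings 0..4, copied from the Detailed Analysis of this section.
uniform = [0.2, 0.2, 0.2, 0.2, 0.2]        # Llama-3-2-1B-Instruct
skewed = [0.05, 0.1, 0.15, 0.25, 0.45]     # Mixtral-8x7B-Instruct-v0.1

print(f"uniform: {entropy_bits(uniform):.3f} bits")
print(f"skewed:  {entropy_bits(skewed):.3f} bits")
print(f"maximum for five ratings: {math.log2(5):.3f} bits")
```

A flat distribution reaches the maximum of log2(5) bits for five rating levels, while a distribution concentrated on a few ratings scores lower. Entropy says nothing about whether the concentration sits on high or low ratings, so it complements the per-rating proportions rather than replacing them.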

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Stacked Bar Chart: Rating Distribution by Model

### Overview

This image is a stacked bar chart titled "Rating Distribution by Model" with a subtitle indicating the evaluator is "gpt-4o-2024-08-06". It displays the proportional distribution of ratings (0 through 4) given by this evaluator to 16 different large language models. The chart is designed to compare how different models performed according to this specific evaluation run.

### Components/Axes

* **Chart Title:** "Rating Distribution by Model"

* **Subtitle/Evaluator:** "Evaluator: gpt-4o-2024-08-06"

* **Y-Axis:**
    * **Label:** "Proportion"
    * **Scale:** Linear, from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:**
    * **Label:** None explicit. Contains the names of 16 models, listed below.
    * **Model Names (from left to right):**
        1. Llama-3.1-70B-Instruct
        2. Llama-3.1-8B-Instruct
        3. Llama-3.2-1B-Instruct
        4. Llama-3.2-3B-Instruct
        5. Mistral-7B-Instruct-v0.1
        6. Mixtral-8x22B-Instruct-v0.1
        7. Mixtral-8x7B-Instruct-v0.1
        8. Qwen2.5-0.5B-Instruct
        9. Qwen2.5-5B-Instruct
        10. Qwen2.5-32B-Instruct
        11. Qwen2.5-3B-Instruct
        12. Qwen2.5-72B-Instruct
        13. Qwen2.5-7B-Instruct
        14. claude-3-haiku-20240307
        15. claude-3-sonnet-20240229
        16. gpt-4o-2024-05-13
        17. gpt-4o-mini-2024-07-18

* **Legend:**
    * **Position:** Top-right corner of the chart area.
    * **Title:** "Rating"
    * **Categories & Colors:**

| Rating | Color |
| :----- | :---- |
| 0 | Blue |
| 1 | Green |
| 2 | Red |
| 3 | Purple |
| 4 | Gold/Yellow |

### Detailed Analysis

Each bar represents 100% (proportion 1.0) of the ratings for a given model, segmented by color according to the rating received. The approximate proportion for each segment is estimated based on the y-axis scale.

1. **Llama-3.1-70B-Instruct:** Dominated by rating 4 (gold, ~45%), followed by rating 3 (purple, ~15%), rating 2 (red, ~10%), rating 1 (green, ~10%), and rating 0 (blue, ~20%).

2. **Llama-3.1-8B-Instruct:** Large rating 0 (blue, ~38%), significant rating 1 (green, ~22%), rating 2 (red, ~18%), rating 3 (purple, ~15%), small rating 4 (gold, ~7%).

3. **Llama-3.2-1B-Instruct:** Very large rating 0 (blue, ~65%), moderate rating 1 (green, ~20%), small rating 2 (red, ~10%), very small rating 3 (purple, ~5%).

4. **Llama-3.2-3B-Instruct:** Large rating 0 (blue, ~45%), large rating 1 (green, ~25%), moderate rating 2 (red, ~15%), small rating 3 (purple, ~10%), very small rating 4 (gold, ~5%).

5. **Mistral-7B-Instruct-v0.1:** Large rating 0 (blue, ~52%), large rating 1 (green, ~20%), moderate rating 2 (red, ~12%), moderate rating 3 (purple, ~12%), small rating 4 (gold, ~4%).

6. **Mixtral-8x22B-Instruct-v0.1:** Very large rating 0 (blue, ~72%), moderate rating 1 (green, ~15%), small rating 2 (red, ~8%), small rating 3 (purple, ~5%).

7. **Mixtral-8x7B-Instruct-v0.1:** Large rating 0 (blue, ~20%), large rating 1 (green, ~18%), moderate rating 2 (red, ~15%), large rating 3 (purple, ~27%), moderate rating 4 (gold, ~20%).

8. **Qwen2.5-0.5B-Instruct:** Large rating 0 (blue, ~23%), large rating 1 (green, ~16%), moderate rating 2 (red, ~14%), large rating 3 (purple, ~27%), moderate rating 4 (gold, ~20%).

9. **Qwen2.5-5B-Instruct:** Very large rating 0 (blue, ~81%), small rating 1 (green, ~12%), very small rating 2 (red, ~7%).

10. **Qwen2.5-32B-Instruct:** Large rating 0 (blue, ~21%), large rating 1 (green, ~13%), moderate rating 2 (red, ~14%), large rating 3 (purple, ~25%), moderate rating 4 (gold, ~27%).

11. **Qwen2.5-3B-Instruct:** Large rating 0 (blue, ~33%), large rating 1 (green, ~18%), moderate rating 2 (red, ~20%), large rating 3 (purple, ~19%), small rating 4 (gold, ~10%).

12. **Qwen2.5-72B-Instruct:** Large rating 0 (blue, ~22%), large rating 1 (green, ~17%), moderate rating 2 (red, ~14%), large rating 3 (purple, ~22%), moderate rating 4 (gold, ~25%).

13. **Qwen2.5-7B-Instruct:** Large rating 0 (blue, ~29%), large rating 1 (green, ~20%), moderate rating 2 (red, ~17%), large rating 3 (purple, ~19%), moderate rating 4 (gold, ~15%).

14. **claude-3-haiku-20240307:** Large rating 0 (blue, ~19%), large rating 1 (green, ~12%), moderate rating 2 (red, ~12%), large rating 3 (purple, ~22%), moderate rating 4 (gold, ~35%).

15. **claude-3-sonnet-20240229:** Large rating 0 (blue, ~38%), large rating 1 (green, ~17%), moderate rating 2 (red, ~18%), large rating 3 (purple, ~17%), small rating 4 (gold, ~10%).

16. **gpt-4o-2024-05-13:** Large rating 0 (blue, ~24%), large rating 1 (green, ~15%), moderate rating 2 (red, ~14%), large rating 3 (purple, ~22%), moderate rating 4 (gold, ~25%).

17. **gpt-4o-mini-2024-07-18:** Large rating 0 (blue, ~33%), large rating 1 (green, ~17%), moderate rating 2 (red, ~17%), large rating 3 (purple, ~20%), moderate rating 4 (gold, ~13%).
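To show how each bar fills 100% with segments stacked from rating 0 upward, the percentages above can be rendered as a plain-text bar. This is a minimal sketch for illustration: the function name `render_bar`, the 50-character width, and the use of the rating digit as the fill symbol are all assumptions, and the inputs are three of the approximate readings listed above, reordered as ratings 0 to 4.

```python
def render_bar(percentages, width=50, symbols="01234"):
    """Render one stacked bar as text, one symbol per rating level.

    Segment edges come from the running total, so the bar is always exactly
    `width` characters long even when individual segments round unevenly.
    Python's round() resolves exact .5 ties to the nearest even integer.
    """
    total = sum(percentages)
    if total <= 0:
        raise ValueError("percentages must sum to a positive value")
    pieces = []
    running = 0
    previous_edge = 0
    for symbol, share in zip(symbols, percentages):
        running += share
        edge = round(running * width / total)
        pieces.append(symbol * (edge - previous_edge))
        previous_edge = edge
    return "".join(pieces)


# Approximate percentages for ratings 0..4, taken from the list above.
readings = {
    "Llama-3.1-70B-Instruct": [20, 10, 10, 15, 45],
    "Qwen2.5-5B-Instruct": [81, 12, 7, 0, 0],
    "claude-3-haiku-20240307": [19, 12, 12, 22, 35],
}

for name, percentages in readings.items():
    print(f"{name:<26} {render_bar(percentages)}")
```

Reading a row left to right corresponds to reading a bar in the chart from bottom to top; a rating with no visible segment simply contributes no characters.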

### Key Observations

* **High Variance in Rating 0:** The proportion of the lowest rating (0, blue) varies dramatically, from a very high ~81% for `Qwen2.5-5B-Instruct` to a relatively low ~19% for `claude-3-haiku-20240307`.

* **Top Performers by Low Rating 0:** Models with the smallest blue segments (suggesting fewer very poor ratings) include `claude-3-haiku-20240307` (~19%), `Llama-3.1-70B-Instruct` and `Mixtral-8x7B-Instruct-v0.1` (~20% each), and `Qwen2.5-32B-Instruct` (~21%).

* **Top Performers by High Rating 4:** Models with the largest gold segments (suggesting more top ratings) include `Llama-3.1-70B-Instruct`, `claude-3-haiku-20240307`, `Qwen2.5-32B-Instruct`, and `Qwen2.5-72B-Instruct`.

* **Middle-Tier Clustering:** Many models, particularly the Qwen2.5 series and the GPT-4o variants, show a more balanced distribution across ratings 0, 1, 3, and 4, with rating 2 (red) often being a smaller middle segment.

* **Outlier - Qwen2.5-5B-Instruct:** This model is a clear outlier with an overwhelmingly high proportion of rating 0 and no visible rating 4 segment, indicating very poor performance according to this evaluator.

### Interpretation

This chart visualizes the performance assessment of various LLMs by the `gpt-4o-2024-08-06` model acting as an evaluator. The data suggests a significant spread in perceived quality.

* **Performance Hierarchy:** The evaluator appears to favor `claude-3-haiku-20240307` and `Llama-3.1-70B-Instruct`, giving them the highest proportions of top ratings (4). Conversely, it rates `Qwen2.5-5B-Instruct` and `Llama-3.2-1B-Instruct` very poorly, with high proportions of the lowest rating (0).

* **Model Size vs. Performance:** There isn't a simple linear relationship between model size (e.g., 70B vs 8B) and rating. For instance, `Llama-3.1-70B-Instruct` performs much better than its 8B counterpart, but `Qwen2.5-32B-Instruct` and `Qwen2.5-72B-Instruct` have similar, strong distributions, while the very small `Qwen2.5-0.5B-Instruct` also shows a respectable distribution with a notable rating 4 segment.

* **Evaluator Bias Context:** It is critical to note that the ratings are generated by a single AI model (`gpt-4o-2024-08-06`). This distribution reflects that specific model's judgment criteria and potential biases, not an absolute ground truth. The chart is most useful for comparing relative performance *as judged by this particular evaluator*.

* **Anomaly Justification:** The extreme result for `Qwen2.5-5B-Instruct` could indicate a specific failure mode for that model on the evaluation tasks, a mismatch between the model's capabilities and the test set, or a potential error in the evaluation pipeline for that specific run.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Rating Distribution by Model

### Overview

The chart displays the distribution of ratings (0–4) for various AI models evaluated by the "gpt-4o-2024-08-06" evaluator. Each bar represents a model, with segments colored according to the proportion of each rating. The y-axis shows the proportion of ratings (0.0–1.0), while the x-axis lists model names and versions.

### Components/Axes

- **Title**: "Rating Distribution by Model"

- **X-axis**: Model names and versions (e.g., "Llama-3-1-70B-Instruct", "Mistral-7B-Instruct-v0.1", "Claude-3-haiku-20240307").

- **Y-axis**: "Proportion" (0.0–1.0), with ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

- **Legend**:
    - **Blue**: Rating 0
    - **Green**: Rating 1
    - **Red**: Rating 2
    - **Purple**: Rating 3
    - **Yellow**: Rating 4

### Detailed Analysis

- **Model Ratings**:
    - **Llama-3-1-70B-Instruct**: High proportion of yellow (rating 4) and purple (rating 3), with smaller segments for lower ratings.
    - **Llama-3-1-8B-Instruct**: Balanced distribution, with notable green (rating 1) and red (rating 2) segments.
    - **Mistral-7B-Instruct-v0.1**: Dominant yellow (rating 4) and purple (rating 3), with minimal blue (rating 0).
    - **Claude-3-haiku-20240307**: High yellow (rating 4) and purple (rating 3), with a smaller red (rating 2) segment.
    - **gpt-4o-mini-2024-07-18**: Moderate yellow (rating 4) and purple (rating 3), with a significant red (rating 2) segment.

- **Proportions**:
    - Most models show a strong presence of higher ratings (3–4), with yellow (rating 4) being the largest segment for many.
    - Lower ratings (0–2) are less common, though some models (e.g., Llama-3-1-8B-Instruct) have notable red (rating 2) segments.

### Key Observations

- **High-Performing Models**: Models like "Mistral-7B-Instruct-v0.1" and "Claude-3-haiku-20240307" exhibit a high proportion of top ratings (4), suggesting strong performance.

- **Moderate Performers**: "Llama-3-1-8B-Instruct" and "gpt-4o-mini-2024-07-18" show a mix of mid-range ratings (2–3), indicating variability in performance.

- **Outliers**: "Llama-3-1-70B-Instruct" has the highest proportion of rating 4, while "Llama-3-1-8B-Instruct" has a relatively high proportion of rating 2.

### Interpretation

The chart highlights that most models evaluated by "gpt-4o-2024-08-06" received predominantly high ratings (3–4), with yellow (rating 4) being the most common. This suggests that the evaluator generally found the models to be of high quality. However, variations exist:

- **Model Size vs. Performance**: Larger models (e.g., Llama-3-1-70B-Instruct) may perform better, as indicated by their higher rating 4 proportions.

- **Version Differences**: Newer versions (e.g., Mistral-7B-Instruct-v0.1) often show improved ratings compared to older ones.

- **Rating Distribution**: The presence of red (rating 2) segments in some models (e.g., Llama-3-1-8B-Instruct) indicates that while some models are strong, others have notable weaknesses.

The data underscores the importance of model architecture and versioning in performance, with higher-rated models likely being more reliable or accurate for specific tasks. The evaluator's consistent use of high ratings (4) across models suggests a generally positive assessment, but the distribution of lower ratings (0–2) highlights areas for improvement.
