## Scatter Plot: Syntax Accuracy vs. LMArena Rank

### Overview

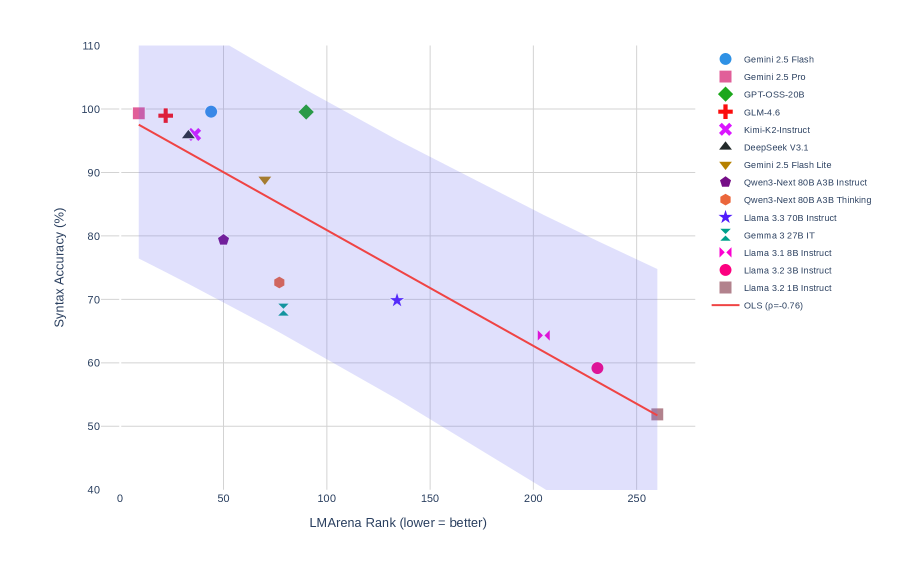

The image is a scatter plot visualizing the relationship between **LMArena Rank** (x-axis) and **Syntax Accuracy (%)** (y-axis) for 14 AI language models. A red Ordinary Least Squares (OLS) regression line with a confidence interval (purple shaded area) is overlaid. The legend maps model names to colored symbols.

---

### Components/Axes

- **X-axis (LMArena Rank)**:
  - Label: "LMArena Rank (lower = better)"
  - Tick marks: 0 to 250 in increments of 50
- **Y-axis (Syntax Accuracy)**:
  - Label: "Syntax Accuracy (%)"
  - Tick marks: 40% to 110% in increments of 10%
- **Legend**:
  - Located in the top-right corner.
  - 14 entries, each mapping a model name to a colored marker symbol (e.g., "Gemini 2.5 Flash" = blue circle, "GPT-OSS-20B" = green diamond).
- **Regression Line**:
  - Red line labeled "OLS (p=0.76)".
  - Shaded purple area represents the 95% confidence interval.

---

### Detailed Analysis

1. **Data Points** (approximate coordinates read from the plot, given as rank, accuracy %):
   - **Gemini 2.5 Flash** (blue circle): (50, 100)
   - **Gemini 2.5 Pro** (pink square): (0, 100)
   - **GPT-OSS-20B** (green diamond): (100, 100)
   - **GLM-4.6** (red cross): (20, 98)
   - **Kimi-K2-Instruct** (purple X): (40, 95)
   - **DeepSeek V3.1** (black triangle): (30, 93)
   - **Gemini 2.5 Flash Lite** (brown triangle): (60, 88)
   - **Qwen3-Next 80B A3B Instruct** (purple pentagon): (50, 79)
   - **Qwen3-Next 80B A3B Thinking** (orange hexagon): (70, 72)
   - **Llama 3.3 70B Instruct** (blue star): (130, 70)
   - **Gemma 3 27B IT** (teal triangle): (80, 68)
   - **Llama 3.1 8B Instruct** (pink bowtie): (210, 64)
   - **Llama 3.2 3B Instruct** (pink circle): (230, 59)
   - **Llama 3.2 1B Instruct** (brown hexagon): (260, 52)
2. **Regression Line**:
   - Slope: negative (numerically higher, i.e. worse, ranks are associated with lower accuracy).
   - Intercept: ~100% at rank 0.
   - Confidence interval: wide, indicating substantial uncertainty in the slope estimate.
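The listed coordinates can be used to re-fit the line as a rough sanity check. The sketch below is a minimal pure-Python least-squares fit; `POINTS` and `ols_fit` are illustrative names, and the values are the approximate visual readings above, not the study's underlying data.

```python
import math

# Approximate (LMArena rank, syntax accuracy %) pairs read off the plot.
# These are visual estimates only, not the original data.
POINTS = {
    "Gemini 2.5 Flash": (50, 100),
    "Gemini 2.5 Pro": (0, 100),
    "GPT-OSS-20B": (100, 100),
    "GLM-4.6": (20, 98),
    "Kimi-K2-Instruct": (40, 95),
    "DeepSeek V3.1": (30, 93),
    "Gemini 2.5 Flash Lite": (60, 88),
    "Qwen3-Next 80B A3B Instruct": (50, 79),
    "Qwen3-Next 80B A3B Thinking": (70, 72),
    "Llama 3.3 70B Instruct": (130, 70),
    "Gemma 3 27B IT": (80, 68),
    "Llama 3.1 8B Instruct": (210, 64),
    "Llama 3.2 3B Instruct": (230, 59),
    "Llama 3.2 1B Instruct": (260, 52),
}


def ols_fit(xs, ys):
    """Return (slope, intercept, pearson_r) for the least-squares line y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


ranks = [x for x, _ in POINTS.values()]
accuracies = [y for _, y in POINTS.values()]
slope, intercept, r = ols_fit(ranks, accuracies)
print(f"slope={slope:.3f} %/rank, intercept={intercept:.1f}%, r={r:.2f}")
# prints: slope=-0.173 %/rank, intercept=97.7%, r=-0.84
```

On these readings the line drops by roughly 0.17 percentage points per rank position from an intercept near 98%, and r is about -0.84. A correlation that strong across 14 points would normally be highly significant, which is hard to square with the legend's "p=0.76" if that number is a p-value; because the coordinates are only visual estimates, the original figure should be treated as authoritative.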

---

### Key Observations

1. **High-Performing Models**:
   - Gemini 2.5 Flash, Gemini 2.5 Pro, and GPT-OSS-20B all sit at the top of the y-axis (~100% accuracy) despite ranks ranging from about 0 to 100.
   - GLM-4.6 (98%) and Kimi-K2-Instruct (95%) also perform strongly.
2. **Low-Performing Models**:
   - Llama 3.2 1B Instruct (52%) and Llama 3.2 3B Instruct (59%) have the lowest accuracies.
3. **Regression Line**:
   - The OLS line (p=0.76) indicates that the negative relationship between rank and accuracy is **not statistically significant** (p > 0.05).
4. **Confidence Interval**:
   - The shaded purple area spans ~40% of the y-axis range, reflecting high variability in the data.
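To see what a p-value of 0.76 implies for 14 data points, the test behind it can be sketched. This assumes the reported value is the standard two-sided t-test on the OLS slope, which for simple regression reduces to t = r * sqrt(n - 2) / sqrt(1 - r^2) with n - 2 degrees of freedom. `slope_p_value` is a hypothetical helper that integrates the Student t density numerically (Simpson's rule) so that only the standard library is needed.

```python
import math


def t_pdf(t, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_coef = (
        math.lgamma((df + 1) / 2)
        - math.lgamma(df / 2)
        - 0.5 * math.log(df * math.pi)
    )
    return math.exp(log_coef) * (1 + t * t / df) ** (-(df + 1) / 2)


def slope_p_value(r, n, steps=10_000):
    """Two-sided p-value for H0: slope == 0 in simple OLS, given Pearson r and n points.

    Assumes |r| < 1 and n > 2. `steps` must be even for Simpson's rule.
    """
    df = n - 2
    t = abs(r) * math.sqrt(df / (1 - r * r))
    # Simpson's rule for P(0 < T < |t|).
    h = t / steps
    area = t_pdf(0.0, df) + t_pdf(t, df)
    for i in range(1, steps):
        area += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area *= h / 3
    # By symmetry, P(|T| >= |t|) = 1 - 2 * P(0 < T < |t|).
    return 1 - 2 * area


# 14 models -> 12 degrees of freedom.
for r in (0.09, 0.53, 0.84):
    print(f"|r| = {r:.2f} -> p = {slope_p_value(r, 14):.4f}")
```

Under that assumption, p ≈ 0.76 with 14 models corresponds to |r| of only about 0.09, an almost flat relationship, whereas the conventional 0.05 threshold is crossed near |r| ≈ 0.53.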

---

### Interpretation

- **Model Performance**: Top-tier models (Gemini 2.5 Pro, Gemini 2.5 Flash, GPT-OSS-20B) reach near-perfect syntax accuracy across a range of ranks, suggesting rank may not fully capture this capability.

- **Llama Models**: The worst-ranked Llama variants (3.2 1B and 3B, ranks ~230 to 260) underperform substantially, pointing to possible limitations of smaller models.

- **Statistical Insight**: A p-value of 0.76 means a slope at least this steep would be common even if rank and accuracy were unrelated, so the negative trend cannot be distinguished from random variation.

- **Confidence Interval**: The wide shaded area indicates that the fitted line itself is imprecisely estimated, which limits what the regression can say about this dataset.
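The distinction in the last bullet can be made concrete: a confidence band for the fitted line and a prediction interval for a single new model have different widths. The sketch below assumes the shaded area is a standard pointwise 95% confidence band for simple OLS, reuses the approximate coordinates from the data-point list, and hard-codes the two-sided 95% Student t critical value for 12 degrees of freedom (about 2.179); `band_half_widths` is an illustrative name.

```python
import math

# Approximate (rank, accuracy %) readings from the plot; visual estimates only.
RANKS = [50, 0, 100, 20, 40, 30, 60, 50, 70, 130, 80, 210, 230, 260]
ACCURACY = [100, 100, 100, 98, 95, 93, 88, 79, 72, 70, 68, 64, 59, 52]

# Two-sided 95% critical value of Student's t with n - 2 = 12 degrees of freedom.
T_CRIT_95_DF12 = 2.179


def band_half_widths(xs, ys, x0, t_crit):
    """Return (confidence, prediction) half-widths of a simple OLS fit at x0.

    confidence: uncertainty in the fitted mean line (the usual shaded band)
    prediction: uncertainty for one new observation at x0 (always wider)
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sxx
    intercept = mean_y - slope * mean_x
    # Residual standard error with n - 2 degrees of freedom.
    sse = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    s = math.sqrt(sse / (n - 2))
    leverage = 1 / n + (x0 - mean_x) ** 2 / sxx
    return t_crit * s * math.sqrt(leverage), t_crit * s * math.sqrt(1 + leverage)


# 95 is the mean of the approximate ranks; 0 and 260 are the extremes.
for x0 in (0, 95, 260):
    ci, pi = band_half_widths(RANKS, ACCURACY, x0, T_CRIT_95_DF12)
    print(f"rank {x0:>3}: mean-line CI ±{ci:.1f} pts, prediction interval ±{pi:.1f} pts")
```

The confidence band is narrowest at the mean rank and widens toward both ends of the x-axis, which is why such bands flare out; the prediction interval is wider everywhere because it also includes the scatter of individual models around the line.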

This plot underscores the disconnect between competitive ranking (LMArena) and syntactic accuracy, emphasizing the need for domain-specific evaluation metrics.