## Diagram: MLE-Bench Competition Workflow

### Overview

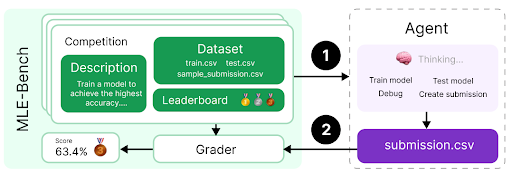

The diagram illustrates the workflow for an MLE-Bench competition, showing how an agent interacts with competition components to produce a submission. It includes competition details (description, dataset, leaderboard, score) and the agent's process (thinking, model training/testing, debugging, submission creation).

### Components

#### Left Section (Competition Details):

- **Competition**: Contains sub-components:

  - **Description**: "Train a model to achieve the highest accuracy..."

  - **Dataset**: Files listed as `train.csv`, `test.csv`, `sample_submission.csv`.

  - **Leaderboard**: Visualized with gold, silver, and bronze medals.

  - **Score**: 63.4% with a gold medal icon.

- **Grader**: A component that evaluates submissions, connected to the competition via an arrow.
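The roles of the three dataset files can be sketched in a few lines. This is a minimal illustration using only the Python standard library; the file contents and the column names (`id`, `feature`, `label`) are invented for the example and do not come from the diagram.

```python
import csv
import io

# Made-up contents standing in for train.csv, test.csv and sample_submission.csv.
# Column names and values are assumptions for illustration only.
TRAIN_CSV = "id,feature,label\n1,0.2,0\n2,0.9,1\n3,0.7,1\n"
TEST_CSV = "id,feature\n4,0.1\n5,0.8\n"
SAMPLE_SUBMISSION_CSV = "id,label\n4,0\n5,0\n"


def read_rows(text):
    """Parse CSV text into a list of dicts, one per data row."""
    return list(csv.DictReader(io.StringIO(text)))


train_rows = read_rows(TRAIN_CSV)    # labeled rows used for fitting
test_rows = read_rows(TEST_CSV)      # unlabeled rows to predict
sample_rows = read_rows(SAMPLE_SUBMISSION_CSV)

# The sample submission fixes the expected columns and the ids to predict.
submission_columns = list(sample_rows[0].keys())
ids_match = [r["id"] for r in sample_rows] == [r["id"] for r in test_rows]

print(submission_columns)  # ['id', 'label']
print(ids_match)           # True
```

In this sketch the sample submission acts purely as a format contract: it names the output columns and lists the test ids that a prediction file must cover.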

#### Right Section (Agent Process):

- **Agent**: A box labeled "Thinking..." with a brain icon.

  - Actions listed: "Train model," "Test model," "Debug," "Create submission."

- **submission.csv**: A purple box representing the final output file.

#### Arrows/Flow:

1. **Arrow 1**: Connects the competition to the agent's "Thinking" step.

2. **Arrow 2**: Links the agent's actions to the `submission.csv` file.

3. **Arrow 3**: Connects the competition's score (63.4%) to the grader.

### Detailed Analysis

- **Competition**:

  - The dataset includes standard machine learning files (`train.csv` for training, `test.csv` for generating predictions, `sample_submission.csv` for formatting guidance).

  - The leaderboard uses gold, silver, and bronze medals to rank participants. The 63.4% score is shown with a gold medal, the top medal tier.

- **Agent**:

  - The workflow follows a typical ML pipeline: training → testing → debugging → submission.

  - The "Thinking" step suggests iterative problem-solving before execution.

### Key Observations

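- The agent’s score (63.4%) is shown with a gold medal, placing it in the top medal tier. The diagram does not show other entries, so it does not establish that this is the highest or best possible score.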

- The `sample_submission.csv` file likely provides a template for formatting predictions.

- The grader evaluates the submission and is linked to the 63.4% score, so it acts as the scoring and validation step that checks submissions against the competition criteria.
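A grader of this kind can be sketched as a function that first validates the submission's format and then scores it. The sketch below assumes an accuracy metric (in line with the description's "highest accuracy"), a hidden answer key with `id` and `label` columns, and a hypothetical function name `grade`; none of these details are given in the diagram.

```python
import csv
import io


def grade(submission_text, answers_text, id_col="id", label_col="label"):
    """Validate a submission against the answer key and return its accuracy.

    Raises ValueError if the columns or ids do not match the answer key.
    """
    submission = list(csv.DictReader(io.StringIO(submission_text)))
    answers = list(csv.DictReader(io.StringIO(answers_text)))

    if not submission or set(submission[0].keys()) != {id_col, label_col}:
        raise ValueError("submission is empty or has unexpected columns")

    predicted = {row[id_col]: row[label_col] for row in submission}
    expected = {row[id_col]: row[label_col] for row in answers}
    # Reject duplicate ids and any mismatch with the expected id set.
    if len(predicted) != len(submission) or predicted.keys() != expected.keys():
        raise ValueError("submission ids must match the test ids exactly once each")

    correct = sum(1 for key, label in expected.items() if predicted[key] == label)
    return correct / len(expected)


# Invented answer key and submission for illustration.
answers = "id,label\n7,1\n8,0\n9,1\n10,1\n"
submission = "id,label\n7,1\n8,1\n9,1\n10,0\n"
score = grade(submission, answers)
print(score)  # 0.5
```

Separating format checks from scoring means a malformed file fails loudly instead of silently receiving a low score.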

### Interpretation

This diagram represents a structured approach to competitive machine learning. The agent’s process mirrors real-world ML workflows, emphasizing iterative refinement. The 63.4% score is shown with a gold medal, which indicates a top-tier result on the leaderboard; the diagram alone does not show how it compares with other entries. The sample submission file underscores the importance of adhering to formatting standards, a common requirement in Kaggle-style competitions. The grader automates evaluation, so every submission is scored by the same procedure. Overall, the diagram brings together the agent’s modeling work, the competition’s components, and automated grading.
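One way to read the medal icons is as score thresholds on the leaderboard. The following sketch maps a score to a medal tier; the threshold values are hypothetical (the diagram states none), and the helper `medal_for` assumes a metric where higher is better, such as accuracy.

```python
def medal_for(score, thresholds):
    """Return the best medal whose threshold the score meets, or None.

    Assumes higher scores are better (for example, accuracy).
    """
    for medal in ("gold", "silver", "bronze"):
        if score >= thresholds[medal]:
            return medal
    return None


# Hypothetical thresholds; the diagram does not state any.
THRESHOLDS = {"gold": 0.60, "silver": 0.55, "bronze": 0.50}

print(medal_for(0.634, THRESHOLDS))  # gold
print(medal_for(0.52, THRESHOLDS))   # bronze
print(medal_for(0.40, THRESHOLDS))   # None
```

Under this reading, a gold medal means the score cleared the gold threshold, which is a weaker statement than being the single best entry.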