TECHNICAL ASSET FINGERPRINT

07c5dc2312aefbeb5eee6ad8

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart Type: Comparative Analysis of Risk Coverage and Receiver Operator Curves

### Overview

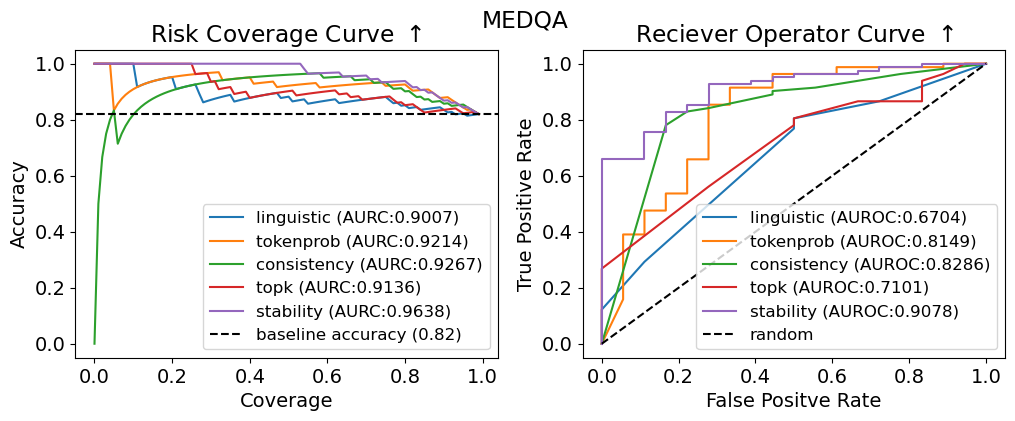

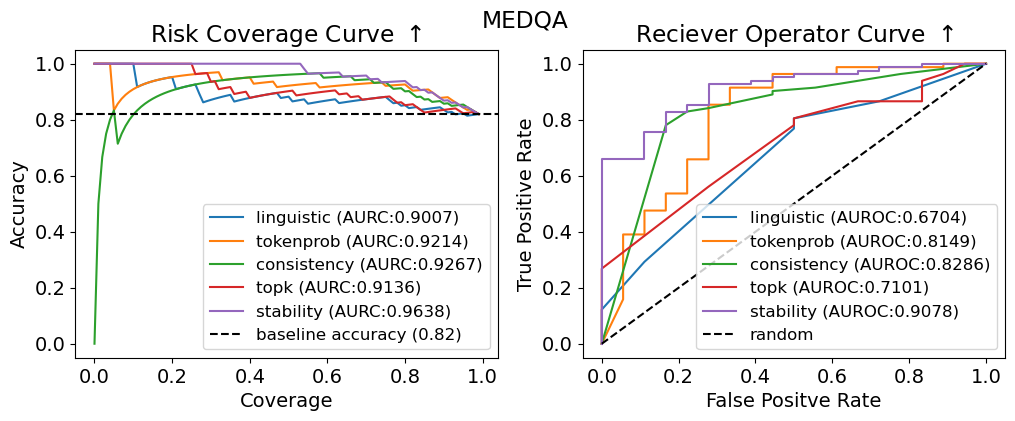

The image presents two charts side-by-side, both related to the MEDQA dataset. The left chart is a "Risk Coverage Curve," displaying accuracy as a function of coverage for different methods. The right chart is a "Receiver Operator Curve," showing the true positive rate against the false positive rate. Both charts compare the performance of 'linguistic', 'tokenprob', 'consistency', 'topk', and 'stability' methods.

### Components/Axes

**Left Chart: Risk Coverage Curve**

* **Title:** Risk Coverage Curve ↑

* **Y-axis:** Accuracy, ranging from 0.0 to 1.0 in increments of 0.2.

* **X-axis:** Coverage, ranging from 0.0 to 1.0 in increments of 0.2.

* **Legend (bottom-center):**

* linguistic (AURC:0.9007) - Blue line

* tokenprob (AURC:0.9214) - Orange line

* consistency (AURC:0.9267) - Green line

* topk (AURC:0.9136) - Red line

* stability (AURC:0.9638) - Purple line

* baseline accuracy (0.82) - Dashed black line

**Right Chart: Receiver Operator Curve**

* **Title:** Reciever Operator Curve ↑

* **Y-axis:** True Positive Rate, ranging from 0.0 to 1.0 in increments of 0.2.

* **X-axis:** False Positive Rate, ranging from 0.0 to 1.0 in increments of 0.2.

* **Legend (bottom-center):**

* linguistic (AUROC:0.6704) - Blue line

* tokenprob (AUROC:0.8149) - Orange line

* consistency (AUROC:0.8286) - Green line

* topk (AUROC:0.7101) - Red line

* stability (AUROC:0.9078) - Purple line

* random - Dashed black line

### Detailed Analysis

**Left Chart: Risk Coverage Curve**

* **baseline accuracy:** A horizontal dashed black line at approximately 0.82.

* **linguistic (AURC:0.9007):** The blue line starts at approximately 0.75 accuracy at 0 coverage, rises sharply, and then gradually decreases to approximately 0.85 accuracy at 1.0 coverage.

* **tokenprob (AURC:0.9214):** The orange line starts at approximately 0.70 accuracy at 0 coverage, rises sharply, and then gradually decreases to approximately 0.90 accuracy at 1.0 coverage.

* **consistency (AURC:0.9267):** The green line starts at approximately 0.0 accuracy at 0 coverage, rises sharply to approximately 0.90 accuracy at 0.1 coverage, and then gradually decreases to approximately 0.83 accuracy at 1.0 coverage.

* **topk (AURC:0.9136):** The red line starts at approximately 0.85 accuracy at 0 coverage, and then gradually decreases to approximately 0.83 accuracy at 1.0 coverage.

* **stability (AURC:0.9638):** The purple line starts at 1.0 accuracy at 0 coverage, and then remains at 1.0 accuracy until 0.1 coverage, and then gradually decreases to approximately 0.92 accuracy at 1.0 coverage.

**Right Chart: Receiver Operator Curve**

* **random:** A dashed black line representing a random classifier, rising linearly from (0.0, 0.0) to (1.0, 1.0).

* **linguistic (AUROC:0.6704):** The blue line rises linearly from (0.0, 0.0) to approximately (0.8, 0.6), and then remains constant.

* **tokenprob (AUROC:0.8149):** The orange line is a step function, rising in steps from (0.0, 0.0) to approximately (0.8, 0.9).

* **consistency (AUROC:0.8286):** The green line rises sharply from (0.0, 0.0) to approximately (0.2, 0.4), then rises more gradually to approximately (0.8, 0.9).

* **topk (AUROC:0.7101):** The red line rises sharply from (0.0, 0.0) to approximately (0.2, 0.3), then rises more gradually to approximately (0.8, 0.9).

* **stability (AUROC:0.9078):** The purple line is a step function, rising in steps from (0.0, 0.0) to approximately (0.2, 0.7), and then to approximately (0.9, 1.0).

### Key Observations

* In the Risk Coverage Curve, 'stability' consistently maintains the highest accuracy across different coverage levels, while 'consistency' starts with low accuracy but improves rapidly with increasing coverage.

* In the Receiver Operator Curve, 'stability' also demonstrates the best performance, closely followed by 'consistency' and 'tokenprob'. 'linguistic' and 'topk' perform relatively worse.

* The baseline accuracy in the Risk Coverage Curve is approximately 0.82.

### Interpretation

The charts provide a comparative analysis of different methods ('linguistic', 'tokenprob', 'consistency', 'topk', and 'stability') in the context of the MEDQA dataset. The Risk Coverage Curve illustrates how accuracy changes with increasing coverage, while the Receiver Operator Curve assesses the trade-off between true positive rate and false positive rate.

The 'stability' method appears to be the most effective overall, achieving high accuracy and a favorable true positive rate/false positive rate balance. 'consistency' and 'tokenprob' also perform well, while 'linguistic' and 'topk' show relatively weaker performance. The baseline accuracy in the Risk Coverage Curve provides a benchmark for evaluating the performance of these methods. The AUROC values in the legend quantify the overall performance of each method in the Receiver Operator Curve.
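
As a rough illustration of the true positive rate / false positive rate trade-off described above, the sketch below sweeps a threshold over confidence scores and records one ROC point per threshold. The function name `roc_points`, the toy scores, and the label encoding (1 = correct answer, 0 = incorrect) are assumptions made for this example; they are not taken from the figure or its source paper.

```python
def roc_points(scores, labels):
    """Return (fpr, tpr) pairs from sweeping a threshold over the scores.

    Assumed conventions (hypothetical, for illustration only):
    - a higher score means the method is more confident the answer is correct
    - labels use 1 for a correct answer and 0 for an incorrect one
    """
    positives = sum(labels)
    negatives = len(labels) - positives
    # Visit examples from most to least confident.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    points = [(0.0, 0.0)]
    tp = fp = 0
    for rank, i in enumerate(order):
        if labels[i] == 1:
            tp += 1
        else:
            fp += 1
        # Emit a point only after all examples tied at this score are counted.
        is_last = rank == len(order) - 1
        if is_last or scores[order[rank + 1]] != scores[i]:
            points.append((fp / negatives, tp / positives))
    return points


# Toy data, invented for the example.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3]
labels = [1, 1, 0, 1, 0, 0]
points = roc_points(scores, labels)
```

With few distinct scores the resulting curve moves in visible steps, which is one plausible reason some of the lines in the right chart look stepped.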


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Risk Coverage and Receiver Operator Curves

### Overview

The image presents two charts side-by-side. The left chart is a "Risk Coverage Curve" and the right chart is a "Receiver Operator Curve" (ROC). Both charts evaluate the performance of different methods (linguistic, tokenprob, consistency, topk, stability) against a baseline, likely in a medical context given the "MEDQA" title. Both charts share a similar color scheme for the methods being evaluated.

### Components/Axes

**Left Chart (Risk Coverage Curve):**

* **Title:** Risk Coverage Curve ↑ (The arrow indicates increasing is better)

* **X-axis:** Coverage (ranging from 0.0 to 1.0)

* **Y-axis:** Accuracy (ranging from 0.0 to 1.0)

* **Legend (top-right):**

* linguistic (AURC: 0.9007) - Yellow

* tokenprob (AURC: 0.9214) - Blue

* consistency (AURC: 0.9267) - Green

* topk (AURC: 0.9136) - Red

* stability (AURC: 0.9638) - Purple

* baseline accuracy (0.82) - Black dashed line

**Right Chart (Receiver Operator Curve):**

* **Title:** Receiver Operator Curve ↑ (The arrow indicates increasing is better)

* **X-axis:** False Positive Rate (ranging from 0.0 to 1.0)

* **Y-axis:** True Positive Rate (ranging from 0.0 to 1.0)

* **Legend (top-right):**

* linguistic (AUROC: 0.6704) - Yellow

* tokenprob (AUROC: 0.8149) - Blue

* consistency (AUROC: 0.8286) - Green

* topk (AUROC: 0.7101) - Red

* stability (AUROC: 0.9078) - Purple

* random - Black dashed line

### Detailed Analysis

**Left Chart (Risk Coverage Curve):**

* The 'stability' line (purple) shows the highest accuracy across all coverage values, consistently above 0.9. It slopes downward slightly as coverage increases.

* The 'consistency' line (green) is also high, generally above 0.9, and similar to 'stability' in its trend.

* 'tokenprob' (blue) and 'topk' (red) lines are slightly lower, hovering around 0.85-0.95.

* 'linguistic' (yellow) is the lowest of the methods, starting around 0.8 and decreasing more rapidly with increasing coverage.

* The baseline accuracy (black dashed) is a horizontal line at approximately 0.82. All methods outperform the baseline.

**Right Chart (Receiver Operator Curve):**

* The 'stability' line (purple) demonstrates the best performance, curving sharply upwards and reaching a True Positive Rate close to 1.0 with a False Positive Rate below 0.2.

* 'consistency' (green) and 'tokenprob' (blue) show moderate performance, with curves that are less steep than 'stability' but still above the 'random' line.

* 'linguistic' (yellow) and 'topk' (red) have the lowest performance, with curves that are closer to the 'random' line.

* The 'random' line (black dashed) represents the performance of a random classifier, serving as a benchmark.

### Key Observations

* 'Stability' consistently outperforms all other methods on both charts, indicating it is the most robust approach.

* The Risk Coverage Curve shows that higher coverage generally comes at the cost of accuracy, particularly for the 'linguistic' method.

* The ROC curve highlights the ability of each method to discriminate between true positives and false positives.

* The AUROC (Area Under the Receiver Operating Characteristic curve) values provided in the legend quantify the overall performance of each method. Higher AUROC values indicate better performance.
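
One way to read an AUROC value is as the probability that a randomly chosen correct answer receives a higher confidence score than a randomly chosen incorrect one, with ties counted as one half. The sketch below computes that quantity directly. The function name `auroc_pairwise` and the toy inputs are assumptions for illustration, and this is not necessarily how the values in the legend were produced.

```python
def auroc_pairwise(scores, labels):
    """AUROC as the fraction of (correct, incorrect) pairs ranked in order.

    Assumes labels are 1 (correct) or 0 (incorrect) and that both classes
    are present; ties between a correct and an incorrect score count as 0.5.
    """
    correct = [s for s, y in zip(scores, labels) if y == 1]
    incorrect = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for c in correct:
        for w in incorrect:
            if c > w:
                wins += 1.0
            elif c == w:
                wins += 0.5
    return wins / (len(correct) * len(incorrect))


# Toy data, invented for the example.
toy_scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3]
toy_labels = [1, 1, 0, 1, 0, 0]
toy_auroc = auroc_pairwise(toy_scores, toy_labels)  # 8 of 9 pairs ordered correctly

# A score that carries no information (all ties) gives 0.5, the "random" diagonal.
uninformative_auroc = auroc_pairwise([0.5] * 6, toy_labels)
```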

### Interpretation

These charts evaluate how well different methods estimate confidence in predictions in a medical question answering (MEDQA) context. The "Risk Coverage Curve" shows the accuracy obtained when only the most confident fraction of predictions (the coverage) is retained. The "Receiver Operator Curve" evaluates the trade-off between sensitivity (True Positive Rate) and specificity (1 - False Positive Rate).

The consistently superior performance of the 'stability' method suggests that it is the most reliable approach for this task. It achieves high accuracy across a wide range of coverage levels and effectively discriminates between true and false positives. The lower performance of the 'linguistic' method indicates that relying solely on linguistic features may not be sufficient for accurate risk assessment. The baseline accuracy provides a point of reference, and every method's AUROC exceeds the 0.5 expected from random chance. The AUROC values differ substantially between methods, from 0.6704 (linguistic) to 0.9078 (stability), indicating varying degrees of predictive power. The charts suggest that a combination of methods, potentially weighted towards 'stability', could yield the best overall performance.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Risk Coverage Curve & Receiver Operator Curve: MEDQA Model Evaluation

### Overview

The image displays two side-by-side performance evaluation charts for five different methods (linguistic, tokenprob, consistency, topk, stability) on the MEDQA dataset. The left chart is a Risk Coverage Curve, and the right chart is a Receiver Operator Characteristic (ROC) Curve. Both charts compare the performance of these methods against a baseline.

### Components/Axes

**Overall Title:** MEDQA (centered at the top)

**Left Chart: Risk Coverage Curve**

* **Chart Title:** Risk Coverage Curve ↑ (top-left)

* **X-axis:** "Coverage" (scale from 0.0 to 1.0, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0)

* **Y-axis:** "Accuracy" (scale from 0.0 to 1.0, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0)

* **Legend (bottom-right):**

* `linguistic (AURC:0.9007)` - Blue line

* `tokenprob (AURC:0.9214)` - Orange line

* `consistency (AURC:0.9267)` - Green line

* `topk (AURC:0.9136)` - Red line

* `stability (AURC:0.9638)` - Purple line

* `baseline accuracy (0.82)` - Black dashed line

**Right Chart: Receiver Operator Curve**

* **Chart Title:** Receiver Operator Curve ↑ (top-right)

* **X-axis:** "False Positive Rate" (scale from 0.0 to 1.0, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0)

* **Y-axis:** "True Positive Rate" (scale from 0.0 to 1.0, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0)

* **Legend (bottom-right):**

* `linguistic (AUROC:0.6704)` - Blue line

* `tokenprob (AUROC:0.8149)` - Orange line

* `consistency (AUROC:0.8286)` - Green line

* `topk (AUROC:0.7101)` - Red line

* `stability (AUROC:0.9078)` - Purple line

* `random` - Black dashed diagonal line (from (0,0) to (1,1))

### Detailed Analysis

**Risk Coverage Curve (Left Chart):**

* **Trend Verification:** All five method lines start at or near (Coverage=0.0, Accuracy=1.0). As Coverage increases, Accuracy generally decreases, but the rate of decrease varies significantly between methods.

* **Data Series & Points:**

* **stability (Purple):** Maintains the highest accuracy for the longest duration. It stays near Accuracy=1.0 until Coverage ≈ 0.5, then gradually declines to meet the baseline at Coverage=1.0. AURC = 0.9638 (highest).

* **consistency (Green):** Shows a sharp, anomalous dip in accuracy to ~0.7 at very low Coverage (≈0.05), then recovers sharply to near 1.0 before beginning a steady decline. AURC = 0.9267.

* **tokenprob (Orange):** Follows a smooth, convex curve, declining steadily from 1.0. AURC = 0.9214.

* **topk (Red):** Declines in a step-like, jagged pattern. AURC = 0.9136.

* **linguistic (Blue):** Also shows a jagged, step-like decline, generally below the tokenprob and consistency lines after the initial coverage. AURC = 0.9007 (lowest among methods).

* **Baseline (Black Dashed):** A horizontal line at Accuracy = 0.82, representing a fixed performance threshold.

**Receiver Operator Curve (Right Chart):**

* **Trend Verification:** All method lines start at (0,0) and end at (1,1). They bow toward the top-left corner, indicating better-than-random classification performance. The "random" line is the diagonal baseline.

* **Data Series & Points:**

* **stability (Purple):** Exhibits the best performance, with the curve closest to the top-left corner. It reaches a True Positive Rate (TPR) of ~0.65 at a very low False Positive Rate (FPR) of ~0.05. AUROC = 0.9078 (highest).

* **consistency (Green):** The second-best performer. It has a steep initial rise, reaching TPR ≈ 0.8 at FPR ≈ 0.2. AUROC = 0.8286.

* **tokenprob (Orange):** Shows a stepped increase, performing better than linguistic and topk. AUROC = 0.8149.

* **topk (Red):** Follows a path slightly above the linguistic line for most of the curve. AUROC = 0.7101.

* **linguistic (Blue):** The lowest-performing method, with the curve closest to the random diagonal. AUROC = 0.6704.

* **random (Black Dashed):** The diagonal line representing the performance of a random classifier (AUROC = 0.5).

### Key Observations

1. **Consistent Hierarchy:** The `stability` method is the top performer on both metrics (highest AURC and AUROC). The `linguistic` method is the lowest performer on both.

2. **Anomaly in Risk Coverage:** The `consistency` method (green line) shows a significant, sharp drop in accuracy at very low coverage before recovering. This suggests a potential instability or edge-case failure mode when the model is only required to be confident on a very small subset of data.

3. **Metric Discrepancy:** While the performance *ranking* of methods is similar across both charts, the *absolute performance gaps* differ. For example, the gap between `stability` and `consistency` is much larger in the ROC space (AUROC: 0.9078 vs. 0.8286) than in the Risk Coverage space (AURC: 0.9638 vs. 0.9267).

4. **Step-like Patterns:** The `topk` and `linguistic` lines in the Risk Coverage curve, and the `tokenprob` line in the ROC curve, exhibit distinct step-like patterns rather than smooth curves. This may indicate discrete changes in model behavior at specific confidence thresholds.
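
The gap comparison in observation 3 is simple arithmetic on the legend values quoted above. A minimal sketch (the dictionary names are just for this example):

```python
# Legend values as transcribed in this description.
aurc = {"stability": 0.9638, "consistency": 0.9267}
auroc = {"stability": 0.9078, "consistency": 0.8286}

# Gap between the top two methods under each metric, rounded to 4 decimals.
gap_aurc = round(aurc["stability"] - aurc["consistency"], 4)
gap_auroc = round(auroc["stability"] - auroc["consistency"], 4)

print(f"AURC gap:  {gap_aurc}")   # about 0.0371
print(f"AUROC gap: {gap_auroc}")  # about 0.0792
```

The AUROC gap is roughly twice the AURC gap, which matches the observation that the methods are separated more clearly in ROC space.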

### Interpretation

This visualization provides a multi-faceted evaluation of model confidence estimation or selective prediction methods on the MEDQA (medical question answering) task.

* **What the data suggests:** The `stability` method is demonstrably superior for both selective prediction (maintaining high accuracy as more predictions are accepted) and general classification discrimination (separating positive and negative classes). The `consistency` method is a strong second but has a concerning failure mode at low coverage.

* **Relationship between elements:** The two charts answer related but different questions. The Risk Coverage Curve answers: "If I only accept the model's top X% most confident predictions, how accurate will it be?" The ROC Curve answers: "How well can this method distinguish between correct and incorrect predictions across all confidence thresholds?" A good method should perform well on both.

* **Notable implications:** For a high-stakes domain like medical QA, the `stability` method appears most reliable. The poor performance of the `linguistic` method suggests that using linguistic features alone is insufficient for robust confidence estimation in this context. The step-like patterns warrant investigation, as they may reveal artifacts in how these methods compute confidence scores. The misspelling "Reciever" in the right chart title is a minor presentational error.
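
The question "if I only accept the top X% most confident predictions, how accurate will it be?" can be sketched as follows. The function names, the toy data, and the choice to summarize the curve by averaging accuracy over all coverage levels are assumptions for illustration; the figure does not state how its AURC values were integrated.

```python
def risk_coverage_curve(confidences, correct):
    """Accuracy of the k most confident predictions, for k = 1..n.

    confidences: higher means more confident (assumed convention).
    correct: 1 if the prediction was right, 0 otherwise (assumed encoding).
    Returns two parallel lists: coverage levels and accuracy at each level.
    """
    order = sorted(range(len(confidences)),
                   key=lambda i: confidences[i], reverse=True)
    coverages, accuracies = [], []
    hits = 0
    for k, i in enumerate(order, start=1):
        hits += correct[i]
        coverages.append(k / len(order))
        accuracies.append(hits / k)
    return coverages, accuracies


def mean_accuracy_over_coverage(accuracies):
    """A simple area-style summary: average accuracy across coverage levels."""
    return sum(accuracies) / len(accuracies)


# Toy data, invented for the example.
confidences = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3]
correct = [1, 1, 0, 1, 0, 0]
coverages, accuracies = risk_coverage_curve(confidences, correct)
summary = mean_accuracy_over_coverage(accuracies)
```

At full coverage every prediction is accepted, so the last accuracy value equals the overall accuracy of the model regardless of the confidence method; a method only changes how accuracy behaves at lower coverage.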


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Risk Coverage Curve and Receiver Operator Curve Analysis

### Overview

The image contains two comparative performance charts:

1. **Risk Coverage Curve** (left): Accuracy vs. Coverage for multiple methods.

2. **Receiver Operator Curve (ROC)** (right): True Positive Rate vs. False Positive Rate for the same methods.

Both charts include labeled data series, AUC/AUROC metrics, and baseline/random references.

---

### Components/Axes

#### Risk Coverage Curve (Left)

- **X-axis**: Coverage (0.0 to 1.0)

- **Y-axis**: Accuracy (0.0 to 1.0)

- **Legend**:

- Linguistic (AUC: 0.9007) – Blue

- Tokenprob (AUC: 0.9214) – Orange

- Consistency (AUC: 0.9267) – Green

- Topk (AUC: 0.9136) – Red

- Stability (AUC: 0.9638) – Purple

- Baseline Accuracy (0.82) – Dashed black

#### Receiver Operator Curve (Right)

- **X-axis**: False Positive Rate (0.0 to 1.0)

- **Y-axis**: True Positive Rate (0.0 to 1.0)

- **Legend**:

- Linguistic (AUROC: 0.6704) – Blue

- Tokenprob (AUROC: 0.8149) – Orange

- Consistency (AUROC: 0.8286) – Green

- Topk (AUROC: 0.7101) – Red

- Stability (AUROC: 0.9078) – Purple

- Random – Dashed black

---

### Detailed Analysis

#### Risk Coverage Curve

- **Trends**:

- All lines start near 1.0 accuracy at low coverage, then decline as coverage increases.

- **Stability** (purple) maintains the highest accuracy across coverage levels (AUC: 0.9638).

- **Linguistic** (blue) drops sharply, reaching ~0.6 accuracy at 0.2 coverage.

- **Baseline** (dashed black) remains flat at 0.82 accuracy.

#### Receiver Operator Curve

- **Trends**:

- Lines start at the bottom-left (0,0) and ascend toward the top-right.

- **Stability** (purple) achieves the highest AUROC (0.9078), closest to the top-left corner.

- **Random** (dashed black) follows the diagonal (AUROC ~0.5).

- **Linguistic** (blue) has the lowest AUROC (0.6704), the closest to random; **Topk** (red) is next at 0.7101.

---

### Key Observations

1. **Stability** dominates both metrics, suggesting robustness in balancing accuracy and coverage.

2. **Linguistic** and **Topk** underperform, with steep declines in accuracy (Risk Coverage) and poor discrimination (ROC).

3. **Baseline Accuracy** (0.82) is outperformed by all methods except Linguistic at high coverage.

4. **AUROC Values**: Stability (0.9078) > Consistency (0.8286) > Tokenprob (0.8149) > Topk (0.7101) > Linguistic (0.6704).
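
The ordering of methods by AUROC can be checked by sorting the legend values directly. A minimal sketch, with the values transcribed from the legend above:

```python
# AUROC values as listed in the right chart's legend.
auroc_by_method = {
    "linguistic": 0.6704,
    "tokenprob": 0.8149,
    "consistency": 0.8286,
    "topk": 0.7101,
    "stability": 0.9078,
}

# Sort method names from highest to lowest AUROC.
ranking = sorted(auroc_by_method, key=auroc_by_method.get, reverse=True)
print(" > ".join(f"{name} ({auroc_by_method[name]})" for name in ranking))
```

Sorting this way places topk (0.7101) ahead of linguistic (0.6704), so linguistic is the lowest-scoring method on this metric.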

---

### Interpretation

- **Performance Hierarchy**: Stability and Consistency methods are most reliable, likely due to their design prioritizing robustness.

- **Baseline vs. Models**: The baseline accuracy (0.82) is surpassed by all methods except Linguistic, indicating models generally improve upon simple heuristics.

- **ROC Insights**: High AUROC values (e.g., Stability’s 0.9078) suggest strong class separation, while Linguistic’s 0.6704 and Topk’s 0.7101 imply weaker discriminative power.

- **Trade-offs**: Methods like Linguistic sacrifice accuracy for coverage, while Stability optimizes both.

The data highlights Stability as the optimal choice for balancing accuracy, coverage, and discrimination, while Topk and Linguistic require further refinement.
