## Risk Coverage Curve and Receiver Operator Curve Analysis

### Overview

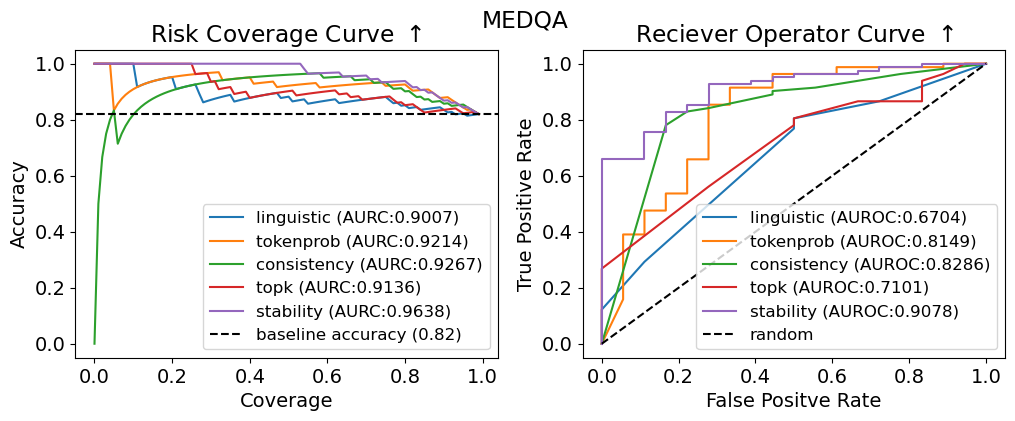

The image contains two comparative performance charts:

1. **Risk Coverage Curve** (left): accuracy vs. coverage (the fraction of inputs answered) for five confidence-scoring methods.

2. **Receiver Operator Curve** (right): a receiver operating characteristic (ROC) plot of true positive rate vs. false positive rate for the same methods.

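Each legend entry reports the area under that method's curve (AUC on the left, AUROC on the right). The left chart adds a baseline-accuracy line and the right chart a random-classifier diagonal.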

---

### Components/Axes

#### Risk Coverage Curve (Left)

- **X-axis**: Coverage (0.0 to 1.0)

- **Y-axis**: Accuracy (0.0 to 1.0)

- **Legend**:

  - Linguistic (AUC: 0.9007) – Blue

  - Tokenprob (AUC: 0.9214) – Orange

  - Consistency (AUC: 0.9267) – Green

  - Topk (AUC: 0.9136) – Red

  - Stability (AUC: 0.9638) – Purple

  - Baseline Accuracy (0.82) – Dashed black
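
Each point on a risk coverage curve comes from ranking predictions by a method's confidence score and measuring accuracy on the most confident fraction that is kept. The sketch below assumes each method yields one confidence score per prediction plus a correct/incorrect label; the function names are hypothetical. The figure does not state how its AUC was integrated, so this version simply averages the selective accuracy over the n coverage steps k/n.

```python
from typing import List, Sequence, Tuple


def risk_coverage_curve(
    confidence: Sequence[float], correct: Sequence[bool]
) -> List[Tuple[float, float]]:
    """Return (coverage, selective accuracy) pairs, keeping the most confident items first."""
    if not confidence or len(confidence) != len(correct):
        raise ValueError("confidence and correct must be non-empty and equally long")
    n = len(confidence)
    # Sort indices by descending confidence; ties keep their input order (sorted is stable).
    order = sorted(range(n), key=lambda i: confidence[i], reverse=True)
    points = []
    hits = 0
    for k, i in enumerate(order, start=1):
        hits += 1 if correct[i] else 0
        points.append((k / n, hits / k))  # coverage k/n, accuracy on the k kept items
    return points


def accuracy_coverage_auc(points: List[Tuple[float, float]]) -> float:
    """Right-endpoint Riemann sum with step 1/n, i.e. the mean selective accuracy."""
    return sum(acc for _, acc in points) / len(points)


if __name__ == "__main__":
    conf = [0.9, 0.8, 0.7, 0.6]
    hit = [True, True, False, True]
    curve = risk_coverage_curve(conf, hit)
    print(curve)  # last point is (1.0, 0.75): full coverage gives the overall accuracy
    print(accuracy_coverage_auc(curve))
```

The final point of every curve is the accuracy on all inputs, which is why the curves and the baseline line meet at coverage 1.0.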

#### Receiver Operator Curve (Right)

- **X-axis**: False Positive Rate (0.0 to 1.0)

- **Y-axis**: True Positive Rate (0.0 to 1.0)

- **Legend**:

  - Linguistic (AUROC: 0.6704) – Blue

  - Tokenprob (AUROC: 0.8149) – Orange

  - Consistency (AUROC: 0.8286) – Green

  - Topk (AUROC: 0.7101) – Red

  - Stability (AUROC: 0.9078) – Purple

  - Random – Dashed black
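
The right chart can be reproduced by sweeping a threshold over the same confidence scores. The sketch below assumes, since the figure does not say, that the positive class is "prediction was correct". Under that assumption, TPR is the share of correct predictions scoring at or above the threshold and FPR is the share of incorrect ones. `roc_points` and `trapezoid_auroc` are hypothetical names.

```python
from typing import List, Sequence, Tuple


def roc_points(scores: Sequence[float], positive: Sequence[bool]) -> List[Tuple[float, float]]:
    """Return (FPR, TPR) points from (0, 0) to (1, 1), lowering the threshold step by step."""
    n_pos = sum(1 for p in positive if p)
    n_neg = len(positive) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("ROC needs at least one positive and one negative")
    ranked = sorted(zip(scores, positive), key=lambda sp: sp[0], reverse=True)
    points = [(0.0, 0.0)]
    tp = fp = i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Admit every item tied at this score at once, so ties become one diagonal segment.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1]:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / n_neg, tp / n_pos))
    return points


def trapezoid_auroc(points: List[Tuple[float, float]]) -> float:
    """Area under the piecewise-linear ROC curve via the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


if __name__ == "__main__":
    pts = roc_points([0.9, 0.8, 0.7, 0.6], [True, True, False, True])
    print(pts)
    print(trapezoid_auroc(pts))
```

Grouping tied scores matters for coarse confidence scores that take only a few distinct values. Without grouping, the curve would depend on the arbitrary order of tied inputs.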

---

### Detailed Analysis

#### Risk Coverage Curve

- **Trends**:

  - All lines start near 1.0 accuracy at low coverage, then decline as coverage increases.

  - **Stability** (purple) maintains the highest accuracy across coverage levels (AUC: 0.9638).

  - **Linguistic** (blue) drops sharply, reaching ~0.6 accuracy at 0.2 coverage.

  - **Baseline** (dashed black) remains flat at 0.82 accuracy.
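
Two constraints tie every curve to the baseline line. At full coverage nothing is abstained on, so each curve must end at the baseline accuracy. In addition, no confidence score can beat a perfect ranking, which answers correctly until the correct predictions run out. The sketch below models that perfect-ranking (oracle) curve in the continuous limit, assuming the 0.82 baseline is the model's accuracy over all inputs. The function names are hypothetical.

```python
import math


def oracle_accuracy(coverage: float, base_acc: float) -> float:
    """Best achievable selective accuracy at a given coverage (continuous limit)."""
    if not 0.0 < coverage <= 1.0:
        raise ValueError("coverage must lie in (0, 1]")
    # All correct predictions are ranked first: perfect accuracy until they run out,
    # after which every extra item kept is an error.
    return min(1.0, base_acc / coverage)


def oracle_auc(base_acc: float) -> float:
    """Area under the oracle curve: integral of min(1, a/c) over c in (0, 1] = a(1 - ln a)."""
    return base_acc * (1.0 - math.log(base_acc))


if __name__ == "__main__":
    a = 0.82  # baseline accuracy from the left chart
    print(oracle_accuracy(1.0, a))  # 0.82: full coverage recovers the baseline
    print(round(oracle_auc(a), 4))
```

In this idealization, the ceiling for a 0.82 baseline is an AUC of roughly 0.983. Stability's 0.9638 sits fairly close to that ceiling, while Linguistic's 0.9007 leaves more room.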

#### Receiver Operator Curve

- **Trends**:

  - Lines start at the bottom-left (0, 0) and rise toward the top-right (1, 1).

  - **Stability** (purple) achieves the highest AUROC (0.9078) and comes closest to the top-left corner.

  - **Random** (dashed black) follows the diagonal (AUROC = 0.5).

  - **Linguistic** (blue) has the lowest AUROC (0.6704), followed by **Topk** (red, 0.7101); both trail the other methods but remain clearly above random.
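
The 0.5 reference for the random diagonal follows from an equivalent reading of AUROC. It is the probability that a randomly chosen positive receives a higher score than a randomly chosen negative, with ties counted as half. This equals the Mann-Whitney U statistic divided by the number of positive-negative pairs. The sketch below implements that pairwise definition. The simulated uninformative scorer and its 0.82 positive rate are hypothetical, chosen only to echo the baseline.

```python
import random
from typing import Sequence


def pairwise_auroc(scores: Sequence[float], positive: Sequence[bool]) -> float:
    """P(score of a random positive > score of a random negative), ties counted as 1/2."""
    pos = [s for s, p in zip(scores, positive) if p]
    neg = [s for s, p in zip(scores, positive) if not p]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = 0.0
    for sp in pos:
        for sn in neg:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    labels = [rng.random() < 0.82 for _ in range(2000)]
    noise = [rng.random() for _ in labels]  # scores carry no information about labels
    print(round(pairwise_auroc(noise, labels), 3))  # expected to land near 0.5
```

The double loop is quadratic and only suited to small illustrations. On a shared toy input it agrees with the threshold-sweep (trapezoidal) AUROC, because both measure the same quantity.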

---

### Key Observations

1. **Stability** ranks first on both metrics (AUC 0.9638, AUROC 0.9078). It keeps the highest accuracy across coverage levels and discriminates best on the ROC.

2. **Linguistic** and **Topk** rank last on both metrics (AUC 0.9007 and 0.9136; AUROC 0.6704 and 0.7101), with the weakest accuracy retention (Risk Coverage) and the poorest discrimination (ROC).

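3. **Baseline Accuracy** (0.82) is exceeded on average by every method, since all five AUCs are above 0.82. Linguistic has the smallest margin and is the only curve described as falling below the baseline over part of the coverage range.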

4. **AUROC Values**: Stability (0.9078) > Consistency (0.8286) > Tokenprob (0.8149) > Topk (0.7101) > Linguistic (0.6704).
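
The rankings above can be checked mechanically from the legend values. This small sketch sorts the five methods by each metric and reports how far each risk-coverage AUC sits above the 0.82 baseline accuracy. The numbers are copied from the two legends.

```python
BASELINE_ACCURACY = 0.82

# Legend values from the two charts.
AUC = {"Linguistic": 0.9007, "Tokenprob": 0.9214, "Consistency": 0.9267,
       "Topk": 0.9136, "Stability": 0.9638}
AUROC = {"Linguistic": 0.6704, "Tokenprob": 0.8149, "Consistency": 0.8286,
         "Topk": 0.7101, "Stability": 0.9078}


def rank(metric: dict) -> list:
    """Method names ordered from highest to lowest score."""
    return sorted(metric, key=metric.get, reverse=True)


if __name__ == "__main__":
    print(rank(AUROC))  # ['Stability', 'Consistency', 'Tokenprob', 'Topk', 'Linguistic']
    print(rank(AUC) == rank(AUROC))  # True: both metrics give the same order
    for name, auc in sorted(AUC.items(), key=lambda kv: kv[1]):
        print(f"{name}: +{auc - BASELINE_ACCURACY:.4f} over baseline")
```

Both metrics produce the same order. Every AUC clears the baseline, with Linguistic clearing it by the smallest margin (about 0.08).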

---

### Interpretation

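- **Performance Hierarchy**: Stability and Consistency rank first and second on both AUC and AUROC, making them the most reliable confidence signals in this comparison. This is plausibly because their designs prioritize robustness, though the charts themselves do not establish the cause.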

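- **Baseline vs. Methods**: All five AUCs exceed the 0.82 baseline accuracy. Answering only the higher-confidence inputs therefore yields better average accuracy than answering everything, whichever method supplies the confidence. Linguistic provides the smallest gain.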

- **ROC Insights**: High AUROC values (e.g., Stability's 0.9078) indicate strong class separation, while Linguistic's 0.6704 and Topk's 0.7101 indicate weaker discriminative power.

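- **Trade-offs**: Every method trades accuracy for coverage, because answering more inputs means admitting less confident ones. Stability's curve stays highest across coverage levels, giving the best accuracy at any given coverage, while Linguistic drops off the fastest.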
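
In practice, the accuracy/coverage trade-off is set by choosing an operating point. A coverage target fixes how many inputs are answered, and the confidence of the last answered input becomes the abstention threshold. The sketch below assumes per-prediction confidences and correctness labels as before. `operating_point` is a hypothetical helper.

```python
import math
from typing import Sequence, Tuple


def operating_point(
    confidence: Sequence[float], correct: Sequence[bool], target_coverage: float
) -> Tuple[float, float]:
    """Keep the ceil(target * n) most confident predictions.

    Returns (threshold, accuracy): answer when confidence >= threshold, abstain otherwise.
    With ties at the threshold, more than the target share may pass the >= test.
    """
    if not 0.0 < target_coverage <= 1.0:
        raise ValueError("target_coverage must lie in (0, 1]")
    ranked = sorted(zip(confidence, correct), key=lambda cc: cc[0], reverse=True)
    # Round before ceil so float noise (e.g. 0.07 * 100 = 7.000000000000001) does not add an item.
    k = max(1, math.ceil(round(target_coverage * len(ranked), 9)))
    kept = ranked[:k]
    threshold = kept[-1][0]
    accuracy = sum(1 for _, ok in kept if ok) / k
    return threshold, accuracy


if __name__ == "__main__":
    conf = [0.95, 0.9, 0.8, 0.6, 0.55, 0.4]
    hit = [True, True, True, False, True, False]
    print(operating_point(conf, hit, 0.5))  # (0.8, 1.0): top 3 kept, all correct
    print(operating_point(conf, hit, 1.0))  # threshold 0.4, accuracy 4/6: everything kept
```

Comparing two methods at the same target coverage is the pointwise version of the left chart: the method whose curve is higher at that coverage returns the larger accuracy.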

The data highlights Stability as the strongest of the five methods for balancing accuracy, coverage, and discrimination. Topk and Linguistic trail on both metrics and would benefit from further refinement.