## Technical Diagram: Variational Router Training and Inference Flowchart

### Overview

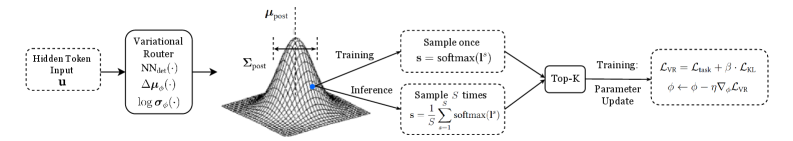

The image is a technical flowchart illustrating a machine learning process built around a "Variational Router" component. It depicts the flow from an input token through a variational distribution, branching into separate training and inference pathways, and culminating in a parameter update step. The diagram is predominantly black and white (the one exception is a blue dot on the distribution plot) and uses a combination of text boxes, arrows, mathematical notation, and a 3D surface plot to represent the process.

### Components/Axes

The diagram is organized horizontally from left to right, with a central 3D plot. The components are:

1. **Input (Far Left):** A dashed box labeled `Hidden Token Input (t)`.

2. **Variational Router (Left-Center):** A solid box labeled `Variational Router`. Inside, three functions are listed vertically:

- `SNN(·)`

- `Δμ(·)`

- `log σ(·)`

3. **Posterior Distribution (Center):** A 3D wireframe surface plot of a bell-shaped (Gaussian) distribution. It is annotated with:

- `μ_post` (pointing to the peak along the vertical axis).

- `Σ_post` (pointing to the spread/width of the distribution).

- A blue dot is placed on the surface of the plot.

4. **Process Branches (Center-Right):** Two parallel paths diverge from the distribution plot:

- **Top Path (Training):** Labeled `Training`. Contains a dashed box with the text `Sample once` and the equation `s = softmax(V)`.

- **Bottom Path (Inference):** Labeled `Inference`. Contains a dashed box with the text `Sample N times` and the equation `s = (1/N) Σ_{i=1}^{N} softmax(V_i)`.

5. **Selection Module (Right-Center):** A solid box labeled `Top-K` that receives input from both the Training and Inference branches.

6. **Parameter Update (Far Right):** A dashed box connected to the `Top-K` module, labeled `Training`. It contains two lines of mathematical text:

- `L_VR = L_CE + β · L_KL`

- `θ ← θ - α∇_θ L_VR`
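
The two sampling paths and the `Top-K` step listed above can be sketched in a few lines of dependency-free Python. This is only an illustration under stated assumptions, not the method behind the diagram: it assumes a diagonal covariance (so `Σ_post` reduces to a per-dimension standard deviation `sigma_post`), treats `V` as a vector of logits over routing targets, and uses hypothetical names and values throughout.

```python
import math
import random

def softmax(v):
    """Numerically stable softmax over a list of logits."""
    m = max(v)
    exps = [math.exp(x - m) for x in v]
    total = sum(exps)
    return [e / total for e in exps]

def sample_logits(mu_post, sigma_post, rng):
    """Draw one V ~ N(mu_post, diag(sigma_post^2)). Diagonal covariance is an assumption."""
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu_post, sigma_post)]

def training_scores(mu_post, sigma_post, rng):
    """Training path: sample once, s = softmax(V)."""
    return softmax(sample_logits(mu_post, sigma_post, rng))

def inference_scores(mu_post, sigma_post, rng, n_samples):
    """Inference path: s = (1/N) * sum_i softmax(V_i)."""
    acc = [0.0] * len(mu_post)
    for _ in range(n_samples):
        for j, p in enumerate(softmax(sample_logits(mu_post, sigma_post, rng))):
            acc[j] += p
    return [a / n_samples for a in acc]

def top_k(scores, k):
    """Indices of the k largest scores, highest first."""
    return sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)[:k]

rng = random.Random(0)
mu_post = [2.0, 0.5, -1.0, 0.0]    # hypothetical posterior mean over 4 routing targets
sigma_post = [0.3, 0.3, 0.3, 0.3]  # hypothetical posterior standard deviation

s_train = training_scores(mu_post, sigma_post, rng)
s_infer = inference_scores(mu_post, sigma_post, rng, n_samples=64)
print(top_k(s_train, 2), top_k(s_infer, 2))
```

Because each `softmax(V_i)` sums to one, the averaged inference score is still a valid probability vector, so the same `top_k` selection applies to both paths.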

### Detailed Analysis

- **Flow Direction:** The process flows unidirectionally from left (`Hidden Token Input`) to right (`Parameter Update`). The central distribution acts as a hub, feeding into two distinct operational modes (Training and Inference) which later converge at the `Top-K` module.

- **Mathematical Content:**

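- The Variational Router outputs the parameters of a posterior distribution, apparently Gaussian, with mean `μ_post` and covariance `Σ_post`. The `Δμ(·)` and `log σ(·)` entries suggest a mean offset and a log standard deviation, though the diagram does not show how they are combined.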

- The **Training** path uses a single sample (`Sample once`) passed through a softmax function to produce `s`.

- The **Inference** path uses an average over `N` samples (`Sample N times`), each passed through softmax, to produce a more stable estimate `s`.

- The final loss function `L_VR` is a weighted sum of a Cross-Entropy loss (`L_CE`) and a Kullback-Leibler divergence loss (`L_KL`), scaled by a hyperparameter `β`.

- Parameters `θ` are updated via gradient descent with learning rate `α`, using the gradient of the total loss `∇_θ L_VR`.

- **Spatial Grounding:** The legend/labels (`Training`, `Inference`) are placed directly above their respective process boxes. The mathematical equations are contained within dashed boxes associated with their respective process step. The 3D plot is centrally located, visually emphasizing its role as the core probabilistic model.
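
The loss composition and update rule described above can be made concrete with a small sketch. The diagram names `L_CE`, `L_KL`, `β`, and `α` but does not specify the prior or the covariance structure, so the code below assumes diagonal Gaussians for both posterior and prior and uses the standard closed-form KL divergence between them. The function names and the placeholder gradient are hypothetical; in a real system the gradient would come from automatic differentiation.

```python
import math

def cross_entropy(probs, target):
    """L_CE for one example: negative log-probability of the target class."""
    return -math.log(probs[target])

def kl_diag_gaussians(mu_q, sigma_q, mu_p, sigma_p):
    """KL( N(mu_q, diag(sigma_q^2)) || N(mu_p, diag(sigma_p^2)) ), summed over dimensions."""
    total = 0.0
    for mq, sq, mp, sp in zip(mu_q, sigma_q, mu_p, sigma_p):
        total += math.log(sp / sq) + (sq ** 2 + (mq - mp) ** 2) / (2.0 * sp ** 2) - 0.5
    return total

def l_vr(l_ce, l_kl, beta):
    """L_VR = L_CE + beta * L_KL."""
    return l_ce + beta * l_kl

def sgd_step(theta, grad, alpha):
    """theta <- theta - alpha * grad, applied element-wise."""
    return [t - alpha * g for t, g in zip(theta, grad)]

# Hypothetical values, for illustration only.
probs = [0.7, 0.2, 0.1]                      # model output probabilities
l_ce = cross_entropy(probs, target=0)
l_kl = kl_diag_gaussians([1.0, 0.0], [1.0, 1.0], [0.0, 0.0], [1.0, 1.0])
loss = l_vr(l_ce, l_kl, beta=0.1)
theta = sgd_step([1.0, 2.0], grad=[0.5, -0.5], alpha=0.1)  # placeholder gradient
```

With a larger `β`, the KL term pulls the posterior harder toward the prior; with `β = 0`, the objective reduces to the cross-entropy loss alone.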

### Key Observations

1. **Dual-Path Architecture:** The system explicitly separates the stochastic sampling process during training (single sample) from the inference phase (averaged over N samples). A single sample keeps each training step cheap, while averaging N samples at inference reduces the variance of the routing scores `s`.

2. **Top-K Integration:** Both pathways feed into a `Top-K` module, whose output leads to the final training box. This suggests that a selection or filtering step is applied to the scores `s` from either path. The loss and parameter update presumably apply only in the training path, although the diagram does not make this explicit.

3. **Loss Composition:** The training objective combines a task-specific loss (`L_CE`) with a regularization term (`L_KL`), as is characteristic of variational methods. The KL term encourages the learned distribution to stay close to a prior, which can help limit overfitting.

4. **Visual Emphasis:** The 3D Gaussian plot is the most visually complex element, highlighting the importance of the variational posterior distribution in this architecture.

### Interpretation

This diagram outlines a **variational inference framework for a routing mechanism** within a neural network. The "Variational Router" likely decides how to process or route the input token `t` by sampling from a learned probability distribution parameterized by `μ_post` and `Σ_post`.

- **Purpose:** The system aims to learn a robust routing policy. During training, it uses a noisy, single-sample estimate to explore options. During inference, it uses a smoothed, averaged estimate for stable, reliable decisions.

- **Relationships:** The `Top-K` module acts as a bottleneck or selector, possibly choosing the most promising routing decisions before they are used to compute the final loss and update the router's parameters (`θ`). The KL divergence term ensures the learned distribution doesn't deviate too far from a predefined prior, providing regularization.

- **Underlying Principle:** This appears to be a **reparameterized gradient estimation** setup, implied by the combination of sampling and a gradient update of `θ`, although the diagram does not show the reparameterization explicitly. The architecture trains a stochastic, probabilistic component (the router) within a larger system using standard gradient-based optimization. The separation of sampling strategies is a key design choice: training uses one sample per step, while inference averages N samples to reduce the variance that a single stochastic routing decision would carry.
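
To illustrate what reparameterized gradient estimation means here, the sketch below writes a sample as `V = mu + sigma * eps` with `eps ~ N(0, 1)`, so that the randomness sits in `eps` and the sample is differentiable with respect to `mu` and `log_sigma`. It is a one-dimensional toy, not the router in the diagram: the objective `f(V) = V^2` is a stand-in chosen because its expected gradient is known analytically, and all names are hypothetical.

```python
import math
import random

def reparam_grads(mu, log_sigma, n_samples, rng):
    """Monte Carlo estimate of the gradients of E[f(V)] for the toy objective f(V) = V^2.

    With V = mu + sigma * eps and eps ~ N(0, 1):
    dV/dmu = 1 and dV/dlog_sigma = sigma * eps.
    """
    sigma = math.exp(log_sigma)
    g_mu = 0.0
    g_log_sigma = 0.0
    for _ in range(n_samples):
        eps = rng.gauss(0.0, 1.0)
        v = mu + sigma * eps
        df_dv = 2.0 * v                     # f'(V) for f(V) = V^2
        g_mu += df_dv                       # chain rule: f'(V) * dV/dmu
        g_log_sigma += df_dv * sigma * eps  # chain rule: f'(V) * dV/dlog_sigma
    return g_mu / n_samples, g_log_sigma / n_samples

rng = random.Random(0)
g_mu, g_log_sigma = reparam_grads(
    mu=1.5, log_sigma=math.log(0.5), n_samples=100_000, rng=rng
)
# Analytic reference: E[V^2] = mu^2 + sigma^2, so the exact gradients are
# d/dmu = 2 * mu = 3.0 and d/dlog_sigma = 2 * sigma^2 = 0.5.
print(g_mu, g_log_sigma)
```

The estimates should land close to the analytic values, with the gap shrinking as `n_samples` grows. A single-sample estimate (`n_samples=1`) is unbiased but noisy, which is the trade-off the training path in the diagram accepts.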