## Diagram: Variational Router Architecture for Token Sampling

### Overview

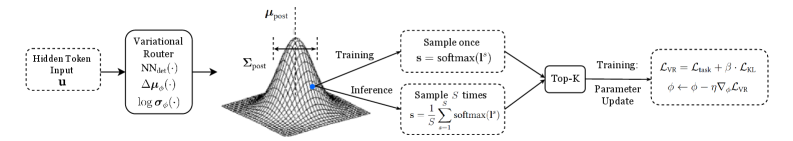

This diagram illustrates a variational router architecture used in natural language processing (NLP) for token selection. It shows the flow from hidden token input to parameterized distributions, training/inference processes, and parameter updates. The architecture combines probabilistic modeling with optimization techniques to balance exploration and exploitation in token sampling.

### Components/Axes

1. **Input**:
   - "Hidden Token Input u" (dashed box on the left)

2. **Variational Router**:
   - Outputs three quantities:
     - `NN_det(·)` (deterministic neural network output)
     - `Δμ_φ(·)` (mean shift)
     - `log σ_φ(·)` (log standard deviation)

3. **Gaussian Distribution**:
   - Visualized as a 3D surface plot
   - Labeled with:
     - `μ_post` (posterior mean)
     - `Σ_post` (posterior covariance)

4. **Training Path**:
   - "Sample once" block with `s = softmax(l^s)`
   - "Top-K" selection
   - Loss function: `L_VR = L_task + β·L_KL`
   - Parameter update: `φ ← φ - η∇_φ L_VR`

5. **Inference Path**:
   - Two sampling strategies:
     - Single sample: `s = softmax(l^s)`
     - Multiple samples: `s = 1/S ∑_{s=1}^S softmax(l^s)` (the sum runs over S sampled logit vectors)
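
The two inference strategies can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the diagram's actual implementation: it assumes the posterior mean is formed as `NN_det(u) + Δμ_φ(u)`, that the covariance is diagonal with standard deviations `exp(log σ_φ(u))`, and that logits are drawn with the reparameterization trick. All function names and numeric values are hypothetical.

```python
import math
import random

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_logits(mu_post, log_sigma, rng):
    """Reparameterized draw: l = mu_post + sigma * eps, with eps ~ N(0, 1)."""
    return [m + math.exp(ls) * rng.gauss(0.0, 1.0)
            for m, ls in zip(mu_post, log_sigma)]

def single_sample_probs(mu_post, log_sigma, rng):
    """s = softmax(l^s) for one sampled logit vector."""
    return softmax(sample_logits(mu_post, log_sigma, rng))

def averaged_probs(mu_post, log_sigma, rng, num_samples):
    """s = (1/S) * sum of softmax(l) over S independent draws."""
    acc = [0.0] * len(mu_post)
    for _ in range(num_samples):
        for i, p in enumerate(single_sample_probs(mu_post, log_sigma, rng)):
            acc[i] += p / num_samples
    return acc

# Hypothetical router outputs for one hidden token u (values are made up).
det_logits = [2.0, 0.5, -1.0, 0.0]    # stands in for NN_det(u)
delta_mu = [0.1, -0.2, 0.0, 0.3]      # stands in for the mean shift
log_sigma = [-1.0, -0.5, -2.0, -1.0]  # stands in for log sigma

# Assumption: posterior mean = deterministic logits + learned shift.
mu_post = [d + dm for d, dm in zip(det_logits, delta_mu)]

rng = random.Random(0)
s_single = single_sample_probs(mu_post, log_sigma, rng)
s_avg = averaged_probs(mu_post, log_sigma, rng, num_samples=64)
```

Both results are valid probability vectors. The averaged version costs S forward draws but fluctuates less from call to call than a single draw.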

### Detailed Analysis

- **Gaussian Distribution**: The 3D plot shows a unimodal distribution centered at `μ_post` with spread determined by `Σ_post`. The dashed lines indicate confidence intervals around the mean.

- **Training Process**:
  - Uses softmax to convert the sampled logits `l^s` into probabilities
  - Applies Top-K selection to keep the K most probable tokens
  - Combines task loss (`L_task`) with KL divergence (`L_KL`) regularization, weighted by β
  - Updates the parameters φ using gradient descent with learning rate η

- **Inference Process**:
  - Offers two sampling approaches:
    1. Single-sample softmax (one draw of the logits `l^s`)
    2. Averaged sampling (mean of S softmax outputs, one per draw)

- **Parameterization**: The variational router parameterizes the posterior distribution through neural network outputs, enabling end-to-end learning of uncertainty estimates.
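
To make the loss and update rule concrete, here is a minimal sketch of `L_VR = L_task + β·L_KL` followed by one gradient-descent step. Several things are assumptions, since the diagram does not specify them: the KL term is taken against a standard normal prior `N(0, I)`, the posterior is diagonal, the posterior mean and log standard deviation are treated directly as the trainable quantities in place of the network parameters φ, and the task loss is a fixed placeholder whose gradient is omitted. All names are hypothetical.

```python
import math

def kl_to_standard_normal(mu, log_sigma):
    """KL( N(mu, diag(sigma^2)) || N(0, I) ), summed over dimensions."""
    return sum(0.5 * (math.exp(2.0 * ls) + m * m - 1.0) - ls
               for m, ls in zip(mu, log_sigma))

def kl_grads(mu, log_sigma):
    """Analytic gradients of the KL term w.r.t. mu and log_sigma."""
    d_mu = list(mu)                                               # dKL/dmu = mu
    d_log_sigma = [math.exp(2.0 * ls) - 1.0 for ls in log_sigma]  # sigma^2 - 1
    return d_mu, d_log_sigma

def vr_loss(task_loss, mu, log_sigma, beta):
    """L_VR = L_task + beta * L_KL."""
    return task_loss + beta * kl_to_standard_normal(mu, log_sigma)

def gradient_step(params, grads, lr):
    """params <- params - lr * grads (plain gradient descent)."""
    return [p - lr * g for p, g in zip(params, grads)]

# Made-up values for illustration only.
mu = [0.5, -1.0]
log_sigma = [-1.0, 0.0]
beta, lr = 0.1, 0.5
task_loss = 1.2  # placeholder; a real L_task depends on the downstream model

loss_before = vr_loss(task_loss, mu, log_sigma, beta)

# Only the KL part of the gradient is applied here (task gradient omitted).
g_mu, g_ls = kl_grads(mu, log_sigma)
mu_new = gradient_step(mu, [beta * g for g in g_mu], lr)
log_sigma_new = gradient_step(log_sigma, [beta * g for g in g_ls], lr)

loss_after = vr_loss(task_loss, mu_new, log_sigma_new, beta)
```

With the task loss held fixed, the step pulls the mean toward zero and the standard deviation toward one, so `loss_after` is smaller than `loss_before`. In a full training loop the task gradient would pull in its own direction, and β sets how the two are weighed.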

### Key Observations

1. The architecture explicitly models uncertainty through the variational distribution (μ_post, Σ_post)

2. Training balances task performance (L_task) with distribution fidelity (L_KL) via the β hyperparameter

3. Inference offers a choice between a single stochastic sample and an average over S samples

4. Top-K selection introduces a trade-off between exploration (full softmax) and efficiency (limited candidates)
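
The Top-K trade-off can be illustrated with a small sketch that keeps the K largest probabilities and discards the rest. Renormalizing the kept entries so they sum to one is an assumption here; the diagram only labels the block "Top-K". The function name and input values are hypothetical.

```python
def top_k_probs(probs, k):
    """Keep the k largest probabilities, zero the rest, and renormalize."""
    # Indices sorted by probability, highest first; ties keep original order.
    keep = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    kept = set(keep)
    mass = sum(probs[i] for i in keep)
    return [p / mass if i in kept else 0.0 for i, p in enumerate(probs)]

probs = [0.5, 0.2, 0.2, 0.1]       # made-up softmax output
restricted = top_k_probs(probs, 2)  # only the first two entries survive
```

A smaller K means fewer candidates to process downstream, at the cost of dropping probability mass (here 0.3 of it) that the full softmax would have kept.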

### Interpretation

This architecture demonstrates a Bayesian approach to sequence modeling where:

- The variational router learns to estimate token uncertainty through posterior distributions

- The KL divergence term regularizes the learned posterior, which discourages overconfident predictions

- The dual sampling strategies in inference allow adaptation to different deployment requirements

- The parameter update rule is a plain gradient-descent step on the variational objective `L_VR`, applied to the router parameters φ with learning rate η

The diagram shows a method for handling discrete token selection with continuous uncertainty estimation, combining elements of variational inference and neural network training. Offering both single-sample and averaged inference suggests an awareness of the exploration-exploitation tradeoff in language generation tasks.