# Technical Document Extraction: Neural Network Architecture Diagram

## Diagram Overview

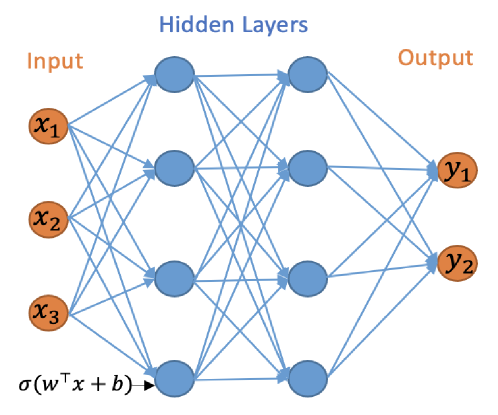

The image depicts a **feedforward neural network architecture** with labeled components and mathematical notation. Below is a structured extraction of all textual and symbolic information.

---

### 1. **Component Labels and Flow**

#### Input Layer

- **Nodes**:

  - `x₁`, `x₂`, `x₃` (input features).

- **Position**: Leftmost column of orange nodes.

#### Hidden Layer

- **Nodes**:

  - Blue circular nodes with no explicit labels (a generic representation of hidden units).

- **Connections**:

  - Directed edges (blue arrows) from every input node to every hidden node and from every hidden node to every output node.

- **Activation Function**:

  - Notation: `σ(wᵀx + b)` (sigmoid applied to the weighted sum of the inputs plus a bias).

  - Position: Annotated at the bottom of the hidden layer.

#### Output Layer

- **Nodes**:

  - `y₁`, `y₂` (output predictions).

- **Position**: Rightmost column of orange nodes.

---

### 2. **Architectural Structure**

- **Layers**:

  - **Input**: 3 nodes (`x₁`, `x₂`, `x₃`).

  - **Hidden**: 6 nodes (arranged in two rows of three nodes each).

  - **Output**: 2 nodes (`y₁`, `y₂`).

- **Connections**:

  - Fully connected (dense) architecture: Every input node connects to every hidden node, and every hidden node connects to every output node.

  - No skip connections or recurrent loops.
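
The 3-6-2 dense structure described above can be sketched as a forward pass in plain Python. This is a minimal illustration, not something taken from the diagram: the helper names (`dense`, `init_layer`, `forward`), the random weight initialization, the example input, and the use of sigmoid on the output layer are all assumptions. The diagram annotates the activation only at the hidden layer.

```python
import math
import random
from typing import List, Tuple


def sigmoid(z: float) -> float:
    """Logistic sigmoid, mapping a real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs: List[float],
          weights: List[List[float]],
          biases: List[float]) -> List[float]:
    """Fully connected layer: weights[j] holds the weight vector of unit j."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def init_layer(n_in: int, n_out: int,
               rng: random.Random) -> Tuple[List[List[float]], List[float]]:
    """Hypothetical initialization: uniform weights in [-1, 1], zero biases."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


rng = random.Random(0)            # fixed seed so the sketch is repeatable
W1, b1 = init_layer(3, 6, rng)    # input (3) -> hidden (6): 18 weights
W2, b2 = init_layer(6, 2, rng)    # hidden (6) -> output (2): 12 weights


def forward(x: List[float]) -> List[float]:
    """Input -> hidden -> output, with no skip or recurrent connections."""
    hidden = dense(x, W1, b1)
    return dense(hidden, W2, b2)  # assumption: sigmoid on the output layer too


y = forward([0.2, -0.4, 0.9])     # illustrative values for x1, x2, x3
print(len(y))                     # 2
```

Each row of `W1` and `W2` corresponds to the set of incoming edges of one node, which is what "fully connected" means in the diagram.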

---

### 3. **Mathematical Notation**

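- **Activation Function**:

  - `σ(wᵀx + b)`:

    - `w`: Weight vector (written transposed, `wᵀ`, so that `wᵀx` is the dot product of weights and inputs).

    - `x`: Input vector.

    - `b`: Bias term (a scalar added to the weighted sum).

    - `σ`: Sigmoid function, `σ(z) = 1 / (1 + exp(-z))`, which maps any real value into the range (0, 1); commonly used for binary classification.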
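
As a rough illustration of the notation above, the expression `σ(wᵀx + b)` for a single unit can be evaluated as follows. The function name `neuron` and the numeric values of `w`, `x`, and `b` are hypothetical and chosen only for the example; the diagram does not give any weights.

```python
import math
from typing import Sequence


def sigmoid(z: float) -> float:
    """Logistic sigmoid: 1 / (1 + exp(-z)), with values in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(w: Sequence[float], x: Sequence[float], b: float) -> float:
    """Compute sigma(w^T x + b) for one unit."""
    if len(w) != len(x):
        raise ValueError("w and x must have the same length")
    z = sum(wi * xi for wi, xi in zip(w, x)) + b  # weighted sum plus bias
    return sigmoid(z)


# Hypothetical values, for illustration only.
w = [0.5, -0.25, 0.1]
x = [1.0, 2.0, 3.0]
b = 0.0

activation = neuron(w, x, b)  # z = 0.5 - 0.5 + 0.3 = 0.3 (up to rounding)
print(round(activation, 4))
```

With a zero weighted sum the sigmoid returns exactly 0.5, which is why 0.5 is the usual decision threshold when the output is read as a binary class probability.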

---

### 4. **Color Coding**

- **Node Colors**:

  - **Input/Output**: Orange (`x₁`, `x₂`, `x₃`, `y₁`, `y₂`).

  - **Hidden**: Blue (generic, unlabeled hidden units).

- **Edges**: Blue arrows (directional flow from input → hidden → output).

---

### 5. **Spatial Grounding**

- **Input Layer**: Leftmost column (approximate relative x-position: 0–1).

- **Hidden Layer**: Middle region (approximate relative x-position: 1–2).

- **Output Layer**: Rightmost column (approximate relative x-position: 2–3).

- **Activation Function**: Annotated at the bottom of the hidden layer (approximate relative y-position: -0.5).

---

### 6. **Key Trends and Observations**

- **Data Flow**: Unidirectional (input → hidden → output).

- **Complexity**:

  - Total connections:

    - Input-to-hidden: 3 inputs × 6 hidden = 18 edges.

    - Hidden-to-output: 6 hidden × 2 outputs = 12 edges.

  - Total parameters (weights + biases):

    - Weights: 18 + 12 = 30.

    - Biases: 6 (hidden) + 2 (output) = 8.

    - **Total**: 38 trainable parameters.
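
The arithmetic above can be checked with a short sketch. The helper name `count_parameters` is hypothetical; it assumes one weight per edge between consecutive layers and one bias per non-input node, which matches the counting used in this section.

```python
from typing import Sequence, Tuple


def count_parameters(layer_sizes: Sequence[int]) -> Tuple[int, int, int]:
    """Return (weights, biases, total) for a fully connected feedforward net."""
    # One weight per edge between each pair of consecutive layers.
    weights = sum(n_in * n_out
                  for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))
    # One bias per node in every layer except the input layer.
    biases = sum(layer_sizes[1:])
    return weights, biases, weights + biases


weights, biases, total = count_parameters([3, 6, 2])
print(weights, biases, total)  # 30 8 38
```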

---

### 7. **Missing Elements**

- **No Legends**: No explicit legend present (colors are self-explanatory).

- **No Data Table**: Diagram is structural, not data-driven.

- **No Axes**: No numerical axes (qualitative spatial layout only).

---

### 8. **Summary**

This diagram illustrates a **3-input, 6-hidden, 2-output feedforward neural network** with a sigmoid activation function. The architecture is fully connected, with 38 trainable parameters (30 weights and 8 biases). Inputs (`x₁`, `x₂`, `x₃`) pass through a single hidden layer to produce outputs (`y₁`, `y₂`).