## Diagram: Knowledge Graph of Thoughts (KGoT) Architecture

### Overview

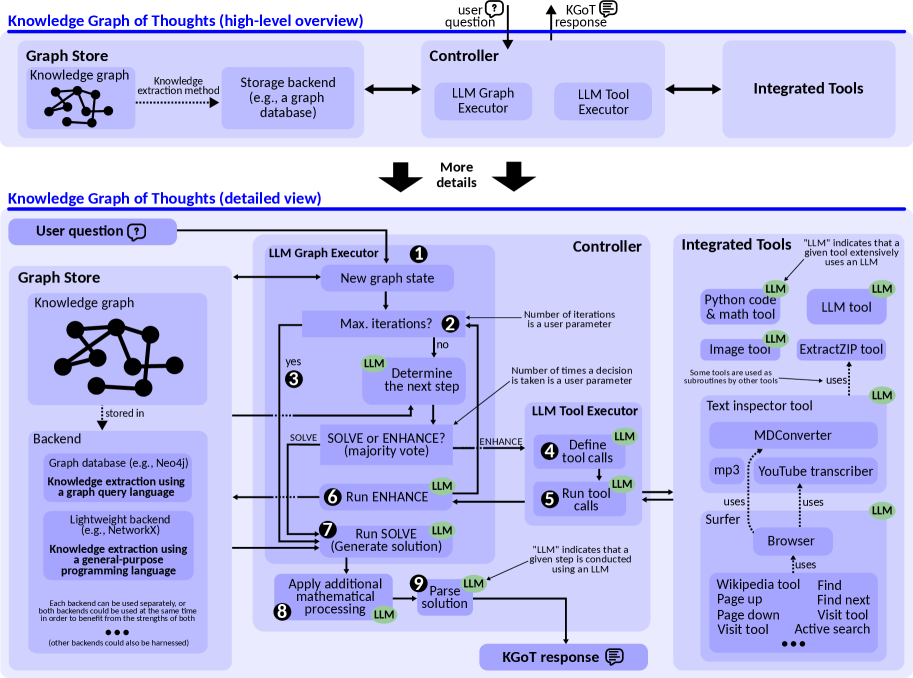

The diagram presents the Knowledge Graph of Thoughts (KGoT) architecture in two views: a high-level overview and a detailed workflow. The system combines a knowledge graph, LLM-based executors, and a set of integrated tools to process user queries and generate responses.

---

### Components

#### High-Level Overview

1. **Graph Store**

   - Contains a **Knowledge Graph** (nodes/edges diagram)

   - Storage backend options:

     - Graph database (e.g., Neo4j)

     - Lightweight backend (e.g., NetworkX)

   - Knowledge extraction methods:

     - Graph query language

     - General-purpose programming language

2. **Controller**

   - **LLM Graph Executor**: Updates graph state iteratively

   - **LLM Tool Executor**: Handles tool calls

3. **Integrated Tools**

   - Python code & math tool (LLM)

   - Image tool (LLM)

   - ExtractZIP tool

   - Text inspector tool (LLM)

   - MDConverter

   - YouTube transcriber

   - Browser (with sub-tools: Wikipedia, Page up/down, Find next, etc.)

#### Detailed View

- **User Question** → **LLM Graph Executor** (Step 1)

- **Max. Iterations?** (User-defined parameter)

- **Determine Next Step** (LLM decision)

- **Solve/Enhance?** (Majority vote)

- **Run ENHANCE/SOLVE** (Steps 6-7)

- **Apply Mathematical Processing** (Step 8)

- **Parse Solution** (Step 9)

- **KGoT Response** (Final output)
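
The diagram labels the Solve/Enhance decision as a majority vote but does not say how the vote is taken. One plausible reading, sketched here purely as an assumption, is that the LLM is asked several times and the most frequent answer wins. The function name `majority_vote`, the normalization of answers, and the tie-breaking rule (fall back to ENHANCE) are all hypothetical choices made for illustration.

```python
from collections import Counter


def majority_vote(votes, default="ENHANCE"):
    """Return the most frequent vote.

    Assumption: an empty list or a tie between the top two options
    falls back to `default`. The diagram does not define this rule.
    """
    counts = Counter(v.strip().upper() for v in votes)
    ranked = counts.most_common(2)
    if not ranked:
        return default
    if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
        return default  # top two options are tied
    return ranked[0][0]


decision = majority_vote(["SOLVE", "ENHANCE", "SOLVE"])
```

Falling back to ENHANCE on a tie is a conservative choice: it gathers more information instead of committing to an answer, and the iteration limit still bounds the loop.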

---

### Detailed Analysis

#### Graph Store

- **Backends**:

  - **Graph Database**: Neo4j (optimized for graph queries)

  - **Lightweight Backend**: NetworkX (accessed through general-purpose code)

- **Extraction Methods**:

  - Graph query language (structured)

  - General-purpose programming language (flexible)
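
To make the "general-purpose programming" extraction style concrete, here is a minimal sketch that uses only the Python standard library. The `TripleStore` class is a hypothetical stand-in for a lightweight backend, not the system's actual storage code; a real deployment would more likely use NetworkX, and with a graph database the same lookup would be written in a graph query language such as Cypher for Neo4j. The example data is invented.

```python
from collections import defaultdict


class TripleStore:
    """Tiny in-memory stand-in for a lightweight graph backend (hypothetical)."""

    def __init__(self):
        # subject -> list of (relation, object) edges
        self._out = defaultdict(list)

    def add(self, subject, relation, obj):
        """Insert a (subject, relation, object) triple, skipping duplicates."""
        edge = (relation, obj)
        if edge not in self._out[subject]:
            self._out[subject].append(edge)

    def neighbors(self, subject, relation=None):
        """Objects reachable from `subject`, optionally filtered by relation."""
        return [
            o for r, o in self._out.get(subject, [])
            if relation is None or r == relation
        ]

    def triples(self):
        """All stored triples as (subject, relation, object) tuples."""
        return [(s, r, o) for s, edges in self._out.items() for r, o in edges]


store = TripleStore()
store.add("alice", "works_at", "acme")
store.add("acme", "located_in", "springfield")
store.add("alice", "works_at", "acme")  # duplicate, ignored

# Extraction in plain Python: a two-hop lookup
# (where are Alice's employers located?).
employer_cities = [
    city
    for employer in store.neighbors("alice", "works_at")
    for city in store.neighbors(employer, "located_in")
]
```

The trade-off mirrors the one in the diagram: plain code is flexible and needs no server, while a query language keeps multi-hop lookups declarative.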

#### Controller Workflow

1. **Step 1**: LLM Graph Executor updates graph state

2. **Step 2**: Check whether the user-defined maximum number of iterations has been reached

3. **Step 3**: If it has, proceed to solution parsing; if not, continue the loop

4. **Step 4**: LLM determines the next action (SOLVE or ENHANCE)

5. **Step 5**: Run the selected action (tool calls or graph updates)

6. **Step 6**: Run ENHANCE (LLM-driven)

7. **Step 7**: Run SOLVE (generate solution)

8. **Step 8**: Apply mathematical processing (LLM)

9. **Step 9**: Parse solution (LLM)
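
The workflow above can be sketched as a bounded loop. This is an illustration under stated assumptions, not the system's implementation: `run_controller` and its callable parameters (`decide`, `enhance`, `solve`, `parse`) are hypothetical names, the graph state is reduced to a list of fact strings, the mathematical processing step is omitted, and the sketch assumes that reaching the iteration limit triggers one final SOLVE before parsing, a detail the diagram description leaves open.

```python
from collections import Counter
from typing import Callable, List


def run_controller(
    question: str,
    decide: Callable[[str, List[str]], str],
    enhance: Callable[[str, List[str]], List[str]],
    solve: Callable[[str, List[str]], str],
    parse: Callable[[str], str] = str.strip,
    max_iterations: int = 5,
    num_votes: int = 3,
) -> str:
    """Loop ENHANCE until SOLVE wins the vote or the limit is hit (sketch)."""
    graph: List[str] = []  # stand-in for the knowledge graph state
    for _ in range(max_iterations):
        # Ask `decide` several times and take the most common answer.
        votes = Counter(decide(question, graph) for _ in range(num_votes))
        action = votes.most_common(1)[0][0]
        if action == "SOLVE":
            break
        graph.extend(enhance(question, graph))  # ENHANCE adds new facts
    # Assumption: the limit forces a final SOLVE, then the result is parsed.
    return parse(solve(question, graph))


# Deterministic stubs in place of real LLM and tool calls.
def stub_decide(question, graph):
    return "SOLVE" if len(graph) >= 2 else "ENHANCE"


def stub_enhance(question, graph):
    return [f"fact-{len(graph) + 1}"]


def stub_solve(question, graph):
    return f" answer from {len(graph)} facts "


answer = run_controller("example question", stub_decide, stub_enhance, stub_solve)
```

Passing the LLM-backed steps in as callables keeps the loop testable without a model, which is why the stubs above are enough to exercise both exit paths.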

#### Integrated Tools

- **Tools labeled "LLM"**:

  - Python code & math tool

  - Image tool

  - Text inspector tool

- **Tools without an "LLM" label**:

  - ExtractZIP

  - MDConverter

  - YouTube transcriber

  - Browser sub-tools (Wikipedia, Page up/down, Find next, etc.)
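
One way to picture how a tool executor could dispatch calls across such a mixed tool set is a simple registry. The sketch below is an assumption for illustration only: the `Tool` and `ToolExecutor` classes, the `uses_llm` flag, and the tool names are hypothetical, and the tool bodies are stubs that do no real work.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Tool:
    name: str
    run: Callable[[str], str]
    uses_llm: bool = False  # mirrors the "LLM" label in the diagram


class ToolExecutor:
    """Registry that routes a tool call to the matching tool (sketch)."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, name: str, argument: str) -> str:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name].run(argument)

    def names(self, uses_llm: bool) -> List[str]:
        """Sorted tool names, filtered by whether they rely on an LLM."""
        return sorted(t.name for t in self._tools.values() if t.uses_llm == uses_llm)


executor = ToolExecutor()
# Stub tools: real ones would unpack archives or prompt a model.
executor.register(Tool("extract_zip", lambda path: f"extracted {path}"))
executor.register(Tool("text_inspector", lambda text: f"summary of {text}", uses_llm=True))

result = executor.call("extract_zip", "data.zip")
```

Keeping the `uses_llm` flag on each tool makes it easy to report which calls incur model cost, matching the labeled/unlabeled split shown in the diagram.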

---

### Key Observations

1. **LLM Pervasiveness**: Both Controller executors are LLM-based, and three of the seven integrated tools (Python code & math, Image, Text inspector) are marked with green "LLM" labels.

2. **Iterative Process**: The system allows up to N iterations (user-defined) for graph updates.

3. **Tool Hierarchy**: Some tools act as subroutines (e.g., Browser → Wikipedia tool).

4. **Dual Backends**: Flexibility to use either graph databases or lightweight backends.

---

### Interpretation

The KGoT system combines graph-based knowledge representation with LLM-driven processing. The architecture emphasizes:

1. **Modularity**: Separate graph storage and tool execution layers.

2. **Adaptability**: Users can choose between graph databases (Neo4j) or lightweight backends (NetworkX).

3. **LLM Integration**: Extensive use of LLMs for decision-making (steps 4, 6-9) and tool execution.

4. **User Control**: A user-defined parameter (maximum iterations) bounds how long the automated loop runs.

The system appears designed for complex query resolution, where:

- **Graph Store** provides structured knowledge

- **Controller** orchestrates LLM-driven reasoning

- **Integrated Tools** enable external data interaction (e.g., web search, file processing)

The diagram leaves two points open: it shows no error-handling path, and it does not define how the majority vote behind the SOLVE/ENHANCE decision is computed.