TECHNICAL ASSET FINGERPRINT

09513b51e4e43874f73e8407


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

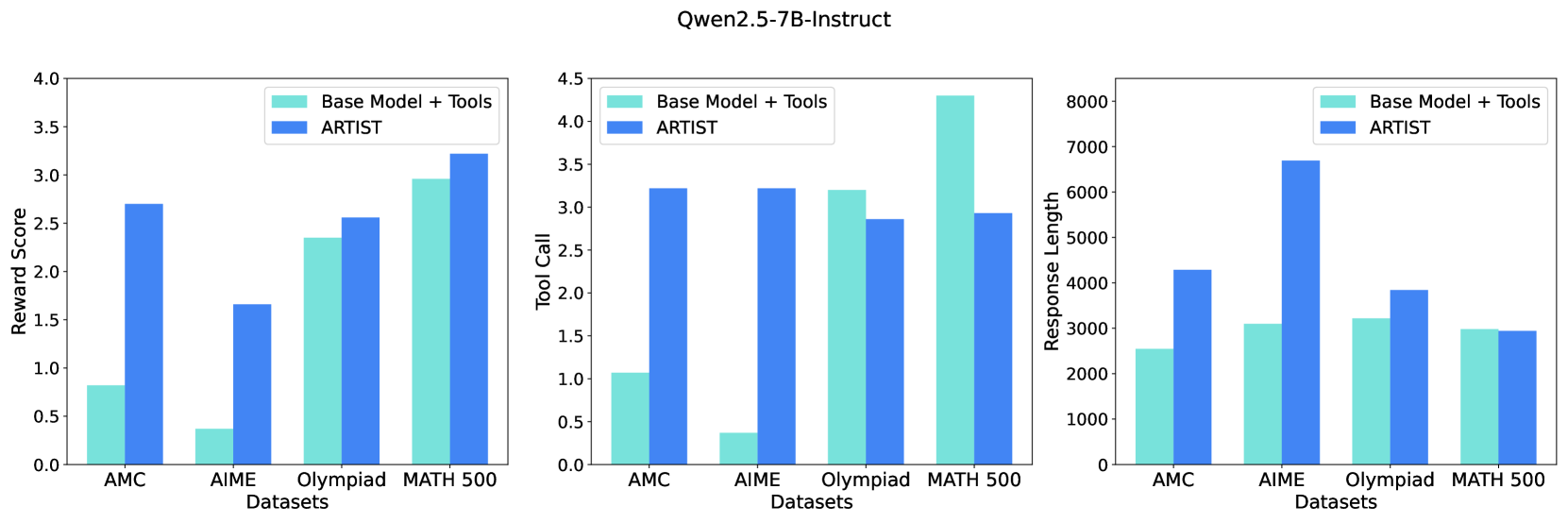

## Bar Charts: Qwen2.5-7B-Instruct Performance

### Overview

The image contains three bar charts comparing the performance of two models, "Base Model + Tools" and "ARTIST," across four datasets: AMC, AIME, Olympiad, and MATH 500. The charts measure "Reward Score," "Tool Call," and "Response Length."

### Components/Axes

**Overall Structure:**

* **Title:** Qwen2.5-7B-Instruct (located at the top-center of the image)

* Three bar charts arranged horizontally.

**Chart 1: Reward Score**

* **Y-axis:** Reward Score, ranging from 0.0 to 4.0 in increments of 0.5.

* **X-axis:** Datasets: AMC, AIME, Olympiad, MATH 500.

* **Legend:** Located at the top-right of the chart.

* Base Model + Tools (light blue)

* ARTIST (dark blue)

**Chart 2: Tool Call**

* **Y-axis:** Tool Call, ranging from 0.0 to 4.5 in increments of 0.5.

* **X-axis:** Datasets: AMC, AIME, Olympiad, MATH 500.

* **Legend:** Located at the top-left of the chart.

* Base Model + Tools (light blue)

* ARTIST (dark blue)

**Chart 3: Response Length**

* **Y-axis:** Response Length, ranging from 0 to 8000 in increments of 1000.

* **X-axis:** Datasets: AMC, AIME, Olympiad, MATH 500.

* **Legend:** Located at the top-right of the chart.

* Base Model + Tools (light blue)

* ARTIST (dark blue)

### Detailed Analysis

**Chart 1: Reward Score**

* **AMC:**

* Base Model + Tools: ~0.8

* ARTIST: ~2.7

* **AIME:**

* Base Model + Tools: ~0.4

* ARTIST: ~1.7

* **Olympiad:**

* Base Model + Tools: ~2.4

* ARTIST: ~2.6

* **MATH 500:**

* Base Model + Tools: ~3.0

* ARTIST: ~3.2

**Trend:** The ARTIST model consistently achieves a higher reward score than the Base Model + Tools across all datasets.

**Chart 2: Tool Call**

* **AMC:**

* Base Model + Tools: ~1.1

* ARTIST: ~3.2

* **AIME:**

* Base Model + Tools: ~0.4

* ARTIST: ~3.2

* **Olympiad:**

* Base Model + Tools: ~3.2

* ARTIST: ~2.9

* **MATH 500:**

* Base Model + Tools: ~4.3

* ARTIST: ~2.9

**Trend:** The ARTIST model makes more tool calls than the Base Model + Tools on AMC and AIME, but fewer on Olympiad and MATH 500.

**Chart 3: Response Length**

* **AMC:**

* Base Model + Tools: ~2600

* ARTIST: ~4300

* **AIME:**

* Base Model + Tools: ~3100

* ARTIST: ~6700

* **Olympiad:**

* Base Model + Tools: ~3800

* ARTIST: ~3200

* **MATH 500:**

* Base Model + Tools: ~3100

* ARTIST: ~3100

**Trend:** The ARTIST model produces longer responses for AMC and AIME, but shorter or similar length responses for Olympiad and MATH 500 compared to the Base Model + Tools.

### Key Observations

* The ARTIST model consistently outperforms the Base Model + Tools in terms of reward score across all datasets.

* The ARTIST model uses more tools for AMC and AIME, but fewer for Olympiad and MATH 500.

* The ARTIST model generates longer responses for AMC and AIME, but shorter or similar length responses for Olympiad and MATH 500.
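The observations above can be checked mechanically against the approximate bar heights listed in the Detailed Analysis. The following is a minimal sketch, assuming those visual estimates are taken at face value; the names `READINGS`, `direction`, and `summarize` are hypothetical helpers introduced only for illustration.

```python
# Approximate bar heights transcribed from the Detailed Analysis above.
# Each tuple is (Base Model + Tools, ARTIST); all values are visual estimates.
READINGS = {
    "Reward Score": {
        "AMC": (0.8, 2.7), "AIME": (0.4, 1.7),
        "Olympiad": (2.4, 2.6), "MATH 500": (3.0, 3.2),
    },
    "Tool Call": {
        "AMC": (1.1, 3.2), "AIME": (0.4, 3.2),
        "Olympiad": (3.2, 2.9), "MATH 500": (4.3, 2.9),
    },
    "Response Length": {
        "AMC": (2600, 4300), "AIME": (3100, 6700),
        "Olympiad": (3800, 3200), "MATH 500": (3100, 3100),
    },
}


def direction(base: float, artist: float) -> str:
    """Describe the ARTIST value relative to Base Model + Tools."""
    if artist > base:
        return "higher"
    if artist < base:
        return "lower"
    return "equal"


def summarize(readings: dict) -> dict:
    """Map each metric and dataset to 'higher', 'lower', or 'equal'."""
    return {
        metric: {ds: direction(*pair) for ds, pair in per_dataset.items()}
        for metric, per_dataset in readings.items()
    }


if __name__ == "__main__":
    for metric, per_dataset in summarize(READINGS).items():
        print(metric, per_dataset)
```

Because the inputs are estimates read off a chart, small gaps (such as Olympiad and MATH 500 Reward Score) are within plausible reading error.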

### Interpretation

The data suggests that the ARTIST model is more effective at solving problems in the given datasets, as indicated by the higher reward scores. The tool call and response length patterns vary by dataset, which suggests that ARTIST employs different strategies depending on the problem set. For AMC and AIME, it makes more tool calls and generates longer responses, possibly indicating a more involved problem-solving approach. For Olympiad and MATH 500, it makes fewer tool calls and generates shorter or similar-length responses, suggesting a more direct approach. The "Base Model + Tools" relies more on tools for Olympiad and MATH 500, yet ARTIST still scores higher there with fewer tool calls.


EXPERT: gemini-2.5-flash-free VERSION 2

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

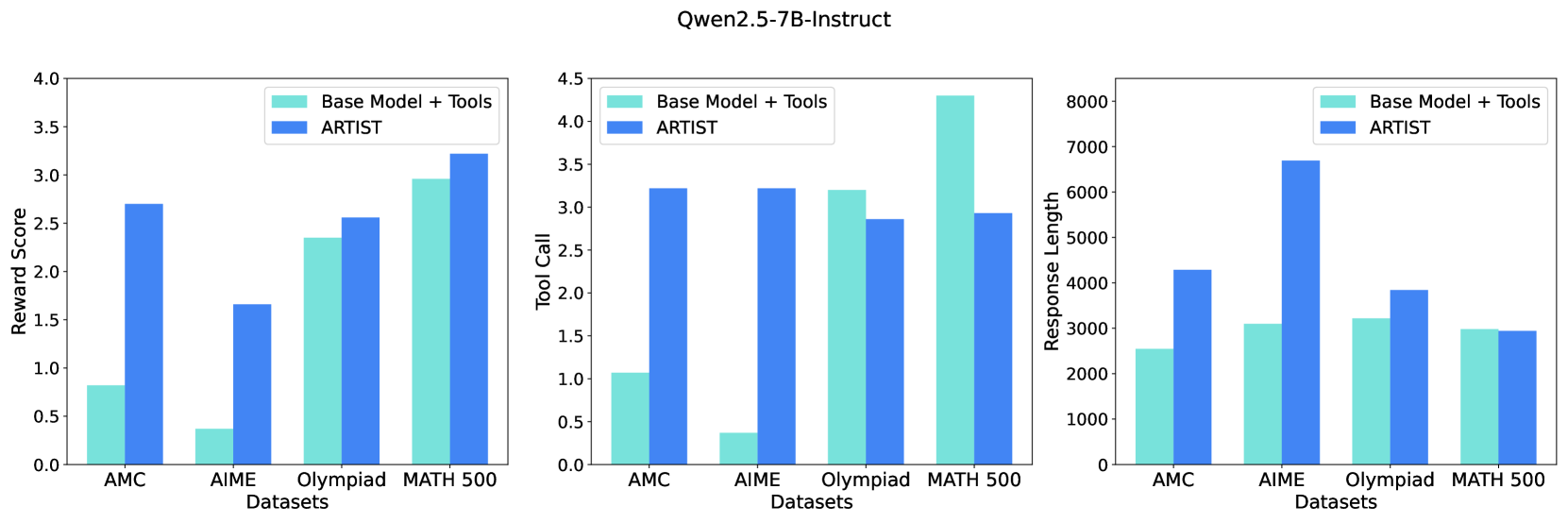

## Chart Type: Comparative Bar Charts of Model Performance

### Overview

This image presents three comparative bar charts, arranged horizontally, evaluating the performance of two models, "Base Model + Tools" and "ARTIST", across four different datasets: AMC, AIME, Olympiad, and MATH 500. The charts measure three distinct metrics: "Reward Score", "Tool Call", and "Response Length". The overall title for these evaluations is "Qwen2.5-7B-Instruct".

### Components/Axes

The image is composed of a main title at the top-center and three sub-charts arranged side-by-side.

**Main Title:**

* "Qwen2.5-7B-Instruct"

**Common Elements across all three charts:**

* **Legend (positioned at the top-right of each chart area):**

* Light blue/cyan bar: "Base Model + Tools"

* Dark blue bar: "ARTIST"

* **X-axis Label (bottom-center of each chart):**

* "Datasets"

* **X-axis Categories (from left to right for each chart):**

* "AMC"

* "AIME"

* "Olympiad"

* "MATH 500"

**Chart 1 (Left): Reward Score**

* **Y-axis Label (left side):** "Reward Score"

* **Y-axis Scale:** Ranges from 0.0 to 4.0, with major tick marks at 0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0.

**Chart 2 (Middle): Tool Call**

* **Y-axis Label (left side):** "Tool Call"

* **Y-axis Scale:** Ranges from 0.0 to 4.5, with major tick marks at 0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5.

**Chart 3 (Right): Response Length**

* **Y-axis Label (left side):** "Response Length"

* **Y-axis Scale:** Ranges from 0 to 8000, with major tick marks at 0, 1000, 2000, 3000, 4000, 5000, 6000, 7000, 8000.

### Detailed Analysis

The data is presented as grouped bar charts, comparing "Base Model + Tools" (light blue) and "ARTIST" (dark blue) for each dataset.

**Chart 1: Reward Score**

* **Trend:** ARTIST consistently achieves a higher Reward Score than Base Model + Tools across all datasets. The difference is most pronounced for AMC and AIME.

* **Data Points:**

* **AMC:**

* Base Model + Tools (light blue): Approximately 0.8

* ARTIST (dark blue): Approximately 2.7

* **AIME:**

* Base Model + Tools (light blue): Approximately 0.4

* ARTIST (dark blue): Approximately 1.7

* **Olympiad:**

* Base Model + Tools (light blue): Approximately 2.3

* ARTIST (dark blue): Approximately 2.5

* **MATH 500:**

* Base Model + Tools (light blue): Approximately 3.0

* ARTIST (dark blue): Approximately 3.2

**Chart 2: Tool Call**

* **Trend:** For AMC and AIME, ARTIST makes significantly more tool calls. For Olympiad, ARTIST makes slightly fewer tool calls. For MATH 500, Base Model + Tools makes substantially more tool calls than ARTIST.

* **Data Points:**

* **AMC:**

* Base Model + Tools (light blue): Approximately 1.0

* ARTIST (dark blue): Approximately 3.2

* **AIME:**

* Base Model + Tools (light blue): Approximately 0.4

* ARTIST (dark blue): Approximately 3.2

* **Olympiad:**

* Base Model + Tools (light blue): Approximately 3.2

* ARTIST (dark blue): Approximately 2.9

* **MATH 500:**

* Base Model + Tools (light blue): Approximately 4.3

* ARTIST (dark blue): Approximately 2.9

**Chart 3: Response Length**

* **Trend:** For AMC, AIME, and Olympiad, ARTIST generates longer responses. For MATH 500, the response lengths are very similar, with Base Model + Tools being marginally longer.

* **Data Points:**

* **AMC:**

* Base Model + Tools (light blue): Approximately 2500

* ARTIST (dark blue): Approximately 4300

* **AIME:**

* Base Model + Tools (light blue): Approximately 2100

* ARTIST (dark blue): Approximately 6700

* **Olympiad:**

* Base Model + Tools (light blue): Approximately 2200

* ARTIST (dark blue): Approximately 2900

* **MATH 500:**

* Base Model + Tools (light blue): Approximately 2000

* ARTIST (dark blue): Approximately 1950

### Key Observations

* **ARTIST's Superior Reward Score:** ARTIST consistently outperforms Base Model + Tools in Reward Score across all four datasets, indicating better overall problem-solving capability. The improvement is most dramatic for AMC and AIME.

* **Varied Tool Call Behavior:** ARTIST's tool call frequency is higher for AMC and AIME, but lower than Base Model + Tools for Olympiad and significantly lower for MATH 500. This suggests ARTIST adapts its tool usage strategy or is more efficient in its tool application depending on the dataset.

* **Response Length Correlation:** Generally, higher Reward Scores for ARTIST correlate with longer responses, especially for AMC and AIME. However, for MATH 500, ARTIST achieves a higher Reward Score with a similar or slightly shorter response length and fewer tool calls, highlighting efficiency.

* **Dataset-Specific Performance:** The magnitude of ARTIST's advantage varies by dataset. The most substantial gains in Reward Score, Tool Call, and Response Length are observed in AMC and AIME, suggesting these datasets might benefit most from ARTIST's approach.

### Interpretation

The data suggests that the "ARTIST" model, when applied to the "Qwen2.5-7B-Instruct" base, generally leads to superior performance in terms of "Reward Score" across a range of mathematical reasoning datasets. This improvement is not uniformly achieved through the same mechanism across all datasets, indicating adaptive or more sophisticated problem-solving strategies by ARTIST.

For datasets like AMC and AIME, ARTIST's higher reward scores are accompanied by a substantial increase in both "Tool Call" frequency and "Response Length". This implies that for these more complex or open-ended problems, ARTIST leverages tools more extensively and generates more elaborate or detailed responses, which contributes to better outcomes. The "Base Model + Tools" struggles significantly on these datasets, suggesting it either fails to identify opportunities for tool use or uses them ineffectively, leading to low reward scores and short responses.

Conversely, for the Olympiad and especially the MATH 500 datasets, ARTIST achieves a higher "Reward Score" with either a slightly lower or significantly lower "Tool Call" count compared to "Base Model + Tools". For MATH 500, ARTIST also maintains a similar "Response Length" despite fewer tool calls and a higher reward. This is a critical insight: it suggests that ARTIST is not merely "doing more" (more tool calls, longer responses) but is potentially "doing smarter." It might be making more precise, relevant, or effective tool calls, or integrating the results of those calls more efficiently into its reasoning, leading to better rewards without necessarily increasing computational overhead (as implied by fewer tool calls and similar response lengths). This efficiency is particularly evident in MATH 500, where ARTIST's ability to achieve better results with less apparent effort (fewer tool calls) points to a qualitative improvement in its problem-solving approach.

In summary, ARTIST appears to be a more capable and efficient problem-solver than the "Base Model + Tools," demonstrating both increased effort (more tool calls/longer responses) when beneficial (AMC, AIME) and increased efficiency (fewer tool calls for similar or better results) when appropriate (Olympiad, MATH 500). This adaptability and efficiency are key to its superior "Reward Score" performance.
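One rough way to probe the "doing smarter" reading is to divide each Reward Score by the corresponding Tool Call count. This ratio is not a metric from the chart; it is an assumption introduced here purely for illustration, and the inputs are the approximate data points listed above. The names `REWARD`, `TOOL_CALLS`, and `reward_per_call` are hypothetical.

```python
# (Base Model + Tools, ARTIST) estimates taken from the Data Points above.
REWARD = {
    "AMC": (0.8, 2.7), "AIME": (0.4, 1.7),
    "Olympiad": (2.3, 2.5), "MATH 500": (3.0, 3.2),
}
TOOL_CALLS = {
    "AMC": (1.0, 3.2), "AIME": (0.4, 3.2),
    "Olympiad": (3.2, 2.9), "MATH 500": (4.3, 2.9),
}


def reward_per_call(dataset: str) -> tuple:
    """Return (base, artist) reward divided by average tool calls.

    This is an illustrative ratio, not a quantity reported in the figure.
    """
    base_reward, artist_reward = REWARD[dataset]
    base_calls, artist_calls = TOOL_CALLS[dataset]
    return base_reward / base_calls, artist_reward / artist_calls


if __name__ == "__main__":
    for name in REWARD:
        base, artist = reward_per_call(name)
        print(f"{name}: base={base:.2f} artist={artist:.2f}")
```

On these estimates the ratio is higher for ARTIST on Olympiad and MATH 500 but lower on AIME, where ARTIST makes many more calls, so it supports the efficiency reading only for the datasets where ARTIST calls tools less often.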


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Chart: Qwen2.5-7B-Instruct Performance Comparison

### Overview

The image presents three bar charts comparing the performance of "Base Model + Tools" and "ARTIST" across four datasets: AMC, AIME, Olympiad, and MATH 500. Each chart visualizes a different metric: Reward Score, Tool Call, and Response Length. The charts are arranged horizontally, side-by-side.

### Components/Axes

Each chart shares the following components:

* **X-axis:** "Datasets" with categories: AMC, AIME, Olympiad, MATH 500.

* **Y-axis:** Varies per chart:

* Chart 1: "Reward Score" (Scale: 0.0 to 4.0)

* Chart 2: "Tool Call" (Scale: 0.0 to 4.5)

* Chart 3: "Response Length" (Scale: 0 to 8000)

* **Legend:** Located at the top-left of each chart, distinguishing between "Base Model + Tools" (light blue) and "ARTIST" (dark blue).

### Detailed Analysis

**Chart 1: Reward Score**

* **AMC:** "Base Model + Tools" ≈ 2.6, "ARTIST" ≈ 0.7

* **AIME:** "Base Model + Tools" ≈ 2.1, "ARTIST" ≈ 1.7

* **Olympiad:** "Base Model + Tools" ≈ 2.7, "ARTIST" ≈ 2.3

* **MATH 500:** "Base Model + Tools" ≈ 3.2, "ARTIST" ≈ 3.1

The "Base Model + Tools" consistently achieves higher reward scores than "ARTIST" across all datasets. The difference is most pronounced for the AMC dataset.

**Chart 2: Tool Call**

* **AMC:** "Base Model + Tools" ≈ 3.1, "ARTIST" ≈ 3.0

* **AIME:** "Base Model + Tools" ≈ 3.2, "ARTIST" ≈ 3.1

* **Olympiad:** "Base Model + Tools" ≈ 3.5, "ARTIST" ≈ 3.3

* **MATH 500:** "Base Model + Tools" ≈ 4.0, "ARTIST" ≈ 3.8

"Base Model + Tools" exhibits a slightly higher tool call rate than "ARTIST" on every dataset, with the largest differences (about 0.2) observed in the Olympiad and MATH 500 datasets.

**Chart 3: Response Length**

* **AMC:** "Base Model + Tools" ≈ 3000, "ARTIST" ≈ 3000

* **AIME:** "Base Model + Tools" ≈ 3000, "ARTIST" ≈ 3000

* **Olympiad:** "Base Model + Tools" ≈ 6500, "ARTIST" ≈ 7000

* **MATH 500:** "Base Model + Tools" ≈ 4000, "ARTIST" ≈ 4000

The response length is similar for both models on AMC, AIME, and MATH 500. However, "ARTIST" generates somewhat longer responses for the Olympiad dataset (≈ 7000 vs. ≈ 6500).

### Key Observations

* "Base Model + Tools" consistently scores higher than "ARTIST" in Reward Score and makes slightly more tool calls on every dataset.

* Response Length is comparable for most datasets, except for Olympiad where "ARTIST" produces longer responses.

* The performance gap between the two models is most significant for the AMC dataset in terms of Reward Score.

### Interpretation

In this reading, "Base Model + Tools" earns higher Reward Scores and makes slightly more tool calls than "ARTIST" on every dataset, which suggests that it uses its tools effectively. Because both configurations make tool calls, the chart does not by itself show the effect of adding tools to the base model. The longer response length of "ARTIST" on the Olympiad dataset might indicate a tendency to provide more verbose or detailed answers, potentially at the cost of conciseness or relevance (as reflected in the lower Reward Score). The near-identical response lengths for AMC, AIME, and MATH 500 indicate that the two configurations produce responses of similar length on those datasets. Any trade-off between reward and response length is visible only on Olympiad, where "ARTIST" produces longer responses and "Base Model + Tools" earns the higher reward.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Charts: Performance Comparison of Base Model + Tools vs. ARTIST on Mathematical Datasets

### Overview

The image displays three grouped bar charts under the main title "Qwen2.5-7B-Instruct". The charts compare the performance of two systems—"Base Model + Tools" and "ARTIST"—across four mathematical reasoning datasets: AMC, AIME, Olympiad, and MATH 500. The comparison is made across three metrics: Reward Score, Tool Call count, and Response Length.

### Components/Axes

* **Main Title:** "Qwen2.5-7B-Instruct" (centered at the top).

* **Legend:** Present in the top-right corner of each subplot.

* Light Turquoise Bar: "Base Model + Tools"

* Blue Bar: "ARTIST"

* **X-Axis (Common to all charts):** Labeled "Datasets". Categories from left to right: "AMC", "AIME", "Olympiad", "MATH 500".

* **Y-Axes (Individual to each chart):**

1. **Left Chart:** Labeled "Reward Score". Scale from 0.0 to 4.0, with major ticks at 0.5 intervals.

2. **Middle Chart:** Labeled "Tool Call". Scale from 0.0 to 4.5, with major ticks at 0.5 intervals.

3. **Right Chart:** Labeled "Response Length". Scale from 0 to 8000, with major ticks at 1000 intervals.

### Detailed Analysis

#### Chart 1: Reward Score (Left)

* **Trend Verification:** The blue "ARTIST" bars are consistently taller than the light turquoise "Base Model + Tools" bars for AMC, AIME, and Olympiad, indicating higher reward scores. For MATH 500, the blue bar is slightly taller.

* **Data Points (Approximate):**

* **AMC:** Base Model ≈ 0.8, ARTIST ≈ 2.7

* **AIME:** Base Model ≈ 0.4, ARTIST ≈ 1.7

* **Olympiad:** Base Model ≈ 2.35, ARTIST ≈ 2.55

* **MATH 500:** Base Model ≈ 2.95, ARTIST ≈ 3.25

#### Chart 2: Tool Call (Middle)

* **Trend Verification:** The relationship is mixed. The blue "ARTIST" bar is significantly taller for AMC and AIME. For Olympiad, the light turquoise bar is taller. For MATH 500, the light turquoise bar is dramatically taller.

* **Data Points (Approximate):**

* **AMC:** Base Model ≈ 1.1, ARTIST ≈ 3.25

* **AIME:** Base Model ≈ 0.4, ARTIST ≈ 3.25

* **Olympiad:** Base Model ≈ 3.2, ARTIST ≈ 2.85

* **MATH 500:** Base Model ≈ 4.3, ARTIST ≈ 2.95

#### Chart 3: Response Length (Right)

* **Trend Verification:** The blue "ARTIST" bars are taller for AMC, AIME, and Olympiad, indicating longer responses. For MATH 500, the bars are nearly equal, with the light turquoise bar possibly a tiny bit taller.

* **Data Points (Approximate):**

* **AMC:** Base Model ≈ 2550, ARTIST ≈ 4300

* **AIME:** Base Model ≈ 3100, ARTIST ≈ 6700

* **Olympiad:** Base Model ≈ 3200, ARTIST ≈ 3850

* **MATH 500:** Base Model ≈ 3000, ARTIST ≈ 2950

### Key Observations

1. **Reward Score Advantage:** ARTIST achieves a higher reward score than the Base Model + Tools on all four datasets, with the most dramatic relative improvement on the AIME dataset.

2. **Tool Usage Inversion:** While ARTIST uses tools much more frequently on AMC and AIME, the pattern reverses on Olympiad and especially on MATH 500, where the Base Model + Tools uses tools far more often.

3. **Response Length Correlation:** ARTIST generates substantially longer responses on AMC and AIME, which correlates with its higher tool usage and reward scores on those datasets. On MATH 500, where tool usage is lower for ARTIST, response lengths are similar between the two systems.

4. **Dataset Difficulty Gradient:** All metrics suggest the datasets vary in difficulty or nature. AMC and AIME show large gaps on every metric, while Olympiad and MATH 500 show small Reward Score gaps (about 0.2 to 0.3), with the Base Model leading in tool call frequency on both.
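The "most dramatic relative improvement" claim in observation 1 can be recomputed from the approximate Reward Score data points. This is a minimal sketch assuming those estimates; `REWARD` and `relative_gain` are hypothetical names used only here.

```python
# (Base Model, ARTIST) Reward Score estimates from the Data Points above.
REWARD = {
    "AMC": (0.8, 2.7),
    "AIME": (0.4, 1.7),
    "Olympiad": (2.35, 2.55),
    "MATH 500": (2.95, 3.25),
}


def relative_gain(base: float, artist: float) -> float:
    """Ratio of the ARTIST score to the Base Model + Tools score."""
    return artist / base


# Ratio per dataset, then the dataset with the largest ratio.
ratios = {name: relative_gain(*pair) for name, pair in REWARD.items()}
largest = max(ratios, key=ratios.get)

if __name__ == "__main__":
    for name, ratio in ratios.items():
        print(f"{name}: {ratio:.2f}x")
    print("largest relative gain:", largest)
```

With these estimates the AIME ratio is about 4.25x and the AMC ratio about 3.4x, while Olympiad and MATH 500 are both close to 1.1x.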

### Interpretation

The data suggests that the ARTIST method significantly improves the model's performance (as measured by Reward Score) on mathematical reasoning tasks compared to simply augmenting the base model with tools. However, this improvement is not achieved through a uniform increase in tool usage.

The relationship between tool calls, response length, and reward score is complex and dataset-dependent. On what are likely more challenging or procedurally intensive problems (AMC, AIME), ARTIST appears to leverage tools more aggressively and produce longer, higher-reward solutions. On other problem types (Olympiad, MATH 500), the base model with tools may rely more on brute-force tool application, while ARTIST achieves slightly better rewards with fewer tool calls and responses that are similar in length (MATH 500) or only moderately longer (Olympiad). This indicates ARTIST may be learning a more efficient or strategic policy for tool integration, rather than simply increasing tool frequency. The outlier is the MATH 500 dataset, where the base model's very high tool call count does not translate to a reward score advantage, hinting at potential inefficiency or misapplication of tools by the base model on that specific task distribution.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Charts: Qwen2.5-7B-Instruct Performance Comparison

### Overview

Three side-by-side bar charts compare the performance of two configurations ("Base Model + Tools" and "ARTIST") across four datasets (AMC, AIME, Olympiad, MATH 500) using three metrics: Reward Score, Tool Call, and Response Length. The charts use light blue (#ADD8E6) for "Base Model + Tools" and dark blue (#00008B) for "ARTIST".

### Components/Axes

1. **X-Axes (Datasets)**:

- AMC

- AIME

- Olympiad

- MATH 500

- Positioned at the bottom of each chart, evenly spaced.

2. **Y-Axes**:

- **Left Chart (Reward Score)**: 0.0 to 4.0 in 0.5 increments.

- **Middle Chart (Tool Call)**: 0.0 to 4.5 in 0.5 increments.

- **Right Chart (Response Length)**: 0 to 8000 in 1000 increments.

3. **Legends**:

- Located in the top-right corner of each chart.

- Light blue (#ADD8E6) = "Base Model + Tools"

- Dark blue (#00008B) = "ARTIST"

4. **Bar Structure**:

- Two bars per dataset (one for each configuration).

- Bars are grouped by dataset, with "Base Model + Tools" on the left and "ARTIST" on the right.

### Detailed Analysis

#### Reward Score

- **AMC**:

- Base Model + Tools: ~0.8

- ARTIST: ~2.7

- **AIME**:

- Base Model + Tools: ~0.4

- ARTIST: ~1.7

- **Olympiad**:

- Base Model + Tools: ~2.4

- ARTIST: ~2.6

- **MATH 500**:

- Base Model + Tools: ~3.0

- ARTIST: ~3.2

#### Tool Call

- **AMC**:

- Base Model + Tools: ~1.0

- ARTIST: ~3.2

- **AIME**:

- Base Model + Tools: ~0.3

- ARTIST: ~3.2

- **Olympiad**:

- Base Model + Tools: ~3.2

- ARTIST: ~2.9

- **MATH 500**:

- Base Model + Tools: ~4.3

- ARTIST: ~3.0

#### Response Length

- **AMC**:

- Base Model + Tools: ~2500

- ARTIST: ~4200

- **AIME**:

- Base Model + Tools: ~3000

- ARTIST: ~6700

- **Olympiad**:

- Base Model + Tools: ~3200

- ARTIST: ~3900

- **MATH 500**:

- Base Model + Tools: ~3000

- ARTIST: ~3000

### Key Observations

1. **Reward Score**:

- ARTIST outperforms Base Model + Tools in AMC (+1.9) and AIME (+1.3).

- Olympiad shows minimal difference (+0.2).

- MATH 500 has a small ARTIST advantage (+0.2).

2. **Tool Call**:

- Base Model + Tools dominates in Olympiad (+0.3) and MATH 500 (+1.3).

- ARTIST makes far more tool calls than Base Model + Tools in AMC (+2.2) and AIME (+2.9).

3. **Response Length**:

- ARTIST generates about 68% longer responses in AMC and about 123% longer responses in AIME.

- MATH 500 shows equal response lengths even though the Tool Call counts differ (~4.3 vs. ~3.0).
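The differences and percentages in these observations follow from simple arithmetic on the approximate values in the Detailed Analysis. The sketch below recomputes them, assuming those estimates; `REWARD`, `LENGTH`, `delta`, and `percent_longer` are hypothetical names for illustration.

```python
# (Base Model + Tools, ARTIST) estimates from the Detailed Analysis above.
REWARD = {
    "AMC": (0.8, 2.7), "AIME": (0.4, 1.7),
    "Olympiad": (2.4, 2.6), "MATH 500": (3.0, 3.2),
}
LENGTH = {
    "AMC": (2500, 4200), "AIME": (3000, 6700),
    "Olympiad": (3200, 3900), "MATH 500": (3000, 3000),
}


def delta(pair: tuple) -> float:
    """ARTIST minus Base Model + Tools."""
    base, artist = pair
    return artist - base


def percent_longer(pair: tuple) -> float:
    """How much longer the ARTIST response is, as a percentage of base."""
    base, artist = pair
    return (artist - base) / base * 100


if __name__ == "__main__":
    for name in REWARD:
        print(
            f"{name}: reward delta={delta(REWARD[name]):+.1f}, "
            f"length change={percent_longer(LENGTH[name]):+.0f}%"
        )
```

On these readings the AMC reward gap is 1.9 and the AIME gap is 1.3, and the 68% figure corresponds to AMC response length, with AIME at roughly 123%.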

### Interpretation

The data reveals task-specific performance patterns:

- **ARTIST** excels in AMC and AIME (likely reasoning-heavy tasks) with significantly higher Reward Scores and longer responses.

- **Base Model + Tools** makes more tool calls in Olympiad and MATH 500 (possibly math/logic tasks), but its Reward Scores there remain slightly below ARTIST's.

- The equal response lengths in MATH 500 suggest similar processing depth even though the Tool Call counts differ.

- ARTIST's longer responses in AIME (+3700) may indicate over-engagement with tools, potentially reducing efficiency.

This suggests that ARTIST generally demonstrates superior capability, while the Base Model + Tools configuration relies on more tool calls for Olympiad and MATH 500 without a matching gain in Reward Score. The response length metric highlights potential trade-offs between thoroughness and efficiency.
