## Diagram: Comparison of RAG Architectures

### Overview

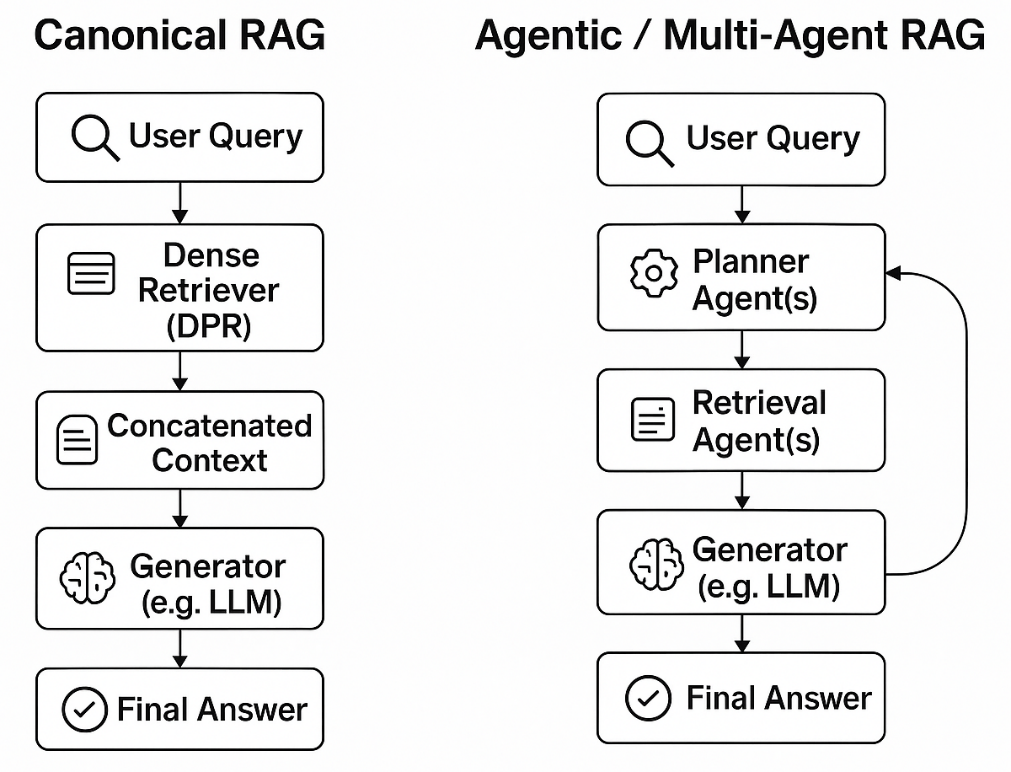

The image presents a side-by-side comparison of two Retrieval-Augmented Generation (RAG) system architectures. The left side illustrates a "Canonical RAG" pipeline, representing a standard, linear approach. The right side illustrates an "Agentic / Multi-Agent RAG" pipeline, representing a more complex, iterative approach involving specialized agents. Both diagrams use a flowchart format with labeled boxes connected by directional arrows.

### Components

The image is divided into two distinct, parallel vertical flowcharts.

**Left Flowchart: Canonical RAG**

* **Title:** "Canonical RAG" (top center of left section).

* **Components (from top to bottom):**

1. **Box 1:** Icon: Magnifying glass. Text: "User Query".

2. **Box 2:** Icon: Document with lines. Text: "Dense Retriever (DPR)".

3. **Box 3:** Icon: Document with lines. Text: "Concatenated Context".

4. **Box 4:** Icon: Brain. Text: "Generator (e.g. LLM)".

5. **Box 5:** Icon: Checkmark in a circle. Text: "Final Answer".

* **Flow:** Unidirectional, top-to-bottom. Arrows connect each box sequentially: 1 → 2 → 3 → 4 → 5.

**Right Flowchart: Agentic / Multi-Agent RAG**

* **Title:** "Agentic / Multi-Agent RAG" (top center of right section).

* **Components (from top to bottom):**

1. **Box 1:** Icon: Magnifying glass. Text: "User Query".

2. **Box 2:** Icon: Gear. Text: "Planner Agent(s)".

3. **Box 3:** Icon: Document with lines. Text: "Retrieval Agent(s)".

4. **Box 4:** Icon: Brain. Text: "Generator (e.g. LLM)".

5. **Box 5:** Icon: Checkmark in a circle. Text: "Final Answer".

* **Flow:** Primarily top-to-bottom with a critical feedback loop. Arrows connect: 1 → 2 → 3 → 4 → 5. Additionally, a curved arrow originates from the right side of Box 4 ("Generator") and points back to the right side of Box 2 ("Planner Agent(s)"), indicating an iterative or cyclical process.
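
To make the structural difference concrete, the two flowcharts can be written down as directed graphs. The sketch below is only an illustration: the node names are copied from the box labels, while the dictionary layout and the `has_cycle` helper are assumptions introduced here, not something shown in the image.

```python
# Each flowchart as an adjacency list: box label -> boxes its arrows point to.
CANONICAL = {
    "User Query": ["Dense Retriever (DPR)"],
    "Dense Retriever (DPR)": ["Concatenated Context"],
    "Concatenated Context": ["Generator (e.g. LLM)"],
    "Generator (e.g. LLM)": ["Final Answer"],
    "Final Answer": [],
}

AGENTIC = {
    "User Query": ["Planner Agent(s)"],
    "Planner Agent(s)": ["Retrieval Agent(s)"],
    "Retrieval Agent(s)": ["Generator (e.g. LLM)"],
    # The curved arrow: the Generator also points back to the Planner.
    "Generator (e.g. LLM)": ["Final Answer", "Planner Agent(s)"],
    "Final Answer": [],
}


def has_cycle(graph):
    """Return True if the directed graph contains a cycle (depth-first search)."""
    UNSEEN, IN_PROGRESS, DONE = 0, 1, 2
    state = {node: UNSEEN for node in graph}

    def visit(node):
        state[node] = IN_PROGRESS
        for nxt in graph[node]:
            # Reaching a node that is still on the current path means a back edge.
            if state[nxt] == IN_PROGRESS:
                return True
            if state[nxt] == UNSEEN and visit(nxt):
                return True
        state[node] = DONE
        return False

    return any(state[n] == UNSEEN and visit(n) for n in graph)


print("Canonical has a loop:", has_cycle(CANONICAL))
print("Agentic has a loop:", has_cycle(AGENTIC))
```

Under this encoding, the only edge that separates a one-pass pipeline from an iterative one is the arrow from the Generator back to the Planner.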

### Detailed Analysis

The diagram contrasts two paradigms for implementing RAG systems.

**Canonical RAG Process:**

1. A **User Query** is received.

2. A single **Dense Retriever (DPR)** component, where DPR stands for Dense Passage Retrieval, processes the query to fetch relevant documents.

3. The retrieved documents are combined into a single block of **Concatenated Context**.

4. This context is passed to a **Generator (e.g. LLM)**, which produces the final response.

5. The process concludes with the **Final Answer**. The flow is strictly linear and one-pass.
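
The five steps above can be sketched as a single function call chain. This is a minimal illustration under stated assumptions: `embed` is a toy bag-of-words stand-in for a learned dense encoder such as DPR, `generate` is a placeholder for an LLM call, and all function names and the sample corpus are hypothetical.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for a dense encoder: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, corpus, k=2):
    """Step 2: rank passages by similarity to the query and keep the top k."""
    q = embed(query)
    ranked = sorted(corpus, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:k]


def generate(query, context):
    """Step 4: placeholder for an LLM call (assumption: no real model here)."""
    n = len(context.splitlines())
    return f"Answer to {query!r} based on {n} passage(s)."


def canonical_rag(query, corpus, k=2):
    passages = retrieve(query, corpus, k)   # step 2: single retrieval pass
    context = "\n".join(passages)           # step 3: concatenated context
    return generate(query, context)         # steps 4-5: generate final answer


corpus = [
    "paris is the capital of france",
    "berlin is the capital of germany",
    "bananas are yellow",
]
print(canonical_rag("capital of france", corpus))
```

Nothing in `canonical_rag` inspects the generated text, so a weak retrieval result cannot be corrected once generation starts.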

**Agentic / Multi-Agent RAG Process:**

1. A **User Query** is received.

2. **Planner Agent(s)** analyze the query, likely decomposing it or formulating a retrieval strategy.

3. **Retrieval Agent(s)** execute the retrieval task based on the plan, potentially using multiple tools or strategies.

4. The retrieved information is passed to a **Generator (e.g. LLM)**.

5. **Critical Feedback Loop:** The Generator's output or state can be fed back to the Planner Agent(s) before an answer is emitted. This lets the system iteratively refine its plan, retrieve additional information, or correct errors based on intermediate results, producing a dynamic, multi-step reasoning process.

6. Once no further iteration is needed, the process concludes with the **Final Answer**.
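
A minimal sketch of this loop follows. Everything in it is an assumption made for illustration: the tiny `KB` dictionary, the rule that the retrieval agent returns one passage per call, the capitalized-word check the generator uses to detect gaps, and the `max_rounds` cap. A real system would typically delegate planning and the sufficiency check to an LLM.

```python
# Hypothetical knowledge base: topic keyword -> passage.
KB = {
    "france": "Paris is the capital of France.",
    "germany": "Berlin is the capital of Germany.",
}


def plan(query, feedback):
    """Planner agent: first round sends the query as-is; later rounds
    issue one sub-query per gap reported by the generator."""
    return list(feedback) if feedback else [query]


def retrieve(sub_query):
    """Retrieval agent (toy): return at most one passage per call."""
    words = set(sub_query.lower().split())
    for topic, passage in KB.items():
        if topic in words:
            return [passage]
    return []


def generate(query, evidence):
    """Generator (toy): draft an answer and report entities from the
    query that no retrieved passage mentions yet."""
    entities = [w for w in query.split() if w[0].isupper()]
    gaps = [e for e in entities if not any(e in p for p in evidence)]
    return " ".join(evidence), gaps


def agentic_rag(query, max_rounds=3):
    evidence, feedback, draft, rounds = [], [], "", 0
    while rounds < max_rounds:
        rounds += 1
        for sub_query in plan(query, feedback):
            for passage in retrieve(sub_query):
                if passage not in evidence:
                    evidence.append(passage)
        draft, feedback = generate(query, evidence)
        if not feedback:  # no gaps left: stop iterating
            break
    return draft, rounds


answer, rounds = agentic_rag("capital of France and capital of Germany")
print(rounds, answer)
```

The `max_rounds` cap is a design choice in this sketch: without some stopping rule, a query whose gaps can never be filled would loop indefinitely.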

### Key Observations

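1. **Structural Complexity:** Both diagrams contain five boxes, but the Agentic RAG diagram replaces "Dense Retriever (DPR)" and "Concatenated Context" with "Planner Agent(s)" and "Retrieval Agent(s)" and adds a non-linear feedback loop that is absent from the Canonical RAG diagram.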

2. **Agent Specialization:** The Agentic model explicitly separates planning ("Planner Agent(s)") from execution ("Retrieval Agent(s)"), suggesting a division of labor among specialized AI agents.

3. **Iterative Refinement:** The feedback loop from Generator to Planner is the most significant visual and conceptual difference. It transforms the process from a single, feed-forward pipeline into a potentially cyclical one where the system can self-correct or gather more information.

4. **Shared Foundation:** Both architectures share the same starting point ("User Query"), endpoint ("Final Answer"), and core generation component ("Generator (e.g. LLM)"). They differ only in the intermediate steps and in the feedback loop.

### Interpretation

This diagram visually argues for an evolution in RAG system design. The **Canonical RAG** represents a foundational, straightforward approach: retrieve once, then generate. It is simple and efficient but may lack robustness for complex queries requiring multi-faceted information or iterative reasoning.

The **Agentic / Multi-Agent RAG** represents a more sophisticated, cognitively inspired architecture. By introducing a **Planner**, the system can approach a query strategically. Separating out **Retrieval Agents** allows for modular and potentially parallel information gathering. Most importantly, the **feedback loop** introduces a mechanism for **iterative refinement and verification**. The Generator's output isn't just the final answer; it can also be an intermediate state that informs the next planning step. This allows the system to, for example, check whether the retrieved context is sufficient, identify knowledge gaps, and trigger new retrieval cycles, much as a human researcher would.

The diagram suggests that moving from Canonical to Agentic RAG trades simplicity, and likely some computational efficiency, for greater capability, adaptability, and potential accuracy on complex tasks. The core message is that adding agency and iteration transforms RAG from a static lookup tool into a dynamic problem-solving system.